At this year’s Mobile World Congress, one theme stood out clearly: the changing role of the edge.

For decades, service providers defined the edge as the end of the network, where the wire stops or the radio signal reaches its limit. Today, that definition is changing. The edge is becoming a platform for distributed applications and enterprise services.

That shift is opening a new opportunity for service providers: delivering compute and intelligence alongside their connectivity offerings. Just ask Verizon.

“Edge means a lot of things to different people,” said Lee Field, Vice President of Global Sales Engineering at Verizon. “What we’re now starting to see, driven by AI requirements, is a move toward on-prem environments.”

Scaling to thousands of sites

AI workloads are beginning to reshape how the edge is defined and deployed. Applications increasingly require low latency, localized processing, and data sovereignty, pushing compute closer to where data is created.

This shift also reflects changes in how AI workloads perform. As accelerators and models have become faster, network latency, which once had little effect on inference performance, now accounts for a larger share of end-to-end response time. That makes connectivity an increasingly important part of the overall user experience.

Unlike most enterprise deployments, service provider infrastructure operates at massive scale. Edge environments can span thousands of distributed locations, from regional facilities to micro data centers and edge sites that were never designed to host modern compute infrastructure. That scale introduces new operational challenges.

“When you come to environments at the scale of five thousand, ten thousand locations and above, consistency becomes critical,” emphasized Verizon’s Field.

For providers like Verizon, edge deployments must be repeatable, automated, and easy to manage across the company’s entire fleet. Systems must install quickly, enroll automatically in operations management tools, and behave consistently regardless of location. In other words, the edge must be run as a managed fleet rather than as a collection of individual systems.

Connecting a new generation of enterprise services

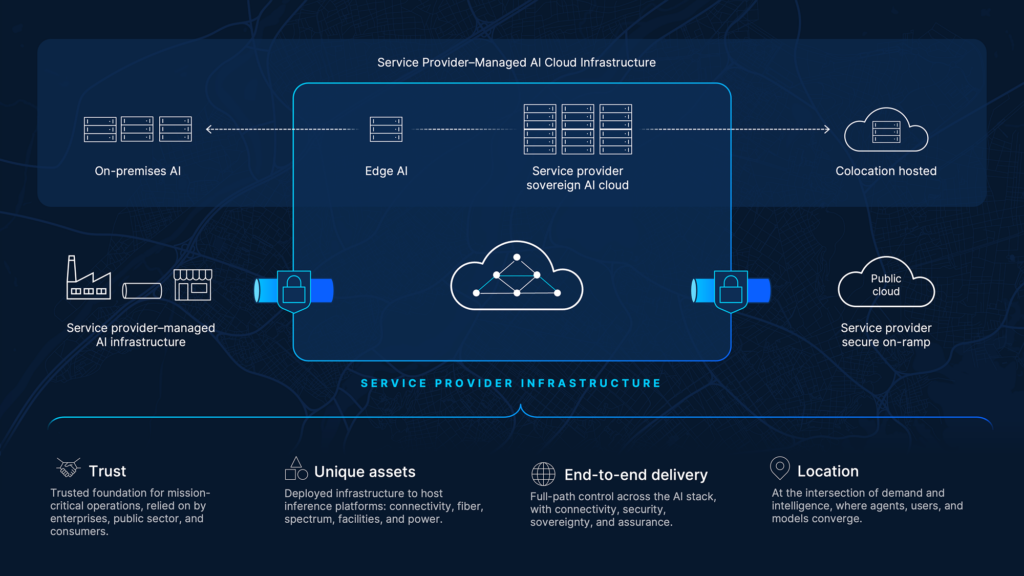

For many service providers, this opportunity is not limited to a single deployment model. It spans on-premises environments at customer locations, edge infrastructure across their footprint, and AI infrastructure within the service provider network itself, forming a distributed foundation for delivering AI-powered services.

Figure 1. AI delivery across a service provider–managed AI cloud infrastructure spanning on-premises environments to edge locations. Benefits listed include trust, unique assets, end-to-end delivery, and location.

By deploying distributed compute platforms like Cisco Unified Edge across their footprint, service providers can support emerging workloads such as:

- AI inference for enterprise applications

- Localized data processing

- Distributed analytics and video workloads

- Low-latency services for retail, healthcare, and manufacturing

With trusted relationships, extensive network and infrastructure assets, and the ability to deliver services end to end across distributed environments, service providers are well positioned to bring AI capabilities closer to where users, data, and applications converge.

Verizon, an early user of Cisco Unified Edge and partner in the product’s launch, is exploring how to use the platform to support both internal infrastructure modernization and new enterprise service offerings. This could include bundling edge capabilities enabled by platforms like Cisco Unified Edge into business connectivity offerings, giving customers access to localized compute resources as part of their network environment.

Bringing data center capabilities to the edge

Supporting this new generation of AI workloads requires infrastructure designed specifically for distributed environments.

Cisco Unified Edge was built to address this challenge, bringing compute, networking, storage, and security together to operate consistently across thousands of locations.

By extending centralized operations through Cisco Intersight, service providers can manage edge infrastructure at scale with capabilities such as:

- Automated provisioning

- Fleet-level lifecycle management

- Integrated security and compliance

- Centralized monitoring and support

This approach gives service providers the foundation to build, operate, and deliver their own edge-based services while maintaining consistency across a highly distributed footprint.

A new job description for the edge

The telecommunications industry has always been about connecting people, devices, and systems. That now includes placing intelligence closer to where decisions are made.

As AI inference and other data processing expand beyond centralized cloud environments, the edge is becoming a critical platform for service providers to build and deliver the next generation of services to their customers.

As Lee Field put it: “What got us here isn’t going to get us to that AI-ready platform for the future.”

For service providers around the world, that future is already taking shape, transforming connectivity into a foundation for intelligent, distributed services.

Learn how Cisco Unified Edge helps service providers build and deliver distributed AI services at scale
