Someone recently asked me about the origin of the Data Virtualization category name. From my unique perspective as the software marketer who drove the term and category’s successful adoption, I enjoyed the chance to share the Data Virtualization category story with them. And in this blog, I thought I might also share it with you.

So, like every good story, I’ll begin at the beginning.

It All Started with Enterprise Information Integration

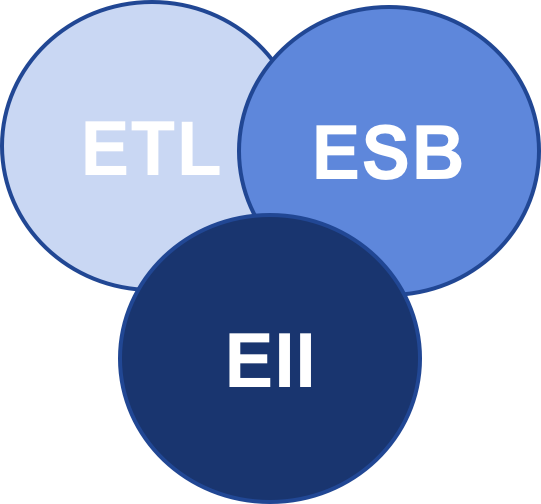

In the early 2000s the data management term Enterprise Information Integration (EII) came to the fore. EII was driven by advancements in high-performance query optimization that made data federation at enterprise scale possible for the first time. Wikipedia’s entry on EII claims the vendor Metamatrix, was the source of the term. And organizations such as TDWI and Gartner identified EII as an essential complement to Extract, Transform, and Load (ETL) and Enterprise Service Bus (ESB), often depicted by three intersecting circles as follows:

In 2005, JP Morgenthal published EII’s first book, “Enterprise Information Integration: A Pragmatic Approach.” In that same year, SIGMOD also published a groundbreaking EII compendium white paper authored by Alon Y. Halevy et al., entitled “Enterprise information integration: successes, challenges and controversies.”

Always on the lookout for new enterprise software opportunities, nearly 30 venture-backed software firms were quick to move into the EII market. Perhaps you remember Avaki, Ipedo, Journee Software, Metamatrix, XAware and more.

Data Services Arises as EII Plus

Service Oriented Architecture (SOA) was also a hot market at that time. Although the goal of SOA is to enable business processes (rather than business intelligence and analytics), business processes also require data on demand. Examples include SOA Data Services that look up prices, customer discounts, ship-to addresses and more within a SOA-enabled order management process.

Gartner’s October 2005 “Management Update: Data Services: The Intersection of Data Integration and SOA,” by Ted Friedman and Jess Thompson, was amongst the first pieces of analyst research to address data from the SOA point of view. Gartner’s definition of Data Services expanded the role of EII beyond BI to also include support for business processes. Technically, it also expanded EII beyond SQL into more SOA-type approaches that leveraged then-emerging technologies such as SOAP and XML.

I joined Composite Software, one of the venture-backed EII firms, as Chief Marketing Officer in 2006. Because Composite’s CEO, Jim Green, had led the definition of CORBA and was a founder of Active Software, a leading SOA provider, Jim saw the same trends that Gartner saw. And thus, Jim and I positioned Composite away from the other EII vendors, instead focusing on the SOA Data Services segment of the market.

Forrester Plants the Data Virtualization Seed

Forrester had a different take on Data Services than Gartner, publishing landmark research in January 2006 entitled “Information Fabric: Enterprise Data Virtualization.” In this work, Forrester analysts Noel Yuhanna and Mike Gilpin were the first amongst the analyst community to introduce the term “Data Virtualization.” This work expanded upon the earlier SQL and BI-only focus of EII, as well as the services concepts of SOA Data Services. It introduced the concept of an Information Fabric architecture that aspired to be the logical place to go for all enterprise information.

Wachovia Implements Data Virtualization

At Composite, a number of our customers began to implement the concepts espoused by Gartner and Forrester. Technologists at Wachovia’s investment banking group were an example of these pioneers. Led by Tony Bishop, they called their new implementation Data Virtualization, and it supported the entire bank’s customer and investment activity information needs.

Composite Evangelizes Data Virtualization Category Name

By the summer of 2007, the Composite field had seen great success promoting the Data Services concept. However, too many of the prospects wanted to implement more transactional SOA capabilities first, before expanding to meet the BI requirements that Composite could fulfill. This good news / bad news result drove Composite’s management team to meet in early 2007 in Half Moon Bay, California, to discuss our “category problem.”

After two days of hashing out various options and messaging, we decided to take the Data Virtualization leap, concurrent with the launch of Composite Information Server 4.5 in June 2007.

Slow and Steady Wins the Race

The CIS 4.5 announcement press release was just the first step in a ten-year category build with many milestones on this journey. Below are just a few highlights:

- The first data virtualization video was published on YouTube in February 2008.

- A series of contributed articles from 2008 through 2013 established a drumbeat of data virtualization messages in the market. “Making the Case for Data Virtualization” and “Maximizing Data Virtualization Benefits” are examples of the over 50 contributed articles that I authored.

- The first dedicated Data Virtualization conference, Data Virtualization Day, was held on October 6, 2010, in New York City. Ted Friedman of Gartner provided the keynote address, complemented by data virtualization customer presentations from NYSE, Pfizer and Wells Fargo.

- Judith Davis and I published Data Virtualization’s first book, “Data Virtualization: Going Beyond Traditional Data Integration to Achieve Business Agility” in September 2011, followed by Rick van der Lans’ book in 2012.

- And the analysts continued their research and reporting with Forrester’s first Data Virtualization Wave Report – “The Forrester Wave™: Data Virtualization, Q1 2012” in January 2012 and Gartner’s first “Market Guide for Data Virtualization” in July 2016.

And today the beat goes on, especially on the analyst front as I noted in my recent blog, “It’s Summertime, So Analysts Turn Up the Heat on Data Virtualization.”

How Will the Data Virtualization Category Story End?

For those of you looking for a fast read, I don’t see the Data Virtualization category story ending anytime soon. Market penetration, as estimated by several analyst firms, is still less than 20%. And when you also consider Gartner’s recent Strategic Planning Assumption that predicts 40% lower data integration costs at organizations that use Data Virtualization, you can see a long future ahead.

So, it’s not too late to write your own Data Virtualization story.

Cheers to your journey!

Jim, you have been in the thick of it. Enjoyed reading your post. I think the action is evolving in the direction of real-time integration of trickle feeds not just into in-memory pub-sub layers and in-memory analytics but also all the way down to Hadoop farms.

By now, XQuery, which in the old days was a contender (Ipedo tried to compete with Composite on that front), has fallen by the wayside. SQL remains. Schema evolution is the evergreen problem statement no matter which stack is being used.

I have seen some recent work in this area that I find appealing in terms of removing the binary choices between big data or fast data on the one hand and between fresh data and consistent data on the other.

Bob,

I received a reference hit from LinkedIn on Jim attracting me to the story. Imagine my surprise when I see a reference to myself. Thanks for the attribution (last name spelled incorrectly though). Boy, this topic matter brings back memories.

JP

JP – My regrets. Will be fixed this morning. -Bob

Jim, I would love to hear your thoughts on the Platform approach we take with SAP HANA including the built in ETL and EII (Smart Data Integration). Do you see advantages or disadvantages?

And on a different note, when do you think Intelligence visualization will replace Data visualization (context and recommendations vs. pure data discovery tools)? People want to discover answers, not just interesting facts, IMO.

Sorry, meant Bob

Good to see my old friend Jim Green recognized for his hard work. Well deserved.

Composite Software caught my attention while I was at Bank of America circa 2010, and I had some good conversations with Jim Green. Data virtualization seems like the obvious solution to the problem of physical data locality. I believe that virtualization on steroids is the future answer that will eliminate ETL, EII, ESB, and all the other ad-hoc methods of data movement. See my article, “Let’s Not Get Physical: Get Logical” at https://www.eckerson.com/articles/let-s-not-get-physical-get-logical

Ted… Great to hear from you. Long time since your excellent “Real Time is the Right Time” presentation at Data Virtualization Day in NYC. Your article, “Let’s Not Get Physical: Get Logical” at https://www.eckerson.com/articles/let-s-not-get-physical-get-logical was an excellent piece of thought leadership on your part. And reading it made me proud of the chance to associate with pioneering data virtualization customers such as you…… Bob