If a heart monitor can’t keep a consistent connection to the nurses’ station, is the patient stable or in distress? If a WAN link to a retail chain store goes down, can the point of sale still process charge cards? If gas wellheads are leaking methane and the LTE connection is unavailable, how much pollution goes untracked? These critical applications are candidates for edge processing. As organizations design new applications incorporating remote devices that cumulatively feed time-critical data to analytics back in the data center or cloud, it becomes necessary to push some of the processing to the edge to decrease network loads while increasing responsiveness. While it is possible to use public clouds to provide processing power for analyzing edge data, there is a real need to treat edge device connectivity and processing differently to minimize time to value for digital transformation projects.

Edge Computing Workloads Are Uniquely Demanding

There are three attributes in particular that need careful consideration when networking edge applications.

Very High Bandwidth

Video surveillance and facial recognition are probably the most visible edge implementations. HD cameras operate at the edge and generate copious volumes of data, most of which is not useful. A local process on the camera can trigger the transmission of a notable segment (movement, lights) without feeding the entire stream back to the data center. But add facial recognition and the processing complexity rises sharply, requiring much faster and more frequent communication with the facial analytics in the cloud or data center. For example, with no local processing at the edge, a facial recognition camera at a branch office would need access to costly bandwidth to communicate with the analytic applications in the cloud. Pushing recognition processing to the edge devices, or their access points, instead of streaming all the data to the cloud for processing decreases the need for high bandwidth while shortening response times.
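The idea of a local process that forwards only notable segments can be sketched in a few lines. This is a minimal illustration, not how any particular camera works: the `Frame` type (a flattened grayscale image), the `notable_frames` function, and the threshold value are all assumptions made for the example.

```python
from typing import Iterable, Iterator, List, Optional, Tuple

# Hypothetical representation: a frame is a flat list of grayscale pixels (0-255).
Frame = List[int]


def mean_abs_diff(a: Frame, b: Frame) -> float:
    """Average per-pixel absolute difference between two same-sized frames."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def notable_frames(
    frames: Iterable[Frame], threshold: float = 10.0
) -> Iterator[Tuple[int, Frame]]:
    """Yield (index, frame) only when a frame differs enough from the previous one.

    Everything else stays on the device, so only the "interesting" part of the
    stream consumes WAN bandwidth. The threshold is an assumed tuning value.
    """
    previous: Optional[Frame] = None
    for index, frame in enumerate(frames):
        if previous is not None and mean_abs_diff(frame, previous) > threshold:
            yield index, frame
        previous = frame


# A static scene, then movement, then a static scene again.
still = [50] * 16
moved = [50] * 8 + [200] * 8
stream = [still, still, moved, moved, still]

sent = [index for index, _ in notable_frames(stream)]
print(sent)  # only the frames where the scene changed are transmitted
```

In this toy stream, two of the five frames cross the threshold, so the uplink carries 40% of the raw data. A real deployment would work on encoded video and use a more robust motion detector.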

Latency and Jitter

Sophisticated mobile experience apps will grow in importance on devices operating at the edge. Apps for augmented reality (AR) and virtual reality (VR) require high bandwidth and very low (sub-10 millisecond) latency. VoIP and telepresence also need superior Quality of Service (QoS) to provide the right experience. Expecting satisfactory levels of service from cloud-based applications over the internet is wishful thinking. While some of these applications run smoothly in campus environments, delivering them is cost prohibitive for most branch and distributed retail organizations using traditional WAN links. Edge processing can provide the necessary levels of service for AR and VR applications.
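To make the latency and jitter requirement concrete, here is a small sketch of how a set of round-trip samples might be checked against the sub-10 millisecond budget mentioned above. The definition of jitter used here (mean absolute difference between consecutive samples) is one common simplification, and the function names and sample values are made up for illustration.

```python
from statistics import mean
from typing import Sequence


def jitter_ms(latencies_ms: Sequence[float]) -> float:
    """Mean absolute difference between consecutive latency samples."""
    if len(latencies_ms) < 2:
        return 0.0
    return mean(abs(b - a) for a, b in zip(latencies_ms, latencies_ms[1:]))


def meets_budget(latencies_ms: Sequence[float], budget_ms: float = 10.0) -> bool:
    """True only if every sample stays within the latency budget."""
    return all(sample <= budget_ms for sample in latencies_ms)


# Hypothetical round-trip samples in milliseconds.
samples = [8.0, 9.0, 7.0, 12.0]

print(f"mean latency: {mean(samples):.1f} ms")
print(f"jitter:       {jitter_ms(samples):.2f} ms")
print(f"within 10 ms: {meets_budget(samples)}")
```

The average of these samples is under 10 ms, yet one sample exceeds the budget. That is why interactive applications are usually judged on worst-case or high-percentile latency rather than the mean.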

High Availability and Reliability

Many use cases for IoT edge computing will be in the industrial sector, with devices such as temperature, humidity, and chemical sensors operating in harsh environments that make it difficult to maintain reliable cloud connectivity. Far out on the edge, devices such as gas field pressure sensors may not need real-time connections, but they do need reliable burst communications to warn of potential failures. Conversely, patient monitors in hospitals and field clinics need consistent connectivity to ensure alerts are received when patients experience distress. Retail stores need high availability and low latency for Point of Sale payment processing and to cache rich media content for better customer experiences.

Building Hybrid Edge Solutions for Business Transformation

Cisco helps organizations create hybrid edge-cloud or edge-data center systems to move processing closer to where the work is being done: indoor and outdoor venues, branch offices and retail stores, the factory floor, and far in the field. For devices that focus on collecting data, the closest network connection, wired or wireless, can provide additional compute resources for many tasks such as filtering for significant events and statistical inferencing using Machine Learning (ML). Organizations with many branches or distributed storefronts designed for people interactions can take advantage of edge processing to avoid depending on connectivity to corporate data centers for every customer transaction.

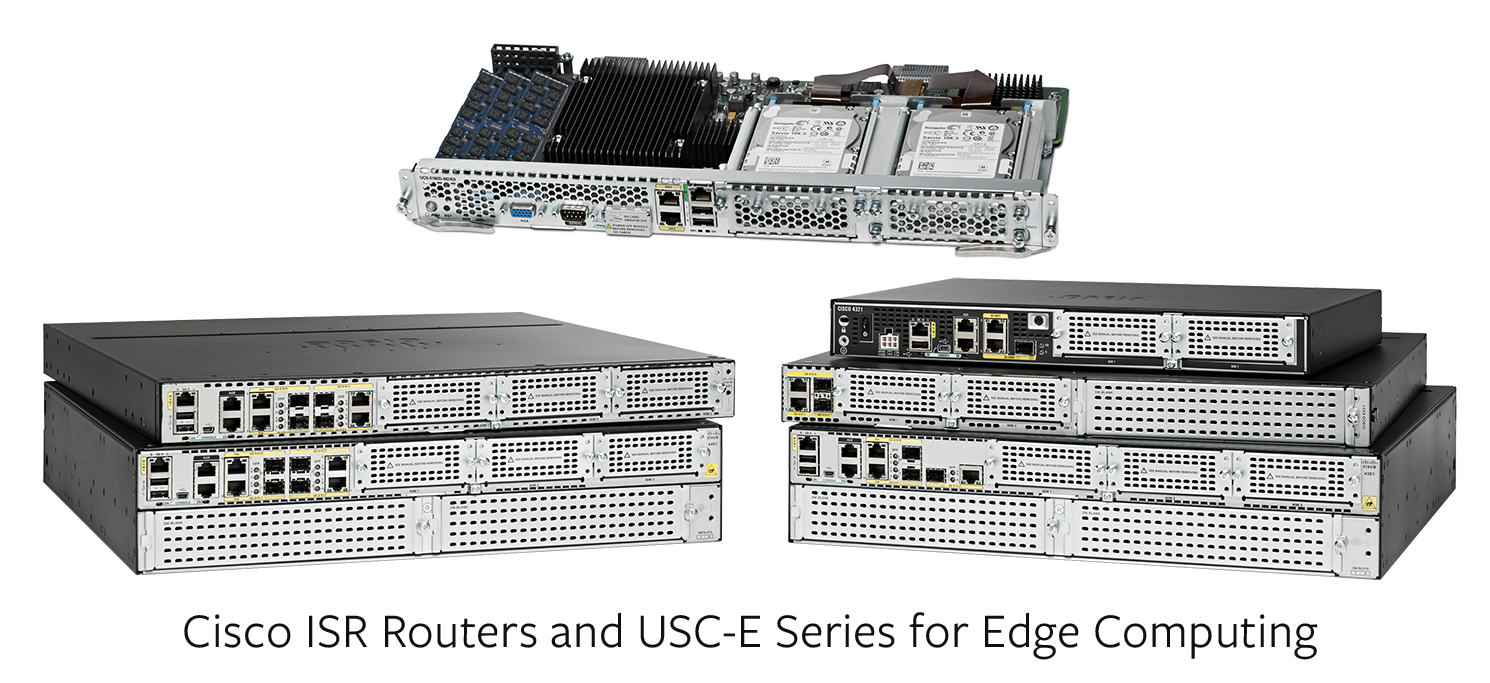

An example of an edge computing implementation is one for a national quick serve restaurant chain that wanted to streamline the necessary IT components at each store to save space while adding bandwidth for employee and guest Wi-Fi access, enable POS credit card transactions even when an external network connection is down, and connect with the restaurant’s mobile app to route orders to the nearest pickup location. Having all the locally-generated traffic traverse a WAN or flow through the internet back to the corporate data center is unnecessary, especially when faster response times enable individual stores to run more efficiently, thus improving customer satisfaction. In this particular case, most of the mission-critical apps run on a compact in-store Cisco UCS E-series with an ISR4000 router, freeing up expensive real estate for the core business—preparing food and serving customers—and improving in-store application experience. The Cisco components also provide local edge processing for an in-store kiosk touch screen menu interface to speed order management and tracking.
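Keeping POS transactions flowing while the external link is down is often handled with a store-and-forward pattern. The sketch below shows the general idea only; it is not a description of the restaurant chain's system or of any Cisco product. The `StoreAndForwardQueue` class, the `uplink` function, and the transaction fields are hypothetical, and a real payment system would add persistence, encryption, and offline risk limits.

```python
from collections import deque
from typing import Callable, Deque, Dict, List

# Hypothetical transaction record for illustration.
Transaction = Dict[str, object]


class StoreAndForwardQueue:
    """Hold transactions locally and forward them in order when the link allows."""

    def __init__(self, send: Callable[[Transaction], bool]) -> None:
        self._send = send  # returns True when the upstream accepted the record
        self._pending: Deque[Transaction] = deque()

    def submit(self, txn: Transaction) -> None:
        """Accept a transaction immediately, then try to forward the backlog."""
        self._pending.append(txn)
        self.flush()

    def flush(self) -> int:
        """Forward queued transactions oldest-first; stop at the first failure."""
        sent = 0
        while self._pending:
            if not self._send(self._pending[0]):
                break
            self._pending.popleft()
            sent += 1
        return sent

    @property
    def pending(self) -> int:
        return len(self._pending)


# Simulated WAN link and upstream receiver (assumptions for the example).
link = {"up": False}
delivered: List[Transaction] = []


def uplink(txn: Transaction) -> bool:
    if not link["up"]:
        return False
    delivered.append(txn)
    return True


queue = StoreAndForwardQueue(uplink)
queue.submit({"id": 1, "amount": 4.50})  # WAN is down: held in the store
queue.submit({"id": 2, "amount": 7.25})
pending_while_down = queue.pending

link["up"] = True                        # WAN comes back
flushed = queue.flush()
print(pending_while_down, flushed, queue.pending)
```

The store keeps serving customers during the outage, and the backlog drains in order once connectivity returns.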

The Cisco Aironet Access Point (AP) platform adds another dimension to edge processing with distributed wireless sensors. APs, like the Cisco 3800, can run applications at the edge. This capability enables IT to design custom apps that process data from edge devices locally and send results to cloud services for further analysis. One example is an edge application that monitors the passing of railway cars and track conditions. Over a rail route, sensors at each milepost collect data on train passages, rail conditions, temperature, traffic loads, and real-time video. Each edge sensor attaches to an AP that aggregates, filters, and transmits the results to the central control room. The self-healing network minimizes service calls along the tracks while maintaining security of railroad assets via sensing and video feeds.
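The aggregate, filter, and transmit step in the railway example could look roughly like the following. This is an illustrative sketch rather than the actual application or any Aironet API: the `Reading` record, the `summarize` function, and the 55 °C alert threshold are assumptions chosen for the example.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, Iterable, List


@dataclass
class Reading:
    """Hypothetical sensor sample collected at one milepost."""
    milepost: int
    rail_temp_c: float


def summarize(
    readings: Iterable[Reading], max_temp_c: float = 55.0
) -> Dict[int, Dict[str, object]]:
    """Reduce raw samples to one small summary per milepost.

    Only the summary (and an alert flag) would be sent to the control room,
    instead of every raw sample. The threshold is an assumed value.
    """
    by_milepost: Dict[int, List[float]] = {}
    for reading in readings:
        by_milepost.setdefault(reading.milepost, []).append(reading.rail_temp_c)

    return {
        milepost: {
            "samples": len(temps),
            "mean_c": mean(temps),
            "max_c": max(temps),
            "alert": max(temps) > max_temp_c,
        }
        for milepost, temps in by_milepost.items()
    }


raw = [
    Reading(12, 30.0), Reading(12, 32.0), Reading(12, 34.0),
    Reading(13, 50.0), Reading(13, 60.0),
]
report = summarize(raw)
for milepost, summary in sorted(report.items()):
    print(milepost, summary)
```

Five raw samples collapse into two summary records, and only milepost 13 is flagged for attention.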

Bring IT to the Edge

There are thousands of ways to transform business operations with IoT and edge applications. Pushing an appropriate balance of compute power to the edge of the cloud or enterprise network to work more closely with distributed applications improves performance, reduces data communication costs, and increases the responsiveness of the entire network. Building on a foundation of an intent-based network with intelligent access points and IOS-XE smart routers, you can link together edge sensors, devices, and applications to provide a secure foundation for digital transformation. Let us hear about your “edgy” network challenges.

Challenges that need to be addressed:

(1) Usually, a program is written in one programming language and compiled for a specific target platform, since the program runs only in the cloud. In edge computing, however, computation is offloaded from the cloud, and the edge nodes are most likely heterogeneous platforms. The runtimes of these nodes differ from each other, so the programmer faces great difficulty in writing an application that can be deployed in the edge computing paradigm.

(2) As in all computer systems, the naming scheme in edge computing is very important for programming, addressing, identification of things, and data communication. However, an efficient naming mechanism for the edge computing paradigm has not yet been built and standardized. Edge practitioners usually need to learn various communication and network protocols in order to communicate with the heterogeneous things in their system. The naming scheme for edge computing needs to handle the mobility of things, a highly dynamic network topology, privacy and security protection, and scalability to a tremendously large number of unreliable things. Traditional naming mechanisms such as DNS and uniform resource identifiers (URIs) serve most current networks very well. However, they are not flexible enough to serve the dynamic edge network, since many of the things at the edge can be highly mobile and resource constrained. Moreover, for some resource-constrained things at the edge of the network, an IP-based naming scheme could be too heavy to support, given its complexity and overhead.