Special Thanks to Amy Chang, Arjun Sambamoorthy, Ruchika Pandey, Ben Risher, Adam Swanda

AI-powered integrated developer environments (IDEs) like Cursor, VS Code, and Windsurf now include agents that utilize Model Context Protocol (MCP) servers, run skills, and generate entire codebases. But as these tools gain access to file systems, APIs, and shell commands, a dangerous model of implicit trust has emerged. Developers are handing over the keys to their environments, and potentially accepting third-party tools and dependencies without verifying if they are secure.

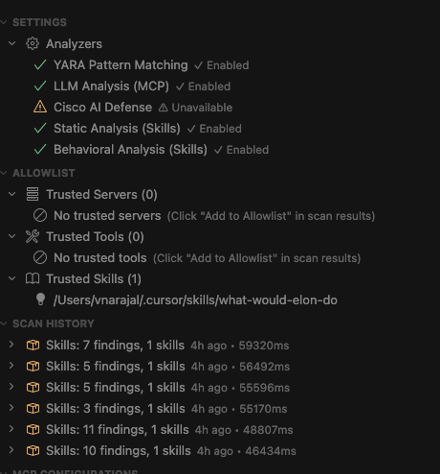

We have integrated our open source scanners, including our most popular tools (Skill Scanner and MCP Scanner), into an IDE extension. The AI Agent Security Scanner for IDEs brings security visibility and control to the AI development toolchain right into your development environment. In addition to scanning MCP servers, agent skills, and AI-generated code, it also includes a tool called Watchdog, which helps prevent context manipulation by ensuring sensitive files are continuously tracked and notifying users of any changes, helping mitigate issues like persistent memory poisoning.

The Problem: New Attack Surfaces

MCP servers have become the connective tissue between AI agents and external services. A single MCP server can grant an AI agent access to databases, file systems, cloud APIs, and shell commands. Agent skills—reusable instruction sets that shape AI behavior—can also inject arbitrary prompts, execute scripts, and modify system configurations. While integral features for our AI-enabled world, they also create a new attack surface. Some known examples of compromise include:

- Prompt injection via tool descriptions: A compromised MCP server can embed hidden instructions in tool metadata that redirect agent behavior without the developer’s knowledge.

- Integrating compromised tools: Attackers can compromise even trusted tools to execute malicious functions such as data wiping.

- Supply chain poisoning: Tampered skill definitions or MCP configurations can persist across sessions, affecting every developer on a team.

- Configuration tampering: Hook injection, auto-memory poisoning, and shell alias manipulation can compromise the IDE environment itself.

Traditional application security tools were not designed for this. Static Application Security Testing (SAST) scanners analyze source code syntax.

Software Composition Analysis (SCA) tools check dependency versions. Neither understands the semantic layer where MCP tool descriptions, agent prompts, and skill definitions operate.

How the AI Agent Security Scanner for IDEs Works

The scanner operates on a defense-in-depth model, consisting of proactive vulnerability prevention during code generation, static analysis of server configurations, behavioral inspection of agent skills, and continuous post-setup integrity monitoring. This multi-layered strategy is executed through four integrated capabilities:

- MCP Server Scanning

The scanner discovers and analyzes MCP server configurations on your machine. It inspects tool descriptions, server configurations, and endpoints for hidden instructions, exfiltration patterns, cross-tool attack chains, and suspicious commands. - Agent Skill Scanning

Skills for Cursor, Claude Code, Codex, and Antigravity are analyzed for command injection, obfuscation, privilege escalation, and supply chain indicators. The scanner examines skill definitions and any referenced scripts or binaries without executing them. - Secure AI-generated code

Project CodeGuard’s security rules are embedded directly into the agent’s context, covering 20+ security domains ranging from input validation and authentication to cryptography and session management. These rules guide AI-generated code toward secure patterns from the start, rather than catching vulnerabilities after the fact. - Watchdog

Watchdog continuously monitors critical AI configuration files for unauthorized modifications. It detects hook injection, auto-memory poisoning, shell alias injection, and MCP configuration tampering using SHA-256 snapshots with HMAC verification. When a change is detected, developers can view diffs, restore from snapshots, or accept the change as a new baseline.

Multiple Analysis Engines, Local-First by Default

The scanner layers multiple analysis engines for comprehensive coverage:

Built for the Developer Workflow

The scanner integrates natively into the IDE experience:

- Security Dashboard with at-a-glance severity overview and trend analysis

- Inline decorations in MCP configuration files highlighting specific findings

- Findings tree with one-click navigation to affected tools and descriptions

- Watchdog panel with diff views and snapshot restoration

- CodeLens annotations on MCP server definitions

- Export to JSON, Markdown, or CSV for integration with security workflows

- Scan comparison to track security posture over time

- Allowlist management for trusted servers, tools, and skills

- Cursor hooks that enforce scan results at MCP execution time — blocking, warning, or prompting based on configurable severity thresholds

Figure 1: Screenshot of the IDE Extension panel showing scan history and other panels.

Figure 1: Screenshot of the IDE Extension panel showing scan history and other panels.

Privacy by Design

The scanner was built with a clear privacy principle: your code stays on your machine.

- No source code is transmitted during scanning

- MCP tools and skill code are never executed — only metadata and descriptions are analyzed

- API keys are stored in the OS keychain via VS Code SecretStorage

- VirusTotal checks use hash-only lookups by default; file upload requires explicit opt-in

- Telemetry is optional and contains no scan content, API keys, file paths, or PII

Getting Started

- Install from the VS Code Marketplace or search “AI Agent Security Scanner for IDEs” in your IDE

- Run the Setup Wizard (launches automatically on first install)

- Open the Command Palette and run Scan All (MCP + Skills)

Within seconds, you will have visibility into the security posture of MCP servers and agent skills in your environment.

The AI agent ecosystem is evolving rapidly. The security tools protecting it need to evolve just as fast. We invite the developer and security communities to try the scanner, file issues, contribute, and help us build the security layer that AI-assisted development deserves.

Check out the documentation and more information available here.