Market disruptions don’t occur every day, or in every industry. When they do occur, however, they usually end up vastly improving the experience or product that consumers receive. Nothing exemplifies this transformation better than the ride sharing market. Several large upstarts have shaken the core tenets of the transportation industry and indirectly led to reforms.

How did this disruption happen so quickly? For something as simple as using ride sharing to get from point A to point B, technology and big data analytics seem almost a non sequitur. But if we peel off the top few layers, we’ll find a fundamental change in how the problem was approached and then solved: with big data and a broader set of analytics. The data collection, processing and analysis were not something the consumer (rider) or service provider (driver) had to think about, but they made possible a seamless and efficient transaction between driver and rider.

Not All Platforms Are Created Equal

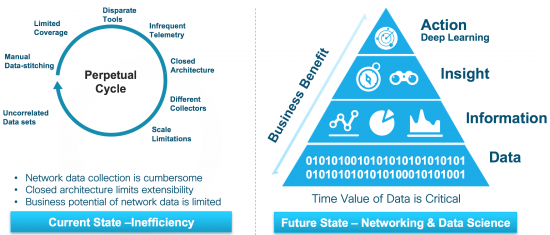

Under the hood of the ride sharing experience is a rich and complex data platform that handles a number of functions – route optimization, geo-location analysis, rider and driver context, payment estimates, billing information, ratings and feedback, and distributed intelligence on demand and supply. The “dirty layer” of data is critical but not exposed to users – this is key to its success. Can we apply these techniques to our business? Networking seems a far cry from ride sharing, but the two have many facets in common. If the same approach were used with network telemetry data, perhaps the inefficiencies of the current approach could be removed. The graph below on the left shows the current state of how data collection and analysis are done. On the right is the desired state: network telemetry converted into insights and meaningful actions.

How would this platform for collecting, processing and analyzing network data be architected? What key functions does it need in order to solve the traditional networking problems and also enable business insights? An ideal reference architecture should take the following approach:

- Improves network efficiencies
  - Use distributed data processing that can create deductions and insights
  - Source data from streaming telemetry, machine-learned data, and insights from network and transit traffic
  - Efficiently and securely transport data and insights to a repository
- Eliminates analytical constraints
  - Integrate network engineering and data science into a single processing stream
  - Provide contextual interpretation with network data graphs through outside sources
  - Make the processing and analysis scalable and extensible
- Addresses developer friction
  - Allow access to data and insights without having to learn networking
  - Create an open ecosystem with standards-based APIs, a query language and DevNet
  - Offer flexible deployment with built-in multi-cloud and on-premises support and multi-tenancy
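To make the first tenet a little more concrete, here is a minimal Python sketch of what "distributed data processing that can create deductions and insights" might look like at the edge: raw telemetry samples are reduced to one small summary per interface before anything is transported to a repository. Everything in it is an assumption made for illustration, including the `Sample` and `Insight` record shapes, the `summarize` function, the device names, and the 0.8 congestion threshold. It does not describe any particular product or telemetry format.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean
from typing import Dict, Iterable, List, Tuple


@dataclass(frozen=True)
class Sample:
    """One raw telemetry reading (hypothetical shape)."""
    device: str
    interface: str
    utilization: float  # link utilization as a fraction, 0.0 to 1.0


@dataclass(frozen=True)
class Insight:
    """A compact summary that would be shipped instead of the raw samples."""
    device: str
    interface: str
    avg_utilization: float
    peak_utilization: float
    congested: bool


def summarize(samples: Iterable[Sample], threshold: float = 0.8) -> List[Insight]:
    """Reduce raw samples to one insight per (device, interface) pair."""
    grouped: Dict[Tuple[str, str], List[float]] = defaultdict(list)
    for s in samples:
        grouped[(s.device, s.interface)].append(s.utilization)

    insights = []
    for (device, interface), values in sorted(grouped.items()):
        avg = mean(values)
        # Flag the interface when its average utilization reaches the threshold.
        insights.append(
            Insight(device, interface, avg, max(values), avg >= threshold)
        )
    return insights


if __name__ == "__main__":
    raw = [
        Sample("edge-1", "eth0", 0.95),
        Sample("edge-1", "eth0", 0.85),
        Sample("edge-1", "eth1", 0.20),
    ]
    for insight in summarize(raw):
        print(insight)
```

The point of the sketch is the shape of the flow rather than the arithmetic: many raw readings go in, a handful of records that a developer can consume without networking expertise come out, and only those records need to travel to the central repository.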

If these tenets are followed and designed into the architecture, network telemetry data could be transformational and deliver a new level of experience in a space that is normally perceived as stodgy and complex.
