Here at Cisco, we consider edge computing to mean that data is processed before it crosses any wide area network (WAN). Crossing the WAN to send data to a traditional data center, whether on-prem or in the cloud, introduces latency and incurs additional costs, both of which edge computing is intended to avoid. That said, there is still flexibility in where you establish the edge, or in other words, how “edgy” your edge compute is. As with most technologies, the best choice depends on your use case, which we’ll explore below.

There are two questions to ask when determining how edgy you want your edge computing to be:

No. 1—Where is data most useful?

The first criterion for determining where to put edge compute is the use case itself. Consider: where will the data be most useful? Sometimes it’s more beneficial to keep the data closer to the edge, and other times it’s more important to centralize and aggregate it. For example, security systems, such as those in a connected car, need to take action at the edge and in real time. It makes more sense, therefore, to keep compute at the edge. Administrative and predictive applications, by contrast, are more useful when data is aggregated. In that case, the data benefits from being centralized.

While the usefulness of the data is critical, it’s not the only consideration.

No. 2—Is it worth the cost?

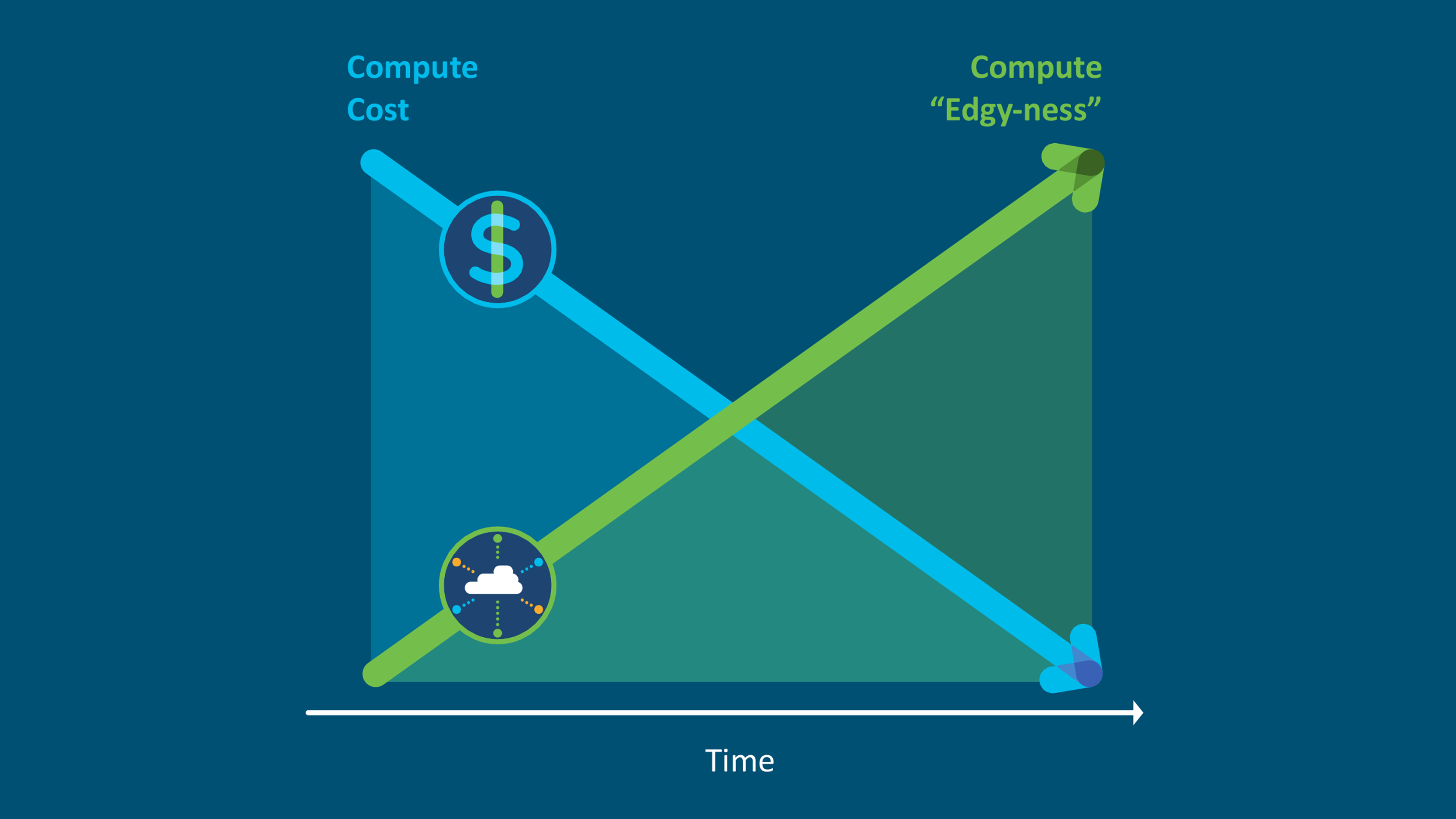

There’s a cost trade-off when you put compute at the edge. The closer to the edge you put compute, the lower the cost of transporting the data, but the higher the compute costs.

Let me explain this with an example. Consider a video camera deployment. You have two options:

1) You deploy a fleet of cheap video cameras on a simple network and do all your compute in the cloud, where you have access to advanced services and cost-efficient hardware. Your compute costs will be low, but your transportation costs will be high because you need to backhaul all of your data to the cloud services provider.

2) You deploy a fleet of very expensive cameras with compute on or very near them (that is, at the edge). Whether you choose to purchase intelligent cameras with machine learning integrated or an appliance that does the machine learning, your compute costs will be high and your backhaul costs will be low.
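The trade-off between these two options can be sketched with a toy cost model. Everything in it is an illustrative assumption rather than real pricing: the `Deployment` fields, the `monthly_cost` function, and all the dollar and data-volume figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    """Hypothetical monthly cost inputs for one camera deployment option."""
    name: str
    compute_cost_per_camera: float   # amortized hardware or cloud compute, $/month
    gb_backhauled_per_camera: float  # data sent across the WAN, GB/month
    backhaul_cost_per_gb: float      # WAN transport cost, $/GB


def monthly_cost(option: Deployment, cameras: int) -> float:
    """Total monthly cost = compute cost + data transport cost."""
    compute = option.compute_cost_per_camera * cameras
    transport = (
        option.gb_backhauled_per_camera * option.backhaul_cost_per_gb * cameras
    )
    return compute + transport


# Made-up numbers for illustration only.
cloud = Deployment("cheap cameras, cloud compute", 5.0, 500.0, 0.05)
edge = Deployment("intelligent cameras, edge compute", 25.0, 10.0, 0.05)

for option in (cloud, edge):
    print(f"{option.name}: ${monthly_cost(option, cameras=100):,.2f}/month")
```

With these invented inputs the edge option comes out cheaper, but a lower per-GB backhaul rate or pricier cameras would tip the balance the other way, which is exactly why the numbers need to come from your own deployment.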

Unfortunately, even in the video camera use case, the answer isn’t as clear as you’d hope. If a Department of Transportation deploys cameras for wrong-way driver detection, myriad factors come into play that affect the cost. Considerations include:

- Distance between intersections

- Whether a high-speed connection is available

- Whether the resources are available to maintain and upgrade the system

All these factors need to be carefully weighed in a cost/benefit analysis.
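One way to start that analysis is to look for the break-even point: the WAN cost per GB at which the edge and cloud options cost the same per camera. The sketch below is only a rough aid under stated assumptions. The function `break_even_backhaul_rate`, its parameters, and the sample figures are hypothetical, and factors such as maintenance are assumed to be folded into the compute cost you pass in.

```python
def break_even_backhaul_rate(
    edge_compute: float,
    cloud_compute: float,
    cloud_gb: float,
    edge_gb: float,
) -> float:
    """Return the $/GB WAN rate at which both options cost the same per camera.

    Solves: cloud_compute + cloud_gb * r == edge_compute + edge_gb * r
    """
    extra_compute = edge_compute - cloud_compute  # what edge hardware adds, $/month
    saved_gb = cloud_gb - edge_gb                 # data kept off the WAN, GB/month
    if saved_gb <= 0:
        raise ValueError("edge option must backhaul less data than the cloud option")
    return extra_compute / saved_gb


# Made-up per-camera figures; the edge compute cost includes an assumed
# $5/month for maintaining and upgrading the edge hardware.
rate = break_even_backhaul_rate(
    edge_compute=30.0, cloud_compute=5.0, cloud_gb=500.0, edge_gb=10.0
)
print(f"Edge pays off when backhaul costs more than ${rate:.3f}/GB")
```

A remote intersection with no high-speed connection would likely sit above the break-even rate and favor the edge, while limited resources for maintenance raise the edge compute cost and push the break-even rate higher.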

By definition, edge computing reduces latency and data transportation costs—but those benefits come at a cost. As you determine how edgy your edge computing should be, consider where your data delivers the most value and weigh that value against the costs incurred. If you need a deeper dive into edge computing, check out my blog post on IoT benefits and use cases.