So this is the Million Dollar Question, right? You, along with the executives sponsoring your particular VDI project wanna know: How many desktops can I run on that blade? It’s funny how such an “it depends” question becomes a benchmark for various vendors blades, including said vendor here.

Well, for the purpose of this discussion series, the goal here is not to reach some maximum number by spending hours in the lab tweaking various knobs and dials of the underlying infrastructure. The goal of this overall series is to see what happens to the number of sessions as we change various aspects of the compute: CPU Speed/Cores, Memory Speed and capacity. Our series posts are as follows:

- Introduction – VDI – The Questions you didn’t ask (but really should)

- VDI “The Missing Questions” #1: Core Count vs. Core Speed

- VDI “The Missing Questions” #2: Core Speed Scaling (Burst)

- VDI “The Missing Questions” #3: Realistic Virtual Desktop Limits

- VDI “The Missing Questions” #4: How much SPECint is enough

- VDI “The Missing Questions” #5: How does 1vCPU scale compared to 2vCPU’s?

- VDI “The Missing Questions” #6: What do you really gain from a 2vCPU virtual desktop?

But for the purpose of this question, let’s look simply at the scaling numbers at the appropriate amount of RAM for the the VDI count we will achieve (e.g. no memory overcommit) and maximum allowed memory speed (1600MHz).

As Doron already revealed in question 1, we did find some maximum numbers in our test environment. Other than the customized Cisco ESX build on the hosts, and tuning our Windows 7 template per VMware’s View Optimization Guide for Windows 7, the VMware View 5.1.1 environment was a fairly default build out designed for simplicity of testing, not massive scale. We kept unlogged VMs in reserve like you would in the real world to facilitate the ability for users to login in quickly…yes that may affect some theoretical maximum number you could get out of the system, but again…not the goal.

And the overall test results look a little something like this:

|

E5-2643 Virtual Desktops |

E5-2665 Virtual Desktops |

||

| 1vCPU, 1600MHz |

81 |

130 |

|

| 2vCPU, 1600MHz |

54 |

93 |

|

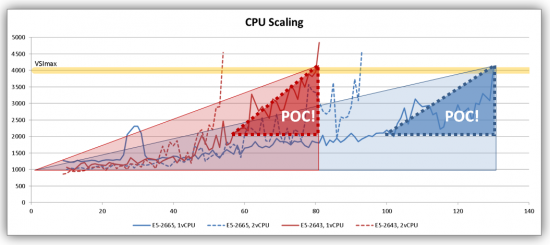

As explained in Question 1, cores really do matter…but even then, surprisingly the two CPUs are neck and neck in the race until around 40 VM mark. Then the 2 vCPU desktops on the quad core CPU really take a turn for the worse:

Why?

Co-scheduling!

When a VM has two (or more) vCPUs, the hypervisor must find two (or more) physical cores to plant the VM on for execution within a fairly strict timeframe to keep that VM’s multiple vCPUs in sync.

MULTIPLE vCPU VMS ARE NOT FREE!

Multiple vCPUs create a constraint that takes time for the hypervisor to sort out every time it makes a scheduling decision, not to mention you simply have more cores allocated for hypervisor to schedule for the same number of sessions: DOUBLE that of the one vCPU VM. Only way to fix this issue is with more cores.

That said: the 2 vCPU VMs continue to scale consistently on the E5-2665 with its double core count to the E5-2643. At around the 85 session mark, the even the E5-2665 can no longer provide a consistent experience with 2vCPU VDI sessions running. I’ll stop here and jump off that soap box…we’ll dig more into the multiple vCPU virtual desktop configuration in a later question (hint hint hint)…

Now let’s take a look at the more traditional VDI desktop: the 1 vCPU VM:

With the quad-core E5-2643, performance holds strong until around the 60 session mark, then latency quickly builds as the 4000ms threshold is hit at 81 sessions. But look at the trooper that the E5-2665 is though! Follow its 1 vCPU scaling line in the chart and all those cores show a very consistent latency line up to around the 100 session mark, where then it becomes somewhat less consistent to the 4000ms VSImax of 130. 130 responsive systems on a single server! I remember when it was awesome to get 15 or so systems going on a dual socket box 10 or so years ago, and we are at 10x the quantity today!

Let’s say you want to impose harsher limits to your environment. You’ve got a pool of users that are a bit more sensitive to response time than others (like your executive sponsors!). 4000ms response time may be too much and you want to halve that to 2000ms. According to our test scenario, the E5-2665 can STILL sustain around 100 sessions before the scaling becomes a bit more erratic in this workload simulation.

Logic would suggest half the response time may mean half the sessions, but that simply isn’t the case as shown here. We reach Point of Chaos (POC!) where there is very inconsistent response times and behaviors as we continue to add sessions. In other words: It does not take many more desktop sessions in a well running environment that is close to the “compute cliff” before the latency doubles and your end users are not happy. But on the plus side, and assuming storage I/O latency isn’t an issue, our testing shows that you do not need to drop that many sessions from each individual server in your cluster to rapidly recover session response time as well.

So in conclusion, the E5-2643, with its high clock speed and lower core count, is best suited for smaller deployments of less than 80 desktops per blade. The E5-2665, with its moderate clock speed and higher core count, is best suited for larger deployments of greater than 100 desktops per blade.

Next up…what is the minimum amount of normalized CPU SPEC does a virtual desktop need?