Examining the FaaS on K8S Market

When AWS introduced the first Function-as-a-Service (FaaS) runtime, Lambda, in 2014 and subsequently enabled developers to evolve beyond microservices to create Serverless application architectures, it was inevitable that on-premises variants would eventually follow. At its core, a FaaS runtime requires a container engine of some sort that it can insert functions into, so it is no surprise that almost four years after the Lambda announcement there are now five FaaS runtimes installable on top of Kubernetes (K8S) that have more than 3,000 stars on GitHub. A sixth behemoth looms on the horizon as well.

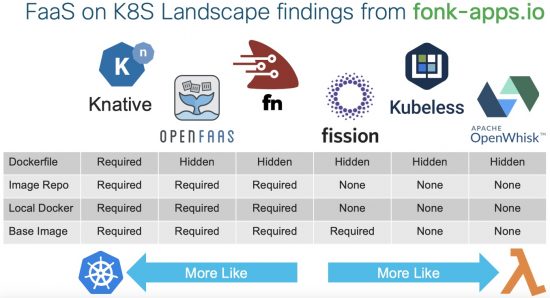

A group of Cisco employees interested in this topic recently founded fonk-apps.io, a collection of simple web applications that run on each of the five FaaS runtimes with 3,000 or more GitHub stars, as a way to understand this space better. In this blog we’ll explore the approach each FaaS runtime takes and what its developer experience is like, summarized in this graphic:

Google’s Knative

The 800-pound gorilla in this space is Google’s Knative. Announced at NEXT in San Francisco this past July, the set of components “focuses on the common but challenging parts of running apps, such as orchestrating source-to-container builds, routing and managing traffic during deployment, auto-scaling workloads, and binding services to event ecosystems.” What is not clear is whether or not Knative wants to be a full-fledged FaaS runtime to challenge the other projects on this list or if it merely wants to be the underpinning for all of them. It’s early enough and Google can bring enough muscle to the table to make either extreme, or somewhere in between, a possible outcome.

If you take a look at the Knative Node.js Hello World example, you can see that developing in Knative requires almost exactly the same set of steps as any other K8S application. Some would argue that hardly makes it Serverless if a developer still has to manage a Dockerfile, an image repository, a local copy of Docker to build against, and reference base images, but in some cases it might be necessary to work at that lower level. It’s so early in Knative’s lifespan that it isn’t yet clear what it will become.

Alex Ellis and OpenFaaS

If there’s an underdog story on this list, it’s what Alex Ellis and friends have done with OpenFaaS. While all the others have some formal company backing, Alex is a guy with a dream who turned it into 14,000 GitHub stars, by far the most of the open source projects compared here. Alex has a passionate group of developers building OpenFaaS with him, and he’s a popular speaker at a variety of events, including our own Cisco DevNet Create this past April.

Working through the FONK Guestbook OpenFaaS example using Node.js reveals that OpenFaaS uses a templating system that hides the Dockerfile from the developer. It’s easy to find should someone want to make lower-level changes, but the OpenFaaS CLI takes care of the build, push-to-repo, and deployment steps, and the base framework includes an API gateway that immediately makes functions callable from tools like curl.
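To make the “developer only writes the function” point concrete, here is a minimal sketch in Python (the FONK example itself uses Node.js). It assumes the classic OpenFaaS python3 template, whose handler.py exposes a handle(req) function that receives the request body as a string, with the template’s hidden Dockerfile and watchdog handling the HTTP plumbing. The guestbook fields name and text are hypothetical, and the sketch only validates and echoes an entry rather than storing it.

```python
import json


def handle(req):
    """Hypothetical guestbook function shaped like the OpenFaaS python3 template.

    Assumption: `req` is the raw HTTP request body as a string, and the
    returned string becomes the HTTP response body.
    """
    try:
        entry = json.loads(req) if req else {}
    except json.JSONDecodeError:
        return json.dumps({"error": "request body must be JSON"})

    if not isinstance(entry, dict):
        return json.dumps({"error": "request body must be a JSON object"})

    # Hypothetical fields; a real guestbook would also persist the entry.
    name = str(entry.get("name", "")).strip()
    text = str(entry.get("text", "")).strip()
    if not name or not text:
        return json.dumps({"error": "both 'name' and 'text' are required"})

    return json.dumps({"name": name, "text": text})


if __name__ == "__main__":
    # Local smoke test; once deployed, the template calls handle() for you.
    print(handle('{"name": "Pete", "text": "Hello from FaaS on K8S"}'))
```

Once the CLI has built, pushed, and deployed it, the OpenFaaS gateway exposes functions under a /function/<name> path, so, assuming a function named guestbook and a gateway at 127.0.0.1:8080, a curl POST of that JSON body to http://127.0.0.1:8080/function/guestbook would invoke it.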

Oracle and the Fn Project

The most recent project to reach the 3,000 GitHub star threshold is the Fn Project, which was born from a subset of the team that worked on Iron.io pretty early in the FaaS movement. They joined Oracle and have contributed most of the engineering, but the project itself is independent.

With Fn, the Dockerfile is obscured from the developer: the CLI helps manage the build, push, and deploy steps, but a power user can simply bring their own Dockerfile and the CLI will build that as the function. What’s exciting about Fn is the emergence of its new FDK (Function Development Kit), which is slowly rolling out for a variety of languages to help accelerate function development and provide access to HTTP primitive objects, making building REST APIs with Fn far more flexible.

“Oracle has been making major investments in developers, open source, and serverless. We are platinum members of the CNCF, core contributors to Kubernetes and other projects, and have a rapidly growing serverless group responsible for the Fn Project and Oracle Functions, an upcoming managed service based on unforked open-source Fn. It’s an exciting place to be while helping drive the industry forward and serverless into the enterprise.”

— Chad Arimura, VP Serverless Advocacy, Oracle

A popular way for developers to interact with the public cloud FaaS runtimes is the Serverless Framework, which abstracts away constructs that differ slightly from one FaaS engine to the next so that developers can focus on the functionality of the individual functions. Fn is one of three FaaS runtimes on this list that support the Serverless Framework, which makes the developer experience a little more uniform across different FaaS targets.

Platform9’s Fission

Fission, which is primarily developed by Platform9 engineers, uses a base image it calls an “environment” that functions get injected into. This removes the burden of having a local Docker instance to build custom images against or having to manage container images in a repository of any kind, while still giving the developer the flexibility to provide a custom runtime environment if needed.

Most of the entries already covered on this list instantiate function-specific containers that always stay resident in memory, which is why the image-building process is exposed to developers. Fission, however, maintains a pool of containers that already hold language runtimes and only have function code loaded into them when a function is invoked, a model much closer to how public cloud services like Lambda do it. If you work through the FONK Guestbook Fission Python example, you can see this firsthand. Fission also has a very flexible API gateway that enables developers to build routes independent of function names and paths.
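The pooled execution model described above can be sketched as a toy, in-process Python model. This is not Fission’s or OpenWhisk’s actual code: Container, WarmPool, and invoke are hypothetical names, and exec() on a source string stands in for injecting function code into an already-running language runtime. Generic containers are pre-warmed for one runtime; the first call to a function takes one from the pool and loads the code into it, later calls reuse that container, and the pool is topped back up so the next new function also starts warm.

```python
from collections import deque
from dataclasses import dataclass
from typing import Any, Callable, Deque, Dict, Optional


@dataclass
class Container:
    """A running container with a language runtime but, initially, no function code."""

    runtime: str
    handler: Optional[Callable[..., Any]] = None

    def load(self, source: str, entrypoint: str) -> None:
        # Stand-in for injecting function code into the live runtime at invocation time.
        namespace: Dict[str, Any] = {}
        exec(source, namespace)
        self.handler = namespace[entrypoint]


class WarmPool:
    """Toy model of a pool of generic, pre-warmed containers for one runtime."""

    def __init__(self, runtime: str, size: int) -> None:
        self.runtime = runtime
        self.size = size
        self.idle: Deque[Container] = deque(Container(runtime) for _ in range(size))
        self.specialized: Dict[str, Container] = {}  # function name -> loaded container
        self.cold_loads = 0  # how many times code had to be loaded into a container

    def _refill(self) -> None:
        # Keep the pool topped up so the next new function also finds a warm runtime.
        while len(self.idle) < self.size:
            self.idle.append(Container(self.runtime))

    def invoke(self, name: str, source: str, entrypoint: str, *args: Any) -> Any:
        container = self.specialized.get(name)
        if container is None:
            # First call: take a generic container (or start one if the pool is empty)
            # and load this function's code into it.
            container = self.idle.popleft() if self.idle else Container(self.runtime)
            container.load(source, entrypoint)
            self.specialized[name] = container
            self.cold_loads += 1
            self._refill()
        return container.handler(*args)


if __name__ == "__main__":
    pool = WarmPool("python", size=2)
    source = "def main(name):\n    return 'Hello, ' + name\n"
    print(pool.invoke("hello", source, "main", "FONK"))  # loads code into a warm container
    print(pool.invoke("hello", source, "main", "K8S"))  # reuses the loaded container
    print(pool.cold_loads, len(pool.idle))  # one code load, pool back to full size
```

In the resident-container model used by most of the other runtimes, the equivalent of load() happens at image build time instead, which is why those runtimes surface Dockerfiles and image registries to the developer.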

Bitnami’s Kubeless

Kubeless, sponsored by Bitnami, provides Serverless Framework support, and the FONK Guestbook Kubeless Node.js example uses it to ease the developer experience considerably. Kubeless has what might be the most comprehensive set of examples on this list, making it easy to learn. But in what is perhaps the first sign of a shift in this market, Kubeless founder Sebastien Goasguen recently left Bitnami for a new startup called TriggerMesh, which is a “Knative Platform in the Cloud and On-Premise” according to its website.

Still, according to the recently published “Survey on Serverless Technologies” conducted by The New Stack, Kubeless was the most popular choice among FaaS on K8S platforms, showing that it has prominence in this young marketplace. The Kubeless Serverless Framework plugin is very easy to use and provides a wide variety of ingress options, which makes exposing Kubeless functions as a REST API a clean development experience.

IBM and Apache’s OpenWhisk

IBM was the first public cloud vendor to open-source its FaaS engine, OpenWhisk, which it then partnered with the Apache Software Foundation on. Although technically still an Apache Incubator project, OpenWhisk is used by IBM in production and has the most contributors of any of the FaaS on K8S runtimes on this list. It puts the most thought into security, with built-in access keys, some multi-tenancy, and HTTPS support for the API gateway. It also supports a Serverless Framework plugin, and OpenWhisk is the second FaaS runtime on this list that maintains a pool of containers holding language runtimes, with function code loaded into them only when a function is invoked.

It can be installed natively on Docker, but if you run through the FONK Guestbook OpenWhisk Node.js example, you’ll find it works on K8S as well. IBM Distinguished Engineer Michael Behrendt said, “Going forward, we’ll continue to focus on a great developer experience, enterprise-strength security, & performance and integration with the Kubernetes and Knative ecosystem.” Not only does IBM run OpenWhisk in production, but Adobe’s upcoming I/O Runtime will be based on OpenWhisk, and Red Hat has developed a GitHub repo that demonstrates how to run OpenWhisk on OpenShift.

What’s Next?

From security to data gravity-based latency reduction, there are lots of reasons why companies might be interested in FaaS on K8S as the Serverless application architecture movement continues to gain momentum. The current landscape offers a variety of choices in terms of the developer experience and the mechanics of executing the functions. Clearly the market will not support six or seven vendors in the long term, so some form of consolidation is inevitable, but who will take control of this space isn’t clear yet.

This is one of the reasons fonk-apps.io was created: to make an apples-to-apples comparison among these leading, and very different, FaaS on K8S contenders easier. Some organizations might favor a developer experience more closely related to the native K8S model, where more control is possible over the container within which a function executes. Others might prefer to have developers iterate more quickly by focusing on the functionality within the functions themselves. Some version of each model will likely survive the impending market consolidation, and keeping an eye on what emerges will make for an interesting future. Regardless of the outcome, you’ll find instructions on fonk-apps.io for how to install each of these FaaS runtimes on the DevNet Cisco Container Platform Sandbox for free so you can try them out for yourself.

Pete, great job. A very well-written blog for understanding the basics behind the FaaS framework and industry landscape. A really good use case for Cisco Container Platform.

Hi,

I think the author may have missed the build support in Knative: Dockerfiles are one of several ways to get a container image that can be used with Knative. For Node.js, you might look at the Node.js example that uses a buildpack, which shows a non-Dockerfile deployment:

https://github.com/knative/docs/tree/master/serving/samples/buildpack-function-nodejs

Hi Evan,

Thanks so much for taking the time to point this out. I definitely missed it and that is a cleaner way to build than what the default Hello World example provides.

I guess that raises the question, though, of why there are multiple ways of performing the build. And why would the Hello World example use the more cumbersome choice?

It's early in the project, and I get how different techniques evolve over time, or that you might choose one over the other to minimize the learning curve for people coming from native Kubernetes development, so I'd guess that's the reason.