Written by David Ward, CTO of Engineering and Chief Architect, and Maciek Konstantynowicz, Distinguished Engineer; Chief Technology & Architecture Office

For those that don’t want all the gory details, this is a short version of the longer blog conversation that can be found here. The longer blog goes into quite a bit of detail on the technology; please check it out.

“Holy Sh*t, that’s fast and feature rich” is the most common response we’ve heard from folks who have looked at some new code made available as open source. A few weeks back, the Linux Foundation launched a Collaborative Project called FD.io (“fido”). One of the foundational contributions to FD.io is code that our engineering team wrote, called Vector Packet Processing or VPP for short. It was the brainchild of a great friend and perhaps the best designer and author of high performance network forwarding code, Dave Barach. He and the team have been working on it since 2002 and it’s on its third complete rewrite (that’s a good thing!).

The second most common thing we’ve heard is “why did you open source this?” There are a couple of main reasons. First, the goal is to move the industry forward with regard to virtual network forwarding. The industry is missing a feature-rich, high performance/scale virtual switch-router that runs in user space, has all the modern goodies from hardware accelerators, and is built on a modular architecture. VPP can run either as a VNF or as a piece of virtual network infrastructure in OpenStack, OpenNFV, OpenDaylight or any of your other fav *open*. The real target is container and micro-services networking. In that emerging technology space the networking piece is still really early, and before it goes fubar we’d like to help and avoid getting “neutroned” again.

Why Forwarding & VPP are Important

So, why is the forwarding plane so important, and why is it where the cool kids are hanging out? Today’s MSDCs, content delivery networks and fintech companies operate some of the largest and most efficient data centers in the world. In their journey to get there they have demonstrated the value of four things: 1) using speed of execution as a competitive weapon, 2) taking an industrial approach to HW & SW infrastructure, 3) automation as a tool for speed and efficiency and 4) full incorporation of a devops deployment model. Service providers have been paying attention and are looking to apply these lessons to their own service delivery strategies. VPP not only enables all the features of Ethernet L2, IP4&6, MPLS, Segment Routing, Service Chaining, all sorts of L2 and IP4&6 tunneling, etc., it does so out of the box, unbelievably fast on commodity compute hardware and in full compliance with IETF RFC networking specs.

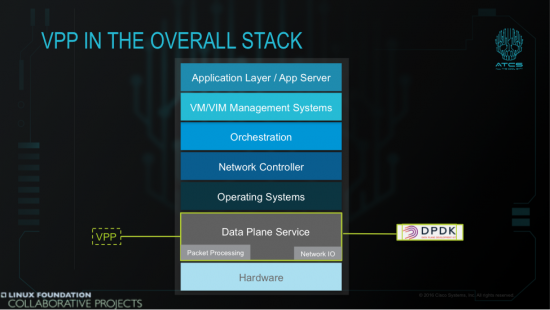

Most of the efforts towards SDN, NFV, MANO, etc. have been on control, management and orchestration. FD.io aims to focus where the rubber hits the road: the data plane. VPP is straight up bit banging forwarding of packets, in real-time, at real linerates, with zero packet loss. It’s enabled w/ high performance APIs northbound but not designed for any specific SDN protocol; it loves them all. It’s not designed for any one controller either; it also loves them all. To sum up, VPP fits here:

What we mean is a “network” running on virtual functions and virtual devices: a network running as software on computers. That means VPP-based virtualized devices (multi-functional virtualized routers, virtualized firewalls, virtualized switches, host stacks, and often function-specific NAT or DPI virtual devices acting as software bumps in the wire) running on computers and in virtualized compute systems, building a bigger and better Internet. The questions service designers, developers, engineers and operators have been asking are: how functional and deterministic are they? How scalable? How performant, and is that enough to be cost effective?

Further, how much do their network behavior and performance depend on the underlying compute hardware, whether it’s x86 (x86_64), ARM (AArch64) or other processor architectures?

If you’re still following my leading statements, you already know the answer to that; lots of us would be guessing, and both groups would be right! Of course it depends on hardware. Internet services are all about processing packets in real-time, and we love a ubiquitous and infinite Internet. More Internet. So this SW-based network functionality must be fast, and for that the underpinning HW must be fast too. Duh.

Now, here is where reality strikes back (Doh)! Clearly, today there is no single answer:

- Some VNFs are feature-rich but have non-native compute data planes, as they’re just retrofits or reincarnations of their physical implementation counterparts: the old lift and shift from bare metal to hypervisor.

- Some perform better with native compute data planes but lack required functionality, and still have a long way to go before they cover enough network functions to deliver the richness of service that network service consumers are used to and demand.

- VPP tries to answer what can be done, what technology or rather set of technologies and techniques can be used to progress virtual networking towards the actual fit-for-purpose functional, deterministic and performant service platform it needs to be to realize the promises of fully-automated network service creation and delivery.

Deterministic Virtual Networking

A quick tangent on what I mean by a reliable and deterministic network. Our combined Internet experience has taught us a few simple things: fast is good and never fast enough, high scale is good and never high enough, and delaying and losing packets is bad. We also know that bigger buffer arguments are a tiresome floorwax-and-dessert-topping answer to everything. Translating this into the best practice measurements of network service behavior and network response metrics:

- packet throughput,

- delay, delay variation,

- packet loss,

- all at scale befitting Internet and today’s DC networks,

- all per definitions in [RFC 2544] [RFC 1242] [RFC 5481].
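To make these metrics concrete, here is a minimal sketch of how they could be computed from per-packet timestamps of a single test run. It is not part of VPP or FD.io: the function name `summarize_trial` and its input format are hypothetical, chosen purely for illustration. Delay variation is shown in the two forms described in RFC 5481 (IPDV between consecutive packets, PDV relative to the minimum delay), simplified here by computing IPDV over consecutive received packets only.

```python
def summarize_trial(sent_us, received_us, duration_us):
    """Summarize one test run (illustrative helper, not a VPP API).

    sent_us / received_us: dicts mapping packet sequence number to a
    timestamp in microseconds. duration_us: length of the run.
    """
    # One-way delay for every packet that arrived, in send order.
    delays = [received_us[seq] - sent_us[seq]
              for seq in sorted(sent_us) if seq in received_us]
    if not delays:
        raise ValueError("no packets received, nothing to measure")

    lost = len(sent_us) - len(delays)
    min_delay = min(delays)
    return {
        # Rate at which packets actually came out the other side.
        "rx_rate_pps": len(delays) * 1_000_000 / duration_us,
        "loss_ratio": lost / len(sent_us),
        "min_delay_us": min_delay,
        "max_delay_us": max(delays),
        # PDV: each delay relative to the minimum delay seen.
        "pdv_us": [d - min_delay for d in delays],
        # IPDV: difference between delays of consecutive packets.
        "ipdv_us": [b - a for a, b in zip(delays, delays[1:])],
    }


if __name__ == "__main__":
    sent = {0: 0, 1: 1000, 2: 2000, 3: 3000}
    received = {0: 100, 1: 1300, 3: 3200}  # packet 2 was lost
    print(summarize_trial(sent, received, duration_us=4000))
```

A deterministic forwarder is one where `loss_ratio` stays at zero and the `pdv_us` values stay small and stable from run to run.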

So here is what we need, and what we should expect, measured by these simple metrics:

Repeatable linerate performance, deterministic behavior, no (aka 0, null) packet loss, realizing required scale, and no compromise.
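One common way to put a number on "linerate with zero packet loss" is to search for the highest offered rate at which a device drops nothing, in the spirit of the RFC 2544 throughput test. The sketch below uses a binary search; that search strategy, the function name `find_zero_loss_rate` and the `run_trial` callback are assumptions made for illustration, not something FD.io or VPP prescribes.

```python
def find_zero_loss_rate(run_trial, line_rate_pps, resolution_pps):
    """Binary-search the highest rate (packets/s) with zero loss.

    run_trial(rate_pps) is a hypothetical callback that offers traffic
    at the given rate and returns the number of packets lost.
    """
    # Best case: the device forwards at full linerate without loss.
    if run_trial(line_rate_pps) == 0:
        return line_rate_pps

    lo, hi = 0, line_rate_pps  # lo: treated as loss-free, hi: known lossy
    while hi - lo > resolution_pps:
        mid = (lo + hi) // 2
        if run_trial(mid) == 0:
            lo = mid   # no loss, try faster
        else:
            hi = mid   # loss seen, back off
    return lo


if __name__ == "__main__":
    # Stand-in for a real traffic generator: a pretend device that
    # starts dropping above 9 Mpps (an arbitrary, made-up capacity).
    def fake_trial(rate_pps):
        return max(0, rate_pps - 9_000_000)

    print(find_zero_loss_rate(fake_trial, 14_880_952, 1_000))
```

Repeating the search several times and comparing the results is a simple check on the "repeatable" part of the requirement.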

If this can be delivered by virtual networking technologies, then we’re in business as an industry. That’s easy to say, and it’s possible for implementations on physical devices built for the sole purpose of implementing those network functions (forwarding ASICs have an excellent reason to exist and will continue to into the future), but can it be done on COTS general purpose computers? The answer is: it depends on the network speed. For 10Mbps, 100Mbps and 1Gbps, today’s computers work… ho, hum. Thankfully, COTS computing now has low enough cost, enough clock cycles and cores, and fast enough NICs for 10GE. That is still a yawn for building real networks. For virtualized networking to be economically viable, we need to make these concepts work for Nx10 | 25 | 40GE, …, Nx100, 400, 1T and faster.
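A bit of arithmetic shows why those speeds are demanding. On the wire, every Ethernet frame carries 20 extra bytes (preamble, start-of-frame delimiter and inter-frame gap), so the worst case of minimum-size 64-byte frames sets the packet rate a forwarder must sustain. The sketch below works that out; the 3 GHz core clock is an assumption picked for illustration, not a measured VPP figure, and the function names are hypothetical.

```python
WIRE_OVERHEAD_BYTES = 20  # 7 preamble + 1 start-of-frame + 12 inter-frame gap


def line_rate_pps(link_bps, frame_bytes=64):
    """Maximum whole packets per second on an Ethernet link."""
    bits_per_frame = (frame_bytes + WIRE_OVERHEAD_BYTES) * 8
    return link_bps // bits_per_frame


def cycles_per_packet(link_bps, core_hz, frame_bytes=64):
    """CPU cycles one core can spend per packet at full linerate."""
    return core_hz / line_rate_pps(link_bps, frame_bytes)


if __name__ == "__main__":
    core_hz = 3_000_000_000  # assumed 3 GHz core, for illustration only
    for name, bps in [("10GE", 10 * 10**9), ("40GE", 40 * 10**9),
                      ("100GE", 100 * 10**9)]:
        pps = line_rate_pps(bps)
        budget = cycles_per_packet(bps, core_hz)
        print(f"{name}: {pps:,} pps, {budget:.1f} cycles/packet on one core")
```

At 10GE with 64-byte frames that is 14,880,952 packets per second, which leaves a single assumed 3 GHz core roughly 200 cycles per packet; every step up in link speed shrinks that budget proportionally.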

FD.io & VPP Resources

The best part of open source projects is the opportunity to work with the code. In the open. You can get instructions on setting up a dev environment here and look at the code here. Finally, check out the developer wiki here. There are a ton of getting-started guides, tutorials, videos and docs covering not only how to get started but how everything works. Even videos of Dave Barach. Please don’t mind the choice of language; we don’t let him out of his cave very much.

The code is solid, completely public and under the Apache license. We’re already using an “upstream first” model at Cisco and continuing to add more features and functionality to the project. I encourage you to check it out and to consider participating in the community as well. There’s room for newbies and greybeards. We already have a great set of partners and individuals contributing to and utilizing FD.io in the open source community, and a good sized ecosystem is emerging. Clearly from this conversation you can tell I think VPP is a great step forward for the industry. It has great potential for fixing a number of architectural flaws in current SDN, orchestration, virtual networking and NFV stacks and systems. Most importantly, it enables a developer community around the data plane to emerge and move the industry forward by making a modular, scalable, high performance data plane, with all the goodies, readily available.

Realizing a Vision

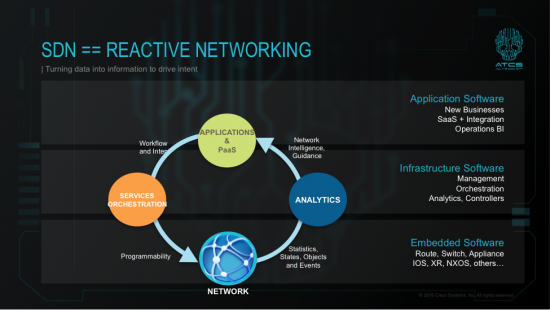

Five or so years ago we began evangelizing this diagram as a critical target for SDN and as a way to establish a trajectory for the industry.

We have been making progress towards that target in the open source community. Services orchestration now includes SDN controllers (check). The network can now be built around strong, programmable forwarders == VPP (check). Providing a solid analytics framework is immediately next and work is already underway. This year, through work with Cloud Foundry, we hope to realize our goal of making the network relevant in the PaaS layer. These latter endeavors will be the subject of future blogs.

It’s great to see my old buddy Dave Barach is still the master bit banger. Nice job.

What Dave Barach, Dave Ward and Maciek have released is truly a gift to the world and its impact on the industry cannot be overstated. I am also delighted to see that Cisco finally “has got it” and is finally sensing the tectonic shift that is underway in networking.

Thank you very much, I learned a lot from your post, best regards..