Recently, the conversations I have been having about Software Defined Networks have shifted from supplying agile networking for VM provisioning and live migrations to looking at the problem through the lens of the application team. In the past, I spoke about provisioning and moving VMs as a surrogate for the application. But an application and a VM are not always in a one-to-one ratio. Treating them as equivalent is a convenient simplification for everyone except perhaps the IT operations teams provisioning multi-server, tiered, or distributed applications.

In this blog post, I want to complement Gary Kinghorn’s blog, The Promise of an Application Centric Infrastructure (ACI), by briefly sharing insights from talking with many IT operations managers and architects responsible for traditional enterprise applications as well as the new distributed applications for cloud infrastructure. What they are saying has profound implications for cloud infrastructure.

Conventional IT organizations have dedicated teams managing their applications, compute, network, security, and storage infrastructure. These functional organizations must work together much like runners in a relay race to manage the lifecycle of the applications used by an enterprise. These runners need to be agile but the racecourses are not the same every race.

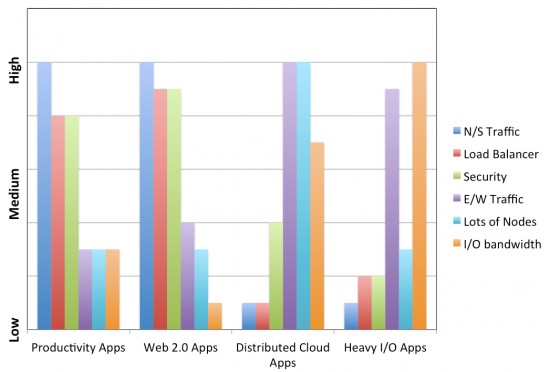

When you look at some categories of applications side by side, the implications for business agility – the speed at which a business can execute on a strategy (esp. one dependent on IT) – and the requirements on application, network and security teams become apparent.

- Productivity applications like Microsoft Exchange and Web 2.0 collaboration applications like SharePoint generate a lot of client-server (North-South) traffic from the hundreds or thousands of end users of these applications within the enterprise. As these server deployments scale up users, the load is typically balanced across the edge servers using server load balancers or application delivery controllers. Additionally, because these applications are highly exposed to threats from the external network, they have priority requirements for security devices to prevent Denial of Service attacks and deliver secure access.

- To scale I/O-intensive applications such as SQL Server databases, IT organizations use clustered database servers to handle the transactions or queries. These clusters need deterministic network performance between servers and storage arrays, which can be measured by latency and assured bandwidth.

- New distributed cloud and big data applications like Hadoop can employ tens or hundreds of servers with unique I/O patterns between servers and terabytes of collected data. They require guaranteed I/O characteristics for optimal performance between servers, local data, and the big data repositories. This traffic flows between servers and shared storage within the data center and is often characterized as heavy East-West traffic.

Every installation has its unique fingerprint of application requirements but the chart below is useful to provide a comparison and contrast of the requirements for these categories of applications.

IT organizations that want to work faster need to define application requirements according to these major dimensions and learn to accelerate the workflow of application deployment across pooled network, security, compute and storage infrastructure.
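As a rough illustration of what "defining application requirements according to these dimensions" could look like, here is a minimal Python sketch. Everything in it is hypothetical: the `AppProfile` class, its field names, and the `required_services` helper are my own inventions for this post, not part of ACI or any Cisco API. The East-West label on the database category is also my assumption, since the discussion above applies that label only to big data applications.

```python
from dataclasses import dataclass
from enum import Enum


class Traffic(Enum):
    NORTH_SOUTH = "north-south"  # client to server
    EAST_WEST = "east-west"      # server to server, or server to storage


@dataclass(frozen=True)
class AppProfile:
    """Hypothetical record of one application category's requirements."""
    name: str
    traffic: Traffic
    needs_load_balancing: bool
    needs_perimeter_security: bool
    needs_deterministic_io: bool


# The three categories described above, encoded as illustrative profiles.
PROFILES = [
    AppProfile("productivity/collaboration", Traffic.NORTH_SOUTH, True, True, False),
    # East-West here is an assumption; the post only says server-to-storage.
    AppProfile("clustered database", Traffic.EAST_WEST, False, False, True),
    AppProfile("distributed big data", Traffic.EAST_WEST, False, False, True),
]


def required_services(profile):
    """List the infrastructure services a profile asks for."""
    services = []
    if profile.needs_load_balancing:
        services.append("load balancing")
    if profile.needs_perimeter_security:
        services.append("perimeter security")
    if profile.needs_deterministic_io:
        services.append("latency and bandwidth guarantees")
    return services


for p in PROFILES:
    print(f"{p.name}: {p.traffic.value}, {required_services(p)}")
```

Writing the requirements down as data like this, rather than as tribal knowledge spread across teams, is what lets each team in the relay see what the application needs before the baton reaches them.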

Last June, Cisco revealed its vision for Application Centric Infrastructure, an innovative, secure architecture that delivers centralized, application-driven policy automation, management, and visibility for physical and virtual networks from a single point of management. It provides a common programmable automation and management framework for the network, application, security, services, compute, and operations teams, making IT more agile while reducing application deployment time.

How about an application centric infrastructure being incorporated within a load balancer with various application specific templates?

Naresh, you may be interested in the solution briefs with ACI ecosystem partners F5 and Citrix. You can find them here:

Cisco and F5: Building Application Centric, Service Enabled Data Centers

http://www.cisco.com/en/US/solutions/collateral/ns340/ns517/ns224/ns945/solution-brief-c22-730004.html

Cisco and Citrix: Build Application Centric, Application Delivery Controller-Enabled Data Centers.

http://www.cisco.com/en/US/solutions/collateral/ns340/ns517/ns224/ns945/solution-brief-c22-730002.html

Application Network Profiles can absolutely be created specific to various applications to incorporate with load balancers. Write me to learn more and get started.