History has been very clear that for broad industry adoption of the 800G generation, an optical pluggable form factor must be judged on:

(1) Backwards compatibility with previous generation optics

(2) 100G+ electrical performance

(3) Excellent thermal power dissipation

The recent QSFP-DD MSA updates ensure all of these, but I’d like to focus on thermal performance here. Starting with 400G modules and continuing with 800G pluggable modules, the challenge of keeping optical pluggable modules cool has been front of mind for many.

Let’s be clear: no one wants optical modules to consume high power, and the vast majority of modules deployed today operate well below the maximum power levels that systems are designed to support.

However, from a system designer’s perspective, it is important to know the maximum module power a system can handle while keeping the module at its optimal operating performance. And from a network operator’s perspective, it is important to know that the module type can support all the different reach options you want to deploy.

With 400G, we saw coherent optical modules fit into the QSFP-DD form factor. This gave network operators maximum flexibility, because the same port in a deployed system can support everything from copper cables through to coherent optics. This ability is accelerating the exciting new Cisco Routed Optical Networking solution.

With the rise of 800G modules, the QSFP-DD MSA took the opportunity to enhance both the electrical and thermal capabilities to be ready for all variants of modules. The updated D6.0 specifications, which include the QSFP-DD800 and QSFP112 module definitions, were just released. The MSA also published an informative thermal whitepaper showcasing the flexibility that the QSFP module family offers when it comes to thermal design. The fixed heatsink on the nose of the module outside the faceplate, combined with a flat-top module inside the cage, enables optimized heatsink designs that provide excellent thermal performance while minimizing airflow impedance (which means you can run the fans slower and save more power).

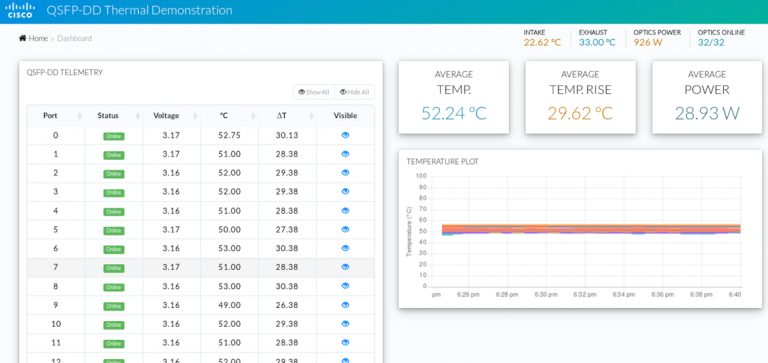

For OFC 2021, we thought we’d showcase what all of this means with a simple QSFP-DD800 thermal demo. Using a prototype 1RU system that supports 32 QSFP-DD800 ports, we installed 32 thermal load modules from MultiLane, each set to 30W.
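To put the demo configuration in perspective, here is a small back-of-the-envelope sketch in Python. It uses only the figures quoted above (32 ports, 30W per load module, 800G per port); the function and constant names are made up for illustration, and the power total covers the pluggable modules only, not the ASIC, fans, or power supplies.

```python
# Demo configuration as stated in the post.
PORTS = 32
MODULE_POWER_W = 30.0      # thermal load setting per module
PORT_RATE_GBPS = 800       # QSFP-DD800 port rate


def total_module_power_w(ports: int, watts_per_module: float) -> float:
    """Heat load from the pluggable modules alone, in watts."""
    return ports * watts_per_module


def aggregate_bandwidth_tbps(ports: int, gbps_per_port: float) -> float:
    """Front-panel capacity in Tb/s if every port ran at full rate."""
    return ports * gbps_per_port / 1000.0


if __name__ == "__main__":
    power = total_module_power_w(PORTS, MODULE_POWER_W)
    capacity = aggregate_bandwidth_tbps(PORTS, PORT_RATE_GBPS)
    print(f"Module heat load: {power:.0f} W")
    print(f"Front-panel capacity: {capacity:.1f} Tb/s")
```

In other words, the 1RU chassis has to remove roughly a kilowatt of heat from the module cages alone in this worst-case setup.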

The key parameters we worry about when assessing a system’s ability to cool a pluggable module are the case temperature and the temperature rise relative to the ambient temperature.

With all 32 thermal modules set to their maximum setting of 30W, the fixed 1RU system kept them cool with ease. The average temperature rise of ~30°C is well below the maximum target of <40°C. The average temperature reading of ~52.3°C also gives no cause for concern about the sensitive optical components in the modules, even if the ambient temperature were to rise. Since the system was fully populated with thermal load modules and therefore not running traffic, the ASIC was running at lower power, which explains the lower exhaust temperature. The current monitors aren’t highly accurate, so the GUI shows the modules drawing <30W despite the settings on the modules.
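The arithmetic behind these numbers can be sketched as follows. This is a rough illustration, not the demo team's method: it assumes the ~52.3°C reading is a module case temperature, treats the approximate values quoted above as exact, and uses a simple lumped model (rise divided by power) for the effective case-to-ambient thermal resistance. All function names are hypothetical.

```python
# Approximate figures quoted in the post, treated as exact for illustration.
AVG_CASE_TEMP_C = 52.3   # assumed to be a module case temperature
AVG_RISE_C = 30.0        # average rise over ambient
MAX_RISE_TARGET_C = 40.0
MODULE_POWER_W = 30.0    # nominal setting; the GUI reported slightly less


def implied_ambient_c(case_c: float, rise_c: float) -> float:
    """Ambient temperature implied by case temperature minus rise."""
    return case_c - rise_c


def rise_margin_c(rise_c: float, max_rise_c: float) -> float:
    """Headroom left before the temperature rise hits its target limit."""
    return max_rise_c - rise_c


def thermal_resistance_c_per_w(rise_c: float, power_w: float) -> float:
    """Lumped case-to-ambient thermal resistance (a coarse approximation)."""
    return rise_c / power_w


if __name__ == "__main__":
    ambient = implied_ambient_c(AVG_CASE_TEMP_C, AVG_RISE_C)
    margin = rise_margin_c(AVG_RISE_C, MAX_RISE_TARGET_C)
    r_theta = thermal_resistance_c_per_w(AVG_RISE_C, MODULE_POWER_W)
    print(f"Implied ambient: {ambient:.1f} C")
    print(f"Margin to rise target: {margin:.1f} C")
    print(f"Effective thermal resistance: {r_theta:.2f} C/W")
```

Under these assumptions the implied ambient is a little over 22°C, the rise sits about 10°C under the target, and the effective thermal resistance is roughly 1°C per watt. Because the actual module draw was reported as slightly below 30W, the real figure would be a touch higher than this nominal estimate.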

While this is a fairly simple demo, the goal was to make it clear that building systems to cool very high-power pluggable modules is feasible and should not be a concern to anyone. Thirty watts is excessive for any expected optical module, but the improvements made in QSFP-DD800, together with the module and system design experience gained by the industry, show that cooling even a coherent optical QSFP-DD800 module is feasible, and with margin!

In conclusion, preliminary hardware testing on a real 25.6 Tb/s 1RU system shows that the QSFP family of devices remains capable of supporting the 800G generation. The enhanced performance of QSFP-DD800 means it is ready for deployment.

Acknowledgements: Many thanks to the Cisco team that developed this exciting demo: Mete Yilmaz, Anthony Ngo, John Kelly, Sharon Adam and Maung Soe.

Hi Mark,

Excellent article on the subject! I was there when an earlier version was showcased at OFC 2019, but I missed OFC 2021.

Mark, would you mind confirming the exact module case temperature monitor locations for the readings reported in this article? For example, were they in the module nose area, or at the module case hot spot near the center of the heatsink base?

Thanks,

David Chen

Molex

Cisco has an amazing technology when it comes to Optical Transport