This is the first in a planned series of posts about the impact that new protocols are having on the way many of us deal with network security today.

The protocols we have been using on the internet, mainly TCP with HTTP/1.1, have shown that they cannot meet today’s requirements for fast and efficient handling of content. As the protocols change, we also need to make sure that our network security strategy keeps up. This is mainly a concern for gateway security, which is meant to inspect incoming and outgoing traffic. How is it being impacted?

Let’s start with a protocol that is already specified in an RFC and whose adoption is growing fast: HTTP/2.

https://tools.ietf.org/html/rfc7540

The main reason for HTTP/2 is that HTTP/1.1 is old and no longer an efficient way to meet modern requirements. One example where HTTP/1.1 falls short is so-called “Head-of-Line” blocking. HTTP/1.0 opened a new connection for every request, which is highly inefficient and slow. HTTP/1.1 introduced persistent connections and a method called pipelining, which means that a client can send several requests over one connection without waiting for each response. The problem is that the server must send the responses in the same order in which the requests arrived, so one slow response holds up everything queued behind it.

Example:

Client: “Please send me a picture of a dog, a cat and a house!”

Server: “Here is a picture of a dog, a cat, and … (searching … searching … waiting …) a house!”

You get the point, right? This is called “Head-of-Line” blocking. It is similar to shopping in a supermarket when too few registers are open: you have to wait.
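The effect can be sketched with a toy model. The timings below are invented for illustration, and the slow object is deliberately placed first in the queue so that the blocking is visible; this is a simplified picture, not a measurement of any real server.

```python
# Toy model: the time (in ms) at which each response is ready on the server.
# Names and timings are made up for illustration only.
ready_at = {"dog": 500, "cat": 10, "house": 20}
request_order = ["dog", "cat", "house"]


def pipelined_delivery(ready_at, order):
    """HTTP/1.1 pipelining: responses must leave in request order."""
    delivered = {}
    clock = 0
    for name in order:
        # A response cannot be sent before it is ready, nor before the
        # response queued in front of it has been sent.
        clock = max(clock, ready_at[name])
        delivered[name] = clock
    return delivered


def multiplexed_delivery(ready_at):
    """HTTP/2-style multiplexing: each response is sent once it is ready."""
    return dict(ready_at)


print("pipelined:  ", pipelined_delivery(ready_at, request_order))
print("multiplexed:", multiplexed_delivery(ready_at))
```

In the pipelined case the cat and the house are held back until the slow dog picture has been sent, while in the multiplexed case they are delivered as soon as they are ready.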

What does HTTP/2 make better?

HTTP/2 uses the following main features:

Header compression:

HTTP headers can take up a lot of space: there are many different headers, and much of the same information is transmitted again with every request. HTTP/2 defines an algorithm (HPACK, RFC 7541) to compress those headers when they are transmitted.
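The core idea of not resending headers the peer has already seen can be sketched as follows. This is a deliberately simplified stand-in and not the real HTTP/2 header compression format: the class name `ToyHeaderTable` and the `("idx", …)` / `("lit", …)` tuples are invented for this example, and the real algorithm additionally uses a predefined static table, size limits, and Huffman coding.

```python
class ToyHeaderTable:
    """Simplified shared header table (illustrative, not the real wire format)."""

    def __init__(self):
        self.entries = []  # (name, value) pairs seen so far on this connection

    def encode(self, headers):
        encoded = []
        for pair in headers:
            if pair in self.entries:
                # Already known to both sides: send only a small index.
                encoded.append(("idx", self.entries.index(pair)))
            else:
                # First occurrence: send the literal and remember it.
                self.entries.append(pair)
                encoded.append(("lit", pair))
        return encoded

    def decode(self, encoded):
        headers = []
        for kind, payload in encoded:
            if kind == "idx":
                headers.append(self.entries[payload])
            else:
                self.entries.append(payload)
                headers.append(payload)
        return headers


sender, receiver = ToyHeaderTable(), ToyHeaderTable()
first = [(":method", "GET"), (":path", "/dog.png"), ("user-agent", "ExampleBrowser/1.0")]
second = [(":method", "GET"), (":path", "/cat.png"), ("user-agent", "ExampleBrowser/1.0")]

wire_first = sender.encode(first)
wire_second = sender.encode(second)
print(wire_second)  # repeated headers shrink to index references
```

Both sides keep their own table and update it in the same order, so the receiver can turn an index back into the full header.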

TCP Connection re-use and multiplexing:

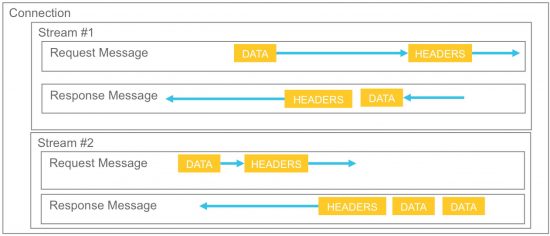

HTTP/2 organizes its traffic into streams and connections.

The data is sent in several streams. Each stream consists of individual frames of information, and all of those streams run over a single connection. The result is fewer TCP connections, but those connections live much longer.
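How frames from several streams share one connection can be sketched like this. The frame list is invented for the example, and the sketch only models the stream identifier and payload, not real frame encoding; the one protocol fact it relies on is that client-initiated streams use odd identifiers.

```python
from collections import defaultdict

# (stream_id, payload) pairs in the order they arrive on ONE connection.
# The contents are made up; note how the two streams are interleaved.
frames = [
    (1, b"<html>"),
    (3, b"image-part-1;"),
    (1, b"</html>"),
    (3, b"image-part-2"),
]


def reassemble(frames):
    """Group interleaved frame payloads back into per-stream data."""
    streams = defaultdict(bytes)
    for stream_id, payload in frames:
        streams[stream_id] += payload
    return dict(streams)


for stream_id, data in reassemble(frames).items():
    print(stream_id, data)
```

Any device that inspects this traffic has to do the same bookkeeping, because what it sees on the wire is a mix of fragments from different requests.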

Server can push content:

This one is interesting, as the server can proactively transmit content that has not been explicitly requested by the client.

Prioritization of Streams:

With HTTP/1.1, as described above, responses are transmitted in exactly the order in which the requests arrived. With HTTP/2 we can actually prioritize certain streams. So we can already start to display the content that is really needed, and if some other content is delayed, it does not stop all the other information from flowing.

Binary format:

HTTP/2 is no longer a text format that needs to be parsed, but a binary format. A computer can usually deal much better with a binary format, but we as humans need something to interpret it for us. So our network equipment needs to understand this format and display it in a human-readable way, for example when you want to debug problems. Wireshark, for example, has already updated its libraries and can understand HTTP/2 binary data.
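To give an impression of what such a parser has to do, here is a minimal sketch that decodes the fixed 9-byte frame header defined in RFC 7540, section 4.1 (24-bit length, 8-bit type, 8-bit flags, one reserved bit, 31-bit stream identifier). The function name `parse_frame_header` and the sample bytes are made up for this example, and the sketch ignores the frame payload entirely.

```python
import struct

# Frame type codes from RFC 7540, section 6.
FRAME_TYPES = {
    0x0: "DATA", 0x1: "HEADERS", 0x2: "PRIORITY", 0x3: "RST_STREAM",
    0x4: "SETTINGS", 0x5: "PUSH_PROMISE", 0x6: "PING", 0x7: "GOAWAY",
    0x8: "WINDOW_UPDATE", 0x9: "CONTINUATION",
}


def parse_frame_header(data):
    """Decode the 9-byte HTTP/2 frame header at the start of `data`."""
    if len(data) < 9:
        raise ValueError("an HTTP/2 frame header is 9 bytes long")
    # The 24-bit length is read as one byte plus one 16-bit word.
    length_hi, length_lo, frame_type, flags, stream = struct.unpack(">BHBBI", data[:9])
    return {
        "length": (length_hi << 16) | length_lo,
        "type": FRAME_TYPES.get(frame_type, "UNKNOWN"),
        "flags": flags,
        "stream_id": stream & 0x7FFFFFFF,  # drop the reserved top bit
    }


# Hand-built sample: a HEADERS frame, 13 bytes of payload, flags 0x05, stream 1.
sample = b"\x00\x00\x0d\x01\x05\x00\x00\x00\x01"
print(parse_frame_header(sample))
```

A gateway without this kind of decoding step sees only opaque bytes, even after TLS has been decrypted.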

All of those features make HTTP/2 a much more efficient protocol and a real step forward.

So, now that we have a basic understanding of HTTP/2, how does it impact network security?

The RFC allows HTTP/2 to be sent in clear text or encrypted. But the main browser vendors, such as Google with Chrome and Mozilla with Firefox, have decided that they will only support HTTP/2 over an encrypted connection. So while clear-text HTTP/2 is allowed by the specification, you will rarely encounter HTTP/2 without encryption. The move to HTTP/2 therefore increases the amount of encrypted traffic on the internet dramatically.
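For encrypted connections, the use of HTTP/2 is agreed during the TLS handshake through ALPN, where RFC 7540 assigns HTTP/2 over TLS the identifier `h2`. The following is a minimal sketch of how a client could offer it with Python’s standard `ssl` module; the helper names `make_h2_context` and `negotiated_protocol` are invented for this example, and the second function needs network access to a host of your choice.

```python
import socket
import ssl


def make_h2_context():
    """Create a TLS client context that offers HTTP/2, then HTTP/1.1, via ALPN."""
    context = ssl.create_default_context()
    context.set_alpn_protocols(["h2", "http/1.1"])
    return context


def negotiated_protocol(host, port=443, timeout=5.0):
    """Connect to `host` and return the ALPN protocol the server selected.

    Returns "h2" if the server chose HTTP/2, or None if no protocol was agreed.
    """
    context = make_h2_context()
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with context.wrap_socket(sock, server_hostname=host) as tls:
            return tls.selected_alpn_protocol()


# Example call (requires network access; the hostname is up to you):
# print(negotiated_protocol("www.example.com"))
```

For an intercepting gateway this negotiation matters in both directions, since it has to decide which protocols to offer to the server and which to accept from the client.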

A good overview of the usage of HTTP/2 can be found here:

https://w3techs.com/technologies/details/ce-http2/all/all

In practice, every website that wants browsers to use HTTP/2 also has to move to TLS for encryption, if it did not use TLS before. Popular websites such as facebook.com, google.com, and many more have already made the move to HTTP/2.

An intercepting gateway such as an NGFW (next-generation firewall) or a proxy now has to deal with much more TLS traffic than before.

The binary format also needs to be understood by intercepting gateways. Without a parser for the binary format of HTTP/2, the signatures for antivirus or application visibility will most likely not detect much.

There is another small but important detail in section 9.1.1 of the RFC: connection reuse.

https://tools.ietf.org/html/rfc7540#section-9.1.1

This means that an already established connection can be reused to request a different URI. Not every URI qualifies, though: the new hostname has to resolve to the same IP address, and the server certificate has to be valid for it, for example through a matching SAN (Subject Alternative Name) entry.
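The certificate part of that condition can be sketched as a simple name check. This is an illustration only: the function name `san_covers` and the SAN lists are hypothetical, the wildcard handling is reduced to the common case of a single left-most label, and a real client would also verify the certificate chain and the IP address condition.

```python
def san_covers(hostname, san_entries):
    """Return True if `hostname` matches one of the certificate's DNS SAN entries."""
    host = hostname.lower().rstrip(".")
    for entry in san_entries:
        pattern = entry.lower()
        if pattern == host:
            return True
        if pattern.startswith("*."):
            suffix = pattern[1:]  # e.g. ".example.com"
            if host.endswith(suffix):
                label = host[: -len(suffix)]
                # The wildcard stands for exactly one non-empty label.
                if label and "." not in label:
                    return True
    return False


# Hypothetical SAN list of a certificate presented on an existing connection.
sans = ["www.example.com", "*.example.com"]
print(san_covers("news.example.com", sans))    # covered by the wildcard
print(san_covers("a.b.example.com", sans))     # two labels, not covered
print(san_covers("www.example.org", sans))     # different domain
```

The more names a certificate carries, the more unrelated sites can end up sharing one connection.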

Example:

“news.yahoo.com” might be classified in your proxy or NGFW as “News Category”, but “sports.yahoo.com” might be classified as “Sports Category”. How does your proxy or NGFW deal with such a request?

Take a look at the certificate from “youtube.com”:

What will your filtering network device do if, over a single TCP connection, there are requests to google.com (search engine) and also to youtube.com (video portal)? How do you now enforce bandwidth limits or filtering for video?
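The difference between deciding once per connection and deciding for every request can be sketched as follows. The category table, the hostnames, and both function names are hypothetical and only stand in for whatever URL database a real proxy or NGFW uses.

```python
# Hypothetical category database of a filtering gateway.
CATEGORIES = {
    "news.example.com": "News",
    "sports.example.com": "Sports",
    "video.example.com": "Video",
}


def classify_per_connection(authorities):
    """Naive approach: classify the first request and reuse the verdict."""
    verdict = CATEGORIES.get(authorities[0], "Unknown")
    return [verdict for _ in authorities]


def classify_per_request(authorities):
    """Classify every request by its own hostname, even on a shared connection."""
    return [CATEGORIES.get(name, "Unknown") for name in authorities]


# Hostnames requested one after another over a single reused connection.
seen_on_one_connection = ["news.example.com", "sports.example.com", "video.example.com"]
print(classify_per_connection(seen_on_one_connection))
print(classify_per_request(seen_on_one_connection))
```

A policy that looks only at the first request would treat the video traffic as news, which is why category, bandwidth, and filtering decisions have to be made per request, or per stream, rather than per connection.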

So, in summary, our network security model needs to change because of the use of new and encrypted protocols.

We can no longer rely on Deep Packet Inspection in the network alone, but need to combine it with other strategies: security on the endpoint, anomaly detection in the network, and anomaly and threat detection through the analysis of log data, to name a few.

Learn more about this in my upcoming talk at CiscoLive! Berlin 2017:

BRKSEC-3006 TLS Decryption using the Web Security Appliance (WSA)

http://www.ciscolive.com/emea/learn/sessions/content-catalog/?search=BRKSEC-3006
