Detecting User Interaction Evasion Techniques

Malware sometimes checks for user interaction as a way to evade antivirus products and other security software, especially sandbox analysis environments. Threat Grid, Cisco’s advanced sandbox analysis environment, recently added a feature called User Emulation Playbooks to help ensure such malware is properly detected. This post covers both common evasion techniques and how these playbooks work.

Detecting and stopping malware is a difficult problem to solve. As the methods of detection and prevention become more advanced, so too do the techniques used by malware authors. Sandboxes are excellent tools that allow analysts to run malware in a safe environment in which malicious behavior can be observed. Unfortunately, a major drawback is that a sandbox without user interaction behaves very differently from a real system. Most automated sandbox systems run submitted samples but perform little to no user activity. Real users are likely to move the mouse, click, and type after running a program. This difference allows malware to easily distinguish between real systems and automated analysis environments. As a result, many types of malware will not execute fully in a sandbox that shows no user activity.

Malware sandbox evasion has moved from simple sleeps designed to outlast sandbox runtimes to input checks and complex dialogue boxes that require clicking specific areas on the screen. Over the past year, Threat Grid has seen an increase in the number of submitted samples that require some form of user input in order to fully execute their payloads. This blog post discusses how Threat Grid addresses some of these techniques with what we call User Emulation Playbooks.

User Interaction as a Sandbox Evasion Technique

Malware has many options for withholding its malicious payload until it sees signs of a user, such as waiting for a single click, a keystroke, or mouse movement. Even a PowerPoint file can be used in this way, through the built-in mouse-over action that executes a command when the pointer hovers over an object. As Figure 1 illustrates, this is a native option for Microsoft Office that can be easily exploited by attackers.

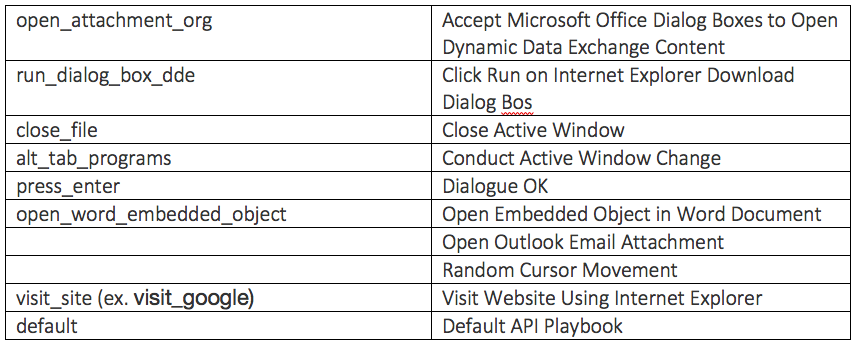

Executables can perform similar checks by calling a wide variety of library functions. A common one is the Win32 function GetLastInputInfo, which malware calls in a loop until it reports new input. GetLastInputInfo reports the tick count of the last input event received in the session of the calling application. Simply put, if the reported tick count changes after the program launches, some input has been performed. This is an obvious way for malware to check for input to a system, and thus wait out sandboxes that perform no user interaction.
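To make the waiting loop concrete, here is a minimal sketch of the check in Python. It is a simulation rather than real malware code: the `get_last_input_tick` callable is a hypothetical stand-in for whatever reports the last-input tick count (on Windows, that would be `GetLastInputInfo`), and the tick values are made up for illustration.

```python
from typing import Callable


def wait_for_input(
    get_last_input_tick: Callable[[], int],
    max_polls: int,
    sleep: Callable[[float], None] = lambda seconds: None,
    interval_s: float = 0.5,
) -> bool:
    """Return True as soon as the last-input tick changes, False after max_polls."""
    baseline = get_last_input_tick()  # tick count observed at launch
    for _ in range(max_polls):
        sleep(interval_s)  # a real check would pause between polls
        if get_last_input_tick() != baseline:
            return True  # some input arrived since launch
    return False  # no input seen: likely an idle sandbox


# Simulated tick sources (hypothetical values, not real API output).
def idle_sandbox() -> int:
    return 1000  # the tick never changes because nobody touches the machine


_ticks = iter([1000, 1000, 1000, 4200])


def active_user() -> int:
    return next(_ticks)  # the tick jumps once the user moves or types


print(wait_for_input(idle_sandbox, max_polls=5))  # False
print(wait_for_input(active_user, max_polls=5))   # True
```

A sandbox that injects even one input event during the run changes the reported tick count, which is enough to get past a check of this shape.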

Malware samples can also evade sandboxes with the GetCursorPos function, which is used in much the same way as GetLastInputInfo but checks only mouse movement.

More advanced techniques use the mouse coordinates themselves as a decryption key. This requires the cursor to be at a specific, randomly generated position on screen before execution can proceed.
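The idea of a coordinate-derived key can be sketched as follows. This is an illustrative toy under our own assumptions, not a scheme taken from any particular sample: the SHA-256 key derivation, the XOR cipher, the `OK:` marker, and the position `(640, 360)` are all hypothetical choices, and the "payload" is a harmless string.

```python
import hashlib
from itertools import cycle
from typing import Optional

MARKER = b"OK:"  # known prefix used to recognise a correct decryption


def key_from_cursor(x: int, y: int) -> bytes:
    """Derive a key from cursor coordinates (hypothetical derivation)."""
    return hashlib.sha256(f"{x},{y}".encode()).digest()


def xor_bytes(data: bytes, key: bytes) -> bytes:
    """XOR data with a repeating key; applying it twice restores the input."""
    return bytes(b ^ k for b, k in zip(data, cycle(key)))


def try_unlock(blob: bytes, x: int, y: int) -> Optional[bytes]:
    """Return the plaintext only if (x, y) yields the right key, else None."""
    plain = xor_bytes(blob, key_from_cursor(x, y))
    return plain[len(MARKER):] if plain.startswith(MARKER) else None


# The blob is encrypted for one specific position on screen.
secret_position = (640, 360)
blob = xor_bytes(MARKER + b"benign demo payload", key_from_cursor(*secret_position))

print(try_unlock(blob, 640, 360))  # b'benign demo payload'
print(try_unlock(blob, 10, 10))    # wrong position, so the marker check fails
```

Random cursor movement is unlikely to land on the one position that yields the key, which is why this check is harder to satisfy than a simple movement test.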

Other techniques require the user to click or double-click a specific target in order to run. The most common example is an object embedded in a document file. While this method does prevent execution in simplistic sandboxes, it also means execution relies on successful social engineering.

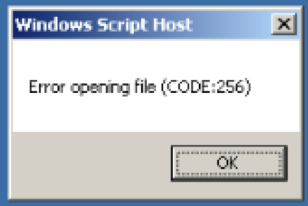

Another sandbox evasion technique that relies on social engineering is the popup dialogue box that users need to click on. The example below shows an ‘error’ dialogue box that triggers the rest of the malware payload once the OK button is clicked.

The advantage of popups and dialogue boxes for attackers is that they require accurate clicking, which is harder to emulate than simply inputting random clicks or keystrokes. The key to triggering evasive malware becomes less about whether a sandbox can simulate key or mouse input, which is easy, and more about applying basic problem solving to the input. To do so, it is necessary to incorporate an input framework into analysis environments. Threat Grid’s Input Automation is such a framework. It addresses these sandbox evasion techniques with scripts, written in the Lua programming language, that we call Playbooks.

User Emulation Playbooks in Threat Grid

Threat Grid has always allowed user interaction with samples through our Glovebox technology. By its very nature, Glovebox requires an actual user to be present to perform any input within the sandbox instance. This presents two problems. First, it requires a user to spend time interacting with the sandbox. Second, it often requires the user to have some knowledge of what inputs are needed to trigger the malicious payload. Playbooks are designed to address both problems by automating the common user behaviors that malware checks for.

Due to the varied nature of input checks, it is necessary to have several different input scripts in order to cover the different cases. Playbooks allow Threat Grid to behave as if a user is present and operating the sandbox’s keyboard and mouse. The actions outlined in the selected playbook, such as mouse movement and keystrokes, are performed during sample analysis. If a sample is submitted that requires the user actions in the playbook, these requirements are fulfilled and the sample continues its execution.

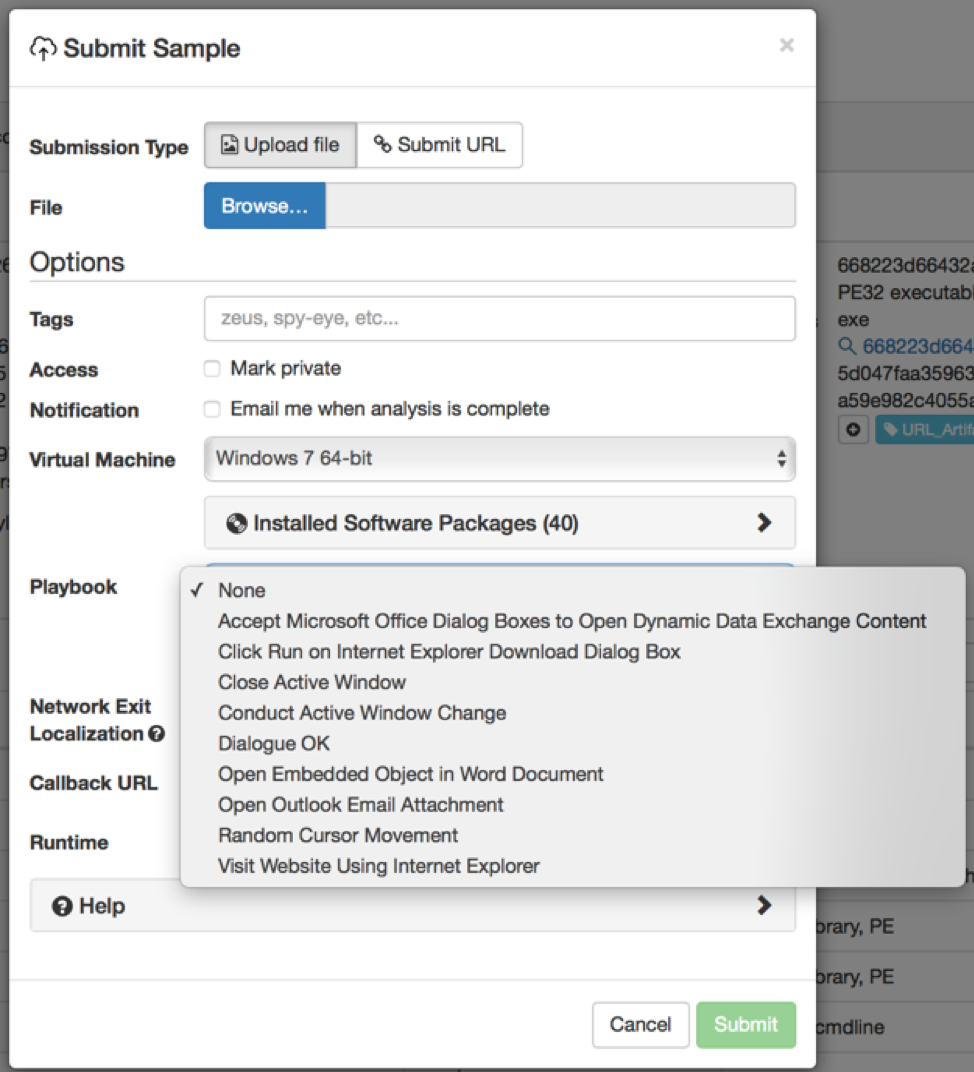

Playbooks are available to both manual and API submissions. For manual submissions the Playbooks are listed in a dropdown menu inside the sample submission window as illustrated in Figure 4.

Playbooks User Experience

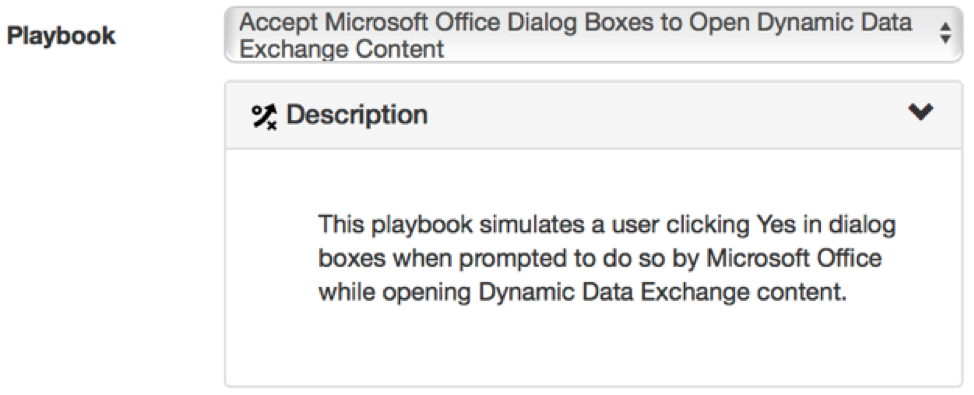

Playbooks can be selected before running a sample inside the manual sample submission window. The various playbooks can be selected via the dropdown menu; the description of the selected playbook is displayed in the description pane:

In order to emulate real user activity as much as possible, playbooks use the same input channel that users interacting via Glovebox use. This may cause odd or anomalous behavior if a user tries to interact with a sample while a playbook is running. Additionally, enabling playbooks may result in behavior being reported that is a result of the playbook itself and not necessarily that of the submitted sample. Therefore, Threat Grid has whitelisted behavioral indicators that can result from the selected playbook. This may result in a couple of behavioral indicators being omitted from an analysis. Threat Grid has taken great care to ensure that the whitelisting will not interfere with the conviction of a sample.
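As a rough sketch of the whitelisting idea, the filter below drops indicators that are attributable to the selected playbook before a sample is scored. The indicator names and the mapping are hypothetical placeholders for illustration; they are not Threat Grid's actual whitelist.

```python
from typing import Dict, FrozenSet, List

# Hypothetical mapping: playbook name -> indicators caused by the playbook itself.
PLAYBOOK_WHITELIST: Dict[str, FrozenSet[str]] = {
    "Close Active Window": frozenset({"Window Closed by Simulated Input"}),
}


def filter_indicators(observed: List[str], playbook: str) -> List[str]:
    """Keep only the indicators that the selected playbook cannot explain."""
    ignored = PLAYBOOK_WHITELIST.get(playbook, frozenset())
    return [name for name in observed if name not in ignored]


observed = ["Window Closed by Simulated Input", "Document Launched PowerShell"]
print(filter_indicators(observed, "Close Active Window"))
# ['Document Launched PowerShell']
```

Because the filter only removes indicators tied to the playbook that actually ran, behavior that comes from the sample itself still reaches the report.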

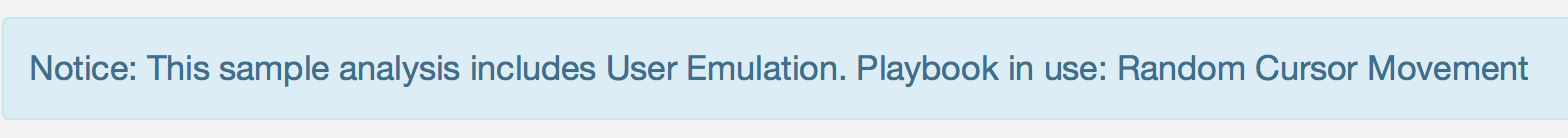

When a playbook is enabled through the user interface, the following banner is displayed on the sample, informing any user watching it that playbooks have been enabled for the submitted sample run.

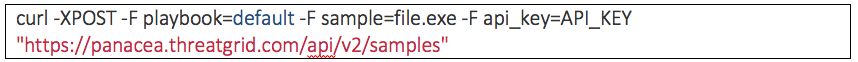

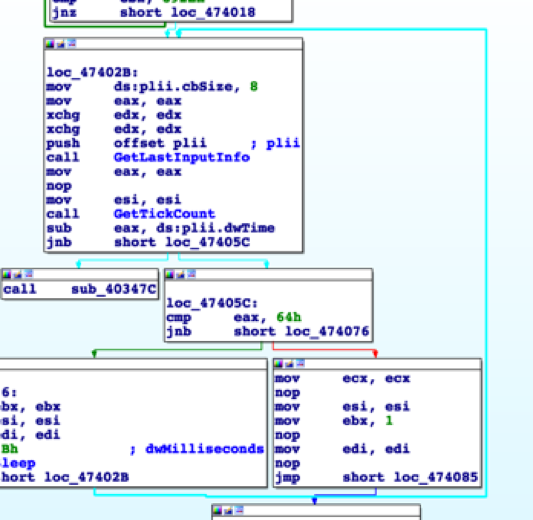

All API submissions have the Default playbook enabled. However, if a user wishes to submit a sample with a playbook other than the default, they can add the `playbook=` option to their submission URL as in the following example:
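A minimal sketch of how a client could attach the option to a URL is shown here. Only the `playbook=` option name comes from this post; the host, path, `other_option` parameter, and the `example_playbook` short name are placeholders we made up, not real Threat Grid values.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def with_playbook(submission_url: str, short_name: str) -> str:
    """Return submission_url with its playbook= option set to short_name."""
    parts = urlsplit(submission_url)
    # Keep every existing option except a previous playbook= value.
    query = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key != "playbook"
    ]
    query.append(("playbook", short_name))
    return urlunsplit(parts._replace(query=urlencode(query)))


# Placeholder URL and short name, for illustration only.
url = with_playbook(
    "https://threatgrid.example/api/samples?other_option=value",
    "example_playbook",
)
print(url)
# https://threatgrid.example/api/samples?other_option=value&playbook=example_playbook
```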

The playbook short names are:

User emulation playbooks seek to increase the efficacy of Threat Grid’s automated analysis by covering cases where user activity is required to trigger the full payload of malicious submissions. Playbooks will continue to be developed, with later releases allowing more exact behavior to be performed in an even more automated fashion.

Sample Detection with Playbooks

The following are three examples of the use of playbooks on Threat Grid.

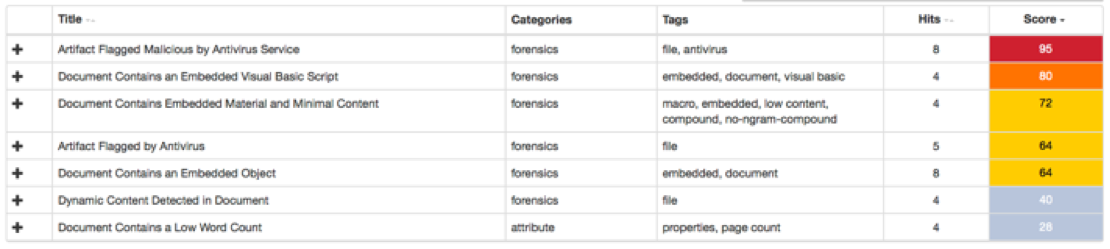

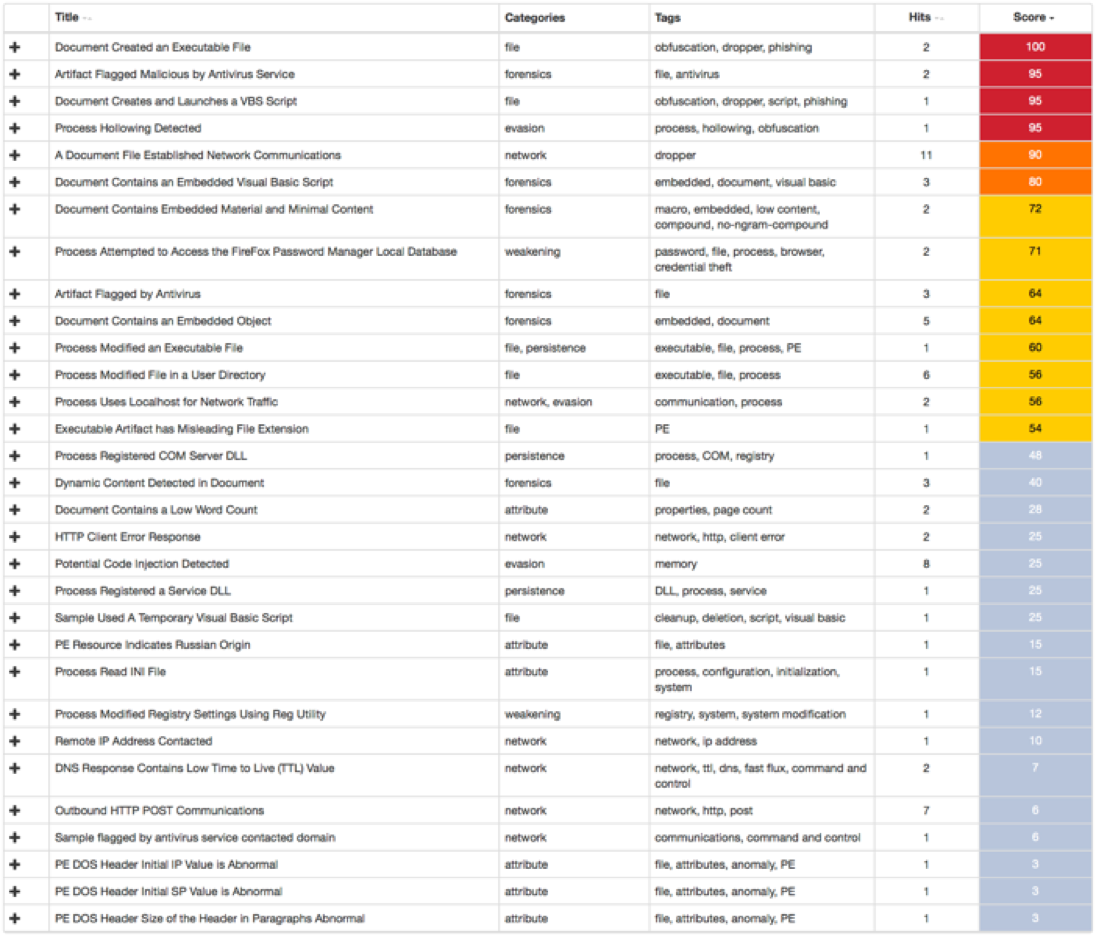

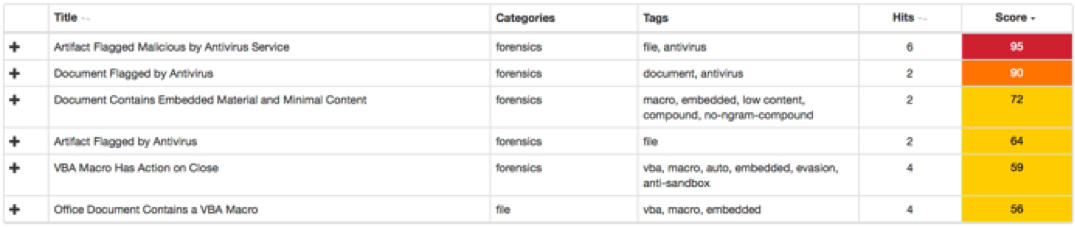

The first example is a document that includes embedded content. The malicious payload of the document executes only if the embedded icon is double-clicked. If the document is submitted and the embedded content is not double-clicked, Threat Grid detects only the following behavioral indicators:

Since the behavioral indicators `Document Contains an Embedded Object` and `Document Contains Embedded Material and Minimal Content` were detected, we can use the Open Embedded Object in Word Document playbook.

The following is a GIF of this playbook in action. It can be observed that the playbook double-clicks the icon and a command line is then triggered, presumably executing the payload.

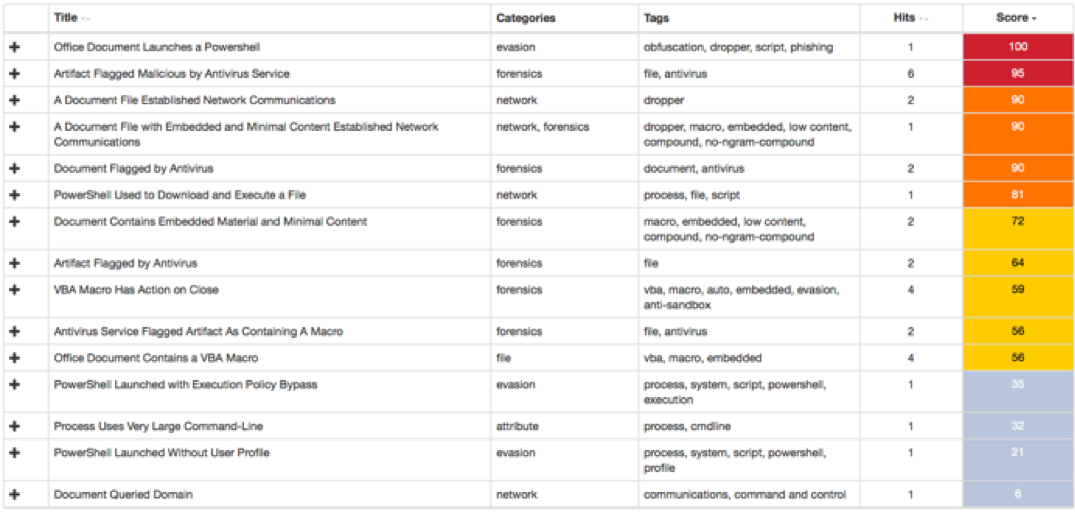

Threat Grid returns a larger set of behavioral indicators when the payload is triggered. The following image shows the set of behavioral indicators triggered after running the Open Embedded Object in Word Document playbook on the previous sample.

The Word document creates an executable file when the playbook double-clicks its embedded object. This behavior increases the sample’s score to 100, and it can only be observed with the Open Embedded Object in Word Document playbook enabled.

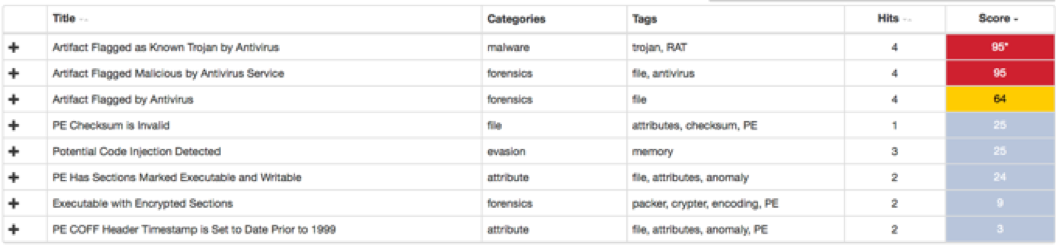

The second example, which was briefly explained with Figure 2, is a variant of the Rovnix Trojan that expects mouse movement. The malicious payload of this sample executes only when the cursor moves to a new position on the screen. The following behavioral indicators are triggered if the sample is submitted and Threat Grid does not move the cursor to a new position during analysis:

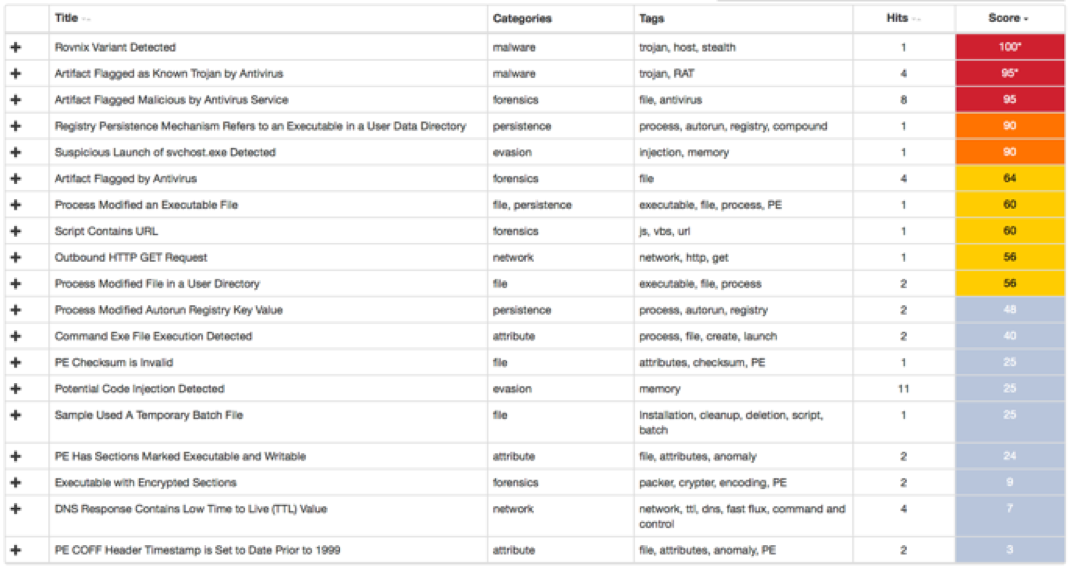

As explained before, the default playbook for every API submission randomly moves the mouse cursor on the screen and thus performs the user interaction the sample expects. The following behavioral indicators appear after the sample is submitted with the default playbook enabled, indicating that the sample triggered correctly and convicting it as malicious:

The third and final example is a Word document that triggers its payload through the autoClose() function. This function allows documents to trigger specific behavior only when the document window is closed. Malware authors use this technique to evade sandboxes, which usually do not close the file when analyzing it. Threat Grid closes this file during analysis if the Close Active Window playbook is selected. If not, the document stays open and never triggers its malicious payload through the autoClose() function. As Figure 12 illustrates, Threat Grid detects the autoClose() function in the sample, but since the playbook was not selected, the function’s behavior does not trigger. Therefore, Threat Grid detects only the following behavioral indicators when the playbook is not selected:

On the other hand, if the “Close Active Window” playbook is selected, it closes the sample’s window, which triggers the autoClose() function. The following behavioral indicators are triggered after the sample is submitted with the “Close Active Window” playbook enabled, convicting the sample as malicious:

The Word document launches PowerShell when the playbook closes its window. This behavior increases the sample’s score to 100, and it can only be observed with the Close Active Window playbook enabled.

Future Plans

As part of its core mission to investigate malware Tactics, Techniques and Procedures (TTPs) and provide users with high efficacy indicators and detections, Cisco’s Advanced Threat Research and Efficacy Team is continually working with our engineering teams to build solutions like User Emulation Playbooks. In order to enable user emulation to work more effectively there are plans to develop more context-aware and intelligent playbooks as well as their automatic selection based on the sample. Before running a submission, static analysis will be run on the sample to check for certain criteria. Document samples will benefit most from this feature since embedded material and functions such as autoClose() can be easily identified by static analysis. The playbooks specifically designed to trigger such behaviors can then be used with the sample run.
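A minimal sketch of what indicator-driven selection could look like follows. It illustrates the idea only and is not the planned implementation: the first two indicator names and the playbook names are the ones used earlier in this post, while the autoClose() indicator name and the first-match-wins rule order are our own assumptions.

```python
from typing import Iterable, List, Tuple

DEFAULT_PLAYBOOK = "Default"

# Ordered rules: the first indicator found in the static results wins.
RULES: List[Tuple[str, str]] = [
    ("Document Contains an Embedded Object",
     "Open Embedded Object in Word Document"),
    ("Document Contains Embedded Material and Minimal Content",
     "Open Embedded Object in Word Document"),
    # Hypothetical indicator name for a detected autoClose() function.
    ("Document Contains an autoClose() Macro", "Close Active Window"),
]


def select_playbook(static_indicators: Iterable[str]) -> str:
    """Pick a playbook from static-analysis indicators, else the default."""
    found = set(static_indicators)
    for indicator, playbook in RULES:
        if indicator in found:
            return playbook
    return DEFAULT_PLAYBOOK


print(select_playbook(["Document Contains an Embedded Object"]))
# Open Embedded Object in Word Document
print(select_playbook([]))
# Default
```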

In general, malicious installers and samples with dialogue boxes still pose somewhat of a problem for sandboxes. These kinds of popup windows require clicking very specific areas on screen in order to trigger more behavior from a sample. Image detection is being worked on in order to detect popups and common dialogue window choices. The playbooks will then be able to intelligently recognize images, icons, and similar elements and perform the appropriate actions. Once the image detection mechanism is in place, several new playbooks will be created to take advantage of the new capabilities.

While the Threat Grid team makes every effort to ensure that playbooks are as versatile and comprehensive as possible, the actions of a single playbook may not be enough to trigger all of the activity traps in a sample. In a future release, we’ll be making it possible to chain playbooks together in a specific order via the Sample Submission UI. This will allow users to build and customize their own playbooks to account for minor variations, such as running time or number of clicks.
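Chaining can be pictured as simple function composition. The sketch below is a toy model under our own assumptions: real playbooks are Lua scripts driving the sandbox’s input channel, whereas here each "playbook" is a hypothetical Python function that only records the action it would perform.

```python
from typing import Callable, List

Playbook = Callable[[List[str]], None]  # a playbook appends its actions to a log


def open_embedded_object(log: List[str]) -> None:
    log.append("double_click_embedded_object")


def click_ok(log: List[str]) -> None:
    log.append("click_ok")


def close_active_window(log: List[str]) -> None:
    log.append("close_active_window")


def chain(*playbooks: Playbook) -> Playbook:
    """Combine playbooks into one that runs them in the given order."""
    def run(log: List[str]) -> None:
        for playbook in playbooks:
            playbook(log)
    return run


def repeat(playbook: Playbook, times: int) -> Playbook:
    """Run the same playbook several times, e.g. to vary the number of clicks."""
    return chain(*[playbook] * times)


actions: List[str] = []
custom = chain(open_embedded_object, repeat(click_ok, 2), close_active_window)
custom(actions)
print(actions)
# ['double_click_embedded_object', 'click_ok', 'click_ok', 'close_active_window']
```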

Many malware authors are aware that security software uses sandboxes. They use multiple techniques, like the ones discussed in this blog post, to detect when a sample is being analyzed by a sandbox and stop its execution. The biggest value playbooks bring to users is bypassing common evasion techniques by making the analysis environment look less like a sandbox and more like a “real” system with a human user. This prevents malware samples from stopping their execution and enables the sandbox to analyze and detect them as malware.

Cisco continues to rapidly iterate on new features and methods and is dedicated to building you the best threat investigation products available. To learn more about Cisco Threat Grid, check out our demo video at: cisco.com/go/threatgrid