In the security industry, practitioners must be always aware of one fact: adversaries are constantly working on finding new ways to avoid detection. Today, we’re going to focus on one of the common avoidance techniques — obfuscation. Command-line obfuscation is the process of intentionally altering command-lines to prevent automated detection without changing the original functionality of the command.

Types of Obfuscation

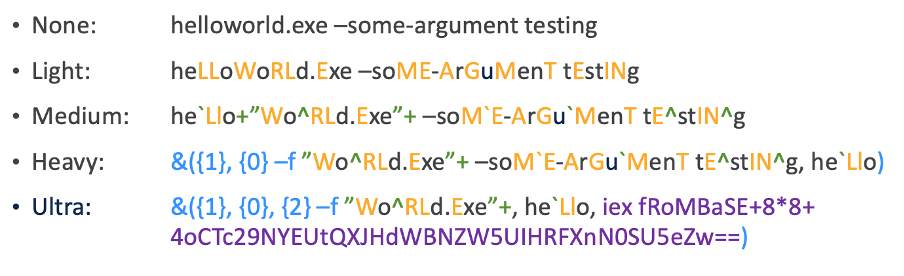

There are multiple publicly available tools, such as Invoke-Obfuscation, that provide a glimpse into the techniques used by adversaries. Invoke-Obfuscation is a PowerShell tool originally created to demonstrate available obfuscation techniques but also aimed to help defenders simulate obfuscated payloads. This tool can then be used to simulate attack scenarios and analyze the defenders’ detection capabilities. We have experimented thoroughly with this tool and identified a few basic levels of obfuscation.

Let’s have a closer look at these examples. Each color represents a different technique. In the simplest form, Light obfuscation changes the case of the letters on the command-line. On the Windows command-line, the case of the letters does not matter, and they can be changed randomly without affecting functionality.

The Medium level takes obfuscation a step further by introducing characters that are either ignored or do not affect the command execution, such as caret “^” or tick “`” symbols. Heavy obfuscation adds another technique: changing the order of the arguments on the command-line. During the execution, the arguments are reordered according to the numbers in the curly braces, thus not changing the functionality.

Lastly, the Ultra level of obfuscation uses Base64 encoded commands to hide the content of the command-line. To avoid signature-based detection, the “Base64” keyword is replaced by “base8*8”. During the command execution phase, the iex (invoke-expression) interprets base8*8 and converts it to Base64 before executing the encoded command.

We looked at a few theoretical examples of obfuscation, but how does this technique look in the wild? The example on which we’ll demonstrate the different obfuscation techniques is especially stealthy as it utilizes the Windows operating system’s native binary msiexec. msiexec is used to install or update legitimate programs, but as we will shortly see, it can also be used to install malware from evil[.]com:

![]()

As malware is being discovered we find new IoCs based on which we can detect and identify the malware. Based on these IoC we can then write signatures to prevent new infections. Therefore, adversaries use the light obfuscation to avoid signature-based detection:

By utilizing ticks and caret symbols, the adversaries make it even harder to detect:

So far, we have looked at different obfuscation techniques and their real-world application. The question now is: how do we detect them? Multiple approaches come to mind when we think of detection. A straightforward approach relies on counting characters that are commonly used in obfuscated commands, such as the caret “^” or tick “`” symbols.

However, this would lead to many false positives since these characters have legitimate uses, for example, in regular expressions. Implementing this method would overwhelm the analysts with too many unactionable events. Another method would be using signatures. Signatures provide an accurate way of detecting these techniques and do not generate many false positives; however, they target techniques used by the specific malware, thus being unable to detect other or modifications of the original obfuscation techniques. Therefore, we need smarter means of detecting them.

Using Large Language Models (LLMs) to detect obfuscation

To create reliable detections of now well-known and future obfuscation techniques, we have trained a large language model (LLM). LLMs can generalize, in our case, known obfuscation techniques into novel ones without generating many false positives. The model consists of two distinct parts: the tokenizer and the language model.

The goal of the tokenizer is to split the input into smaller units called tokens. The tokens are essentially a representation of common combinations of characters that appear in a language.

Standard tokenizers used in NLP are trained on a natural language such as English. Since the command-line has a different structure than natural language, it is beneficial to train a custom tokenizer for our use case.

To illustrate what a tokenizer does, let us have a look at an example of a command-line and its respective tokenized version:

The Input line contains the command-line in its raw form, and the Tokenized input contains the representation of the command-line provided by the tokenizer. Some of the command-line sequences may be split into multiple tokens, but we still need to mark a token as a continuation of the previous one, which is denoted by “##” before the token.

To make it a bit clearer, an equivalent of this in the English language would be splitting a word into multiple syllables and marking syllables that are a continuation of the word with a prepended ##. For the word “tokenizer” it would be the following representation: to, ##ke, ##ni, ##zer.

The second part of the detector is the Large Language Model (LLM). This is the part responsible for the detections. From a plethora of available language models, we chose Electra, as the pre-training of this model closely resembles the task of obfuscation detection.

During the pre-training phase, some of the input tokens are replaced by other likely tokens from the vocabulary, and the goal of the model is to identify replaced tokens. The goal of the pre-training step is to learn the structure of the command-line; therefore, we used tens of millions of command-lines to train the model.

In the next and final step, the so-called fine-tuning phase, the model learns to differentiate between obfuscated and un-obfuscated command-lines. The model processes different samples that include combinations of obfuscation to learn the underlying techniques.

The model is trained to assign a probability of a command being obfuscated to each of the analyzed commands. If the probability is higher than a certain threshold, we consider this command obfuscated and worth investigating. The resulting model achieves impressive results, having high precision and recall on the yet unseen data.

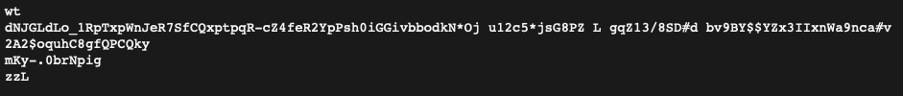

During the analysis of detections based on the LLM obfuscation detector, we found a few new tricks used by well-known malware families such as Raspberry Robin or Gamarue. Raspberry Robin leveraged a heavily obfuscated command-line using wt.exe, which can only be found on the Windows 11 operating system. In another example, Gamarue leveraged a new method of encoding using unprintable characters.

Raspberry Robin:

Gamarue:

The Electra model detected expected forms of obfuscation and new techniques. In combination with the existing security events from other detection engines, the model increases fidelity of the detections presented to the threat analysts.

Conclusion

Obfuscation techniques on the command-line are a strong indication of malicious activity. For example, Gamarue and Raspberry Robin malware campaigns commonly use this technique to avoid detection by traditional EDR products. While obfuscation may have a benign use case, it is often used by threat actors to hide their malicious intent.

Therefore, it’s essential to detect new obfuscation techniques using smart methods as quickly as possible and act on them. We have created a specialized tokenizer for command-line data and an accompanying Large Language Model that detects these techniques and significantly simplifies automated detection without the need to exhaustively enumerate novel techniques.

We’d love to hear what you think. Ask a Question, Comment Below, and Stay Connected with Cisco Security on social!

Cisco Security Social Channels

Instagram

Facebook

Twitter

LinkedIn

CONNECT WITH US