My team’s recent fun event was on a cruise liner on the calm, blue waters of the Pacific Ocean hugging San Francisco. Besides soaking up the panoramic vistas and general bonhomie, I particularly enjoyed my conversation with the skipper. Our worlds are far apart, and yet I found the conversation entirely relatable. In particular, I found many parallels between his talk of navigating ships and the Agile transformation we are currently navigating at Cisco engineering.

Our engineering development process at Cisco is ever evolving. We are proud to be keeping up with the times and overhauling some of our archaic processes, all while keeping the engines humming and producing software that runs several billion devices at thousands of customer premises.

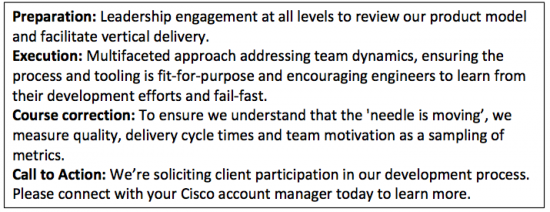

Several imperatives drove us to launch an agile transformation at Cisco engineering. The primary one was building deeper customer context, and therefore smarter products, through co-development with customers. We also wanted to improve the overall experience of engineers by forming self-governing teams, which allows for faster decision-making. Improving our time-to-market and quality were also key factors. And finally, a quick dipstick competitive analysis, especially here in Silicon Valley, showed us that our smaller competitors were adopting agile and reaping the benefits. We’re soliciting client participation in our development process through agile/co-dev. Please connect with your Cisco CE today to learn more.

Turning a big ship around, apparently, takes time and effort, but exactly how much depends on the nature of the carrier. The skipper eloquently spoke of how a cruise ship, designed for luxury travelers, will take longer since it focuses on passenger comfort and doesn’t have the necessary infrastructure onboard to handle a quick volte-face. A battleship, by contrast, is built for exactly this sort of maneuvering. Likewise, turning an engineering organization that is on a warpath of waterfall development toward an agile model requires nimbleness and skill, all without disrupting our customers’ lives.

The benefits of our agile transformation have been immense, as demonstrated by software stacks like IOS XE and IOS XR, which were built entirely using a customized agile development methodology. Customers get an early window to influence our product development and partner more closely with the engineers writing the code. This in turn results in reduced deploy times and a drop in testing and certification overhead. The benefits extend beyond our customers to Cisco and its engineering teams. Not only does this move to agile development keep us competitive, we also have better control over the development process and are building better quality products.

I am proud that this next wave of our agile journey will be one of the largest agile transformations (if not THE largest) in the world of embedded software development. What makes me prouder is that we acted like a battleship even while caring for the comfort of our customers like a cruise liner.

Do tell me about your agile development journeys in the comments section below and let’s swap notes on best practices.

Good analogy between navigation and agile transformation!

Our team has been in agile mode for a while now. It has had its own challenges and advantages. I would like to see tangible data showing that agile is indeed doing great things compared to the waterfall model. Maybe in the next blog it would be useful to share some data, if possible. Thanks.

Good observation. As with any process, there are advantages and challenges. Focusing on continuous improvement and keeping the process lean is the key. One big advantage of agile is that the process drives early and frequent interaction (and iteration) with customers, which naturally leads to better quality. The process enables better customer context all through development.

Venky – An eye-opening post. The size of the beast might become more apparent when we estimate the average revenue generated per “Sprint” in CSG in the long run in this journey.

One key point you mention is not to miss the end game: focusing on the quality of the product thrown into the lap of customers. It does not matter how we produced the product; that mandate never goes away! Lots of points to ponder and execute.

Good point about being able to estimate revenue per sprint. The starting point is to align the sprints in the organization to a common cadence, which is one of the key goals of the current wave of the agile rollout.

Good metaphor!

In the embedded software world, we will need a customized & hybrid Agile given that:

1) Normally the SW has dependencies on HW, and it is hard for HW to be agile due to cost and turnaround time. Therefore, a SW Agile + HW waterfall model is common.

2) Our SP and Enterprise customers have higher quality requirements, and they don’t like to upgrade and test with every iteration release. That’s why we still need release-level quality assurance.

The key to being agile in the embedded world is to remove the dependency on hardware from the driver. This is the true Supertanker in the ocean of code development. I’ve been involved with several bring-ups where the test cycle to validate a simple change can take 20 minutes or more. Given the rapid pace of change during the bring-up period, this can result in hours spent compiling, loading, and testing, often on trivial errors.

Specifically with our own ASICs, we can do a better job of combining the tasks of ASIC validation with the development of a driver that can be integrated with the intended operating systems. With test-driven development (TDD) and hardware mocks, it becomes much easier to test the behavior of driver code on a device without actually needing access to the physical device. The developer can rapidly test changes to the driver on their development system, with feedback on the order of seconds rather than minutes.
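To make the idea of hardware mocks concrete, here is a minimal sketch in Python using the standard library's `unittest.mock`. Everything in it is hypothetical and for illustration only: the register addresses, the `Driver` class, the `DriverError` exception, and the `make_mock_bus` helper are assumptions, not part of any real ASIC or Cisco driver. The point is only that the driver reaches hardware through a small bus interface, so a mock can stand in for the device during tests.

```python
from unittest.mock import Mock

# Hypothetical register map for an imaginary device (an assumption for
# illustration; not taken from any real ASIC).
REG_CTRL = 0x00
REG_STATUS = 0x04
CTRL_RESET = 0x1
STATUS_READY = 0x1


class DriverError(Exception):
    """Raised when the hypothetical device does not behave as expected."""


class Driver:
    """Toy driver that touches hardware only through a register bus object."""

    def __init__(self, bus, max_polls=3):
        self.bus = bus  # any object with read(addr) and write(addr, value)
        self.max_polls = max_polls

    def reset(self):
        # Request a reset, then poll the status register until it reports ready.
        self.bus.write(REG_CTRL, CTRL_RESET)
        for _ in range(self.max_polls):
            if self.bus.read(REG_STATUS) & STATUS_READY:
                return
        raise DriverError("device did not become ready after reset")


def make_mock_bus(status_values):
    """Build a mock bus whose successive reads return the given values."""
    bus = Mock(spec=["read", "write"])
    bus.read.side_effect = list(status_values)
    return bus


def test_reset_succeeds_when_device_becomes_ready():
    # The first poll reports not ready, the second reports ready.
    bus = make_mock_bus([0x0, STATUS_READY])
    Driver(bus).reset()
    bus.write.assert_called_once_with(REG_CTRL, CTRL_RESET)
    assert bus.read.call_count == 2


def test_reset_fails_when_device_never_ready():
    # A failure case that would be awkward to provoke on real hardware.
    bus = make_mock_bus([0x0, 0x0, 0x0])
    try:
        Driver(bus).reset()
    except DriverError:
        return
    raise AssertionError("expected DriverError")


if __name__ == "__main__":
    test_reset_succeeds_when_device_becomes_ready()
    test_reset_fails_when_device_never_ready()
    print("all driver tests passed")
```

Because the mock scripts the register values, both the success path and the failure path run on a development machine with no device attached. A real driver would of course need a bus implementation for the actual hardware behind the same interface.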

Some points to consider when talking about Agile development:

– What is the cycle time between making a software change, and confirming existing behavior is not affected? Are there external dependencies?

– How many resources (i.e., switches/routers/servers) are required to validate a given feature?

– How are failure cases validated? Can this be performed without damaging a system?

– Are component drivers or critical infrastructure tightly interleaved with a particular implementation? If this module were to be ported to another system, how much work would be involved in integrating it?

– How easy is it to roll back changes in the case of failure? Are there any automation routines in place (i.e., continuous integration) to ensure check-ins do not break previously passing test cases? Or is validation dependent on hands-on testing? Are hooks in place to run specific test cases before changes can be checked into the software repository?