Like the internet, artificial intelligence (AI) becomes more powerful as the number of people using the tools grows. In this context, the utility of AI depends on the users themselves. How? By providing their “voice samples” back to the developers through applications like Siri, Alexa, Cortana and Google Assistant, which helps build better algorithms. But these solutions need a solid foundation: one that supports both the collection of data and the processing of the algorithms, and an infrastructure that can adapt and scale, creating a robust solution for optimal utility.

In my previous blog (AI/ML: Shiny Object or Panacea?), I referred to Andrej Karpathy’s article titled Deep Reinforcement Learning: Pong from Pixels. In it, he identifies four key “ingredients” for successful AI:

- Data

- Compute

- Algorithm

- Infrastructure

But I want to dig a little deeper into these four ingredients. It’s important that leaders responsible for AI initiatives are aware of the implications, and of the reasons why artificial intelligence will continue to become more “mainstream”.

Data and AI

People are dramatically increasing their use and sharing of data in everyday activities, from using search engines (by typing or by voice query) to getting directions or even simply driving their cars. This generates huge volumes of data every second, which are collected and analyzed, generally to improve our way of life.

The companies that provide solutions, either for better driving, better health, better stocking of groceries, or better ways to get things done in general, use this data as their first and most important ingredient in creating their products or services.

AI and Compute

We’re not just talking about huge mainframes with infinite compute, or even a single kind of computing platform that offers whatever CPU or GPU you request. Compute depends on factors that have expanded with the way we live and work today. For example, today’s mobile devices are “Edge Compute” nodes with inferencing capabilities, and they have powerful capabilities built around mobile applications. Those capabilities may reside in the cloud or on the device, depending on connectivity.
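To make the cloud-or-device idea concrete, here is a minimal Python sketch of how an application might pick where to run inference based on connectivity. The names `DeviceState` and `choose_inference_target`, and the 200 ms latency threshold, are assumptions made for illustration only; they do not come from any Cisco or NVIDIA product.

```python
from dataclasses import dataclass


@dataclass
class DeviceState:
    """Hypothetical snapshot of a mobile device's connectivity."""
    online: bool        # whether the device can currently reach the cloud
    latency_ms: float   # measured round-trip time to the cloud endpoint


def choose_inference_target(state: DeviceState, max_latency_ms: float = 200.0) -> str:
    """Return "cloud" when connectivity is good enough, otherwise "device".

    The 200 ms default is an arbitrary illustrative threshold.
    """
    if state.online and state.latency_ms <= max_latency_ms:
        return "cloud"
    return "device"


# A well-connected phone defers to the cloud; an offline one runs locally.
print(choose_inference_target(DeviceState(online=True, latency_ms=50.0)))
print(choose_inference_target(DeviceState(online=False, latency_ms=50.0)))
```

A real application would weigh more than latency (battery, model size, privacy), but the decision has the same shape: the edge device is always a fallback compute node.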

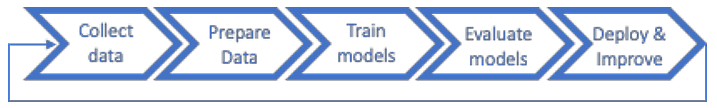

A typical artificial intelligence process offers a logical way to think about the compute. The process illustrated in the figure above comes from a solution built specifically to support ingesting and normalizing data (extraction, transformation and loading), as well as pre-conditioning the data. This is the core of the AI process.
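For readers who want to see what ingesting, normalizing and pre-conditioning can look like in practice, here is a small Python sketch using only the standard library. The sample data, the column names and the choice of standardization (zero mean, unit variance) as the pre-conditioning step are all assumptions for illustration, not a description of the solution in the figure.

```python
import csv
import io
from statistics import mean, pstdev

# Hypothetical raw input; note that sensor "b" has a missing reading.
RAW_CSV = """sensor_id,reading
a,10.0
b,
c,14.0
d,12.0
"""


def extract(text):
    """Extraction: parse the raw CSV text into a list of dict rows."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transformation: drop rows with missing readings, convert to float."""
    return [
        {"sensor_id": row["sensor_id"], "reading": float(row["reading"])}
        for row in rows
        if row["reading"]
    ]


def precondition(rows):
    """Pre-conditioning: standardize readings to zero mean, unit variance."""
    values = [row["reading"] for row in rows]
    mu = mean(values)
    sigma = pstdev(values) or 1.0  # avoid dividing by zero on constant data
    return [{**row, "reading": (row["reading"] - mu) / sigma} for row in rows]


def load(rows, store):
    """Loading: append the prepared rows to an in-memory store."""
    store.extend(rows)
    return store


store = load(precondition(transform(extract(RAW_CSV))), [])
print(len(store), "rows ready for training")
```

Each stage is a separate function so that it can run on whatever compute suits it, which is the point the process makes about matching compute to each step.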

Within each cycle, the forms and sizes of compute vary. This could mean running deployed algorithms on edge devices like mobile phones, laptops or the NVIDIA Jetson. Or it could mean deep learning workloads that need a purpose-built design combining the proper balance of CPU and GPU while incorporating NVLink technology (as well as appropriate cooling for the most rapid training of the algorithms).

What about Algorithms?

As the various applications and access to technology proliferate, sites such as GitHub and Cisco’s DevNet enable sharing of open algorithms. This lets users improve on publicly available code and save time tailoring it to their specific business or mission needs. Again, the expansion of availability, access and even the understanding of how to leverage these resources is making the AI community stronger each day.

The Gartner Hype Cycle for Artificial Intelligence highlights various AI solutions and their relative maturity. The more readily available the data and algorithms are to developers, the more rapidly the solutions in greatest demand (or those with the largest benefit) will accelerate. It’s interesting to note that deep learning, machine learning, natural language processing and virtual assistants have passed the “Peak of Inflated Expectations” and are heading toward the “Trough of Disillusionment”.

To help accelerate success for the public, Cisco recently opened up the code and tools to its conversational AI platform, MindMeld. And although autonomous vehicles are also in the Trough, with an expectation of more than 10 years until the Plateau, there is plenty of innovation to help advance the myriad subsystems that will support them.

Infrastructure

In “Transforming Business with Artificial Intelligence”, Cisco discusses the need to make your infrastructure intelligent, and emphasizes the need to understand the context of combining the four key ingredients in the right manner. It’s about optimizing business processes to support the needs of the user and the work. This takes into account the availability of the above ingredients. It also handles, on the user’s behalf, whether they are working in a cloud, local or hybrid environment.

The infrastructure also helps support unique application needs and move workloads seamlessly from the edge to the enterprise, and across multiple virtual and physical environments. Cisco DevNet even offers a free sandbox for users to access the latest C480ML deep learning server with NVIDIA NGC, the GPU-optimized software for deep learning, machine learning, and HPC. NGC takes care of all the plumbing so data scientists, developers, and researchers can focus on building solutions, gathering insights, and delivering business value.

Enterprise AI

As AI continues to develop as a profession, the experts supporting innovations will not just be data scientists and engineers; they will also be experts in understanding what end users need to accomplish their jobs more efficiently. With an infrastructure that is proven, scalable and adaptable enough to support myriad partners in the solution space, businesses and organizations increase the benefit of an end-to-end Enterprise AI approach that appropriately incorporates the four key ingredients for successful AI.

This could also reduce the risk of undertaking new efforts by engaging a partner that incorporates end-to-end offerings across physical and virtual infrastructures, and that does so while incorporating AI internally to the network to maximize simplicity, improve security and enable scalability from the Edge to the Enterprise.

Join the conversation

Cisco is proud to be a Platinum Sponsor of this year’s NVIDIA GPU Technology Conference, the premier event on artificial intelligence. Be sure to visit our booth (#104) and we’ll bring you up to speed on the latest Cisco solutions and our expanding AI ecosystem of partners. Plus, be sure to join us for two exciting Cisco-led sessions.

When: November 5-6

Where: Washington DC

Government attends for FREE

Social Media: #GTC19

Register today and save 20% with our discount code XD9CISCO20