Hopefully by now you’ve been embracing industry trends around network programmability. Many network engineers are learning programming using popular languages like Python. Indeed, it is a notable part of the blueprint for the DevNet Associate (DEVASC) certification. You may be like me; you learn by doing. We start with pretty simple script development to solve some current need: pushing config changes to many devices, upgrading software images across a set of branch offices, or collecting operational data on a regular basis and displaying it. Many opportunities exist.

DevNet Snack Minute Episode 25

At some point, however, you have a need or feel an urge to SCALE. IT. UP. The comfortable, tried-and-true script that did its work faithfully device-by-device no longer meets the need.

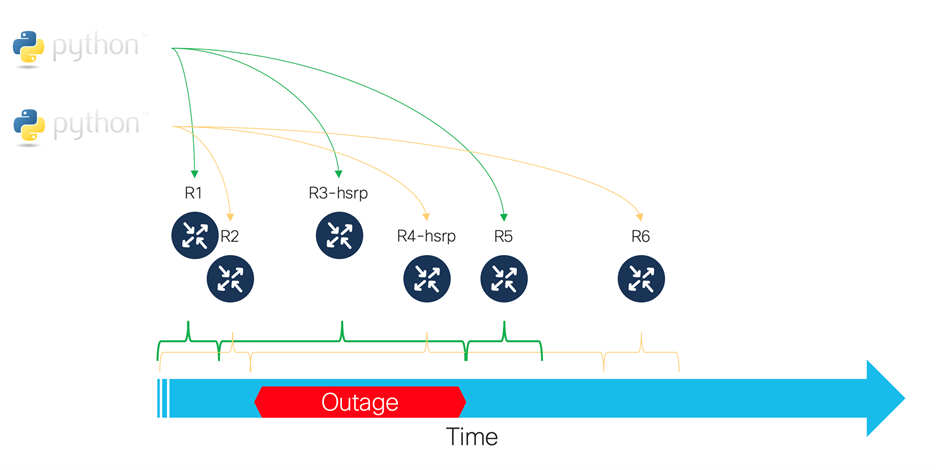

For a while you might get away with running multiple instances of your script in parallel and crossing your fingers that there are no conflicts. But running multiple copies of the same script is fraught with risk unless the workloads are perfectly segmented. You don’t want the same operation to run multiple times on the same device, especially not at the same time! Nor do you want functionally paired devices, perhaps teamed through HSRP, multilink aggregation, or port channels, to be brought down simultaneously.

We develop redundancy for a reason!

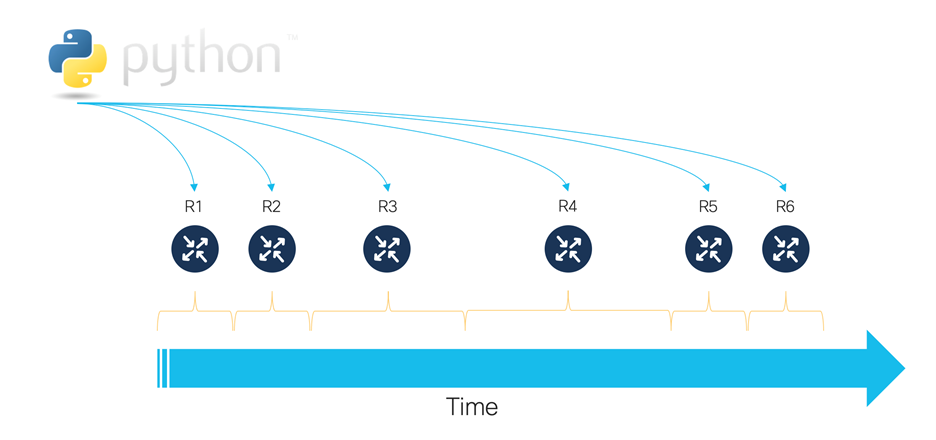

So how do we scale up our workloads? Can we take better advantage of multiple cores in our CPUs, or do some other work while we’re waiting for some I/O-intensive activity to complete? There is a whole advanced area of Python concerned with parallelism, concurrency, and multiprocessing. We can start at a more basic but still effective level with asynchronous I/O, and leave threading for times when we actually need that level of sophistication.

The Python asyncio module runs your tasks on a single thread, using an event loop that schedules the work. It does not spread work across CPU cores; its benefit comes from putting waiting time to use. As you write your Python script, you identify common I/O points, such as network API calls, slow file or disk access, or database queries (PostgreSQL, for example), as opportunities to ‘await’ their completion and hand control to other tasks in the meantime. Each task’s state is preserved while it waits, and once the event loop sees that the awaited operation has finished, the task resumes with the next step in its logic.
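To make the ‘await’ idea concrete, here is a minimal sketch rather than a production pattern. The device names and the `fetch_status` helper are hypothetical, and `asyncio.sleep` stands in for a real network API call so the example runs anywhere.

```python
import asyncio
import time


async def fetch_status(device: str, delay: float) -> str:
    """Pretend to call a slow API; asyncio.sleep stands in for the network wait."""
    await asyncio.sleep(delay)  # control goes back to the event loop while we wait
    return f"{device}: ok"


async def main() -> list:
    # Hypothetical device names and response delays, for illustration only.
    devices = {"branch-rtr-1": 0.2, "branch-rtr-2": 0.2, "branch-rtr-3": 0.2}
    # gather() runs the coroutines concurrently and returns results in input order.
    return await asyncio.gather(
        *(fetch_status(name, delay) for name, delay in devices.items())
    )


if __name__ == "__main__":
    start = time.perf_counter()
    results = asyncio.run(main())
    elapsed = time.perf_counter() - start
    print(results)
    # The waits overlap, so the total is near the longest delay, not the sum.
    print(f"elapsed: {elapsed:.2f}s")
```

Because the three simulated waits overlap on a single thread, the run should take roughly as long as the slowest call instead of all three added together.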

This model works great for situations where you have well-defined logic that follows a sequence and you have to do it for many unrelated items.
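As a rough illustration of that shape of problem, the sketch below runs the same ordered steps for several devices at once. Everything here is assumed for the example: the step names, the device names, and the `upgrade_device` helper are hypothetical, and each step only sleeps briefly in place of real I/O. A semaphore caps how many devices are in flight at a time.

```python
import asyncio


async def upgrade_device(device: str, limit: asyncio.Semaphore, log: list) -> str:
    """Run the same ordered steps for one device (every step is simulated)."""
    async with limit:  # cap how many devices are worked on at once
        for step in ("backup", "copy_image", "reload"):  # hypothetical step names
            await asyncio.sleep(0.01)  # stand-in for a real I/O wait
            log.append((device, step))
    return device


async def main(devices: list, max_concurrent: int = 2) -> list:
    limit = asyncio.Semaphore(max_concurrent)
    log = []
    # Steps stay in order within a device; devices interleave with each other.
    await asyncio.gather(*(upgrade_device(d, limit, log) for d in devices))
    return log


if __name__ == "__main__":
    for device, step in asyncio.run(main(["sw1", "sw2", "sw3", "sw4"])):
        print(device, step)
```

The sequence within each device is preserved because each coroutine awaits its own steps one after another, while the event loop is free to switch between devices at every wait.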

Want to learn more?

For some more context and examples, check out DevNet Snack Minute Episode 24 where we introduce the concept. In the videos you’ll hear more about asyncio and see examples of being able to do more work, more quickly while waiting for remote API servers to process your requests.

DevNet Snack Minute Episode 24

Related resources

We hope you might dig into it further when your need arises. Here are other resources that may help you!

- Asynchronous I/O (docs.python.org)

- Async IO in Python: A Complete Walkthrough (realpython.com)

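- asyncio 3.4.3 (pypi.org), a legacy backport for older interpreters; asyncio has been part of the Python standard library since version 3.4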

- Resources for developing with Python and Cisco Networking

We’d love to hear what you think. Ask a question or leave a comment below.
