Welcome back to our DevOps series! It's time to continue exploring what the future of software development looks like and, most importantly, how you can get started today.

If you have been following this DevOps series, by now you might be wondering how developers deal with so much complexity around containers, microservices, schedulers, service meshes and so on, on top of their core knowledge of programming languages and software architecture. It sounds like way too much, huh? That's exactly how they feel, and it's the main reason everyone is looking for ways to let them focus on just their code.

Decoupling software code from underlying infra

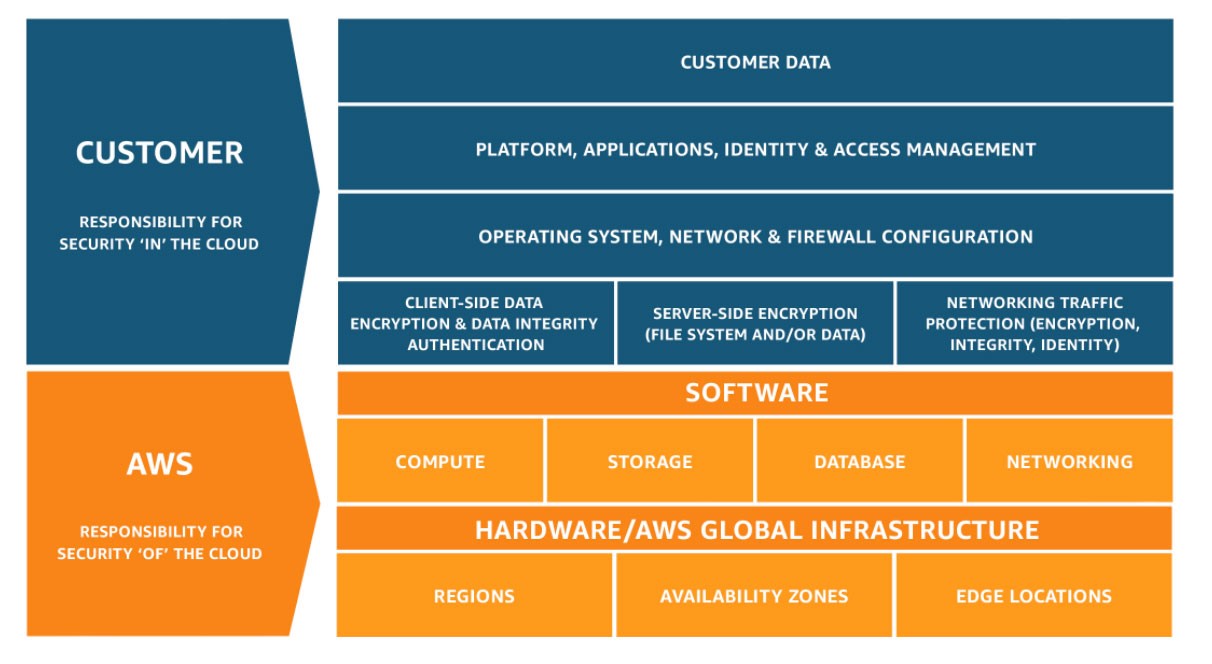

A very interesting approach to this challenge is serverless (or Function-as-a-Service, aka FaaS), which is based on the idea of having someone else manage the required infrastructure for you. By decoupling your code from the underlying infrastructure, you can focus on the software you are developing rather than on how your containers or load balancers need to scale, or whether your k8s cluster needs the latest security patches. You only need to worry about the upper layers of the shared responsibility model.

Figure 1: AWS shared responsibility model
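To make the idea concrete, here is a minimal sketch in Python of what "just your code" can look like in a FaaS model. The function name `handle` and its contract (request body in as a string, response body out as a string) are assumptions for illustration; each platform defines its own signature and packaging, so treat this as a sketch rather than the API of any specific engine. Note what is missing: nothing here deals with containers, scaling, load balancing or patching.

```python
import json


def handle(req: str) -> str:
    """Hypothetical FaaS handler: takes the request body, returns the response body.

    Only business logic lives here; scaling, load balancing and patching
    are assumed to be the platform's responsibility.
    """
    payload = json.loads(req) if req else {}
    name = payload.get("name", "world")
    return json.dumps({"message": f"Hello, {name}!"})


if __name__ == "__main__":
    # Local call standing in for what the platform would do on each request.
    print(handle('{"name": "DevNet"}'))
```

Running the file locally simulates a single invocation; on a real platform, the engine would call the handler once per incoming request and decide on its own how many copies to run.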

Offerings in the serverless arena

Most cloud providers have an offering in the serverless arena (e.g. AWS Lambda, Google Cloud Functions, Microsoft Azure Functions) where you just submit your code in one of the supported languages and they take care of the rest. They handle all the required microservices, have the orchestrator auto-scale them as needed, and manage load balancers, security, availability, caching, etc. And you only pay for the executions of your code: if nobody uses your software, you don't pay a dime. Sounds really cool, huh? And it is. But everything comes at a price, and you need to consider something called lock-in.
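The pay-per-use model is easier to appreciate with some arithmetic. The sketch below compares a function billed per invocation with a server billed for every hour it runs, whether or not anyone uses it. Both prices are hypothetical placeholders, not real rates from any provider, and the model is deliberately simplified to match the description above: real serverless billing typically also depends on execution time and allocated memory.

```python
# Hypothetical prices, for illustration only (not real provider rates).
PRICE_PER_MILLION_INVOCATIONS = 0.20  # pay-per-use function
PRICE_PER_SERVER_HOUR = 0.05          # always-on server
HOURS_PER_MONTH = 730                 # roughly 24 * 365 / 12


def pay_per_use_cost(invocations: int, price_per_million: float) -> float:
    """Monthly cost when you are billed only for the invocations that happen."""
    return invocations / 1_000_000 * price_per_million


def always_on_cost(hours: float, price_per_hour: float) -> float:
    """Monthly cost of a server that is billed for every hour it runs, used or not."""
    return hours * price_per_hour


if __name__ == "__main__":
    server = always_on_cost(HOURS_PER_MONTH, PRICE_PER_SERVER_HOUR)
    for invocations in (0, 100_000, 10_000_000):
        function = pay_per_use_cost(invocations, PRICE_PER_MILLION_INVOCATIONS)
        print(f"{invocations:>10,} invocations: function ${function:.2f} vs server ${server:.2f}")
```

With zero invocations the function costs nothing while the server still costs its full monthly fee, which is the "you don't pay a dime" property. With these made-up rates the break-even point would sit around 182.5 million invocations per month, so at high enough sustained traffic the comparison can flip; it is worth running the numbers with your provider's actual pricing.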

Code portability to a new environment

If you have worked with native offerings from your own cloud provider, you have probably noticed that it is really easy to bring your data in and build your application there, but not that easy to migrate it out to a different environment when you need to. The main reason is that many of the service constructs you use to implement your application are native to the provider you chose. So when the time comes to move your workloads somewhere else, you basically need to rebuild your app using similar constructs from your new favorite provider. And that's exactly the moment when everybody wonders: "wouldn't it be cool to have a way to transparently migrate my code to a new environment?" That's what we call portability.

In the world of containers and microservices, portability is one of the main benefits of running your workloads on Kubernetes (aka k8s). No matter which provider you use, k8s is k8s: whatever you build in one environment can be migrated to another with little effort. So why not apply the same approach to serverless? In fact, since k8s is the de facto standard for DevOps practices, why not run a FaaS runtime engine on top of it? That way it would inherit k8s portability natively.

FaaS on top of k8s

Well, there are a number of initiatives driving solutions in exactly this direction (FaaS on top of k8s), so over the next posts I would like to review some of them with you and see how they compare. In keeping with the goal of this series, we'll evaluate them by getting our hands dirty and gaining some real experience. You will have the final word on what works best for your environment!

In this series we will explore three different FaaS-over-k8s solutions and put them to work in our evaluation environment:

- OpenFaaS

- Fission

- Kubeless

For our demos you can choose your favorite managed k8s offering. I will go with Google Kubernetes Engine (GKE), but please feel free to use whichever you prefer. That's exactly the point of running FaaS over k8s: portability. You can learn more about using GKE in one of my past posts.

It’s time to start testing some of the most interesting FaaS engines available out there. See you in my next post, stay tuned!

If you have any questions or comments, please let me know in the comments section below, on Twitter or on LinkedIn.

And stay connected with Cisco DevNet on social!

Twitter @CiscoDevNet | Facebook | LinkedIn