At Cisco, we are continuously pushing the envelope on the data center cloud-native automation front, with a stream of innovations that pair our flagship networking product, ACI, with HashiCorp Terraform and Consul. We have regularly showcased our joint innovations with HashiCorp through executive blogs, webinars and presentations at ONUG, HashiConf and DevNet events.

In this blog, I am pleased to invite Nicolas Vermandé, Cisco’s cloud native advocate and ACI open-source product development lead, to share what is top of mind for him on infrastructure-as-code and its overlay, infrastructure-as-data. Without further ado, let us catch up with Nic.

Nicolas Vermandé

Anti-Pattern Scrutinizer & Cloud-Native Advocate,

ACI Open Source Product Development at Cisco

Codifying infrastructure requirements is a key step when adopting Infrastructure as Code (IaC) and beginning the journey to platform orchestration. It consists of taking the parts of the infrastructure that are repeatable and easily identified as patterns, and describing what they provide in a declarative fashion. But IT teams need to agree on the data format: data needs to be structured so that it can easily be processed by both humans and programs. This is not a new concept in the networking industry. For a long time, savvy network engineers have used Excel spreadsheets to plan and track VLAN usage, IP address allocation and security requirements.

Today, in the milieu of software development, automation is driven by Continuous Integration (CI) pipelines and modern cloud-native tooling, and we see a natural shift from traditional enterprise document management to version control systems. Much of the heavy lifting can then be handled natively by Git workflows (branches, pull requests, peer review, etc.), and the ecosystem is broad enough to provide hooks into the vast majority of computing platforms and workflow processes.

This is a great opportunity to test infrastructure snippets further, while they already reside in a controlled repository but are not yet deployed. Because these infrastructure artifacts are defined in a structured format, their schema is well known, and a variety of policies can be applied before deployment to the final target. This reveals a sweet spot for checking against compliance and security requirements, as well as company best practices. In addition, risks can be mitigated at this very step by catching human errors that are typically flagged only at runtime and correcting deviations before they happen. This actually goes one step further than IaC: it is about representing infrastructure input parameters as data and creating an overlay on top of them. As a final decision point, the policy chain can validate or reject the deployment.

It is then easy to extend these principles to network infrastructure, and to Cisco ACI in particular. ACI has been designed to make external orchestration as easy as possible using HTTP and REST standards, accepting JSON as an input format and as a way to serialize relevant information and metadata. As a result, additional automated checks can be enforced when ACI policies are defined and added to a central repository.
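To make the idea of a pre-deployment decision point more concrete, here is a minimal sketch in Python. It assumes an ACI-style JSON payload (object class, `attributes`, `children`) sitting in a repository, and two company rules that are purely hypothetical: a tenant naming prefix and a ban on ARP flooding in bridge domains. The function names are illustrative and are not part of any Cisco or HashiCorp tool.

```python
import json

# Hypothetical company rule: tenant names must carry an environment prefix.
ALLOWED_TENANT_PREFIXES = ("prod-", "dev-")


def iter_objects(node):
    """Yield (class_name, attributes) for every object in an ACI-style JSON tree."""
    for class_name, body in node.items():
        yield class_name, body.get("attributes", {})
        for child in body.get("children", []):
            yield from iter_objects(child)


def check_payload(payload):
    """Return a list of human-readable violations; an empty list means compliant."""
    violations = []
    for class_name, attrs in iter_objects(payload):
        name = attrs.get("name", "<unnamed>")
        if class_name == "fvTenant" and not name.startswith(ALLOWED_TENANT_PREFIXES):
            violations.append(f"tenant {name}: name lacks an approved prefix")
        # Hypothetical rule: bridge domains must not enable ARP flooding.
        if class_name == "fvBD" and attrs.get("arpFlood") == "yes":
            violations.append(f"bridge domain {name}: ARP flooding is not allowed")
    return violations


def decide(payload):
    """Final decision point of the policy chain: 'allow' or 'deny'."""
    return "deny" if check_payload(payload) else "allow"


# Illustrative candidate artifact, as it might be stored in the repository.
candidate = json.loads("""
{
  "fvTenant": {
    "attributes": {"name": "sandbox"},
    "children": [
      {"fvBD": {"attributes": {"name": "bd-web", "arpFlood": "yes"}}}
    ]
  }
}
""")

print(decide(candidate))
for violation in check_payload(candidate):
    print(" -", violation)
```

In a CI pipeline, a check of this kind would typically run on every pull request, so that a non-compliant artifact is rejected before it ever reaches the fabric.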

These policies can easily be modeled using HashiCorp Terraform and the HashiCorp Configuration Language (HCL), which are natively supported by tools such as OPA Conftest when requesting policy decisions (i.e., accepting or denying the deployment of the infrastructure artifact). This is how one can add ACI policy validation to a CI pipeline. Alternative approaches that may become possible in the future involve the Cisco Network Assurance Engine (CNAE) and Cisco Nexus Insights.

Because ACI acts as the central network REST API endpoint, it enables external systems to orchestrate ACI components at the infrastructure layer by sending API requests with attributes defined from a higher source of truth, such as the application layer. This way, entire application dependencies can be covered in a top-down approach, where Continuous Integration/Continuous Deployment (CI/CD) pipelines not only control the application code lifecycle, but also attach infrastructure and platform requirements to it. In other words, infrastructure-as-data not only allows for compliance checking at rest, but also drives day-2 operations by continuously reconciling the infrastructure platform with the application state as the latter is updated.
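The reconciliation idea can be illustrated with a small sketch: compare the state declared by the application layer with the state currently present in the infrastructure, and derive the actions that close the gap. The addresses and the `plan_changes` helper below are hypothetical and only show the principle, not how ACI or Terraform compute their plans.

```python
def plan_changes(desired, actual):
    """Return the ordered actions needed to make `actual` match `desired`."""
    to_create = sorted(desired - actual)   # declared by the app, missing in infra
    to_delete = sorted(actual - desired)   # present in infra, no longer declared
    return [("create", item) for item in to_create] + \
           [("delete", item) for item in to_delete]


# Hypothetical endpoint addresses: what the application layer declares
# versus what the infrastructure currently holds.
desired = {"10.0.1.10", "10.0.1.11", "10.0.1.12"}
actual = {"10.0.1.10", "10.0.1.13"}

for action, address in plan_changes(desired, actual):
    print(action, address)
```

Running the same comparison each time the application state changes is what keeps the infrastructure aligned with it; when both sides already match, the plan is empty and nothing is touched.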

Similarly, extending traditional pipelines to infrastructure or platform automation requires adopting a structured format to translate inputs into a declarative model. A concrete example of that workflow is the recent introduction of the HashiCorp Network Infrastructure Automation (NIA) program, based on Consul-Terraform-Sync. The HashiCorp tool hinges upon Consul service definitions, written in JSON or HCL, to dynamically provide a data source for Terraform and ultimately ingest it into ACI.

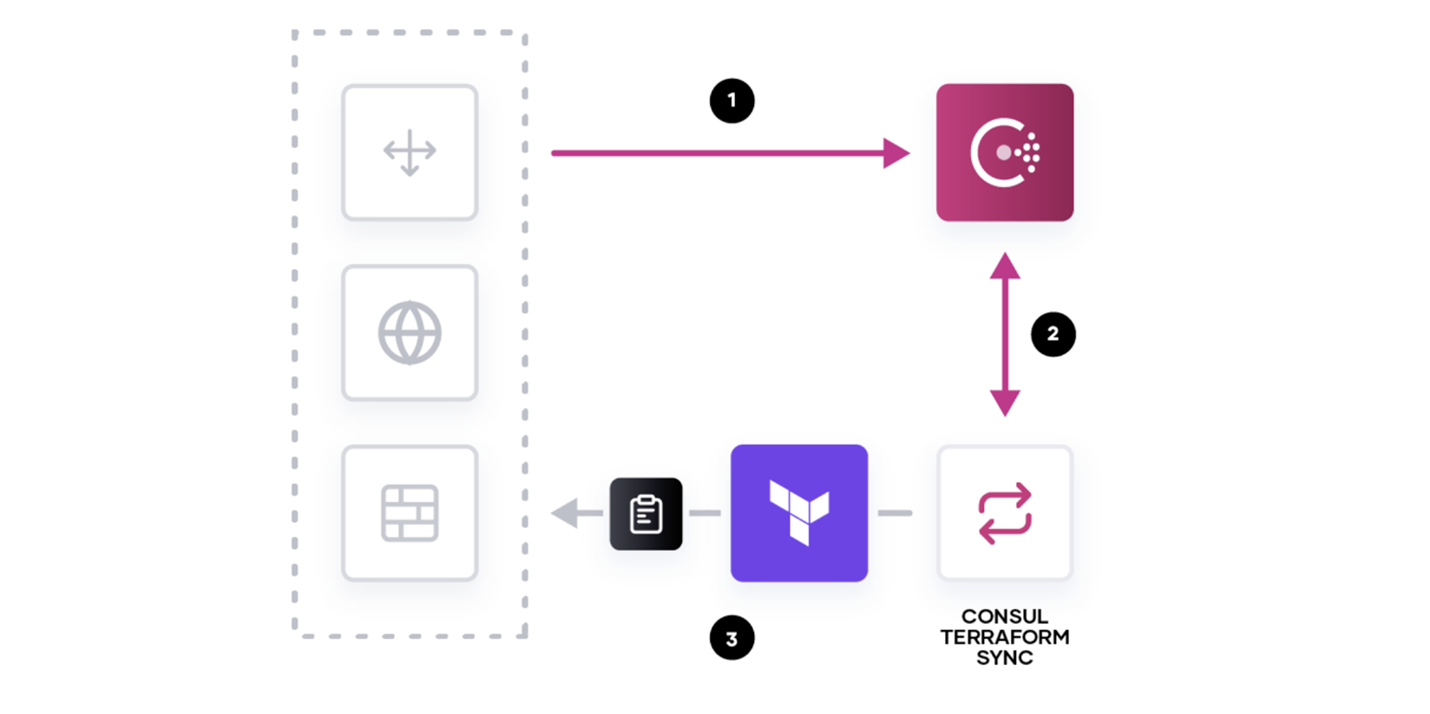

Consul-Terraform-Sync runs the engine that watches Consul state changes at the application layer (a service health change, a new instance being deployed, etc.) and forwards the data to a Terraform module that is triggered automatically. This sequence keeps your day-2 operations constantly aligned with your application state, while 100% of the process is encapsulated in a declarative model. The sequence, depicted in the picture below, is as follows:

- Consul sends updated service-level information

- Consul-Terraform-Sync receives updated data

- The configured Terraform module is triggered to update the infrastructure with new inputs
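The three steps above can be sketched as a small Python class. This is an illustration of the pattern only, not Consul-Terraform-Sync's actual implementation: the `Syncer` class, the `to_module_inputs` helper and the shape of the generated inputs are assumptions, and the instance fields are a simplified subset loosely modelled on Consul catalog entries.

```python
import json


def to_module_inputs(instances):
    """Turn service instances into a JSON-serializable map keyed by service ID."""
    return {
        inst["ServiceID"]: {
            "name": inst["ServiceName"],
            "address": inst["ServiceAddress"],
            "port": inst["ServicePort"],
        }
        for inst in instances
    }


class Syncer:
    """Receives service updates and triggers a module run only when inputs change."""

    def __init__(self, trigger):
        self.trigger = trigger      # callable that receives the new inputs as JSON
        self.last_inputs = None

    def on_update(self, instances):
        inputs = to_module_inputs(instances)
        if inputs == self.last_inputs:
            return False            # nothing changed: no module run needed
        self.last_inputs = inputs
        self.trigger(json.dumps({"services": inputs}, sort_keys=True))
        return True


# Usage: collect triggered runs in a list instead of invoking a real module.
runs = []
syncer = Syncer(runs.append)

snapshot = [
    {"ServiceID": "frontend-1", "ServiceName": "frontend",
     "ServiceAddress": "10.0.1.10", "ServicePort": 8080},
]
syncer.on_update(snapshot)          # first update: triggers a run
syncer.on_update(snapshot)          # identical data: ignored
snapshot.append(
    {"ServiceID": "frontend-2", "ServiceName": "frontend",
     "ServiceAddress": "10.0.1.11", "ServicePort": 8080},
)
syncer.on_update(snapshot)          # new instance: triggers a second run
print(len(runs))
```

In a real setup the `trigger` callable might write the inputs to a variables file and start a Terraform run; here it simply appends to a list so that the flow can be followed without any external dependency.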

On top of the benefits mentioned so far, infrastructure-as-data makes your automation process easily repeatable, which in turn helps it deliver consistent results in terms of performance and reliability.

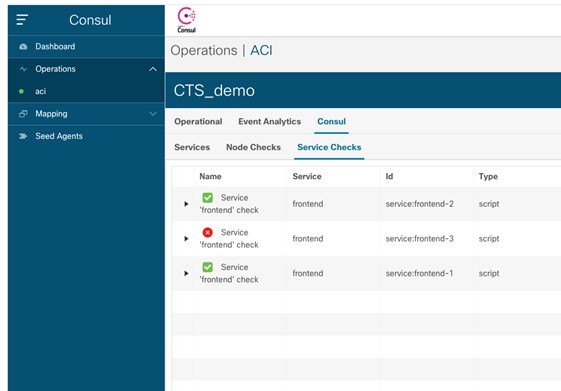

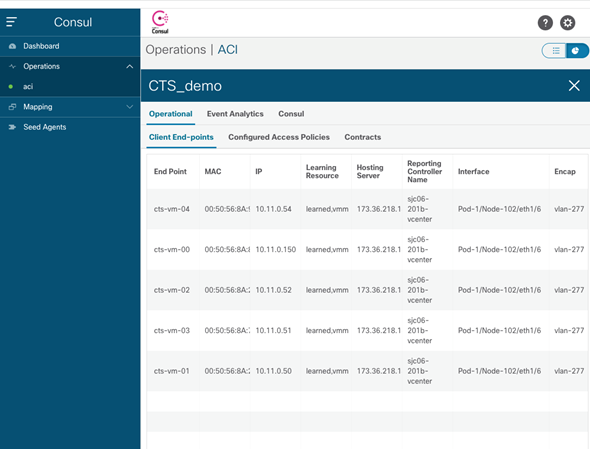

But there’s also a side effect when bonding disjoint domains (app and infrastructure) through automation. The challenge lies in providing the end-to-end picture and highlighting these bonds, so that operations teams can easily identify the blast radius in the event of infrastructure failures. Proper instrumentation becomes key to the adoption of these automation principles. The following screenshot gives you an example of the Consul ACI App integration, showing Consul-specific information co-located with ACI information and tenant-level faults.

The app allows you to easily correlate the failed service ID (frontend-3) with the corresponding infrastructure resources, such as the reporting controller, the attached physical fabric interface and the associated VLAN ID.

In conclusion, I’d like to encourage you to think about top-down automation possibilities in your environment from a completely different perspective. Think about day-2 operations and how you could benefit from infrastructure-as-data reconciliation loops. I’m sure you’ll find a plethora of use cases. You can also start by exploring the resources below.

Related Resources:

- DevNet Resources, ACI, Terraform and Consul

- Consul Terraform Sync Announcement

- Redefining the Intelligent network with Cisco ACI and HashiCorp Consul