Want to get the most out of your big data? Build an enterprise data hub (EDH).

Big data is rapidly getting bigger. That in itself isn’t a problem. The issue is what Gartner analyst Doug Laney describes as the three Vs of Big Data: volume, velocity, and variety.

Volume refers to the ever-growing amount of data being collected. Velocity is the speed at which the data is being produced and moved through the enterprise information systems. Variety refers to the fact that we’re gathering information from multiple data sources such as sensors, enterprise resource planning (ERP) systems, e-commerce transactions, log files, supply chain info, social media feeds, and the list goes on.

Data warehouses weren’t made to handle this fast-flowing stream of wildly dissimilar data. Using them for this purpose drains resources and leads to sluggish response times as workers attempt to perform numerous extract, load, and transform (ELT) functions to make stored data accessible and usable for the task at hand.

Constructing Your Hub

An EDH addresses this problem. It serves as a central platform that enables organizations to collect structured, unstructured, and semi-structured data from slews of sources, process it quickly, and make it available throughout the enterprise.

Building an EDH begins with selecting the right technology in three key areas: infrastructure, a foundational system to drive EDH applications, and a data integration platform. You want to choose solutions that fit your needs today and allow for future growth. You’ll also want to ensure they are tested and validated to work well together and with your existing technology ecosystem. In this post, we’ll focus on selecting the right hardware.

The Infrastructure Component

Big data deployments must be able to handle continued growth, from both a data and user load perspective. Therefore, the underlying hardware must be architected to run efficiently as a scalable cluster. Important features such as the integration of compute and network, unified management, and fast provisioning all contribute to an elastic, cloud-like infrastructure that’s required for big data workloads. No longer is it satisfactory to stand up independent new applications that result in new silos. Instead, you should plan for a common and consistent architecture to meet all of your workload requirements.

Big data workloads represent a relatively new model for most data centers, but that doesn’t mean best practices must change. Handling a big data workload should be viewed through the same lens as deployments of traditional enterprise applications. As always, you want to standardize on reference architectures, optimize your spending, provision new servers quickly and consistently, and meet the performance requirements of your end users.

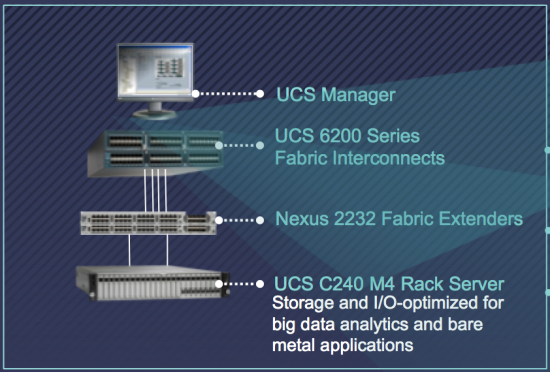

Cisco Unified Computing System to Run Your EDH

The Cisco Unified Computing System™ (Cisco UCS®) Integrated Infrastructure for Big Data delivers a highly scalable platform that is proven for enterprise applications like Oracle, SAP, and Microsoft. It also provides the same required enterprise-class capabilities (performance, advanced monitoring, simplified management, and QoS guarantees) to big data workloads. With lower switch and cabling infrastructure costs, lower power consumption, and lower cooling requirements, you can realize a 30 percent reduction in total cost of ownership. In addition, its service profiles give you fast and consistent time-to-value: provisioning templates let you quickly set up a new cluster or add many new nodes to an existing cluster.

When deploying an EDH, the MapR Distribution including Apache™ Hadoop® is especially well suited to take advantage of the compute and I/O bandwidth of Cisco UCS. Cisco and MapR have been working together for the past two years and have developed Cisco-validated design guides to give customers the most value for their IT expenditures.

Cisco UCS for Big Data comes in optimized power/performance-based configurations, all of which are tested with the leading big data software distributions. You can customize these configurations further, or use the system as is. Using one of the pre-configured Cisco UCS for Big Data options goes a long way toward ensuring a stress-free deployment. All Cisco UCS solutions also provide a single point of control for managing all computing, networking, and storage resources, for any fine-tuning you may do before deployment or as your hub evolves in the future.

I encourage you to check out the latest Gartner video to hear Satinder Sethi, our VP of Data Center Solutions Engineering and UCS Product Management, share his perspective on how powering your infrastructure is an important component of building an enterprise data hub.

In addition, you can read the MapR blog post, “Building an Enterprise Data Hub, Choosing the Foundational Software.”

If you have any comments or questions, let me know here or via Twitter at @CicconeScott.