Linux containers and Docker are poised to radically change the way applications are built, shipped, deployed, and instantiated. They accelerate application delivery by making it easy to package an application together with its dependencies. That means a single containerized application can operate across development, test, and production environments and across platforms (physical and virtual). While the concept of containerization is not new, using containers to pull together all the application components (including dependencies and services) into a single portable package is. Because continuous integration and delivery require a very agile Software Development Lifecycle (SDLC) process to move from development to production, containers provide the perfect abstraction for deploying and testing across the various platforms. Application containers make it very easy to deploy applications on bare metal servers, virtual machines, and public clouds. Containers are relevant because of the following principles:

- Highly Efficient: Having no OS dependencies means there is little performance or start-up impact on the applications

- Hardware Agnostic: Can run consistently across any hardware/software platform, virtualization technology, or public cloud [1]

- Content Agnostic: Can encapsulate any dependencies or payload [2]

- Security and Isolation: By running all dependencies and services in the container, there is complete isolation of network, resources, and content [3]

The major customer focus for container technology is Enterprise IT, Application Developers, and DevOps. The Enterprise IT business model has shifted to an agile model in which internal infrastructure and cloud infrastructure must be supported as a cohesive system of resources. As applications move from IT to the cloud, business requirements demand elastic scale-out and the ability to move workloads with very low overhead. The bulk of next-gen Enterprise applications are being written natively in Linux for the cloud. Directionally, the operating system is becoming less and less important, and in many cases it is an obstacle to scale.

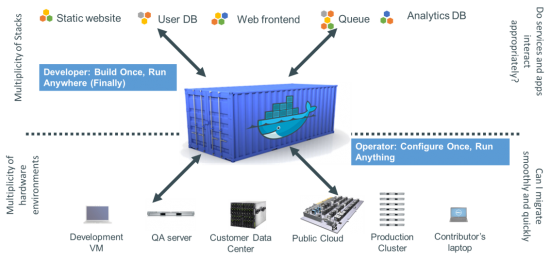

Application and Engineering organizations are changing to DevOps/DevTest: developers are responsible for how code runs, and ops and QA teams can take containerized code directly to production. The key value to developers is tied to the idea of build once and run anywhere, which has long been a design goal for developers. In the past, other technologies such as Java tried to achieve this outcome. The ability to run each app in its own isolated container with automated build, testing, integration, and packaging accelerates the time to deliver value to the business.

DevOps has widely adopted containers because of the key value of configure once and run anything. Containers make the entire SDLC more efficient, consistent, and, most importantly, repeatable. Because containers are lightweight and portable across multiple platforms, and their platform dependencies are removed, testing cycles become much shorter. Lastly, since the application components are containerized, the quality of code can substantially increase as a result of abstracting the underlying hardware environment and integrating the dependent services into test automation processes.

Cisco and Red Hat collaborated on a just-released whitepaper: Why They’re in Your Future and What Has to Happen First. This is an excellent starting point for anyone interested in learning more. Cisco Cloud Services is creating an Intercloud of containers and micro-services in a cloud-native and hybrid CI/CD model across OpenStack, VMware, Cisco Powered, and public clouds. Look for availability early next year.

---

[1] Hardware agnostic: any Linux OS on any x86 64-bit hardware

[2] Content agnostic: any payload that can run on a Linux OS

[3] Provided proper SELinux or MAC policies are applied to the resources and the containers

How about integrating all Cisco’s Virtual Network Services inside Linux Containers, namely N1KV/AVS, CSR1KV, vWAAS, ASAv, and so on?

We are actively investigating that and others.

Hi Ken,

Will #Cisco be integrating Containers within IOS XE or Linux-based OSes?

And #VMWare fears Containers, so they reported that containers will require a VM for agility and better security, since in their current state they are susceptible to attacks. But I find that quite absurd. Can’t security for a Container be provided by an envelope or shell like #Microvisor or #MicroContainers from Bromium (http://www.bromium.com), rather than shielding it within a VM?

Regards,

Neeraj