In the previous posts (1 and 2) we talked about how technical innovation (in networking and containers) can address an operational challenge involving different stakeholders within an organisation.

In the next two posts, starting today, we zoom out to look at another pattern in the dynamics between business and IT: the pressure for faster provisioning of resources for a new project, and some solutions to it.

Automation, a first step towards Private Cloud

Everyone is aware of the value of automation.

Many companies and individual engineers have always implemented various ways to save time, from shell scripts to complex programs and to fully automated IaaS solutions.

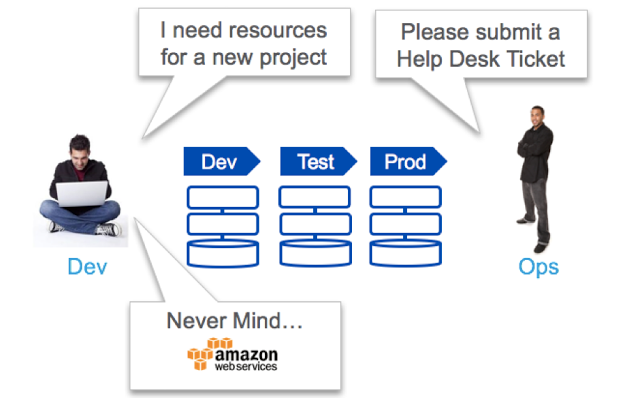

Automation helps reduce the so-called “Shadow IT”, a phenomenon that happens when developers or Line of Business users can’t get a fast enough response from IT and rush to a Public Cloud to get what they need. In most cases they will complete and release their project sooner, but sometimes trouble starts in the production phase (unexpected additional budget for IT, new technologies that IT is not ready to manage, etc.).

Shadow IT happens when corporate IT is not fast enough

Do you blame them? Developers do their best to provide value to their stakeholders. The complexity of the traditional IT organization is the real cause of the problem, and private cloud is one solution to it.

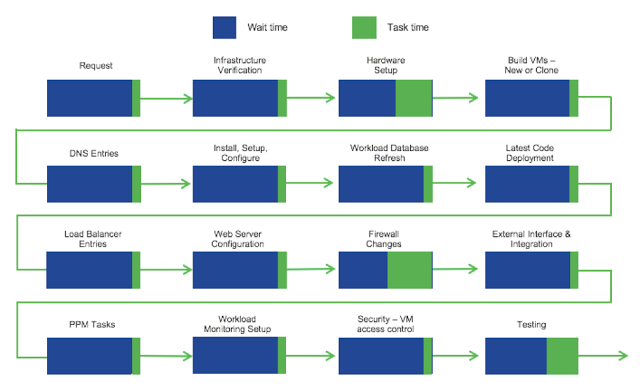

We often see silos (a team responsible for servers, one for storage, one for networking, one for virtual machines, and of course one for security…), and provisioning even a simple request can take too long.

Process inefficiency due to silos and wait time

Pressure on the Infrastructure Managers and Admins

It is safe to say that inefficiency in the company affects the business outcome of every project: longer time to market for strategic initiatives, and higher costs for infrastructure and people.

Finger-pointing starts, in order to identify who is responsible for the bottleneck.

The efficiency of teams and individuals is questioned, and responsibility cascades through the organization from project managers to developers, to the server team, to the storage team and finally… to the network team, which sits at the end of the chain and has run out of people to blame 😉

Those at the top (they consider themselves at the top of the value chain) believe, or try to demonstrate (and I have done it myself in the past), that their work is slowed down by the inefficiency of the teams they depend on. They suggest solutions like: “you said that your infrastructure is programmable, now give me your API and I will create everything I need on demand”.

Of course, this approach could bring some value, but it reduces the relevance of the specialist teams that are supposed to manage the infrastructure according to best practices, apply architectural blueprints optimized for the company’s specific business, and know the technology in much deeper detail.

They can’t accept being bypassed by a bunch of developers who want to corrupt the system, playing with precious assets with their dirty hands… 🙂

The definitive question is: who owns the automation?

Should it be left to the people who know what they need?

Should it be owned by the people who know how the technology works and who, at the end of the day, are responsible for the SLA (including performance, security and reliability) that could be affected by a configuration made by others?

By definition the developer is not an expert on security. Even if he or she can easily program a switch via its REST API to get a network segment, that is not the same as making sure that traffic is secured and inspected.

In my opinion, and based on the experience shared with many customers, the second answer is the correct one: IT administrators and infrastructure managers should hold the responsibility. However, there are ways to modernize their role and value.

Offering a self-service catalog

A first, immediate solution could be the introduction of an easy automation tool such as Cisco UCS Director, which manages almost every element in a multi-vendor Data Center infrastructure, from servers to networks to storage to virtualization, all from a single dashboard.

But what is more interesting is that every atomic action you can perform in the web GUI is also available as a task in the automation library, allowing you to create custom workflows that link together all the tasks for a process you want to automate.

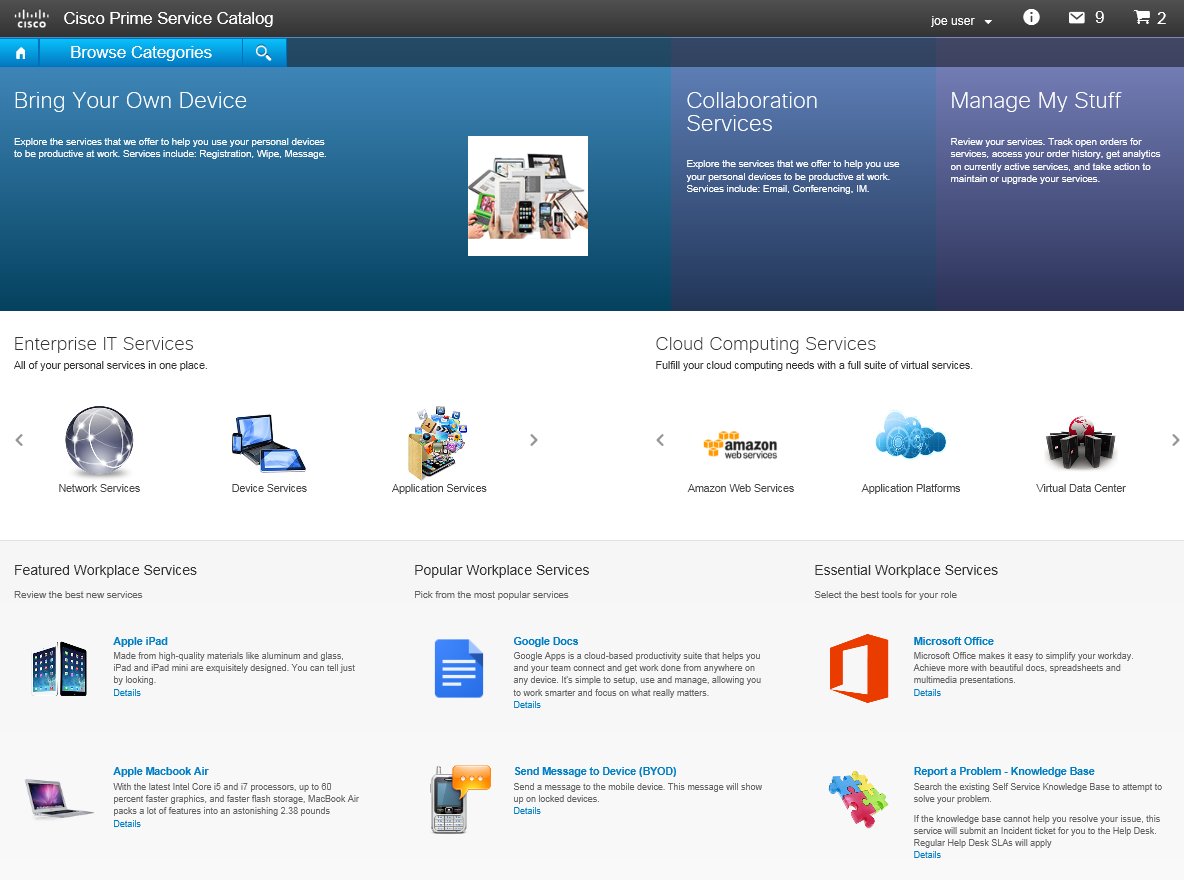
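To make the idea of chaining atomic tasks into a workflow more concrete, here is a minimal sketch in plain Python. It does not use the UCS Director API: the task names (`create_vlan`, `apply_security_policy`, `provision_vm`), the `Workflow` class and the naming scheme of the resources are all hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# A task receives the shared context, performs one atomic action,
# and returns the updated context for the next task.
Task = Callable[[Dict[str, str]], Dict[str, str]]


def create_vlan(ctx: Dict[str, str]) -> Dict[str, str]:
    # Hypothetical task: reserve a network segment for the project.
    return {**ctx, "vlan": f"vlan-{ctx['project']}"}


def apply_security_policy(ctx: Dict[str, str]) -> Dict[str, str]:
    # Hypothetical task: the step that a direct call to the switch API
    # would not include, but that the infrastructure team requires.
    return {**ctx, "firewall": f"inspect-{ctx['vlan']}"}


def provision_vm(ctx: Dict[str, str]) -> Dict[str, str]:
    # Hypothetical task: create a virtual machine for the project.
    return {**ctx, "vm": f"vm-{ctx['project']}-01"}


@dataclass
class Workflow:
    name: str
    tasks: List[Task] = field(default_factory=list)

    def run(self, inputs: Dict[str, str]) -> Dict[str, str]:
        # Execute the tasks in order, passing the context along.
        ctx = dict(inputs)
        for task in self.tasks:
            ctx = task(ctx)
        return ctx


new_project = Workflow(
    "new-project-environment",
    [create_vlan, apply_security_policy, provision_vm],
)
result = new_project.run({"project": "crm"})
print(result)
```

The point of the sketch is the ownership model: the infrastructure team decides which tasks are in the workflow and in which order, so the security step cannot be skipped by whoever runs it.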

Once the automation workflow has been built and validated, it can be used by the IT admin or by the Operations team to save time and ensure a consistent outcome (no manual errors). But it can also be offered as a service to all the departments that depend on IT for their projects.

And they will certainly appreciate the efficiency improvement:)

This important step will bring you towards the implementation of a Private Cloud. As we all know, the mature adoption of a cloud model can be seen as a journey that implies careful change management (technology, but mainly processes and people are affected). Some companies are afraid of the risk associated with change and don’t start that journey.

But once the automation is in place, you can easily define which services you want to offer and which governance model best fits your organization. This can optionally be based on a product like Cisco Prime Service Catalog, or on another framework providing a portal for IT Service Management (ITSM).

Cloud is about delegation (of task responsibility or ownership of resources): offering those in a self-service catalog does not necessarily imply automation (there could be humans behind the portal).

On the other hand, automation can both offer value to the IT Admin (even in the absence of cloud) and be the enabler for the cloud in the mid term (the cloud portal would delegate the provisioning to the automation engine).
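The relationship between the portal and the automation engine can be sketched in a few lines of Python. This is only an illustration under assumptions: `ServiceCatalog`, `CatalogItem`, `provision_dev_vm` and the item names are hypothetical and do not represent the Cisco Prime Service Catalog API. An item either delegates to an automation function or, when none is attached, lands in a queue for a human operator.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass
class CatalogItem:
    name: str
    # None means a human fulfils the request behind the portal.
    automation: Optional[Callable[[Dict[str, str]], str]] = None


class ServiceCatalog:
    def __init__(self) -> None:
        self.items: Dict[str, CatalogItem] = {}
        self.manual_queue: List[str] = []

    def publish(self, item: CatalogItem) -> None:
        self.items[item.name] = item

    def order(self, name: str, params: Dict[str, str]) -> str:
        item = self.items[name]
        if item.automation is None:
            # Self-service without automation: the request waits for an operator.
            self.manual_queue.append(f"{name} for {params['requester']}")
            return "queued for an operator"
        # Self-service with automation: the portal delegates to the engine.
        return item.automation(params)


def provision_dev_vm(params: Dict[str, str]) -> str:
    # Stand-in for a call to the automation engine (hypothetical).
    return f"vm-{params['requester']}-dev ready"


catalog = ServiceCatalog()
catalog.publish(CatalogItem("dev-vm", automation=provision_dev_vm))
catalog.publish(CatalogItem("firewall-exception"))

automated = catalog.order("dev-vm", {"requester": "alice"})
manual = catalog.order("firewall-exception", {"requester": "bob"})
print(automated, "|", manual, "|", catalog.manual_queue)
```

From the requester's point of view both orders look the same. Only the fulfilment path differs, which is why a catalog can be introduced first and automated item by item later.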

You will find more detail in the second part of this post.

Resources

http://lucarelandini.blogspot.it/2016/02/governance-in-hybrid-cloud.html

http://lucarelandini.blogspot.it/2016/10/just-1-step-to-deploy-your-applications.html

http://lucarelandini.blogspot.it/2017/01/hybrid-cloud-lessons-learned.html