Who would have bet on an SRv6 interoperability demonstration at the SIGCOMM 2017 conference this summer?

Very few of us, actually. For those who are not familiar with it, SIGCOMM is a highly respected conference where leading vendors, major web and service providers, and academic researchers discuss key networking innovations and directions every year.

But, why should you care about such a demo?

Well, this is the kind of proof point that should matter to you if you are considering building your next-generation infrastructure on a nascent technology such as SRv6. Strong support and interest from the vendor community is reassurance that you won’t be trapped in a technological dead end.

So, let’s reflect on what happened lately:

- the first “SRv6 Network Programming” IETF draft was issued in March this year

- the first hardware support of SRv6 VPN and Traffic Engineering on NCS 5500 was showcased at Cisco Live US in June

- the first interoperability demo was successfully delivered at the SIGCOMM 2017 conference

I’m not saying this is unprecedented, but I’ve rarely seen a technology implemented across different vendors’ hardware platforms less than six months after the first publication of the IETF draft.

The scope of the interoperability demonstration we designed for this conference is really impressive. Here are some details about its content.

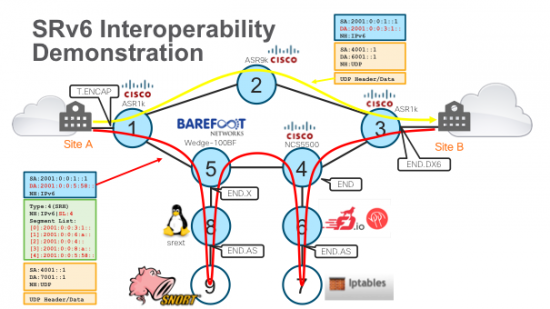

It is an end-to-end integration of SRv6 VPN, SRv6 Traffic Engineering and Service Chaining, all with data-plane interoperability across different implementations:

- Three Cisco hardware-forwarding platforms: ASR 1K, ASR 9K and NCS 5500

- Two Cisco network operating systems: IOS XE and IOS XR

- Barefoot Networks Tofino on OCP Wedge-100BF

- Linux kernel (SRv6 support officially upstreamed in 4.10)

- FD.io

It runs on a small network topology formed of two non-SRv6 edge domains located on either side of an IPv6 core domain (see the diagram).

Each edge domain consists of two Linux machines, one IPv4-only and the other IPv6-only. In the core domain:

- HW node 5 runs a P4 implementation of SRv6 on Barefoot Tofino

- HW nodes 1 through 5 are SRv6-enabled

- Node 8 is an SRv6-enabled Linux machine

- Node 6 is a regular Linux machine running FD.io

- Two non-SR services, Netfilter and Snort, run behind nodes 6 and 8, respectively.

Without getting into too many technical details here, the demo can:

- Handle IPv4 or IPv6 traffic coming from both sites A and B

- Isolate traffic from different VPNs

- Steer the traffic over a different path from the shortest path computed by the routing protocol

- Send the traffic to specific nodes where certain services can be applied to the traffic
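To make the list above a little more concrete, here is a minimal Python sketch of what an ingress node's steering decision could look like. It is not taken from the demo: the VPN names, prefixes, segment identifiers (all from the 2001:db8::/32 documentation range) and the `select_segments` helper are assumptions made purely for illustration.

```python
from ipaddress import ip_address, ip_network

# Hypothetical ingress policy table: (VPN name, destination prefix) -> ordered
# list of segments. Every name and address here is illustrative only.
POLICIES = {
    # Red VPN: detour via two service nodes before reaching the egress.
    ("vpn-red", ip_network("10.2.0.0/16")): [
        "2001:db8::6", "2001:db8::8", "2001:db8::b:100",
    ],
    # Blue VPN: same customer prefix, but a separate egress segment.
    ("vpn-blue", ip_network("10.2.0.0/16")): ["2001:db8::b:200"],
    # IPv6 customer traffic is handled by the same table.
    ("vpn-red", ip_network("2001:db8:a::/48")): ["2001:db8::b:100"],
}


def select_segments(vpn, dst):
    """Return the segment list for traffic of `vpn` going to `dst`, or None."""
    addr = ip_address(dst)
    matches = [
        (net, segments)
        for (name, net), segments in POLICIES.items()
        if name == vpn and net.version == addr.version and addr in net
    ]
    if not matches:
        return None
    # Longest-prefix match among the policies of this VPN.
    _, segments = max(matches, key=lambda match: match[0].prefixlen)
    return segments
```

In this toy model, two VPNs can use the same customer prefix without seeing each other's traffic, because the lookup key includes the VPN, and the path through the core is simply whatever ordered list of segments the policy returns.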

This is a nice use case that highlights the power of the SRv6 network programming concept. To put it very simply, you now have the ability to code directly into each packet header where the traffic should be sent and how it should be treated. Not only is this simple, it is also extremely scalable, as you don’t have to maintain any state in the network. To get more familiar with the SRv6 network programming concept, read this great blog post by Brendan Gibbs, VP Product Marketing.
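For readers who like to see the idea in code, here is a simplified Python model of how a segment list carried in the packet header drives forwarding. It is a sketch under stated assumptions, not a description of any platform in the demo: the `SRv6Packet` class and the `encapsulate`, `process_end` and `trace` helpers are hypothetical names, the addresses come from the documentation prefix, and only the basic "advance to the next segment" behavior is modeled.

```python
from dataclasses import dataclass
from ipaddress import IPv6Address
from typing import List


@dataclass
class SRv6Packet:
    """Toy model of an IPv6 packet carrying a Segment Routing Header."""
    dst: IPv6Address                  # current IPv6 destination address
    segment_list: List[IPv6Address]   # stored in reverse order: index 0 is the last segment
    segments_left: int                # index of the currently active segment
    payload: bytes = b""


def encapsulate(path: List[str], payload: bytes = b"") -> SRv6Packet:
    """Build a packet that visits the segments in `path`, in that order."""
    segments = [IPv6Address(s) for s in reversed(path)]
    active = len(segments) - 1
    return SRv6Packet(dst=segments[active], segment_list=segments,
                      segments_left=active, payload=payload)


def process_end(pkt: SRv6Packet) -> SRv6Packet:
    """Basic endpoint processing: activate the next segment in the list."""
    if pkt.segments_left == 0:
        raise ValueError("no segments left: this is the final segment")
    pkt.segments_left -= 1
    pkt.dst = pkt.segment_list[pkt.segments_left]
    return pkt


def trace(pkt: SRv6Packet) -> List[str]:
    """Return the successive destination addresses the packet is sent to."""
    hops = [str(pkt.dst)]
    while pkt.segments_left > 0:
        process_end(pkt)
        hops.append(str(pkt.dst))
    return hops


if __name__ == "__main__":
    packet = encapsulate(["2001:db8::6", "2001:db8::8", "2001:db8::b"])
    print(trace(packet))
```

Note that each node only reads and updates fields inside the packet itself. Nothing in `process_end` looks up per-flow information, which is the "no state in the network" property described above.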

You can expect to see more refined and complex use cases over the next few months as we work closely with major service providers that are just about to uncover all the capabilities of SRv6 and how to use them to develop innovative services.