When you search online, use email, watch a video, or click on a recommended link, you have probably used hyperscaler networks, which support everything from cloud-hosted applications to AI/ML neural networks. Applications are generating and ingesting data at tremendous rates, which means data centers are handling massive traffic loads. According to the International Energy Agency (IEA), for every bit of data that travels the network from a data center to end users, another five bits of data are transmitted within and among data centers (“Data Centres and Data Transmission Networks”, November 2021). IEA estimates that data centers use 1% of all global electricity, and that their energy use has been rising in recent years, increasing 10%-30% per year (IEA 2022).

Challenges

To handle these enormous demands, cloud providers add more servers with higher capacities, resulting in more data being driven into the network, both inside and outside of the data center. Without properly scaled infrastructure, the network becomes a bottleneck. And that’s when users post about their sub-par experiences.

Given the current environmental and geopolitical concerns, energy efficiency and achieving net zero carbon emissions are increasingly becoming top priorities for cloud providers. But as data centers need to scale and support more bandwidth-hungry applications, the question is how much power, space, and cooling they will need while still going green.

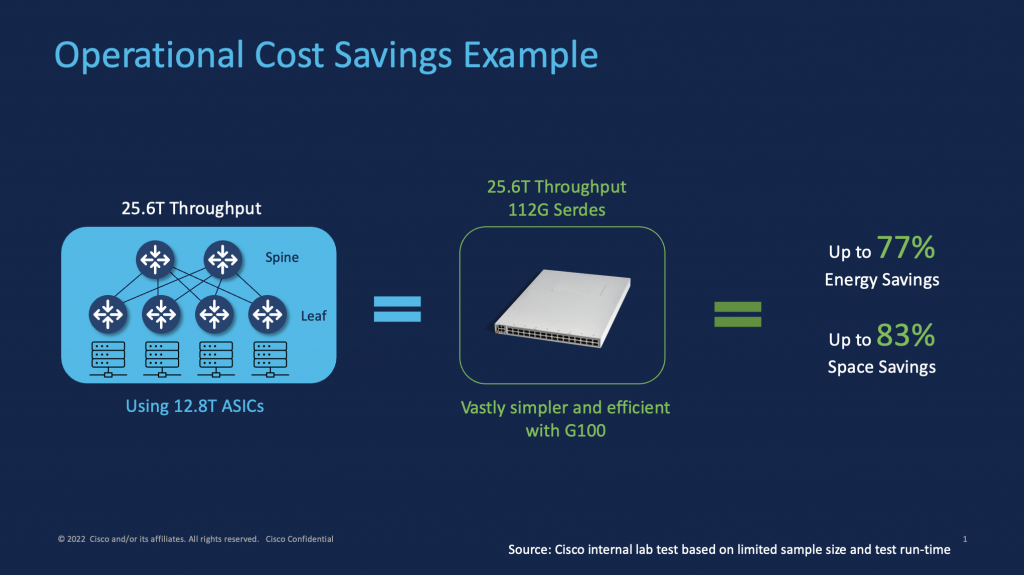

Throwing bandwidth at the problem might seem like an easy fix until the tradeoffs appear. Growing capacity means more equipment, and with it more power, space, and cooling, plus the risk of overheating or running out of rack space. For example, scaling to over 25 Tbps of capacity in a leaf/spine network using 1 RU 32x400G switches would require six switches. That’s roughly 3000 watts and 6 RU of space, not to mention the 36 fans needed for cooling.
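To see where the six switches come from, here is a minimal sketch of the arithmetic. It assumes a non-blocking two-tier fabric in which every leaf splits its 32 ports evenly between downlinks and uplinks; that split, the function name, and the per-switch power and fan figures (derived by dividing the totals above by six) are illustrative assumptions, not published specifications.

```python
def leaf_spine_switches(target_gbps, ports_per_switch=32, port_gbps=400):
    """Return (leaves, spines) for a non-blocking two-tier leaf/spine fabric.

    Assumption (not from the article): each leaf uses half of its ports as
    downlinks and half as uplinks, so the fabric is not oversubscribed.
    """
    down_ports = ports_per_switch // 2
    leaf_gbps = down_ports * port_gbps           # user-facing capacity per leaf
    leaves = -(-target_gbps // leaf_gbps)        # ceiling division
    uplinks = leaves * (ports_per_switch - down_ports)
    spines = -(-uplinks // ports_per_switch)     # each spine port takes one uplink
    return leaves, spines


leaves, spines = leaf_spine_switches(25_600)     # 25.6 Tbps expressed in Gbps
switches = leaves + spines

# Per-switch figures implied by the totals quoted above (assumed, not measured).
WATTS_PER_SWITCH = 500
FANS_PER_SWITCH = 6

print(f"{leaves} leaves + {spines} spines = {switches} switches")
print(f"{switches * WATTS_PER_SWITCH} W, {switches} RU, {switches * FANS_PER_SWITCH} fans")
```

Under these assumptions the sketch lands on four leaves and two spines, which matches the six-switch, 3000-watt, 36-fan figures in the example.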

Solution

But if we could build massive capacity in a small footprint, we could tip the cost and performance scales back in favor of the providers and help the environment. What might sound nearly impossible is now available and shipping: the newest member of the Cisco 8100 Series, the Cisco 8111-32EH, delivers 25.6T of capacity in a compact 1 RU form factor (see press release). With ultra-fast QSFP-DD800 ports and a Silicon One G100 25.6T ASIC, the Cisco 8111 can support 64x400G ports in the same 1 RU form factor at approximately 700W.

That’s up to a 77% reduction in power and an 83% reduction in both space and number of fans, compared with achieving the equivalent capacity using multiple 12.8T ASIC switches, based on an internal lab study (see footnote 1 and Figure 1).
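The percentages can be checked against the numbers quoted in this post. The sketch below is only a sanity check: the six-fan count for the single 1 RU system is an assumption inferred from the 83% figure, and `reduction_pct` is a helper introduced here for illustration.

```python
def reduction_pct(before, after):
    """Percentage reduction from `before` to `after`, rounded to a whole percent."""
    return round(100 * (before - after) / before)


# Six 12.8T switches versus one 25.6T system, using the figures quoted in this post.
power = reduction_pct(3000, 700)   # watts
space = reduction_pct(6, 1)        # rack units
fans = reduction_pct(36, 6)        # six fans in the 1 RU system is an assumption

print(f"power: {power}%, space: {space}%, fans: {fans}%")
```

This gives 77% for power and 83% for both space and fans, in line with the figures above.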

Not only can cloud providers benefit from major operational cost savings and lower their power bills, but this reduction also translates to significant savings in carbon emissions, with a reduction of ~9000 kg CO2e/year in greenhouse gas (GHG) emissions (based on internal estimates). And the power savings could be used to add more revenue-generating servers that help cloud providers grow their business.
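As a rough plausibility check on the emissions figure, the sketch below converts the power savings into annual energy and back-solves the grid emission factor that ~9000 kg CO2e/year would imply. It assumes the equipment runs continuously all year and that only switch power is counted (no cooling overhead); the method behind the internal estimate is not described here, so treat this as an illustration rather than a reconstruction of it.

```python
HOURS_PER_YEAR = 24 * 365            # continuous operation is an assumption

watts_saved = 3000 - 700             # six 12.8T switches versus one 25.6T system
kwh_saved_per_year = watts_saved * HOURS_PER_YEAR / 1000

co2e_kg_per_year = 9000              # internal estimate quoted in this post
implied_kg_per_kwh = co2e_kg_per_year / kwh_saved_per_year

print(f"{kwh_saved_per_year:.0f} kWh/year saved")
print(f"implied emission factor: {implied_kg_per_kwh:.2f} kg CO2e/kWh")
```

The implied factor works out to a little under 0.45 kg CO2e per kWh, so the quoted savings are consistent with the roughly 2300 watts of reduced draw.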

The massive energy savings are a result of our extensive investments, such as Cisco Silicon One. For example, using cutting-edge 7nm technology helps improve power efficiency, while utilizing 256x112G SerDes helps deliver 25.6T in a single chip for major power/space/cooling reduction.

With high density QSFP-DD800 modules, we’re introducing new 2x400G-FR4 and 8x100G-FR modules that enable high density breakouts to 100G and 400G interfaces, supporting 64x400G ports or 256x100G ports in just 1 RU.
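The breakout counts follow directly from the port count. Here is a small sketch, assuming 32 QSFP-DD800 ports (inferred from the 8111-32EH model name and 25.6T at 800G per port); the dictionary and function names are made up for illustration.

```python
PORTS = 32  # assumed QSFP-DD800 port count, inferred from the model name

# module name -> (interfaces per module, Gbps per interface)
BREAKOUTS = {
    "2x400G-FR4": (2, 400),
    "8x100G-FR": (8, 100),
}


def breakout_totals(module, ports=PORTS):
    """Return (total interfaces, total Gbps) when every port uses `module`."""
    per_module, gbps = BREAKOUTS[module]
    interfaces = ports * per_module
    return interfaces, interfaces * gbps


for name in BREAKOUTS:
    interfaces, total_gbps = breakout_totals(name)
    print(f"{name}: {interfaces} interfaces, {total_gbps / 1000} Tbps")
```

Either module type fills the same 25.6 Tbps; the choice only changes whether that capacity is presented as 64 interfaces at 400G or 256 interfaces at 100G.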

These modules will enable higher radix for next-gen network design and double the bandwidth density of the platform footprint with efficient connectivity over copper, single-mode, and multi-mode fiber. The QSFP-DD800 form factor can also support next-generation pluggable coherent modules that may require higher power dissipation, while still providing the necessary efficiency and cooling capabilities.

By delivering ground-breaking innovations with much higher densities in compact form factors, such as those for public cloud data centers, we can help customers drastically reduce operational costs. Essentially, we’re redefining the economics of cloud networking through cost-effective scale.

Innovations with Cisco 8000 Series

The Cisco 8000 portfolio is used for mass-scale infrastructure solutions to deliver extreme performance and efficiency, including for cloud networking with hyperscalers and web-scale customers adopting hyperscale architectures (see Enabling the Web Evolution). The Cisco 8000 portfolio includes the following products and cloud use cases:

- Cisco 8100 Series products are fixed port configurations in 1 RU and 2 RU form factors that are optimized for web-scale switching with TOR/leaf/spine use cases. This product line includes 8101-32H, 8102-64H, 8101-32FH and now Cisco 8111-32EH. The 8100 can be offered as disaggregated systems using a third-party NOS, such as Software for Open Networking in the Cloud (SONiC), in addition to integrated systems with IOS XR.

- Cisco 8200 Series products are fixed port configurations in 1 RU and 2 RU form factors, including Cisco 8201 and Cisco 8202 that can be used for the Data Center Interconnect (DCI) use case to link data centers using IP transport. These are offered as integrated systems with IOS XR.

- Cisco 8800 Series products are modular systems, including Cisco 8804, Cisco 8808, Cisco 8812, and Cisco 8818, that can be used in a variety of use cases such as super-spine, high-capacity DCI, and WAN backbone.

More details can be found in the Cisco 8000 data sheet.

The Cisco 8000 gives our customers the flexibility to choose from a range of form factors, speeds across 100G/400G/800G ports, a variety of client optics, and integrated systems or disaggregated systems using SONiC for open-source networking use cases (see Rise of the Open NOS), all while leveraging the Cisco Automation portfolio.

At Cisco, we meet customers where they are, which means providing solution choices that fit their use cases and requirements to enable the right customer outcomes.

Higher networking capacity is now possible without dramatically higher power bills and inefficient cooling solutions, which lead to a rapidly expanding carbon footprint. Customers can save costs, help the environment, and minimize user frustrations through better experiences. Instead of choosing between going green or scaling big, we’re helping cloud providers do both with Mass-scale Infrastructure for Cloud. Find out more about the Cisco 8000 Series.

Open Compute Project (OCP) Global Summit

The Open Compute Project (OCP) Global Summit is meeting this week (Oct 18th – 20th) in San Jose, and this year’s theme is “Empowering Open”, which we fully support through open collaboration with the open-source community. Two years ago, at the OCP Global Summit, we first introduced Cisco 8000 supporting SONiC with both fixed and modular systems, and continue to collaborate with the OCP community to develop open solutions. For example, at the OCP 2021 Global Summit, Meta and Cisco introduced a disaggregated system, the Wedge400C, a 12.8 Tbps white box system utilizing Cisco Silicon One (see press release).

This year, we are showcasing our 8100 portfolio with 8102-64H, 8101-32FH, and 8101-32H, along with the new 8111-32EH and QSFP-DD800 optics at OCP. We will also be showing SONiC demos with different use cases, such as dual TOR and modular systems using the 8800. Cisco will also be speaking at the Executive Talk, featuring Rakesh Chopra on “Evolved Networking, the AI Challenge”.

Visit our booth at Open Compute Project (OCP) Global Summit this week to see our latest innovations.

1 Source: Cisco internal lab test based on limited sample size and test run-time.