This is a story with two protagonists: low-latency “ABR” encoding (where “ABR” stands for “Adaptive Bit Rate”) and the hot ticket that is “Video Aware Networking.” And believe it or not, it will keep you on the edge of your seat. (Stick with me here.)

It goes like this.

Act 1: As broadcasters, “traditional” content providers, and distributors re-tool their shops to reap the benefits of the online, IP video streaming world, a big design goal is to match the (previously) unparalleled video quality of, well, traditional broadcast. Video streams, distributed in every direction, tightly time-synchronized. So that every TV in the house (my house, your house, your cousin Jimmy’s house) gets the same high-quality pictures and the same audio, at the same time.

Enter “latency,” defined here as the number of seconds that elapse, on average, from the time a TV show is broadcast (again, in the traditional sense) to the time you’re watching it on TV.

Turns out the number is around six. Six seconds. That’s the bar, for anybody aiming to deliver live video on par with “how it ever was,” since the beginning of television.

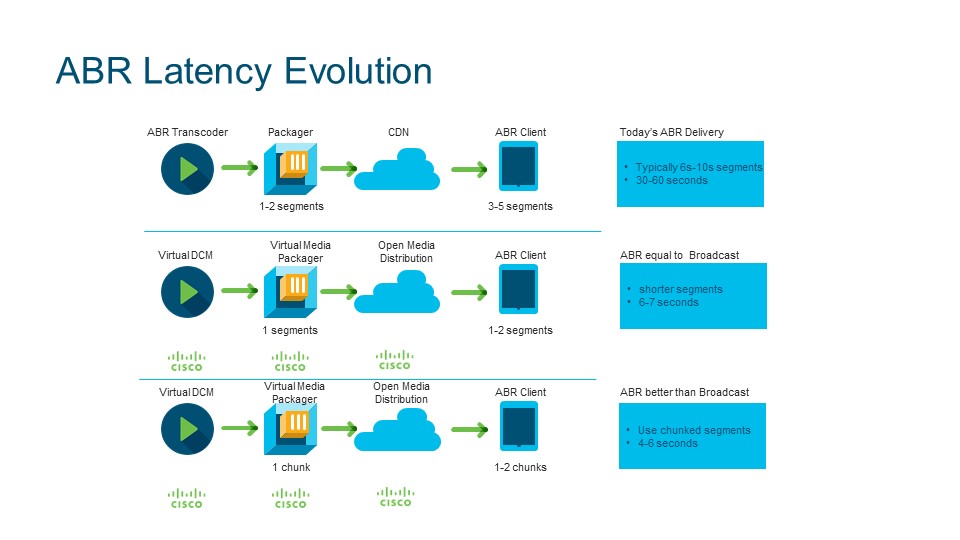

Act 2: Over-the-top video, IP-streaming video, call it what you will: the way we consume video is changing, and part of that change affects the six-second latency target. Turns out that IP delivery introduces additional latency, because the video is processed multiple times and delivered in fragments. (Those same fragments are what get “adapted” in “adaptive bit rate” encoding, by the way.)

Early on in IP-streaming video, the fragments were pooled into buffers, waiting to play out. Most of them were sized into 10-second segments. Client devices (connected TVs, smart phones, tablets) would buffer about three segments — resulting in roughly 30 additional seconds (10 x 3) of latency at the client.

As a result, if you and I were watching the same show, delivered “over-the-top,” you might see it half a minute (or more) before I do.

Apple’s latest HLS spec recommends shorter, six-second segments, but clients still add at least 18 seconds of latency (6 x 3). And that’s before counting the video processing latency added to encode, package and distribute content.
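To make that arithmetic concrete, here is a minimal Python sketch. The function name `client_buffer_latency` and the default of three buffered segments are illustrative assumptions based on the figures above, not part of any player API.

```python
def client_buffer_latency(segment_seconds: float, buffered_segments: int = 3) -> float:
    """Seconds of delay added by a player that waits for N whole segments.

    Assumption: the player buffers `buffered_segments` complete segments
    before it starts playback, as described in the text.
    """
    return segment_seconds * buffered_segments


# Compare the older 10-second segments with the shorter 6-second ones.
for segment_seconds in (10, 6):
    added = client_buffer_latency(segment_seconds)
    print(f"{segment_seconds}-second segments add {added} seconds at the client")
```

Either way, the client alone is well past the six-second broadcast bar, which is why shrinking segments only gets you so far.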

Act 3: Clearly, something was (is) needed to accelerate the process, from the time video leaves the ABR encoder to the time it reaches the client device. One way is known as “Chunked Transfer Encoding,” a feature of HTTP 1.1. Essentially, it lets a video segment be sent as a series of smaller chunks, each of a specific duration. As soon as the first chunk is processed, publication of the segment can begin (like a file being transferred), even before the remaining chunks of that segment are processed. As a result, segments can be sent in near real-time, through the pipeline, chunk by chunk.
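For the curious, here is roughly what that looks like on the wire. This is a minimal Python sketch of HTTP 1.1 chunk framing (each chunk is its size in hexadecimal, a CRLF, the payload, and another CRLF, with a zero-length chunk closing the body). The names `frame_chunks` and `fake_encoder` are hypothetical, and the payloads are placeholder bytes standing in for real media chunks.

```python
from typing import Iterable, Iterator


def frame_chunks(chunks: Iterable[bytes]) -> Iterator[bytes]:
    """Wrap each payload in HTTP 1.1 chunked transfer framing."""
    for payload in chunks:
        if not payload:
            continue  # a zero-length chunk would signal end-of-body too early
        size_line = f"{len(payload):X}".encode("ascii")
        yield size_line + b"\r\n" + payload + b"\r\n"
    yield b"0\r\n\r\n"  # final zero-length chunk marks the end of the body


def fake_encoder(n_chunks: int = 3) -> Iterator[bytes]:
    """Hypothetical stand-in for an encoder emitting media chunks one at a time."""
    for i in range(n_chunks):
        yield f"media-chunk-{i}".encode("ascii")


# Each framed chunk can go out as soon as it is produced; joining them here
# just shows the full body a receiver would see.
wire = b"".join(frame_chunks(fake_encoder()))
print(wire)
```

Because both functions are generators, nothing waits for the whole segment: each chunk is framed and handed on the moment the encoder yields it.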

Another way to reduce latency comes from the Common Media Application Format (CMAF), a new media streaming format that provides visibility into the chunks as they’re being created. That way, every component in the pipeline can know that the chunk it is receiving and forwarding belongs to a specific segment, to be published.

One caveat (or opportunity, really) is that Chunked Transfer Encoding and CMAF streaming capabilities need to be added to the entire pipeline. As in, every component (encoder, packager, CDN) needs to be made aware of the chunks. (Which is where “Video Aware Networking” ties in.)

Act 4: At IBC 2017, we demonstrated our work to squeeze more latency out of IP-streaming video transmissions. And guess what: in our proof of concept, we’re at an end-to-end latency of 5 seconds, as opposed to more than 30 seconds (what!). Here’s how it breaks out:

- Encoder latency reduced to 3 seconds for real-time transcoding and the creation of 10-second segments, composed of 0.5-second chunks

- Packager latency of 1 chunk, at 0.5-second duration

- CDN latency of 1 second

- Client latency of 1 chunk buffer, at 0.5-second duration
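If you want to check the math, the four figures above do add up to the 5-second total. A quick Python sketch (the dictionary keys and variable names are just illustrative labels for the pipeline stages listed above):

```python
# Per-stage latency from the proof-of-concept breakdown, in seconds.
latency_budget_seconds = {
    "encoder": 3.0,   # real-time transcoding and segment creation
    "packager": 0.5,  # one 0.5-second chunk
    "cdn": 1.0,
    "client": 0.5,    # one-chunk buffer
}

total = sum(latency_budget_seconds.values())
print(f"End-to-end latency: {total} seconds")

# A 10-second segment cut into 0.5-second chunks yields this many chunks.
chunks_per_segment = int(10 / 0.5)
print(f"Chunks per segment: {chunks_per_segment}")
```

Note that the client now waits on a single half-second chunk rather than three whole segments, which is where most of the old 30 seconds went.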

There’s end-to-end awareness of CMAF chunks, too: every component in the pipeline is aware of the HTTP chunks, and does its part to reduce overall latency. It’s an open, standards-based implementation (HTTP 1.1 and CMAF) that takes advantage of the underlying “video aware” network to squeeze down latencies and more closely align with the “gold standard” that is traditional broadcast television.

So: As dry-as-a-bone tech stories go, I think this one carries a remarkable amount of sizzle. Don’t you?
