I promised a follow-up in my recent blog about Facebook’s new Bryce Canyon storage platform. While the title of this blog may sound like it questions the new platform’s capabilities, it aims, like the previous blog, to help you understand why the platform is perfectly suited to Facebook’s unique needs.

The first blog focused on storage attributes, which are near and dear to my heart. But the other thing I noticed about Facebook’s new storage platform was its use of two Mono Lake micro-servers. This little micro-server is pretty cool because Facebook uses it everywhere to maximize component reusability. When managing infrastructure at massive scale, component commonality can lower equipment costs and increase operational efficiency.

But while the micro-server is cool, it also has tradeoffs. It’s called “micro” for a reason. It’s a small board with limited real estate, offering only a single-socket configuration for a low-power “system on chip” (SoC) processor, up to four DIMM slots and two M.2 SSD boot drives.

The Mono Lake micro-server is designed to accept a purpose-built Intel Xeon D-1500 SoC processor, with options up to 16 cores and support for up to 128GB of DDR4 memory. This processor line was jointly designed with Intel to reduce power consumption across the massive-scale distributed infrastructure that serves the Facebook web application.

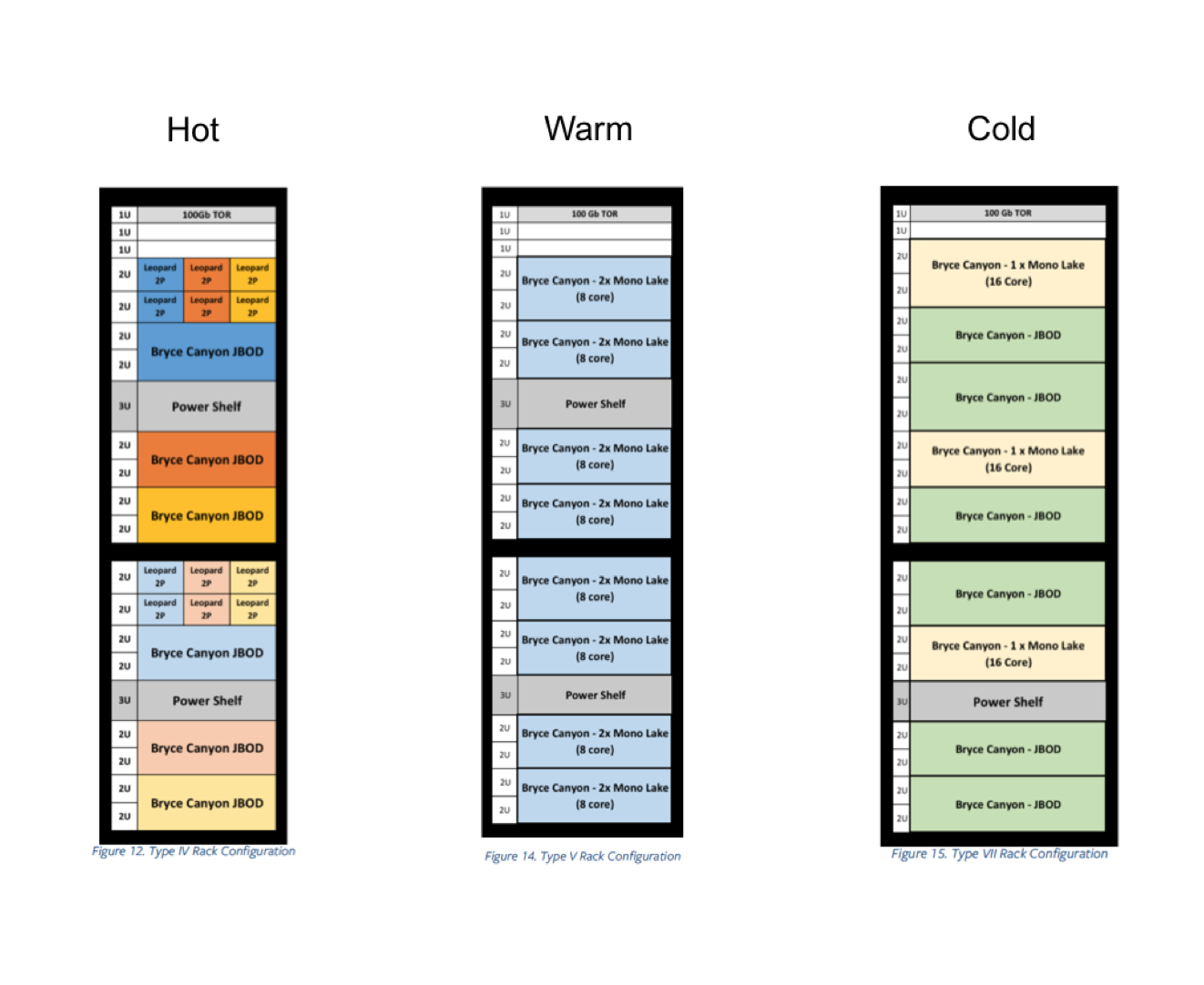

As with most web applications, data at Facebook is tiered across different types of racks depending on how often its users need to access that data, i.e., hot, warm, or cold. Tim Prickett Morgan details this quite well in a blog on The Next Platform. These racks are workload-optimized for each specific tier, using uniquely configured platforms like Bryce Canyon for storage services or the new Tioga Pass server for compute services. The Bryce Canyon hardware specification does a good job of showing the various updated rack configurations and their intended deployment scenarios.

Facebook’s data tiering is far more sophisticated than what you see in general-purpose data centers, even when you are hosting your own web front end. But the workload right-sizing concept is still important, especially if you have data-intensive workloads that need higher core-to-spindle ratios, as you see in hot-to-warm data store environments. The same holds at the other end of the spectrum, when data is cooling down and you want to retain everything in a way that is cost-effective and incrementally scalable. Regardless of data temperature, picking the right infrastructure for the job can help you realize immediate ROI and also deliver tremendous long-term value.

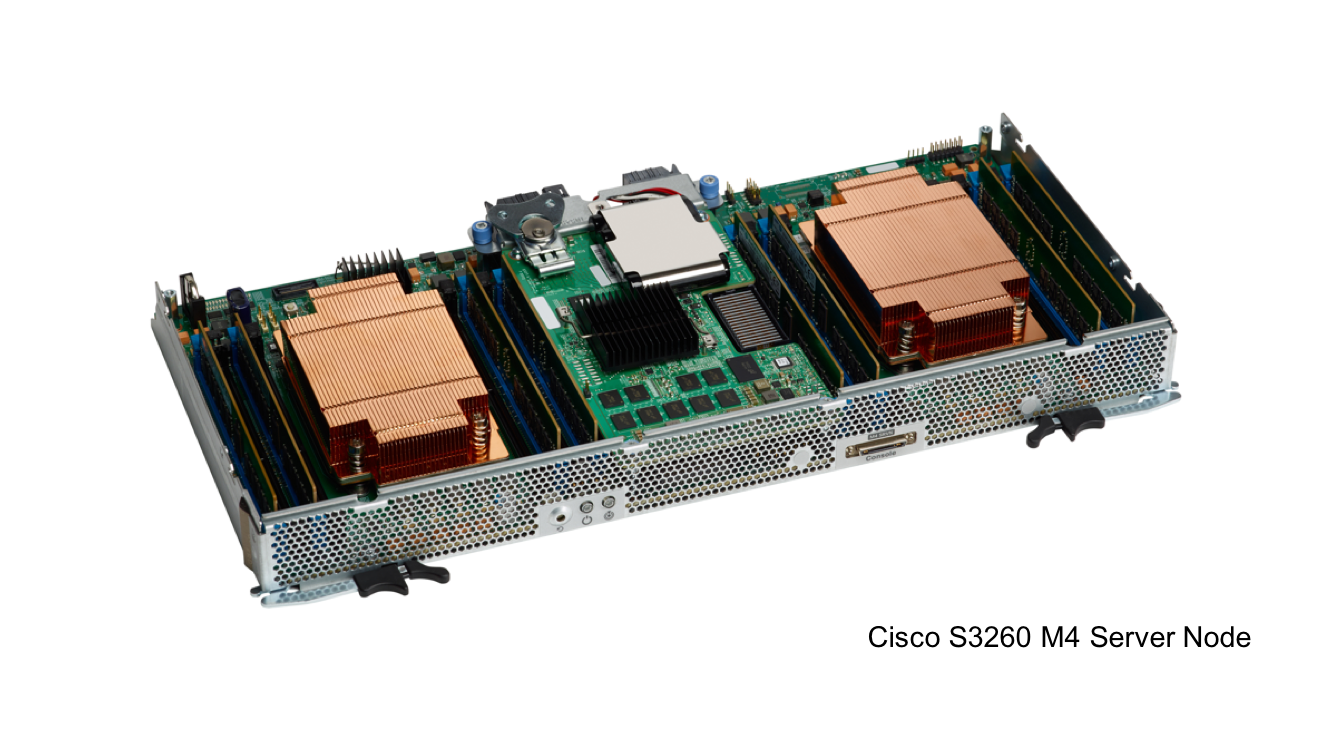

Now let’s compare Bryce Canyon to the Cisco UCS S-Series. The S3260 is similarly a modular system architecture, offering hybrid storage support in single or dual server node configurations. With dual server nodes, a wide range of processors combined with a big footprint of DDR4 memory enables workload right-sizing for the most demanding data-intensive workloads. In a single server node configuration, you can transform the S3260 with expander card options, changing its functionality for increased storage, I/O connectivity or application acceleration with flash memory. These capabilities, combined with the advanced compute and storage automation provided by UCS Manager, make the S3260 the most versatile storage server in the industry, hands down.

Last November we released new M4 server nodes that support Intel’s Xeon E5-2600 v4 processors. These processors are optimized to perform well for a wide range of workloads striking the right balance between performance and power consumption. The S3260 M4 server nodes support processor options ranging from 8 to 18 cores and up to 512GB of DDR4 memory.

A major difference between our S3260 server nodes and Mono Lake is form factor. Our dual-socket architecture, combined with the current Intel Xeon E5-2600 v4 processors, provides up to 36 cores per server node, satisfying the most performance-hungry use cases. This creates a 1.28:1 core-to-spindle ratio per server, which is attractive for demanding workloads like our Big Data and Analytics solutions. As a proof point, check out this great blog from my colleague Rex Backman on recent S3260 big data performance benchmarks validated independently by certified TPC Auditors.
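
For readers who want to check the core arithmetic, here is a minimal sketch. It takes the top 18-core processor option mentioned earlier and two sockets per node. The drive count per node is not stated in this post, so the value of 28 used below is purely an assumption for illustration, and the function names are hypothetical.

```python
def cores_per_node(sockets: int, cores_per_socket: int) -> int:
    """Total cores in one server node."""
    return sockets * cores_per_socket


def core_to_spindle_ratio(cores: int, drives: int) -> float:
    """Cores available per drive (spindle) behind a server node."""
    if drives <= 0:
        raise ValueError("drives must be a positive integer")
    return cores / drives


# Assumption: 28 drives per node. This figure is NOT taken from the post.
ASSUMED_DRIVES_PER_NODE = 28

dual_socket_cores = cores_per_node(sockets=2, cores_per_socket=18)
ratio = core_to_spindle_ratio(dual_socket_cores, ASSUMED_DRIVES_PER_NODE)
print(f"{dual_socket_cores} cores per node, {ratio:.3f} cores per spindle")
```

Plugging in a different drive count or a lower core-count processor shows how quickly the ratio shifts, which is the point of right-sizing a node for a given data tier.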

You can also maximize capacity by scaling down to a single server node configuration with 16 cores per server node. This use case adds a disk expander card to maximize storage capacity and further lower your $/GB. This flexibility is great for the warm storage and active archive use cases we regularly see from our software-defined storage and data protection solution partners.

A great example of this is a recent customer engagement with our data protection partner Veeam. Together we helped Texas Medical Association reduce their backup storage costs by $600K while shortening their backup windows from 12 to 24 hours down to 3 to 5 hours by eliminating costly NAS appliances. This disaggregation of legacy storage systems is becoming more commonplace as best-of-breed storage software comes together with industry-leading data center infrastructure like Cisco Unified Computing System to deliver more value at much lower cost.

Facebook, having been founded as a digital business, might be ahead of the game. The rest of us are going through a major digital transformation and are seeking insights from our own data. To get there, we need to start the journey with products that can be adopted easily, without throwing the baby out with the bathwater.

If you are interested in learning how Cisco Unified Computing System can help you with your data storage needs, here’s a great brochure to get you started. Also be sure to download this free White Paper from Moor Insights & Strategy to learn how active data is creating new business insights.

If you liked this blog, please stay tuned for more on data center storage solutions at Cisco, and be sure to follow me on Twitter.
