When I hear “hybrid” I think about cars: those gas and electric cars that switch to whichever power source is needed when it is needed most, or whichever makes the most economical sense. The switch is not, or at least should not be, noticeable. Having ridden in a hybrid car, I’ve experienced the switchover, and the interesting thing is that the car itself does not change. The controls are the same; the car steers and moves the same way it did before. I don’t have to learn anything new or change the way I drive to keep using the car.

That’s the way hybrid cloud should be, whether I’m using the private cloud in my enterprise or IT-managed provider clouds. Whether the workload is completely in the provider cloud, split between the provider cloud and the private cloud, or completely in the private cloud, it really should make no difference to the workload… or to me.

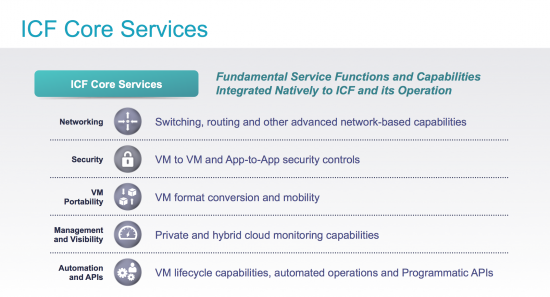

How true are those scenarios, though? As soon as part of the workload moves to the provider cloud, things need to change. Application admins and network admins have surely already been enlisted to figure out how the workload’s applications can function in the provider cloud and still interact with the private cloud. What services does the workload need? How does workload security work? How does workload routing work? How does the hybrid cloud environment affect the workload? How many different cloud provider APIs will need to be learned? These are only a few of the considerations; there can be many more.

But what if you could put some or all of your workload in the provider cloud and not have to change anything? What if the layer 2 network could be extended into the provider cloud? What if the IP addressing of the workload could remain the same? What if the security of the workload could be maintained? What if workloads on different cloud providers could be managed in a homogeneous fashion? What if there was an API for automation of workload lifecycle?

All those “what ifs” are answered by Cisco Intercloud Fabric.

The Intercloud Fabric installation documentation and videos go a long way toward getting you started; however, I wanted to provide a bit more information to help you prepare for installing and configuring Intercloud Fabric and connecting it to Amazon AWS, Microsoft Azure, or both.

First, you’ll need an account with the cloud provider; the account requirements and capabilities differ for each provider.

- AWS

  - Standard AWS account

  - Account policy requirements

    - Full EC2 Access Policy

    - Full S3 Access Policy – if you are going to deploy Windows images

    - Full AWS Marketplace Access Policy – if you are going to deploy the Intercloud Fabric Router

  - To deploy the Intercloud Fabric Router (also known as the Cisco CSR 1000V in AWS Marketplace), you will need to accept the terms for the image. The CSR image to search for in AWS Marketplace is “Cisco Cloud Services Router: CSR 1000V – Bring Your Own License (BYOL)”.

- Azure

  - Standard Azure account

  - Intercloud Fabric Router is not yet deployable in Azure

  - Intercloud Fabric Firewall is not yet deployable in Azure
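Because the AWS policy requirements above depend on what you plan to deploy, it can help to check them before installing. The following is a minimal Python sketch, not an Intercloud Fabric tool. It assumes the “Full” policies correspond to the AWS managed policies `AmazonEC2FullAccess`, `AmazonS3FullAccess`, and `AWSMarketplaceFullAccess`, and the function names are hypothetical.

```python
from __future__ import annotations

# Hypothetical pre-install helper (not part of Intercloud Fabric).
# Assumption: the "Full" policies map to these AWS managed policy names.
EC2_FULL = "AmazonEC2FullAccess"
S3_FULL = "AmazonS3FullAccess"
MARKETPLACE_FULL = "AWSMarketplaceFullAccess"


def required_aws_policies(deploy_windows: bool, deploy_router: bool) -> set[str]:
    """Return the policies the planned ICF deployment needs."""
    required = {EC2_FULL}  # always required
    if deploy_windows:
        required.add(S3_FULL)  # needed to deploy Windows images
    if deploy_router:
        required.add(MARKETPLACE_FULL)  # ICF Router (CSR 1000V) comes from AWS Marketplace
    return required


def missing_aws_policies(attached: set[str], deploy_windows: bool,
                         deploy_router: bool) -> set[str]:
    """Return required policies that are not yet attached."""
    return required_aws_policies(deploy_windows, deploy_router) - set(attached)


if __name__ == "__main__":
    attached = {EC2_FULL}
    # Lists the policies still missing for a Windows + router deployment.
    print(sorted(missing_aws_policies(attached, deploy_windows=True, deploy_router=True)))
```

Accepting the CSR 1000V image terms in AWS Marketplace is a separate step that this sketch does not check.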

Download the attached presentation, “Cisco Intercloud Fabric – Cloud Access Keys,” for a step-by-step guide to getting an AWS or Azure account.

The Intercloud Fabric Release Notes detail all the physical and virtual hardware requirements. You will also find details on which guest OS versions are supported, along with the latest caveats related to Intercloud Fabric.

Currently, the Cisco Intercloud Fabric infrastructure runs on VMware vSphere 5.1 (including Update 1) and 5.5; an Enterprise Plus license is not needed. The infrastructure consists of three virtual appliances:

- Intercloud Fabric Director (ICFD)

- Prime Network Services Controller (PNSC)

- Cloud Virtual Supervisor Module (cVSM)

vCenter is required even if you are deploying on a single ESX host, because Intercloud Fabric needs to connect to a vCenter environment.
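A pre-installation check for these platform requirements might look like the following. This is a hypothetical helper, not an Intercloud Fabric tool. It assumes the vSphere version is supplied as a string such as “5.5” or “5.1 U1”, and it only checks the vSphere release and whether a vCenter is available; `vcenter.example.local` is a placeholder.

```python
from __future__ import annotations

# Hypothetical pre-install check (not an Intercloud Fabric tool).
SUPPORTED_VSPHERE = {"5.1", "5.5"}  # 5.1 includes Update 1


def vsphere_release(version: str) -> str:
    """Reduce strings such as '5.1 U1' or '5.5.0' to 'major.minor'."""
    parts = version.split()
    core = parts[0] if parts else ""  # drop an update suffix like 'U1'
    return ".".join(core.split(".")[:2])


def preflight_problems(vsphere_version: str, vcenter_host: str | None) -> list[str]:
    """Return a list of unmet requirements (empty if none were found)."""
    problems = []
    if vsphere_release(vsphere_version) not in SUPPORTED_VSPHERE:
        problems.append(f"vSphere {vsphere_version} is not a supported release")
    if not vcenter_host:
        problems.append("vCenter is required, even for a single ESX host")
    return problems


if __name__ == "__main__":
    print(preflight_problems("5.1 U1", "vcenter.example.local"))  # []
    print(preflight_problems("6.0", None))
```

The hardware and guest OS requirements in the Release Notes are deliberately left out; they change between releases and are better checked against the current notes.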

From a networking perspective, you’ll need management IP addresses as well as IP addresses for the networks that will be extended to the provider cloud. The ICF infrastructure requires one management IP address per appliance:

- ICFD – 1

- PNSC – 1

- cVSM – 1

Each connection from ICFD to a provider cloud requires the deployment of an Intercloud Extender (ICX), a VM that resides in the VMware environment, and an Intercloud Fabric Switch (ICS), a VM that is deployed in the provider cloud. These two VMs create the secure tunnel over which the layer 2 network is extended. The ICX/ICS pair (the IcfCloud) can be provisioned in standalone mode, as a single VM instance at each end of the layer 2 extension, or in HA mode, with a primary and secondary ICX instance in the enterprise cloud and a primary and secondary ICS instance in the provider cloud.

For IcfCloud connections, the IP requirement is:

- ICX – 1 in standalone mode or 2 in HA mode

- ICS – 1 in standalone mode or 2 in HA mode

Two network services can be deployed in Amazon AWS: the Intercloud Fabric Router and the Intercloud Fabric Firewall. The Intercloud Fabric Router is the Cisco CSR 1000V, and the Intercloud Fabric Firewall is the Cisco Virtual Security Gateway. In the Intercloud Fabric documentation you will see the acronyms CSR and VSG, respectively. As the documentation and messaging for Intercloud Fabric evolve, the names will be standardized as ICF router and ICF firewall. Their IP requirement is:

- ICF router – 2

  - 1 for the management interface

  - 1 for the sub-management interface

- ICF firewall – 1

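The minimum management IP address requirement for an AWS deployment is 8 (3 for the ICF infrastructure, 2 for a standalone ICX/ICS pair, and 3 for the ICF router and ICF firewall).

The minimum management IP address requirement for an Azure deployment is 5 (3 for the ICF infrastructure and 2 for a standalone ICX/ICS pair, since the ICF router and ICF firewall are not yet deployable in Azure).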
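These minimums come from adding up the per-component counts listed above. The Python sketch below is a worked tally under stated assumptions: one IcfCloud connection to a single provider, and, as in the AWS figure above, both the ICF router and ICF firewall deployed in AWS. The HA totals it produces are derived from the per-component counts, not quoted from the documentation.

```python
# Worked tally of management IP addresses, using the counts listed above.
# Assumes one IcfCloud connection to one provider; ICF router and firewall
# are counted for AWS only because they are not yet deployable in Azure.
INFRASTRUCTURE = {"ICFD": 1, "PNSC": 1, "cVSM": 1}
ICF_ROUTER = 2    # management + sub-management interface
ICF_FIREWALL = 1


def management_ips(provider: str, ha: bool = False) -> int:
    """Return the management IP count for an 'aws' or 'azure' deployment."""
    per_end = 2 if ha else 1           # primary (+ secondary in HA mode)
    total = sum(INFRASTRUCTURE.values())
    total += per_end                   # ICX in the VMware environment
    total += per_end                   # ICS in the provider cloud
    if provider == "aws":
        total += ICF_ROUTER + ICF_FIREWALL
    elif provider != "azure":
        raise ValueError(f"unknown provider: {provider!r}")
    return total


if __name__ == "__main__":
    for provider in ("aws", "azure"):
        print(provider, management_ips(provider), management_ips(provider, ha=True))
```

Running it prints `aws 8 10` and `azure 5 7`: the standalone figures match the minimums above, and HA mode adds one ICX and one ICS address.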

With the accounts set up and the requirements known, you’re ready to get started and experience the benefits of hybrid cloud without the hassle.

Once you’ve absorbed all you need to know about Cisco Intercloud Fabric, try it. A 60-day license is available that lets Intercloud Fabric connect to Amazon AWS and Microsoft Azure. Additionally, the license allows deployment of the Intercloud Fabric Router and the Intercloud Fabric Firewall.

You said, “But what if you could put some or all of your workload in the provider cloud and not have to change anything?” Agreed, that would be helpful. Now, let’s move up the value chain and raise our expectations. What if your cloud management platform could automate the end-to-end provisioning process for Intercloud? What if the skill set of the sysadmin were also elevated to focus more on achieving meaningful business outcomes from cloud service deployments? My point: the savvy CIO’s demand for cloud effectiveness should exceed the demand for cloud efficiency, so let’s raise the bar. Maybe that grand scenario seems like hybrid cloud utopia today, but one day it could become commonplace.

The hybrid car example is a good comparison. User experience should always be top of mind when designing any process.

Thanks for this interesting and informative post about ICF.

Is there any planned interoperability with the following components?

– KVM as another hypervisor in the private cloud?

– Google Compute Platform as another public cloud provider?

KVM and Hyper-V will be supported in the March 2015 ICF release. Cloud providers are being added every quarter. Google Compute could become an ICF cloud provider in the future, although no timeline has been set.

Interesting!