In Part 1 of my blog series, I gently introduced the concept of congestion and proposed an analogy between a storage area network and the highway system. In this Part 2, I will describe two congestion scenarios for each and compare them.

Congestion scenarios on the highway system

Given the highway system analogy from Part 1, consider two basic congestion cases:

Highway Scenario 1:

A new shopping center opens near a particular exit and sends out a reasonable number of mailers advertising its grand opening. A crash between two vehicles then occurs at that exit, greatly slowing the rate at which vehicles can exit there. If more vehicles need to exit at that spot than can get past the crash, traffic backs up and congestion builds as vehicles slowly make their way around the crash site.

What happens when there is congestion? Traffic will start to back up at the exit and then back on the highways between various interchanges. The congestion may go back a long distance through many interchanges and affect all vehicles using those highway sections and interchanges. Even vehicles that are not on their way to the new shopping center may be affected. The congestion works its way back to the source of the vehicles (the entrances and interchanges where they enter the highway), slowing the rate at which new vehicles are allowed into the highway system. The rate of new vehicles entering the system at all points on that common route will be slowed to match the rate of vehicles exiting the highway system.

Highway Scenario 2:

A new shopping center opens and sends out a large number of mailers advertising its grand opening and special low low prices! This solicitation causes many people living in end destinations (homes) to drive their vehicles to the new shopping center via the highway system, which is made up of Entrances, Exits, Interchanges, and the highways themselves.

On the way, the vehicles may pass through or by several Interchanges and Exits before reaching the Exit for the new shopping center, so many vehicles may be trying to leave at that one Exit. If the exit is functioning as designed and the rate of vehicles exiting for the shopping center stays below its designed capacity, there will be no problems or congestion at that exit. However, if the exit is functioning as designed but the rate of vehicles exceeds its capacity, congestion will result.

What happens when there is congestion? Traffic will start to back up at the exit and then back on the highways between various interchanges. The congestion may go back a long distance through many interchanges and affect all vehicles using those highway sections and interchanges. Even vehicles that are not on their way to the new shopping center may be affected. The congestion works its way back to the source of the vehicles, slowing the rate at which new vehicles are allowed into the highway system. The rate of new vehicles entering the system at all points will be slowed to match the rate of vehicles exiting the highway system.

Congestion scenarios on a Fibre Channel SAN

In a similar manner, Fibre Channel SANs have the same two congestion scenarios:

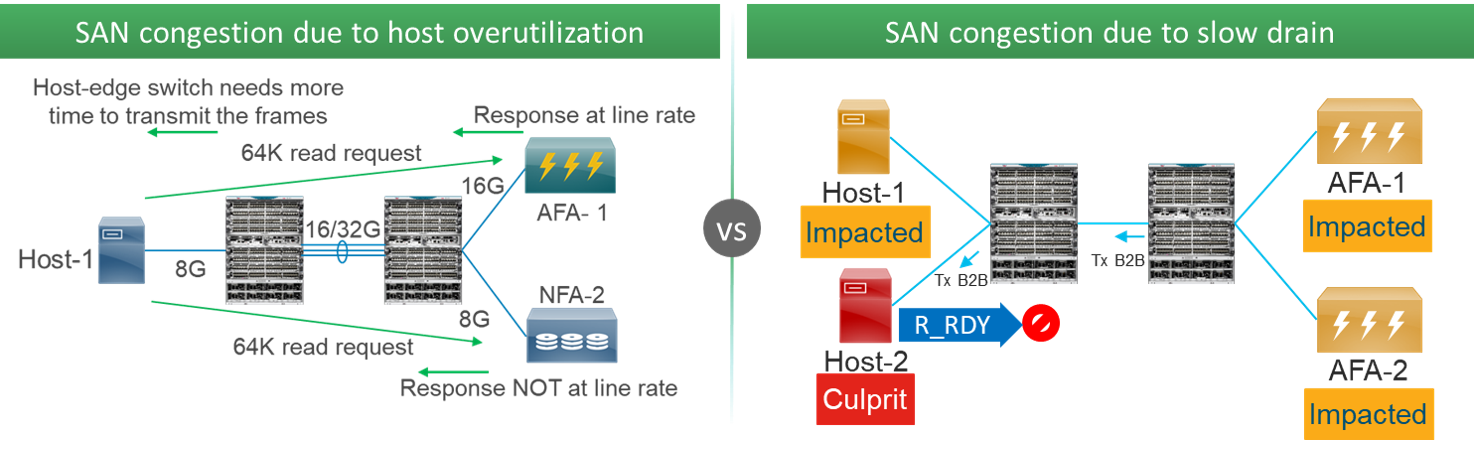

SAN Scenario 1 – Slow Drain:

An end device that solicits data but then prevents the SAN from transmitting that data to it is like an exit where a crash has occurred. The end device does this through buffer-to-buffer flow control, by returning credits (R_RDY primitives) to its switch port more slowly than the port could otherwise send frames. The rate of egress traffic on that port drops, and traffic backs up into the SAN through various switches (interchanges) and ISLs (highway sections). Other initiators and targets using the same switches and ISLs are affected. The root cause is a malfunctioning end device, just as the root cause in Highway Scenario 1 is an exit that is not performing as designed because of the crash.
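
To make the credit mechanism concrete, here is a minimal Python sketch of a single link under buffer-to-buffer flow control. It is a toy model, not how any switch actually implements credits: the `CreditedLink` class, the fixed time ticks, and the one-frame-per-tick transmit rate are all assumptions made for illustration. The only knob is how often the receiving end device returns a credit.

```python
from dataclasses import dataclass


@dataclass
class CreditedLink:
    """Toy model of one FC link under buffer-to-buffer (B2B) credit flow control.

    Illustrative assumptions: time advances in fixed ticks, the switch port can
    put at most one frame on the wire per tick, and the end device frees one
    buffer (returning one R_RDY) every `credit_return_interval` ticks.
    """

    bb_credit: int               # credits the end device advertised at login
    credit_return_interval: int  # ticks between R_RDYs; 1 models a healthy device

    def simulate(self, frames_waiting: int, ticks: int) -> tuple[int, int]:
        """Return (frames_sent, frames_still_queued_in_switch) after `ticks` ticks."""
        credits = self.bb_credit
        in_device = 0  # frames occupying the end device's receive buffers
        sent = 0
        for t in range(1, ticks + 1):
            # The port may transmit only if it has a frame and a credit.
            if frames_waiting > 0 and credits > 0:
                credits -= 1
                frames_waiting -= 1
                in_device += 1
                sent += 1
            # The device periodically frees a buffer and returns a credit.
            if in_device > 0 and t % self.credit_return_interval == 0:
                in_device -= 1
                credits += 1
        return sent, frames_waiting


if __name__ == "__main__":
    healthy = CreditedLink(bb_credit=8, credit_return_interval=1)
    slow = CreditedLink(bb_credit=8, credit_return_interval=10)
    print("healthy device:", healthy.simulate(frames_waiting=1000, ticks=100))
    print("slow-drain device:", slow.simulate(frames_waiting=1000, ticks=100))
```

With the healthy device, the port sends 100 frames in 100 ticks. With the device that returns an R_RDY only every 10 ticks, the same port sends just 17 frames and 983 stay queued in the switch; that backlog is what then spreads upstream through the fabric.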

SAN Scenario 2 – Overutilization:

Both initiators and targets solicit data. Initiators do so with SCSI/NVMe Read commands; targets do so with Transfer Ready requests after receiving SCSI/NVMe Write commands. When an end device solicits more data than can be transmitted to it in a given amount of time, it is trying to ‘overutilize’ its port. This is like the new shopping center sending out a large number of advertisements and ‘soliciting’ all those vehicles. An initiator can do this by issuing many closely spaced SCSI/NVMe Read commands that together request a large amount of data. Unlike in the slow-drain case, the end device is functioning correctly and returns credits promptly; the link to it is simply running at full capacity, so the excess frames queue up and back up into the SAN.
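
The difference from slow drain is easiest to see with some arithmetic. The hypothetical `overutilization` function below compares how much data an initiator solicits in a short window with how much its link can carry in that window. The example numbers are assumptions: 1,000 Reads of 1 MB each issued within 0.25 s, on a link assumed to carry 1,600 MB/s (roughly the nominal one-direction throughput of 16GFC).

```python
def overutilization(reads: int, read_size_bytes: int, window_s: float,
                    link_bytes_per_s: float) -> dict[str, float]:
    """Compare the data an initiator solicits in a window with what its link can carry.

    Illustrative assumptions: all Reads are issued within `window_s`, all of the
    data returns over the same initiator link, and the initiator returns
    credits promptly (no slow drain), so link bandwidth is the only limit.
    """
    solicited = reads * read_size_bytes
    deliverable = link_bytes_per_s * window_s
    return {
        # Above 1.0, more data was solicited than the link can deliver in time.
        "offered_load": solicited / deliverable,
        # Bytes that cannot be sent within the window and must wait in the SAN.
        "backlog_bytes": max(0.0, solicited - deliverable),
        # Time the link needs, at full rate, to deliver everything solicited.
        "drain_time_s": solicited / link_bytes_per_s,
    }


if __name__ == "__main__":
    # 1,000 Reads of 1 MB each within 0.25 s on an assumed 1,600 MB/s link.
    print(overutilization(1000, 1_000_000, 0.25, 1_600_000_000))
```

In this example the initiator asks for 2.5 times what the link can deliver in the window, leaving 600 MB that has to wait in the fabric even though the device returns every credit promptly.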

Summary

Slow drain and overutilization tend to show the same symptoms in the SAN, but their root causes differ. To decide which one you are dealing with, you need to look at what is happening on the switch ports connected to the end devices.
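
As a rough illustration of that decision, the sketch below classifies a switch port using two hypothetical per-port measurements: the fraction of time the port had frames to send but no transmit credits, and its transmit utilization. The metric names and both thresholds are assumptions chosen for illustration, not values taken from any switch command or vendor guidance. A port that is credit-starved while its link is far from full points to slow drain; a port running near line rate points to overutilization.

```python
from enum import Enum


class Diagnosis(Enum):
    SLOW_DRAIN = "slow drain"
    OVERUTILIZATION = "overutilization"
    UNCLEAR = "no clear congestion signature"


def classify_port(zero_credit_fraction: float, tx_utilization: float,
                  credit_threshold: float = 0.10,
                  util_threshold: float = 0.90) -> Diagnosis:
    """Rough heuristic for a switch port facing an end device.

    Inputs and thresholds are hypothetical, illustrative values:
    - zero_credit_fraction: share of the interval the port had data to send
      but no transmit credits (the device was withholding R_RDYs).
    - tx_utilization: share of the link's bandwidth actually used.
    """
    if zero_credit_fraction >= credit_threshold and tx_utilization < util_threshold:
        # The link is not saturated, yet frames are stuck waiting for credits.
        return Diagnosis.SLOW_DRAIN
    if tx_utilization >= util_threshold:
        # The link is saturated; treated as overutilization even if some
        # credit starvation is also present.
        return Diagnosis.OVERUTILIZATION
    return Diagnosis.UNCLEAR


if __name__ == "__main__":
    print(classify_port(zero_credit_fraction=0.4, tx_utilization=0.3))
    print(classify_port(zero_credit_fraction=0.0, tx_utilization=0.98))
```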

If you are asking yourself why I decided to write this two-part blog series, you should definitely read my next blog on SAN congestion. That was my real goal from the beginning, with Parts 1 and 2 serving as an introduction to whet your appetite. In my next blog, I will describe Dynamic Ingress Rate Limiting, a new approach to counteracting slow drain and overutilization in storage networks built from MDS 9000 switches. At least for storage networks, there is hope of preventing congestion, or recovering from it. Keep watching this space. Meanwhile, if you find a good solution for preventing highway congestion, don’t be shy: share it widely.

I’d like to thank Ed Mazurek for inspiring this blog series and contributing to it. After all, he is the one who spent several hours in the car with me. When stuck in congestion, human beings can at least talk to each other. I’m not sure Fibre Channel frames have the same privilege.

Looking forward to the next blog 😉