As most of you may already know, QUIC is a new transport protocol that began as a Google experiment for HTTP/2 and is now being standardized at the IETF. It will also be the default transport protocol for HTTP/3, so it is likely to be very widely deployed in the next few years. Given this expected deployment, we considered it essential to provide an implementation of QUIC in the Vector Packet Processor (VPP), both to measure the performance that we could reach with a full userspace QUIC stack, and as an enabler for more innovation around the QUIC protocol.

If you’re not familiar with VPP, you may want to check out the Fast Data Project website or these other blog posts that describe it.

QUIC has several interesting properties compared to TCP. First, it is an encrypted protocol that uses the TLS 1.3 handshake, so it can be used with the same X.509 certificates that are used today for TLS. Second, QUIC provides an arbitrary number of reliable and ordered byte streams to the application. Because the transport protocol has visibility into the application streams, QUIC avoids the head-of-line blocking that happens with TCP/TLS: data that belongs to one stream is not delayed when packets are dropped on another. Finally, it supports mobility, which allows mobile endpoints to switch networks without breaking their QUIC connections.

Building a QUIC stack in VPP

If you try to use QUIC in an application today, one of the first things you will likely notice is that there are quite a few different implementations of the protocol in several languages, and each of them has a different northbound API.

VPP’s implementation of transport protocols, called the host stack, exposes a socket-like API to ease its consumption by regular Linux applications. It is even possible for some applications to use it unmodified, thanks to a thin translation layer that can be loaded with LD_PRELOAD. For QUIC, we wanted to follow the same philosophy, so we decided to define a new API based on socket concepts to ease the development of QUIC applications.

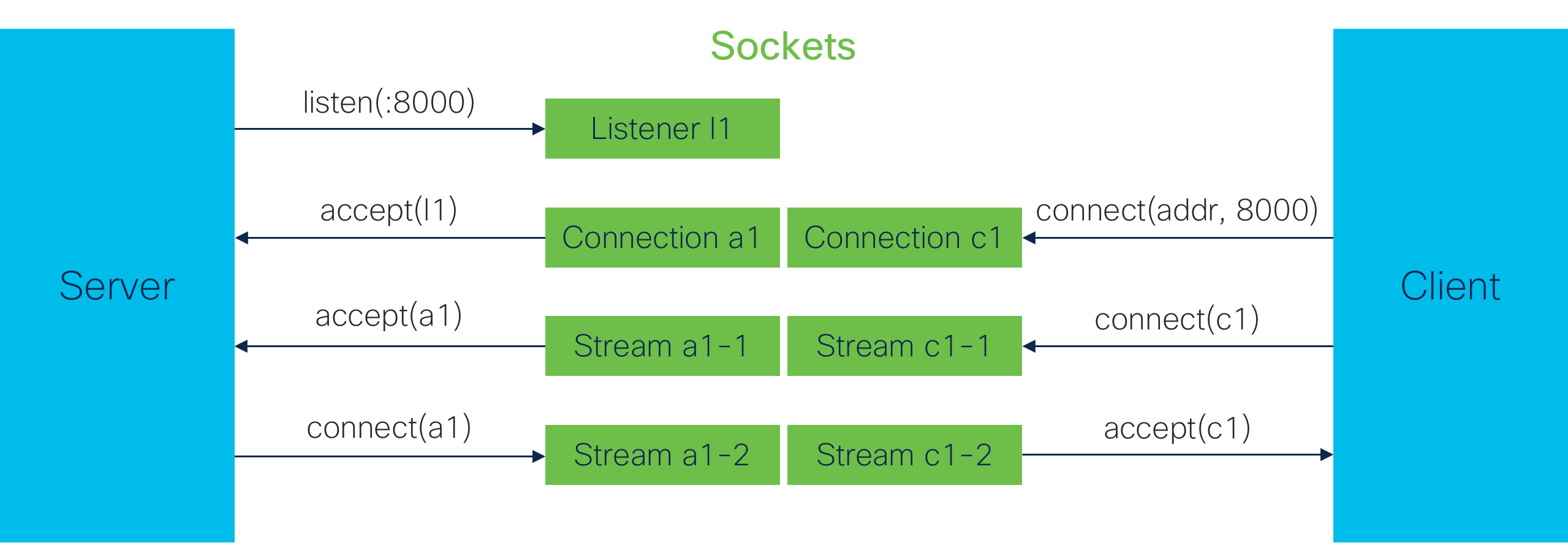

Because of the built-in stream multiplexing layer in QUIC, applications need to handle three types of logical objects: listeners, connections, and streams. These objects have different properties from the traditional UDP and TCP listeners and connections. As a result, the adaptation to socket semantics was not direct. We used three types of sockets to expose the QUIC API, with the following properties:

- Listener sockets: like regular TCP/UDP listeners, they bind to a port and are used to accept incoming connections. Listeners can be closed when the application no longer desires to accept new connections.

- Connection sockets: these sockets are created by connect or accept calls. Unlike TCP or UDP sockets, however, these sockets cannot be used to send or receive data and are only used to manage the streams belonging to the connection.

- Stream sockets: these are the sockets that provide send and receive functions so peers can exchange data. They can be created either by accepting on a connection socket (for streams opened by the peer), or by calling connect on the connection socket to create a new, locally initiated stream. Streams can be closed by either peer at any point during the connection.

Connections can be closed at any point by either peer as well. Closing a connection implicitly closes all the streams that belong to this connection.
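
To make the three socket types more concrete, here is a minimal sketch of the object model in Python. It is a toy illustration only, not the VPP API: the class names, method names, and the loopback behaviour of `send` are assumptions made for this example.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class StreamSocket:
    """Hypothetical stream socket: the only type that can send and receive."""
    index: int                      # toy index, not a real QUIC stream ID
    closed: bool = False
    rx: bytearray = field(default_factory=bytearray)

    def send(self, data: bytes) -> int:
        if self.closed:
            raise RuntimeError("stream is closed")
        # A real stack would hand the bytes to QUIC; this toy loops them back.
        self.rx += data
        return len(data)

    def recv(self, n: int) -> bytes:
        out = bytes(self.rx[:n])
        del self.rx[:n]
        return out

    def close(self) -> None:
        self.closed = True


@dataclass
class ConnectionSocket:
    """Hypothetical connection socket: manages streams, carries no data."""
    closed: bool = False
    streams: List[StreamSocket] = field(default_factory=list)

    def connect(self) -> StreamSocket:
        """Open a new, locally initiated stream."""
        if self.closed:
            raise RuntimeError("connection is closed")
        stream = StreamSocket(index=len(self.streams))
        self.streams.append(stream)
        return stream

    def close(self) -> None:
        # Closing the connection implicitly closes all of its streams.
        self.closed = True
        for stream in self.streams:
            stream.close()


@dataclass
class ListenerSocket:
    """Hypothetical listener socket: bound to a port, accepts connections."""
    port: int
    closed: bool = False

    def accept(self) -> ConnectionSocket:
        if self.closed:
            raise RuntimeError("listener is closed")
        return ConnectionSocket()

    def close(self) -> None:
        self.closed = True
```

Note that `ConnectionSocket` deliberately has no `send` or `recv` method, which mirrors the rule that data only flows on stream sockets.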

QUIC sockets implementation

The VPP host stack has an extensive internal framework, based on the concept of sessions. Host stack sessions roughly match sockets in the POSIX world. Because QUIC is based on UDP, and the host stack already has a UDP implementation, the QUIC implementation in VPP is both a consumer (of the VPP UDP stack) and a provider (of the QUIC protocol) in the host stack.

For the QUIC protocol logic, we did not reimplement everything from scratch. Instead, we chose to rely on the Quicly library. This library has many properties that make it a great fit for VPP:

- It is written in C and actively maintained.

- It focuses on the QUIC protocol logic only, and is very modular (pluggable crypto APIs and congestion controls, no assumptions about packet handling).

- It does not make any assumption about the threading model of its caller.

- It is released under the MIT license.

With these components in place, all that was left to do was to write the glue code between Quicly and VPP, and modify the host stack a little to fit the QUIC stream logic. While doing so, we had to pay attention to several more subtle issues around acknowledgements, memory management, and connection closure handling. In particular, we built our stack so that data is acknowledged only once it has been read by the application.

We also had to be careful not to let the sender overrun the available memory space in the receiver. Fortunately, and this attests to its maturity, QUIC has several options that let each peer control the amount of unacknowledged data sent by the other end, and thus the memory required to store it, both at the stream and connection level.
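
The receiver-side accounting described above can be sketched as follows. This is a simplified model under stated assumptions, not the VPP or Quicly implementation: the `ReceiveWindow` class, its method names, and the limit values are hypothetical. It only shows the idea that credit is bounded per stream and per connection, and is returned to the peer only when the application reads.

```python
class ReceiveWindow:
    """Toy receive accounting with a stream-level and a connection-level limit."""

    def __init__(self, conn_limit: int, stream_limit: int):
        self.conn_limit = conn_limit        # max unread bytes across all streams
        self.stream_limit = stream_limit    # max unread bytes on a single stream
        self.buffered = {}                  # stream -> bytes received, not yet read

    def conn_buffered(self) -> int:
        return sum(self.buffered.values())

    def credit(self, stream: int) -> int:
        """Bytes the peer may still send on this stream."""
        per_stream = self.stream_limit - self.buffered.get(stream, 0)
        per_conn = self.conn_limit - self.conn_buffered()
        return max(0, min(per_stream, per_conn))

    def on_data(self, stream: int, nbytes: int) -> int:
        """Accept at most the available credit; return the bytes accepted."""
        accepted = min(nbytes, self.credit(stream))
        self.buffered[stream] = self.buffered.get(stream, 0) + accepted
        return accepted

    def on_app_read(self, stream: int, nbytes: int) -> int:
        """The application consumed data: only now is credit given back."""
        consumed = min(nbytes, self.buffered.get(stream, 0))
        self.buffered[stream] = self.buffered.get(stream, 0) - consumed
        return consumed
```

With `ReceiveWindow(conn_limit=100, stream_limit=60)`, a peer that tries to push 80 bytes on one stream gets only 60 accepted, and a second stream is then limited to the remaining 40 bytes of connection-level credit until the application reads. A real stack would treat data beyond the advertised limits as a protocol error rather than silently truncating it; the truncation here just keeps the sketch short.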

The code is implemented as a VPP plugin and is available in the VPP repository.

Optimization

Of course, since performance is a key differentiating factor for userspace networking in general and VPP in particular, we did not stop there and spent time benchmarking and optimizing our QUIC stack.

Because QUIC is a new protocol, there are no standard performance testing tools like iperf for QUIC. As a result, we built our own iperf-like benchmarking tool on top of the host stack. It opens a certain number of connections, a certain number of streams per connection, and then tries to send data as fast as possible on each stream. This allows us to measure the throughput and scalability of the QUIC stack.

The first thing we noticed was that most of the time was spent in the crypto code, so we set out to optimize that part. We started by replacing Quicly’s default crypto backend, which relies on OpenSSL, with VPP’s fast native crypto APIs. This simple change yielded a 10-15 percent improvement in performance.

To further improve the performance, we applied a trick that is at the root of VPP’s performance, which is to split the packet processing into elementary steps and apply each step to many packets before moving to the next one. This improves instruction cache efficiency and maintains predictability for data cache usage. For QUIC, we split the packet processing into two steps, protocol processing and encryption/decryption:

- On the RX path, using the Quicly offload API, after the initial packet header is decoded, we decrypt all the available packets before passing them to Quicly for the protocol processing.

- On the TX path, we replace Quicly crypto with no-op handlers that only save the encryption keys and data ranges. The actual encryption is performed for all the packets generated by Quicly at the same time, just before they are passed to the UDP stack.

This optimization brought the total throughput gain to 30 percent, reaching 4.5 Gbit/s per VPP worker.
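
The two-step split on the RX and TX paths can be illustrated with a small Python sketch. Everything here is an assumption made for illustration: `toy_cipher` is a one-byte XOR standing in for a real AEAD, and `DeferredEncryptor` and `rx_batch` are hypothetical names, not Quicly or VPP functions. The point is only the ordering: record ranges first and encrypt the whole batch later on TX, and decrypt the whole batch before protocol processing on RX.

```python
from typing import Callable, List, Tuple


def toy_cipher(key: int, data: bytes) -> bytes:
    """Placeholder for a real AEAD: XOR every byte with a one-byte key."""
    return bytes(b ^ key for b in data)


class DeferredEncryptor:
    """TX side: the crypto hook only records work; flush() does it in one pass."""

    def __init__(self) -> None:
        self.buffer = bytearray()
        self.pending: List[Tuple[int, int, int]] = []   # (key, start, end)

    def on_packet(self, key: int, header: bytes, payload: bytes) -> None:
        # Stand-in for the no-op handler: write plaintext, remember key and range.
        self.buffer += header
        start = len(self.buffer)
        self.buffer += payload
        self.pending.append((key, start, len(self.buffer)))

    def flush(self) -> bytes:
        # Encrypt every recorded range back to back, then hand off the batch.
        for key, start, end in self.pending:
            self.buffer[start:end] = toy_cipher(key, bytes(self.buffer[start:end]))
        out = bytes(self.buffer)
        self.buffer.clear()
        self.pending.clear()
        return out


def rx_batch(packets: List[bytes], key: int, header_len: int,
             process: Callable[[bytes], bytes]) -> List[bytes]:
    """RX side: decrypt all packets first, then run protocol processing."""
    plaintexts = [toy_cipher(key, p[header_len:]) for p in packets]   # step 1
    return [process(p) for p in plaintexts]                           # step 2
```

In this sketch, `on_packet` is called once per generated packet and `flush` once per batch, so the cipher loop runs over all packets consecutively instead of being interleaved with protocol logic.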

Takeaways

Going through this exercise allowed us to reach our goal of providing a fast and fully userspace QUIC stack. It showed that VPP has reached a maturity where its internal APIs allow rapid optimization of new components with significant results. It also demonstrated that the host stack is flexible enough to easily accommodate new protocols.

We now have a clearer understanding of what the bottlenecks are and will continue to optimize this stack. Keep an eye out for some new innovative applications on the horizon as well!

If you would like to experiment with this yourself, check out the example application code, and feel free to reach out to vpp-dev@lists.fd.io if you have any questions.

You know what UDP is not good for? Poor quality internet connections that drop packets due to corroded lines, poor signal strength, etc.

Hi Jim,

Although plain UDP is indeed sensitive to poor network conditions, multiple studies have shown that QUIC actually performs better than TCP in lossy or slow networks. See for instance:

https://eng.uber.com/employing-quic-protocol/

https://www.fastly.com/blog/why-fastly-loves-quic-http3

https://blog.apnic.net/2019/09/25/does-tcp-keep-up-the-pace-against-quic/

https://static.googleusercontent.com/media/research.google.com/en//pubs/archive/46403.pdf

http://www2.cs.uh.edu/~gnawali/papers/quic-globecom2016.pdf

https://www.researchgate.net/publication/318801580_QUIC_Better_for_what_and_for_whom

https://arxiv.org/pdf/1906.07415.pdf