Update July 9, 7:55 p.m. EST: GitHub removed the DeepNude source code from its website. Read more here.

After 4 days on the market, the creator(s) of DeepNude, the AI that “undressed” women, retired the app following a glut of backlash from individuals including leaders of the AI community.

Although DeepNude’s algorithm, which constructed a deepfake nude image of a woman (not a person; a woman) based on a semi-clothed picture of her, wasn’t sophisticated enough to pass forensic analysis, its output was passable to the human eye once the company’s watermark over the constructed nude (for the free app) or “FAKE” stamp in the image’s corner ($50 version of the app) was removed.

However, DeepNude isn’t gone. Quite the opposite: it’s back as an open source project on GitHub, making it more dangerous than it was as a standalone app. Anyone can download the source code. For free.

The upside for potential victims is that the algorithm is failing to meet expectations.

The downside of DeepNude becoming open source is that the algorithm can be trained on a larger dataset of nude images to increase (“improve”) the resulting nude image’s accuracy level.

If technology’s ability to create fake images, including nudes, well enough to fool the human eye isn’t new, why is this significant?

Thanks to applications such as Photoshop and the media’s coverage of deepfakes, if we don’t already question the authenticity of digitally-produced images, we’re well on our way to doing so.

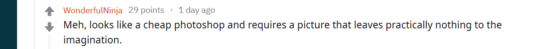

In the below example, Photoshop is used to overlay Katy Perry’s face onto Megan Fox’s (clothed) body:

DeepNude effectively follows the same process. What’s significant is that it does so very quickly via automation. And because it doesn’t overlay one person’s face onto one other person’s body, but is instead a machine learning algorithm trained on a dataset of over 10,000 images of nude women, reverse-engineering an output image into its component parts would be nearly impossible.

All this raises the question: how should we respond? Can we prevent victimization by algorithms like these? If so, how?

What role does Corporate Responsibility play? Should GitHub, or Microsoft (its parent company), be held accountable for taking down the DeepNude source code and implementing controls to prevent it from reappearing until victimization can be prevented?

Should our response be social? Is it even possible for us to teach every person on the planet (including curious adolescents whose brains are still maturing and may be tempted to use DeepNude indiscriminately) that consent must be asked for and given freely?

Should we respond legislatively? Legally, creating a DeepNude of someone who didn’t provide consent could be treated as a felony similar to blackmail (independent of the fake image’s use). The state of Virginia thinks so. Just this month, it passed an amendment expanding its ban on nonconsensual pornography to include deepfakes.

If the response should be legislative, how should different countries and regions account for the global availability of DeepNude’s source code? If it becomes illegal to have or use the algorithm in one country and not another, should the code be subject to smuggling laws?

Given that an AI spurred this ethical debate, what about a technological response? Should DeepNude and other AIs be expected or required to implement something like facial recognition-based consent by the person whose image will be altered?

What do you think? How should we as human beings respond to DeepNude’s return, and the moral hazards it and similar AIs create? How should we protect potential victims? And who is responsible for doing so? Join the conversation and leave your thoughts below!

Sorry, but I think it's hilarious to see articles like this with people so outraged over a fake photo! Do it to men too, we don't care, lol. As is usual for the modern leftist, their answer is to "Ban all the things!". You simply can't stop it and only a control freak would even try. It is no surprise whatsoever that one of AIs first uses is for porn. The same was true for the first photo and video cameras. People have been making fake sex pics since before computers, by clipping out heads from photos and pasting them on nude bodies. You literally want to stop a natural progression of something that has been around for MANY decades! But just try to ban it, you'll only make it more popular. Alcohol Prohibition anyone? How is that Drug War going? You people never learn, do you? "But muh consent!" Get a grip on reality, it is filled with countless breaches of consent. Do you consent to be filmed and listened to on a daily basis by your government? Good luck enforcing that consent, lol. Some would say that you need a European view of nudity, but I deeply disagree. It's perfectly fine to be offended and modest, but it's no where near fine to control the actions of others, or to demand that they live by your puritanical standards. Anyone else notice how the left has turned into a bunch of prudes who want to ban everything? Funny that.

No response is needed, there are no moral hazards and the only "victims" are people who make themselves a victim by being bothered by what other people do with readily available content. But since criticism is derogatory, I expect this will be censored too. 1984 was not a guidebook.

Felony action for a fake photo? What if someone cuts out a photo of your face and glues it into a magazine? What if I drew a stick figure without clothes, and then wrote your name above it? Is that a felony? What if there’s no nudity, but the fake picture created makes you uncomfortable, is there a crime there, too?

If anything, this software puts into question EVERY nude photo that’s out there. Flooding the internet with fake nude pictures makes “real” nude pictures valueless. As it becomes more prominent, the general public will just assume all nudes are fake.

Plus, we’re talking about charging teenage boys with a felony for curing sexual curiosity with software. Ridiculous.

This is a civil issue akin to defamation and should be treated as such.

I was completely set aghast when I first heard about this. While I for one would never put nude images of myself online, knowing that someone could take any public image of me in a bikini (and I'm sure fully clothed as the algorithm "improved") is a scary thought. Not only could it ruin one's career (most corporations have an image to uphold – including current and future employees), I certainly don't want someone imagining what I look like naked … Let alone have a potentially accurate image!

And while this algorithm has a ways to go before it can 100% fool the naked eye (the example image adding breast size for example), the psychological effects on an unsuspecting "victim" could be devastating.

As to corporate responsibility – we already know this was part of that. How? Because it violated GitHub's usage "laws", which is why it was taken down. Will it be put up again? Likely. But for now, we celebrate the wins.

Is the response social? You bet! The biggest changes happen because of the loudest (and most repetitive) advocates.

Should our response be legislative? Absolutely. We already have laws in place that are in alignment with this being against the law. But as we have seen throughout time, as technology and the human race have progressed, we must amend laws to be able to apply to the times. America's forefathers had no understanding of today's weapons, but wrote the Constitution in such a way as to cover the future they could see. But political pundits, angry citizens, and lobbyists (not to mention horrific events) have caused nations to review and even redefine said laws.

It would be wonderful if everyone adhered to "consent culture" – but I don't see this happening any time soon. We would have a lot less people getting hurt and jailed, for sure. And technology like DeepNude wouldn't exist.

Now – regarding some of the prior responses to this article …

"What if someone cuts out a photo of your face and glues it into a magazine?" There are already laws against using one's images without consent.

"What if there's no nudity but the fake picture created makes you uncomfortable?" Once again, CONSENT is the key issue here. If you gave your consent for someone to use your photo in whatever way they saw fit, then you gave them the right to do so.

I also disagree that putting fake nudes on the internet makes real ones "valueless" – because those who are seeking nudes will find what they like regardless. Real or not. (Or they'll make their own from the content they do find.)

As to charging teenage boys with a felony for "curing sexual curiosity"? No no … That's not how this works. Once again – consent always plays a part. This kind of thinking is how judges allow rapists to have little to no consequence after violating another person's bodily autonomy. If these "teenage boys" want to "cure their sexual curiosity", there are other ways to do so without violating someone else.

As to "the only 'victims' are people who make themselves a victim by being bothered by what other people do"? This illogical statement opens the doors to someone breaking into your home and stealing your prized possessions … Or even murder because hey – you're not alive to complain. So this "argument" is moot.

And regarding "European view of nudity" – while they have nude beaches, that is not the same as what is happening here.

And finally – to "it's no where near fine to control the actions of others"? You have mentioned several times something akin to this. But if we followed your logic, there would be no laws. Period. No laws protecting anyone from harm by government/insurance/etc, no laws stopping someone from murdering you or breaking & entering, no laws to provide civil peace … None of it.

@Kassandra, I love how people like you will use selective quoting and no critical thinking to suit your narrative. People like me are so used to it, that we outright expect it. You conveniently left out "…with readily available content." from the quote. That allows you to make the ridiculous connection to murder, which is also something I've come to expect. Being bothered by fakes, is like being bothered with you becoming a Meme on the internet for doing something that people find funny. You might find it embarrassing and disrespectful, but in no way should it be criminal. According to people like you, Memes should be illegal without consent. Posting screenshots of any private conversation, no matter how "vile" they might be to you, should be illegal. Posting videos on youtube of people doing hilarious things in public, without their consent, would be illegal. The Wayback Machine would be illegal, since it archives things without consent. In fact, you quoting me would be illegal, because I didn't give consent! The consent argument is completely absurd. The reality is, your consent ends once you consent to putting the information into the public sphere. So yes, you in fact create your own victimhood by not recognizing this simple, well known fact. I've known it as far back as the mid 90s, when first getting on the internet! Which is why seeing people be so outraged about it now, is hilarious. It's like the 90s all over again, as if people had never used the internet before.

Furthering the lack of critical thinking, is my next quote about controlling the actions of others. Again, you took it to an extreme to fit your narrative, since my wording gave you the opening. A critical thinker would have recognized my obvious meaning, thanks to the references to Alcohol Prohibition and the Drug War. You want to control what people do with publicly available information, which as I've mentioned above, is completely absurd. You further prove this, by stating that there are "already laws against using one's images without consent.", which in no way applies to people cutting and pasting magazine images, or even online images! It is freedom of expression, and as I stated above, is done on a regular basis without absurd repercussions. Control freaks are already in charge of social media platforms and they will be paying the price for their thought policing soon enough.

As to ruining a person's career, congrats, you just mentioned something that actually does need to change! No employer should be allowed to fire you for the actions of others. Again, this would be akin to being fired for satire, where someone did one of those silly animations of putting your head on someone's body. A totally innocent example would be someone uploading a Christmas animation of your head on the head of one of Santa's Elves, helping to make the toys, with Trump's head on Santa. Then as usual, some NPC leftists get outraged, "Orange man bad!", and control freak YouTube pulls the video, but not before your employer sees it and fires you, since the SJWs targeted your company and "damaged" their image. Sadly, that's not even an unrealistic example these days.

Also, a "European view" is not limited to nude beaches. They have a completely cavalier attitude about nudity in general. They are the type to upload nude pictures of themselves to their facebook album and not give it a second thought. They would laugh at the very idea of being so offended over someone making a fake nude of them. While I don't share their views, I am much more of a Libertarian, so I don't take offense easily. I know how people are, especially men. For example, it is a fact that men masturbate to your bikini pictures. They did it as teens with underwear catalogs and some of them never stopped. You might be "completely set aghast" by them doing that without your consent, but too bad, that's life. You posed half naked, and not surprisingly, they got horny. If you want to be in control, then simply don't consent to putting your images in the public sphere. I sure don't! I don't put a single image of myself online and family members were told that I don't want to be on there, adding to the social system's facial recognition database. They might break my consent, which wouldn't make me happy, but I'm certainly not going to pursue legal action against them for it!

This is an important post. Technology has effects in real life and as a society we want to use it to create clear benefits.

In our system of values, DeepNude's positive effects are unclear, selfish at best. The downsides though can be devastating: harassment, humiliation, degradation... How would it feel if it happened to you or people you love?

As a maker of technology, you always have to consider the balance between positive and negative impacts.

Thank you for adding your thoughtful remarks to the conversation, Mark! 100% agree that the technologies we develop need to create clear benefits.

This app is vile, but it's undeniable that sexual fantasies are a huge market ($100B?), and that is not going to change. "Creativity"-enhancing tools like these will come back in some form, probably for pros at first.

Thanks for writing this! The author of the app clearly didn't think about whether it SHOULD have been built. What a waste of talent.

Thank you for sharing this post. As noted by some with great power we have great responsibilities. Applications like this should never be promoted. I'm glad that GitHub took the appropriate action of removing it from the repositories. Obviously, others who have already downloaded this very irresponsible creation of software and will share it everywhere else. Additional training and cultural awareness are needed. It requires education and creative ways of coaching others in understanding respect and consent. Typically, the survivors are continuously victimized. Practicing and imaging by creating these types of software create the delusion that predatory behaviors are a normal thing to do. Keep up the great work in building awareness. This is what great advocacy is about, where people value social justice in all aspects of people's lives.

Thank you for adding your thoughts to the conversation, Maybelyn! IRT your statement "others who have already downloaded this very irresponsible creation of software and will share it everywhere else," a handful of those people have attempted to share links to download the source code in the comments of this blog.

Great, but it is still available on some websites online like [url removed by admin] where you can download it and its source code as well.

I believe that there are not enough reasons to ban this software, but it cannot be removed totally from the internet anyway. I have found this page [url removed by admin] where you can download it and its source code as well.

A legislative response is not what's needed here; a social one is. Surely there are many more unpopular applications which can do the same thing, and applications like Photoshop can also be used for activities like this. It is the awareness and mentality of people which needs to be changed, which should be done through a social response. Good job posting the blog, it's a start to spreading awareness about this issue.

Thanks for being part of the conversation and adding your perspective!

Can Neural Network help spot DeepFake Photos?

https://technoidhub.com/machine-learning/can-neural-network-help-spot-deepfake-photos/17159/

Nudity is not pornography.

Bigots who lobbied to take DeepNude down are totalitarian morons, way more dangerous than an app which does NOT create a picture of a real nude woman, but roughly what a painting is about.

Censorship is at its highest on the internet. Now it's time to start taking censors down.