Intelligent machines have helped humans achieve great things. Machine Learning (ML) and Artificial Intelligence (AI), combined with human experience, have delivered quick wins for stakeholders across multiple industries, with use cases ranging from finance and healthcare to marketing and operations. There is no denying that ML and AI have enabled faster product innovation and a richer user experience. But hold that thought for a moment. Have you ever wondered why ML/AI projects can be cumbersome to deploy? How do we protect a model from tainted data or DDoS attacks? Do we have a framework for model governance and performance evaluation after a model is deployed?

According to Algorithmia’s survey, 22% of respondents take one to three months to deploy a new ML/AI model into production, and a further 18% take more than three months. These delays reduce throughput in the long run. According to a survey conducted by IDC in June 2020, 28% of Machine Learning projects never make it to production. Considering the importance of ML/AI technologies and their varied use cases, these results are disheartening.

Let’s take a step back and understand why some organizations take so long to package and deploy ML models into production. The first problem is that Machine Learning is not just code; it is a combination of code and complex real-world data. ML models are fed data from real-world scenarios, and the unpredictable nature of that data makes their behavior hard to anticipate. The behavior of an ML/AI model depends on a combination of factors such as data dimensionality, data density, and prediction time. Some of these factors change over time and cannot be predicted in advance.

Another major reason organizations find it challenging to scale and deploy ML/AI models into production is the problem of plenty. Yes, you read that right. A plethora of tools is available on the market for developing, training, testing, and deploying models. As a result, teams tend to pick different toolsets to suit their own needs without thoroughly checking whether those tools are compatible with one another. Since ML/AI models are deployed in highly dynamic production scenarios and are expected to learn and evolve over time, organizations need to carefully design their data pipelines for pre-processing and data crunching. Given this complexity, later entrants to the industry may focus more on consuming efficient pre-built models than on reinventing the wheel.

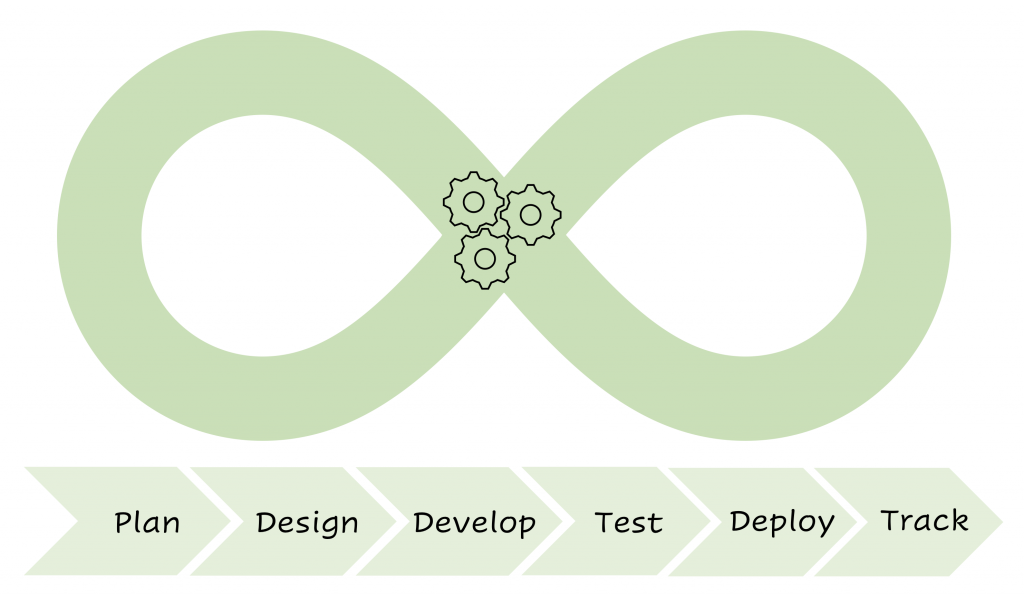

The solution to most of the above-mentioned challenges is Machine Learning Operations (MLOps). MLOps is a combination of processes, emerging best practices, and underpinning technologies that provide a scalable, centralized, and governed means to automate and scale the deployment and management of trusted ML applications in production environments. MLOps is a natural progression of DevOps into the field of ML and AI, providing a framework that houses multiple capabilities under a single roof. While it inherits DevOps’ focus on compliance, security, and management of IT resources, the real emphasis of MLOps is on consistent model development, deployment, and scalability.

Once a given model is deployed, it’s unlikely to continue operating accurately forever. Like machines, models need to be continuously monitored and tracked over time to ensure that they’re still delivering value to ongoing business use cases. MLOps allows us to intervene quickly when a model degrades, thus ensuring improved data security and enhanced model accuracy. The workflow is similar to the continuous integration (CI) and continuous deployment (CD) pipelines of modern software development. MLOps adds continuous training, which is possible because models are continually evaluated for accuracy and can be re-trained on new incoming datasets.
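To make the monitoring idea concrete, here is a minimal sketch of a rolling accuracy check that flags a degraded model for re-training. The class name `AccuracyMonitor`, the window size, and the threshold are illustrative assumptions and not part of any particular MLOps product; a real pipeline would typically track more metrics than plain accuracy.

```python
from collections import deque


class AccuracyMonitor:
    """Hypothetical helper: rolling accuracy over the most recent predictions."""

    def __init__(self, window: int = 100, threshold: float = 0.8):
        self.threshold = threshold
        # Keep only the last `window` outcomes (True = correct prediction).
        self.outcomes = deque(maxlen=window)

    def record(self, predicted, actual) -> None:
        """Store whether a single production prediction matched the ground truth."""
        self.outcomes.append(predicted == actual)

    def accuracy(self) -> float:
        """Accuracy over the current window; 1.0 when nothing has been recorded yet."""
        if not self.outcomes:
            return 1.0
        return sum(self.outcomes) / len(self.outcomes)

    def needs_retraining(self) -> bool:
        """Flag the model only once the window is full and accuracy is below threshold."""
        window_full = len(self.outcomes) == self.outcomes.maxlen
        return window_full and self.accuracy() < self.threshold


# Illustrative data: two correct and two wrong predictions.
monitor = AccuracyMonitor(window=4, threshold=0.75)
for predicted, actual in [(1, 1), (0, 0), (1, 0), (0, 1)]:
    monitor.record(predicted, actual)

print(monitor.accuracy())          # 0.5
print(monitor.needs_retraining())  # True
```

Waiting for a full window before raising the flag is a deliberate choice in this sketch: it avoids triggering re-training on the first few noisy predictions after a deployment.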

MLOps helps in monitoring models from a central location, regardless of where a model is deployed or what language it is written in. It also provides a reproducible pipeline for data models. The advantage of a centralized tracking system with well-defined metrics is that it becomes easier to compare multiple models across various metrics, and even to roll back to an older model if we run into performance issues in production. It also provides capabilities for model auditing, compliance, and governance with customized access control. Hence, MLOps is a boon for groups that are finding it hard to push their ML models into a production environment at scale.
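The comparison and rollback ideas above can be sketched as a tiny in-memory registry. Everything here is a hypothetical illustration: `ModelRegistry`, its method names, the version labels, and the metric values are assumptions made for the example, and a real MLOps platform would persist this information and add access control.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class ModelRegistry:
    """Hypothetical central registry of model versions and their metrics."""

    metrics: Dict[str, Dict[str, float]] = field(default_factory=dict)
    deployed: List[str] = field(default_factory=list)  # promotion history, oldest first

    def register(self, version: str, **metrics: float) -> None:
        """Record the evaluation metrics for a model version."""
        self.metrics[version] = dict(metrics)

    def best(self, metric: str) -> str:
        """Return the version with the highest value for the given metric."""
        return max(self.metrics, key=lambda v: self.metrics[v][metric])

    def promote(self, version: str) -> None:
        """Mark a registered version as the current production model."""
        if version not in self.metrics:
            raise KeyError(f"unknown model version: {version}")
        self.deployed.append(version)

    def rollback(self) -> str:
        """Revert production to the previously promoted version."""
        if len(self.deployed) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self.deployed.pop()
        return self.deployed[-1]

    @property
    def production(self) -> Optional[str]:
        """The version currently in production, if any."""
        return self.deployed[-1] if self.deployed else None


# Illustrative versions and metric values.
registry = ModelRegistry()
registry.register("v1", accuracy=0.91, f1=0.88)
registry.register("v2", accuracy=0.93, f1=0.85)
registry.promote("v1")
registry.promote("v2")

print(registry.best("accuracy"))  # v2
print(registry.best("f1"))        # v1
print(registry.rollback())        # v1
print(registry.production)        # v1
```

Keeping the promotion history as an ordered list is what makes the rollback trivial here: the previous production model is always the entry just before the current one.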

As of now, the MLOps market is still at a nascent stage, but considering the importance of ML/AI technologies and their varied use cases, it is predicted to exceed $4 billion within a few years. MLOps brings together the best of the iterative development involved in training ML/AI models, making it easier to package and deploy models to production for different consumer use cases. Despite being a relatively new framework, MLOps is expanding its market at a rapid pace.

Very Informative and beautifully explained!

Glad to know that you liked it. Thank you.

I have been hearing about MLOps a lot recently, and this framework makes so much sense for modern industry needs. Great article!!

Thank you.

Nicely written Utkarsh!

Thank you, Hector. Grateful for all your support and motivation.

Informative, considering MLOps is expanding its market at a very rapid pace.

Well explained!!

Thank you Sayali. Glad to know you liked it.