Written by Vasanta Rao, Technical Marketing Engineer, Transceiver Modules Group (Cisco Optics)

Understanding FEC Part 1: The Basics of FEC

In part one of this two-part blog post, we review the basics of forward error correction (FEC). In part two, we will discuss implementation and the impact of FEC on performance.

Today’s insatiable demand for bandwidth is pushing network speeds higher and higher. Unfortunately, the faster the data rate, the greater the likelihood of transmission errors. As a result, forward error correction (FEC) has become an essential tool for almost any network implementation, particularly those operating at 100G and above. FEC uses a combination of specialized algorithms and redundant data bits to detect and correct a certain number of errored bits in each data block. To learn more about the specifics, read on.

What is FEC?

FEC is a digital process for detecting and correcting errors in a bitstream by appending redundant bits and error-checking code to the message block before transmission. The error-checking code contains sufficient information on the actual data to enable the FEC decoder at the receiver to reconstruct the original message.

FEC uses n-symbol codewords consisting of a data block that is k symbols long and a parity block (the code and redundant bits) that is n-k symbols long (see figure 1). We identify an FEC code with an ordered pair (n,k). The type and maximum number of corrupted bits that can be identified and corrected are determined by the design of the particular error-correcting code (ECC), so different ECCs are suitable for different network implementations and performance levels.
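To make the (n,k) notation concrete, here is a minimal Python sketch that derives the parity length, code rate, and overhead from the ordered pair. The `BlockCode` class and its property names are hypothetical helpers invented for this illustration, and the two example pairs, (528,514) and (544,514), are chosen here as sample inputs rather than taken from the text above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BlockCode:
    """An (n, k) block code: k data symbols plus n - k parity symbols."""
    n: int  # total symbols per codeword
    k: int  # data symbols per codeword

    def __post_init__(self):
        if not 0 < self.k < self.n:
            raise ValueError("expected 0 < k < n")

    @property
    def parity_symbols(self) -> int:
        # Length of the parity block, in symbols.
        return self.n - self.k

    @property
    def code_rate(self) -> float:
        # Fraction of each codeword that carries user data.
        return self.k / self.n

    @property
    def overhead(self) -> float:
        # Extra symbols sent per data symbol.
        return self.parity_symbols / self.k


# Illustrative inputs only (assumed for this sketch).
for code in (BlockCode(528, 514), BlockCode(544, 514)):
    print(
        f"({code.n},{code.k}): parity={code.parity_symbols} symbols, "
        f"rate={code.code_rate:.4f}, overhead={code.overhead:.2%}"
    )
```

A longer parity block lowers the code rate, which is the price paid for being able to correct more errors.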

The choice of FEC code to use in a given link is determined by a number of sources. Certain standards, such as those created by the IEEE, call out specific FEC codes. Module manufacturers must comply with these requirements in order to meet the standards. Multi-source agreements (MSAs) may also provide guidelines for which FEC code to use, but they are not necessarily binding. In certain cases, module manufacturers may elect to use a higher-performing FEC code than specified in order to differentiate themselves from the competition.

When the optical transceiver is operated in a Cisco host, the default FEC is enabled automatically. The host software may also support other FEC codes for specific application protocols, and users can enable these if their application calls for them.

FECs have limitations

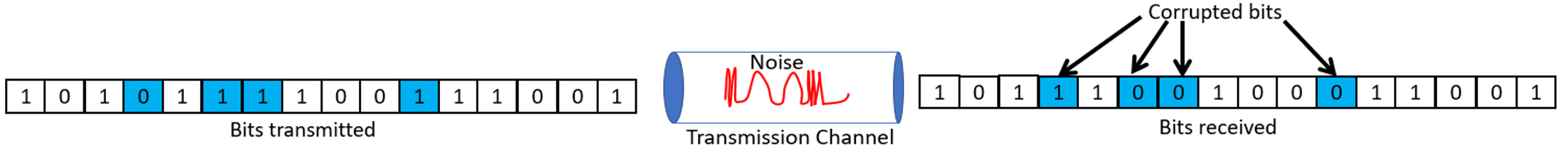

A transmission error occurs when a bit flips from a 1 to a 0 or from a 0 to a 1. Data corruption can occur in the form of single-bit errors or burst errors (see figure 2). A single-bit error is a single, random errored bit in a data block. A burst error is a group of changed bits, although the errored bits in a burst are not necessarily contiguous.

A wide variety of ECCs have been developed. Some are only suitable for handling single-bit errors, while others work well on both single-bit and burst errors. The Reed-Solomon (RS) family of ECCs is most suitable for optical communications. RS codes use algorithms that operate on symbols rather than on individual bits, which gives them the ability to detect and correct burst errors. RS codes can correct a series of errors in a block of data to recover the original message block. Because they are so effective at dealing with random and burst errors, RS ECCs have been widely adopted in optical communications by standards bodies and MSAs such as the IEEE and the 100G Lambda MSA.
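The following Python sketch illustrates why operating on symbols helps with burst errors. It rests on assumptions that are not stated in this post: 10-bit symbols, an RS(544,514) code as the example, and the standard Reed-Solomon property that a code with n-k parity symbols can correct up to (n-k)/2 errored symbols (rounded down). The function names are hypothetical, and the model ignores interleaving and other details of a real link.

```python
def errored_symbols(bit_positions, bits_per_symbol):
    """Return the set of symbol indices touched by the given errored bits."""
    return {pos // bits_per_symbol for pos in bit_positions}


def rs_correctable_symbols(n, k):
    """Maximum errored symbols an RS(n, k) code can correct per codeword."""
    return (n - k) // 2


BITS_PER_SYMBOL = 10          # assumption for this sketch
N, K = 544, 514               # assumption for this sketch
t = rs_correctable_symbols(N, K)

# A burst: 25 contiguous errored bits starting at bit 7.
burst = range(7, 32)
# The same number of errored bits, but each one lands in a different symbol.
scattered = [i * BITS_PER_SYMBOL for i in range(25)]

for name, errors in (("burst", burst), ("scattered", scattered)):
    hit = len(errored_symbols(errors, BITS_PER_SYMBOL))
    verdict = "correctable" if hit <= t else "not correctable"
    print(f"{name}: {len(errors)} errored bits in {hit} symbols -> {verdict} (t={t})")
```

Because a symbol counts as a single error no matter how many of its bits are flipped, a burst that is concentrated in a few symbols consumes far less of the correction budget than the same number of bit errors spread across the codeword.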

In part two, we will discuss the trade-offs involved in any FEC implementation and see what they mean for network performance.

For more details on FEC, see our new white paper, “Understanding FEC and Its Implementation in Cisco Optics.”