According to the Merriam-Webster dictionary, container is a noun defined as a receptacle for holding goods. It can also mean a portable compartment in which freight is placed (as on a train or ship) for convenience of movement.

In this blog, I’ll be talking about containers in the networking sense and the important role they play in virtualizing data center resources and computing capabilities.

Today, data centers represent the digital foundation for delivering most IT services and providing storage, communications, and networking to the growing number of connected devices, users, and processes that we rely on in our personal and business lives. The increased focus on business agility and cost optimization has led to the rise and growth of cloud data centers. Virtualization is at the heart of the efficient and effective use of data center technology. There are various forms of virtualization, but containers offer a unique set of benefits for particular implementation types.

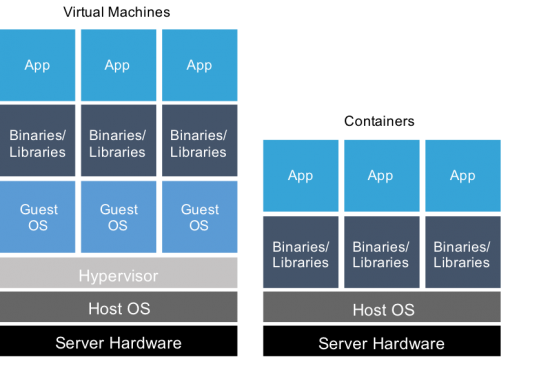

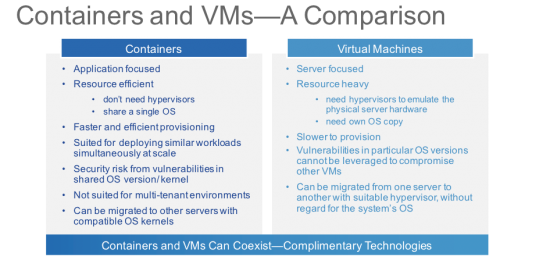

Containers can be defined as operating system (OS) level virtualization. The physical server and its single shared OS instance are virtualized into multiple isolated guests, each replicating the real server. This approach to virtualization is resource efficient and particularly well suited to DevOps culture, allowing development teams to streamline their develop-test-production processes. While not well suited for multi-tenancy, containers can be used to group web servers into a single VM or to run web applications at scale.

For agile development, applications are often disaggregated into many component-level microservices. By breaking these complex applications into smaller, highly portable, and less risky software components, response times can be reduced to meet business and customer needs. Each component service becomes an endpoint that can be accessed and shared across the network. While containers should not be considered synonymous with microservices, they are very well suited for developing and deploying microservices, as well as the associated tools that manage microservice-based applications.
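To make the idea of a component service as a network endpoint concrete, here is a minimal sketch using only the Python standard library. Everything in it is hypothetical and chosen for illustration: the `PriceService` name, the `/price` path, and the sample payload do not come from any particular product. In practice, a service like this would typically be packaged in its own container.

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer


class PriceService(BaseHTTPRequestHandler):
    """Hypothetical single-purpose service exposing one HTTP endpoint."""

    def do_GET(self):
        if self.path == "/price":
            # Illustrative payload only; a real service would look this up.
            body = json.dumps({"sku": "demo-1", "price": 9.99}).encode()
            self.send_response(200)
            self.send_header("Content-Type", "application/json")
            self.send_header("Content-Length", str(len(body)))
            self.end_headers()
            self.wfile.write(body)
        else:
            self.send_error(404)

    def log_message(self, format, *args):
        # Silence the default per-request logging to keep the demo quiet.
        pass


def start_service():
    """Start the service on a free local port in a background thread."""
    server = HTTPServer(("127.0.0.1", 0), PriceService)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


def fetch_price(port):
    """Act as another component calling the endpoint over the network."""
    with urllib.request.urlopen(f"http://127.0.0.1:{port}/price") as resp:
        return json.load(resp)


if __name__ == "__main__":
    server = start_service()
    print(fetch_price(server.server_address[1]))
    server.shutdown()
```

The caller only needs the address and the path, not the service's internals, which is what lets each component be developed, deployed, and scaled on its own.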

In our latest Global Cloud Index (GCI) Forecast 2015-2020, we include containers in the workload analysis. A server workload is defined as a virtual or physical set of computer resources, including storage, that are assigned to run a specific application. For the purposes of quantification, we consider each workload to be equal to a virtual machine or a container. In fact, containers are one of the factors enabling a steady increase in the number of workloads deployed per server. While we have not parsed out the number of containers separately, we estimate, based on our conversations with industry experts, that containers account for about 5 percent of total workloads today. By 2020, we expect that share to grow to at least 20 percent.
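As a rough illustration of the counting rule above, the sketch below treats every virtual machine and every container as one workload, then derives workloads per server and the container share. The `Server` class, the function names, and the fleet numbers are all made up for the example; they are not GCI data or the GCI methodology.

```python
from dataclasses import dataclass


@dataclass
class Server:
    """Hypothetical physical server hosting VMs and containers."""
    vms: int
    containers: int

    @property
    def workloads(self) -> int:
        # Counting rule from the text: each VM or container is one workload.
        return self.vms + self.containers


def workload_density(servers):
    """Average number of workloads per physical server."""
    if not servers:
        raise ValueError("need at least one server")
    return sum(s.workloads for s in servers) / len(servers)


def container_share(servers):
    """Fraction of all workloads that are containers."""
    total = sum(s.workloads for s in servers)
    if total == 0:
        raise ValueError("fleet has no workloads")
    return sum(s.containers for s in servers) / total


if __name__ == "__main__":
    # Made-up fleet: 19 VMs and 1 container across 2 servers.
    fleet = [Server(vms=10, containers=0), Server(vms=9, containers=1)]
    print(workload_density(fleet))
    print(container_share(fleet))
```

With these made-up numbers, one container among twenty workloads corresponds to the roughly 5 percent share mentioned above.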

Please feel free to share your comments or opinions about this blog below. For further details on data center and cloud trends, please visit our public website. To further engage and ask questions, we invite you to join the GCI community.