And why 100% detection is grossly misleading

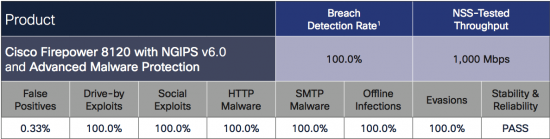

It is with great pride that we received the latest Breach Detection Report from NSS Labs, in which Cisco achieved a 100% detection rate. We couldn’t be more pleased to have our products so well represented and validated in the market, and we truly believe we have the best, most effective security products available today. You can get your complimentary copy of the NSS Labs report here.

The Results

“The Cisco FirePOWER 8120 with NGIPS and Advanced Malware Protection received a breach detection rating of 100.0%. The FirePOWER 8120 proved effective against all evasion techniques tested. The solution also passed all stability and reliability tests.”

If you are not familiar with the NSS Labs Breach Detection Report, its premise is simple: a product is scored on whether it detects a breach by any means possible, especially a breach that bypasses traditional detection and protection methods such as antivirus and firewalls. Here is the full description from the 2016 BDS Comparative report for Security from NSS Labs:

“The ability of the product to detect and report successful infections in a timely manner is critical to maintaining the security and functionality of the monitored network. Infection and transmission of malware should be reported quickly and accurately, giving administrators the opportunity to contain the infection and minimize impact on the network. As response time is critical in halting the damage caused by malware infections, the SUT should be able to detect known samples, or analyze unknown samples, and report on them within 24 hours of initial infection and command and control (C&C) callback. Any SUT that does not alert on an attack, infection, or C&C callback within the detection window will not receive credit for the detection.”

This means that the Cisco products detected 100% of the tested breaches within 24 hours, an impressive testament to our commitment to delivering truly effective security to our customers.

The Challenge – Reducing Operational Space

While a tremendous accolade to our engineers, the result is indeed bittersweet. Cisco prides itself on having products that perform so effectively, but we also work diligently to guide our customers, and a “100% detection” claim without context could lead security practitioners to focus on detection within a long, arbitrary window of time rather than on reducing the operational space of the adversary.

Therefore, it is questionable whether Cisco or any other vendor should even claim 100% detection as a proof point. Is this a useful measure to push vendors to build better products and provide improved value for our customers? Of course. But in the end, 100% detection of a breach within 24 hours is not what we should be striving for. Asking a simple question illustrates the point well:

If two products scored 100%, with product A detecting 100% of breaches within 5 minutes and product B detecting 100% of breaches within 1380 minutes, which would you prefer?

Which product do you think the attacker would like to face if given the choice?

Which product do you think the defender would like to have, given the choice?

We believe that the time it takes to detect the breach is the better measure, and the goal for this measure should be zero minutes. That’s because faster detection reduces the operational space of the adversary, which is the space and time an adversary has in which to operate after breaching a system. This is far more representative of a product’s effectiveness than the ultimate detection of a breach at some arbitrary future time. Reducing the operational space available to the adversary is what limits the amount of damage done once a system has been breached, and this time is the key factor in successfully identifying and mitigating the breach.

Proper Measurement: A ‘For Instance’

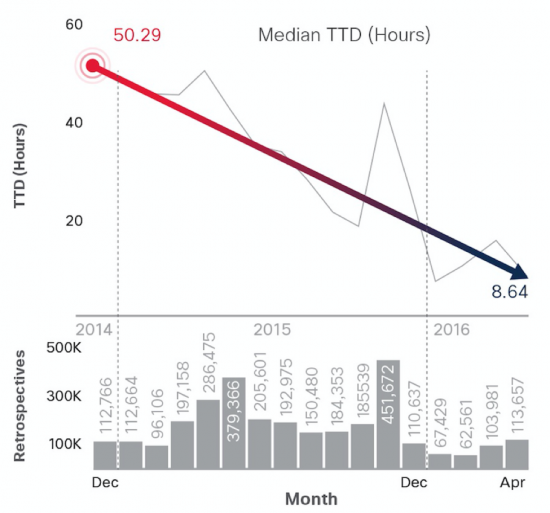

The way to measure the effectiveness of a system is to measure the total amount of time it takes the product to detect the totality of tested breaches. For example, if we assign a greater value to faster detection than to slower detection, we can assess overall product effectiveness weighted by time. In doing this we do not need to impose arbitrary limits on how long a solution can take to detect a breach, and we can better represent the value of that detection. We have tracked and reported on this metric as our Time to Detection (TTD) since December 2014 and have consistently reduced it from a median of 50.2 hours to a median of 13 hours for this reporting period. Data is available in our 2016 Midyear Cybersecurity Report.

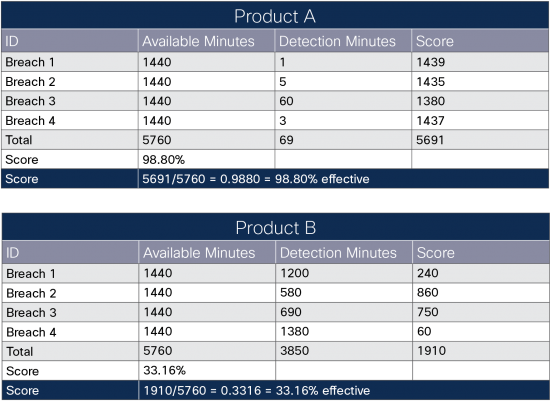

I’ve taken the liberty of modeling how one such assessment for this breach test might work. While there are many models we could apply, I’ve simply inverted the time values and used the result as the score, to keep it simple. Put plainly, if you have 1440 total minutes to detect a breach and you detect it within one minute, you are given 1439 points for the detection.

EG:

Note that both products detected 100% of the breaches within 24 hours, though one product performed significantly better on TTD and thus reduced the unconstrained operational space of the adversary, along with the resulting risk and exposure to your business.
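As a minimal sketch of the inverted-time scoring described above, the Python below awards each breach the detection window minus the minutes taken to detect it. The function names, the three-breach test set, and the per-breach times are hypothetical, chosen to mirror the product A and product B question posed earlier; they are not data from the NSS Labs test.

```python
from typing import Optional, Sequence

# 24-hour detection window expressed in minutes (1440), as in the post.
DETECTION_WINDOW_MINUTES = 24 * 60


def time_weighted_score(detection_minutes: Optional[float],
                        window: int = DETECTION_WINDOW_MINUTES) -> float:
    """Points for one breach: the window minus the minutes taken to detect.

    A missed breach (None) or one detected after the window earns 0 points.
    """
    if detection_minutes is None or detection_minutes > window:
        return 0
    return window - detection_minutes


def product_score(detection_times: Sequence[Optional[float]],
                  window: int = DETECTION_WINDOW_MINUTES) -> float:
    """Sum of per-breach points across every tested breach."""
    return sum(time_weighted_score(t, window) for t in detection_times)


def detection_rate(detection_times: Sequence[Optional[float]],
                   window: int = DETECTION_WINDOW_MINUTES) -> float:
    """Fraction of breaches detected inside the window (the classic metric)."""
    detected = sum(1 for t in detection_times
                   if t is not None and t <= window)
    return detected / len(detection_times)


# Hypothetical test set: three breaches per product (illustrative only).
product_a = [5, 5, 5]           # every breach detected within 5 minutes
product_b = [1380, 1380, 1380]  # every breach detected within 1380 minutes

for name, times in (("A", product_a), ("B", product_b)):
    print(f"Product {name}: detection rate {detection_rate(times):.0%}, "
          f"time-weighted score {product_score(times)}")
```

Both hypothetical products report a 100% detection rate, yet product A earns 4305 points and product B only 180, which is the gap the plain percentage hides.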

While full data is necessary to perform an exact assessment, we can apply a similar approach with the data that is available from this test. I’ve included one such summary below and have removed product names so as not to mislead defenders or violate usage terms.

What do you think?

There are, of course, other considerations that should be taken into account, such as the actual operational cost of the technology, the real impact of false positives, the value of blocking, and the operational burden any given technology will place on your people and processes. Right now, I would like your feedback on this proposed approach and how it relates to your experiences. Do you think there is a better way to score the effectiveness of security products in detecting a breach? What else do you think is needed or can be improved? Please help us understand what key performance indicators you would like to see, and how you currently measure them or think they can be measured so we can help you deliver more accurate and timely representations in our products.

In the meantime, we remain proud of our current test results because, as stated before, this is currently our industry’s only measurement. Take a look, and consider how having these measures, as well as the others I mention above, could ultimately help us all better advance our defenses against the adversary.

I think this is great. In the spirit of continuous improvement, sometimes we need to come up with new ways to measure effectiveness.

Our customers need to think about TTD (Time to Detection); that is what I learned from this blog.

“The ability of the product to detect and report successful infections in a timely manner is critical to maintaining the security and functionality of the monitored network. …should be able to detect known samples, or analyze unknown samples, and report on them within 24 hours of initial infection and command and control (C&C) callback. …will not receive credit for the detection.”

The entire statement above is the problem with all solutions on the market right now. The strategy of detecting and monitoring is a losing strategy.

***If I am your neighbor and you pay me $100,000+ per year to protect your home while you’re away and all I do is call you when someone breaks in, what good is that?!?!

– The homeowner’s response should be, “I’m paying you all this money, why didn’t you stop the intruder?”

As security professionals we need to shift our mindsets away from detection because the damage has already been done at that point. These criminals change every day and we’re not keeping up with them. 100% detection just means you’re a great spectator, congrats.

Hi Rocco. I disagree completely with the protection mantra. Protection can only be so effective when you are facing motivated and capable attackers. To use your analogy: you are expecting that someone can stop all intrusions into your neighbor’s house all the time. That is 100% protection when it is attacked every second of every day by multiple attackers with varying levels of experience… all the while the doors and windows have been removed and you only have a broom.

Thinking it is possible, especially with all of the technical debt that has been amassed over the last few decades, is simply planning to fail.

In the security world there is no such thing as 100% detection, but within the same test environment it is a good enough measure. Time to Detect is the more critical parameter, though. Totally agree.

100% detection is very impressive and the detection time is great too… but when I’ve used IDS myself in the past, the problem has been the false positives. 0.33% sounds like a small number, but it’s actually pretty huge… and funnily enough the reason it’s huge comes down to your point about measuring the right things. What we really want to see is the ratio of false positives to true positives. So, for example, if we got a score of 50%, that means for every two real threats detected we get a false positive. And if that was the measure, 0.33% would mean a negligible false positive rate. But the measurement reported is actually the percentage of all valid traffic that has false detections. Since valid network traffic vastly outweighs threat traffic, this means the ratio is in fact huge, i.e. the vast majority of threat reports generated by the system will in fact be false. In my experience this cripples the true effectiveness of IDS. Sadly, users just get used to ignoring what it is telling them. The solution to this is to have a dedicated team going through the logs, who are trained to search for the needles in the haystack regardless of the amount of hay coming their way. I guess it’s a service that Cisco can offer as an add-on? But this puts the TCO way up, which makes the offering less attractive to the market. Just my two pence worth.

Hi Raffles. I completely understand your perspective; however, it is not quite correct, because the instrumentation and measurements for this test are not well enough refined, as I think I’ve highlighted in the post.

While false positives should be measured against the entirety, in this test they are measured as a percentage of a number of false positive tests. For perspective, it was one false positive on one file that was only ever seen in one place and could have only ever affected one user. That is very different from saying that 0.33% of all things are false positives.