We’re always looking for the “quick wins” in security — whether it’s the magic blinky box that you drop into the right place in your network and it stops all the bad stuff (let me know if you find one of those), or the secret incantation that you can perform that doesn’t cost money but adds protection to your armor. The “one weird trick” sometimes leads to clicks; I once got the head of one of the biggest tech companies on the planet to click on my analyst report using that title. But clicks aren’t solutions, and only the customer can decide whether something is really a solution.

In the Security Outcomes Study, we asked respondents which security practices they felt they were managing to do, but at the other end of the scale, we didn’t want to ask them whether they were bad at them. Nobody wants to say, “We don’t care about this practice,” or “We’re really bad at this.” So, we framed it as “agreeing” or “disagreeing” with practice statements such as “My organization meets established SLAs or deadlines for remediating disclosed vulnerabilities in systems and software.” We thought it might be easier for someone to say, “I disagree,” because that could be attributed to a lot of factors: they don’t prioritize it, there’s some constraint that they can’t get around, their management doesn’t care about it, they don’t have the budget, they haven’t implemented it across the board, and so on. So when respondents disagreed, we don’t actually know whether a given practice was hard to do or blocked for another reason, but we can guess from our own experiences that it might not be straightforward to accomplish.

Given those caveats as a backdrop, let’s look at some comparisons between security practices that many respondents said they were doing or not doing, and security practices that appeared to have a strong statistical correlation with good security outcomes.

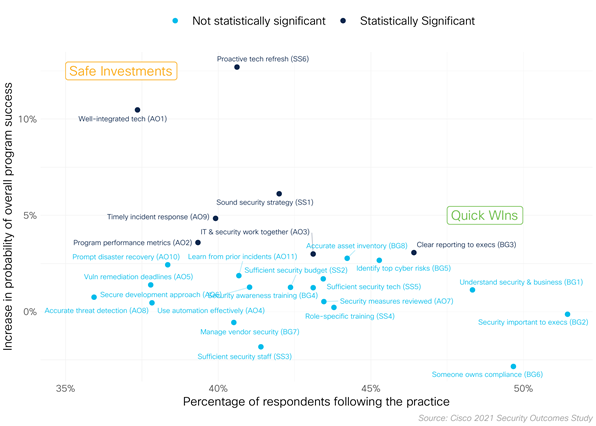

The figure shown here places all security practices on a grid according to the percentage of respondents reporting adherence (x-axis) and the increase in probability of overall program success attributed to each practice (y-axis).

Practices toward the upper right are, according to the data, more often implemented successfully and are highly correlated with gains in program success. The somewhat bad/sad news is that there aren’t any practices that land squarely in that quadrant. Sorry folks — as we know, security is hard.

Quick Wins

Short of that, we can examine the lower right and middle-ish areas of the chart to see which practices are still significantly correlated but appear to be achievable by more respondents. “Clear reporting to execs” is probably the quickest win, followed by a toss-up between “Sound security strategy” and “IT & security work together.” That’s pretty interesting because they’re non-technical controls that weren’t that common in our main report. They’re also difficult to substantiate or quantify: what you think is clear reporting to your executives might not seem clear to them. What does a “sound security strategy” look like as opposed to an “unsound” one? And everyone can say that IT and security “work together,” but which areas of cooperation are the most important? We have an opportunity here to dig deeper in future research.

More achievable, plus significantly correlated with overall success? This is probably the closest to a “quick win” that we’re going to get. Don’t ignore the other practices here just because the data scientists didn’t label them as “statistically significant.” If you group a number of them together, you might turn them into significant boosts (see below).

Safe Investments

It’s also worth noting that the practices in the upper left (hard but effective) are the ones that kept showing up in the report. We’ve labeled those “safe investments” because the data strongly supports their weighty contribution to security program success … but those gains likely won’t come easy for every organization. They not only require strong cooperation across the board, with all areas of IT and external partners, but they may be hard to justify from a functional standpoint: the tech works fine, why should we keep updating it?

The one thing this chart doesn’t capture is the potential cumulative effect of doing several of the practices. Each one here is mapped against its individual likelihood of contributing to your program’s success, but if you did, say, five of the ones that most respondents said they were able to do well, how would that improve your outcomes? I’m not a data scientist myself, but I would caution against the temptation to take these numbers and add or multiply them somehow (2% + 2% + 3% equals a 7% better outcome!). Remember, these statistics aren’t guarantees, but it’s probably safe to say that even if one practice, successfully done, didn’t pan out to improve your outcomes, doing a few more practices well would raise your chances of getting some level of improvement.
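For readers who like to see the arithmetic, here is a rough sketch of why adding the percentages is shaky, and of the weaker claim that more practices raise your chances of seeing some improvement. Every number in it is made up for illustration and none comes from the study. The function names are hypothetical, and the second function assumes that practices pay off independently of each other, which real security programs almost certainly don’t satisfy.

```python
from math import prod
from typing import Sequence


def naive_additive(baseline: float, boosts: Sequence[float]) -> float:
    """The tempting shortcut: add every practice's boost to the baseline."""
    return baseline + sum(boosts)


def chance_at_least_one(payoff_probs: Sequence[float]) -> float:
    """Chance that at least one practice pays off.

    Assumes each practice pays off independently (an illustrative
    simplification, not something the study claims).
    """
    return 1.0 - prod(1.0 - p for p in payoff_probs)


# Hypothetical numbers, chosen only to show the arithmetic.
baseline = 0.90
boosts = [0.02, 0.02, 0.03, 0.05]

# Simple addition pushes the "probability" past 1.0, which is impossible.
print("naive total:", naive_additive(baseline, boosts))

# With a hypothetical 30% chance that any single practice pays off,
# the chance of at least one paying off grows with each added practice,
# but it never reaches certainty.
for count in range(1, 6):
    print(count, "practices:", round(chance_at_least_one([0.30] * count), 3))
```

The point of the sketch is only directional: simple addition can produce nonsense, while stacking practices nudges the odds of some improvement upward with diminishing returns.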

The moral of the story is that “quick wins” in security are neither quick, nor are they a total win. But we did make you click, didn’t we? And you may find some practice areas to focus on that are more easily achievable and have a good chance of incrementally boosting your organization’s security posture.

Additional Resources

- Access the full Cisco 2021 Security Outcomes Study

- A blog series with more relevant information as we continue to analyze the data

- Region- and vertical-specific companion reports