As a consultant I have seen many different ‘Data Centers’, from co-location facilities, to well-thought-out in-house builds, to a dirty closet that no one was using. Douglas Alger gave us a tour of Cisco’s Data Center in Allen, TX about a month ago. I was expecting to be impressed and I was not disappointed. Cisco has made a commitment to have all of its Data Centers at least LEED Silver certified. The Data Center in Allen, TX is LEED Gold certified. Also, Cisco tried to use as many off-the-shelf components as possible so that this model can be replicated at every Data Center.

Outside of the Data Center building

Driving up, the site does not have the usual look of a Data Center. You really have to know where you are going to find it. The building is surrounded by berms 15-20 feet tall. These double as camouflage for the building, but their primary purpose is to deflect tornadoes from hitting the building directly. If a tornado is heading for the building, its base would have to climb the berms, which in turn would cause the tornado to ‘jump’ over the building.

The roof of the Data Center has a high level of wind tolerance, and the building itself is constructed in several layers. A tornado could take off several of these layers and the Data Center could continue to operate.

There are the typical barriers expected in a secure facility such as fencing, vehicle barriers, cameras, and a bicycle rack. Yeah, a bicycle rack. Part of the LEED certification is support for alternate modes of transportation to the office. Installing a bicycle rack and a shower inside was an easy way to get additional points toward the LEED certification.

Powering all the things

The Data Center has two separate (side A and side B) 10-megawatt feeds from two different electricity service providers. There are also solar panels on the roof that generate about 400 kilowatts of power.

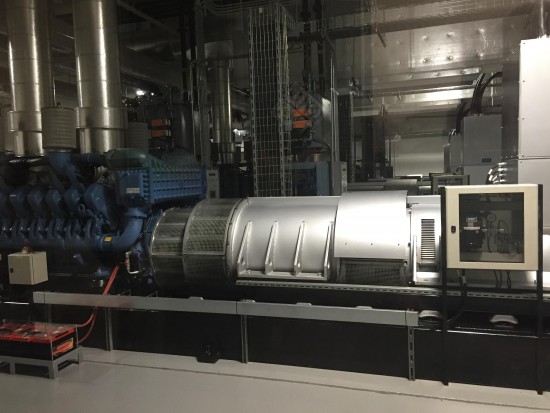

In the Richardson, TX Data Center a few miles away, there are thousands of batteries. Every Data Center uses some sort of battery system to hold the load when the electricity provider fails and until the generators come online to take over. For the Allen Data Center, out of a desire to be more environmentally friendly, Cisco decided not to use any batteries to carry the load.

Instead there are four devices that each have a flywheel that is constantly being spun by electricity from the main power feed. Once power is lost from this feed, the flywheel will continue to spin and provide power to the entire Data Center (side A or B) for 15 seconds. Now 15 seconds is not a lot of time, but the theory is that everything should have A & B power feeds. If one side goes down, the other should be able to carry the entire load alone. Also, the generators are kept in excellent shape and should be able to take on the load within the 15 seconds.
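To put the 15 seconds in perspective, here is a rough back-of-the-envelope sketch of how much energy the flywheels would have to deliver. It assumes one side is carrying the full 10 megawatts of its feed, which is a worst case I picked for illustration; the actual load was not given on the tour. The function names and the generator start times are hypothetical.

```python
def ride_through_energy_joules(load_watts: float, seconds: float) -> float:
    """Energy needed to hold a constant load for the given time (E = P * t)."""
    return load_watts * seconds


def generators_start_in_time(generator_start_seconds: float,
                             ride_through_seconds: float = 15.0) -> bool:
    """True if the generators pick up the load before the flywheels run out."""
    return generator_start_seconds <= ride_through_seconds


# Assumption: one side at the full 10 MW rating of its feed.
load_watts = 10_000_000
energy_j = ride_through_energy_joules(load_watts, 15)
energy_kwh = energy_j / 3_600_000  # 1 kWh = 3.6 million joules

print(f"{energy_j / 1e6:.0f} MJ, about {energy_kwh:.1f} kWh")
# prints "150 MJ, about 41.7 kWh"

# Hypothetical generator start times, in seconds.
print(generators_start_in_time(10))  # True
print(generators_start_in_time(20))  # False
```

The total energy is modest; the hard part is delivering it at a very high rate for a very short time, and that is the job the flywheels take over from the batteries.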

Cooling the oven

Cisco did a fluid dynamics study of the cooling and heating airflow of a Data Center and found out that cold air sinks and hot air rises. So the fronts of the server cabinets are ventilated to draw in cool air while the backs are not. There are chimneys on the back side of the cabinets that redirect the hot air into a high open ceiling. These chimneys are about 3-4” higher than the cool air vents, which blow air down into the cold aisle, keeping the two airflows separated.

About half of the year the cool air is pulled in from the outside. Yes, even in Texas it does get cool enough. They operate the servers at around 80-82 degrees Fahrenheit, so they can pull in outside air more often throughout the year. When the outside temperature is colder than that, they mix the warm air from the servers with the outside air to ensure the cool air entering the data halls stays at a constant temperature. This system is automated and doesn’t require human intervention. It took Cisco only two years to recover the cost of this system.
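The mixing step can be sketched with a simple weighted average. This is only an illustration, not how Cisco’s control system works: it assumes the mixed temperature is a straight blend of outside air and warm return air, and the 100 degree return temperature and 40 degree outside temperature are numbers I made up. The function name is hypothetical as well.

```python
def outside_air_fraction(supply_f: float, outside_f: float, return_f: float) -> float:
    """Fraction of outside air needed to hit the supply temperature.

    Solves supply = x * outside + (1 - x) * return for x, assuming a
    simple linear blend of the two air streams (temperatures in Fahrenheit).
    The result is clamped to the range 0.0 to 1.0.
    """
    if return_f <= outside_f:
        raise ValueError("outside air must be cooler than the return air")
    fraction = (return_f - supply_f) / (return_f - outside_f)
    return min(1.0, max(0.0, fraction))


# Assumed numbers: 80 F target, 40 F outside, 100 F air coming off the servers.
x = outside_air_fraction(supply_f=80, outside_f=40, return_f=100)
print(f"{x:.0%} outside air, {1 - x:.0%} recirculated warm air")
# prints "33% outside air, 67% recirculated warm air"
```

A result of 1.0 means outside air alone is used; if the outside air is warmer than the target, mixing cannot help and some other cooling has to take over.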

Data Hall 401

As expected there were no loose cables lying on the floor or cabinet doors that couldn’t be closed. The data hall was very neat and tidy. My OCD really enjoyed this part. There is no raised floor and everything comes from overhead. Only a few data cables went into each cabinet, which is possible because Cisco is using FCoE.

Each rack had an A and B power feed. The power distribution was modular in that a power bar ran down the rows. Side A in the front and side B in the back. Along this power bar were junctions that allowed non-electricians to connect, for lack of a better term, power outlets. No matter what combination of power feeds a rack needed, you just installed the outlets on the power bar that you needed.
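The A and B layout only pays off if either side can carry everything by itself, which is the theory mentioned in the power section. A minimal sketch of that check follows. The rack names and wattages are invented for illustration, and the 10 megawatt capacity is simply the size of one feed; the real limits on the power bars were not covered on the tour.

```python
from dataclasses import dataclass


@dataclass
class Rack:
    name: str
    side_a_watts: float  # load normally drawn from side A
    side_b_watts: float  # load normally drawn from side B


def survives_single_side_loss(racks: list[Rack], side_capacity_watts: float) -> bool:
    """True if one side alone can carry the combined A + B load of every rack."""
    total_watts = sum(r.side_a_watts + r.side_b_watts for r in racks)
    return total_watts <= side_capacity_watts


# Hypothetical racks and loads.
racks = [
    Rack("rack-01", side_a_watts=3000, side_b_watts=3000),
    Rack("rack-02", side_a_watts=4500, side_b_watts=4000),
]

print(survives_single_side_loss(racks, side_capacity_watts=10_000_000))  # True
```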

The network equipment was not in the middle of the data hall. Side A was on one side of the hall and side B was on the other. This gives them more physical redundancy for the network itself.

Carriers, aka service providers, had their own area they could access. These spaces were physically separated and not in the data halls. There are two carrier spaces on opposite sides of the building, with different entrances to the property, to provide physical redundancy.

There is a small team of personnel that is responsible for the Data Center itself. They handle all of the installation and removal of equipment. This team does a great job of keeping everything standard, neat, and tidy. Its members are the only ones who get access to the data halls. All of the carriers, maintenance workers, visitors, etc. have to be escorted into the data halls, and only if they have a valid reason to be in there, which is rare.

Cisco’s Allen Data Center is a great example of how to build a modern Data Center. The flywheel system is very impressive to me. If you haven’t taken a tour of the facility, I suggest you get with your Cisco Account Manager and get one set up.

Douglas wrote several books on Data Center design and you can find them here.