I have previously written a few details about our upcoming ultra low latency solution for High Performance Computing (HPC). Since my last blog post, a few of you sent me emails asking for more technical details about it.

So let’s just put it all out there.

I have gotten many questions, so I’ll list the most common of them in Q&A format (sorry for the length!):

Q: What’s the high-level description of this product?

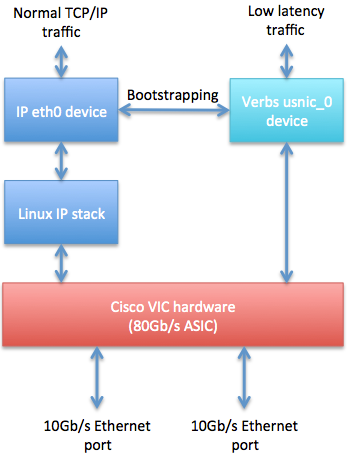

A: In short, usNIC is a software solution (firmware, kernel driver, userspace driver, and Open MPI support) for our existing 2nd generation Cisco Ethernet VIC hardware (Virtual Interface Card). If you already have 2nd generation Cisco VIC hardware, this is a free software update to what you already own. Architecturally, it looks like this:

Q: What does “usNIC” stand for?

A: Userspace NIC. It’s basically another “personality” of the flexible Cisco VIC. The VIC also exposes “enic” (Ethernet NIC) and “fnic” (Fibre Channel NIC) personalities.

Q: Can I send normal TCP/IP traffic over the VIC at the same time as low-latency traffic?

A: Yes; both the Linux IP “ethX” device and low-latency “usnic_Y” devices are available at the same time. But be aware that both types of traffic will go over the same wire, so you’ll be sharing bandwidth.

Q: Is this RoCE (RDMA over Converged Ethernet, which, incidentally, has nothing to do with Converged Ethernet)?

A: No. RoCE is a wire protocol that is best described as “InfiniBand over Ethernet” (a.k.a., IBoE). We saw no value in doing that for MPI support, and instead used our own L2 Ethernet-based wire protocol. It basically adds a few integers after the standard Ethernet L2 header for the source and destination QP numbers, etc.
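
To make the “a few integers after the L2 header” idea concrete, here is a minimal sketch in Python. The field layout is purely an assumption for illustration: the post does not give the actual usNIC wire format, so the 32-bit QP fields, their order, and the `EXAMPLE_ETHERTYPE` value (taken from the IEEE local experimental range) are all hypothetical, as are the helper names `pack_frame` and `unpack_frame`.

```python
import struct

# Standard Ethernet II header: destination MAC, source MAC, EtherType.
ETH_HEADER = struct.Struct("!6s6sH")
# HYPOTHETICAL extension: destination and source QP numbers as 32-bit integers.
# The real usNIC header layout is not described in this post.
QP_HEADER = struct.Struct("!II")
# Placeholder EtherType from the IEEE "local experimental" range; an assumption.
EXAMPLE_ETHERTYPE = 0x88B5


def pack_frame(dst_mac, src_mac, dst_qp, src_qp, payload):
    """Build an L2 frame: Ethernet header, then QP numbers, then payload."""
    eth = ETH_HEADER.pack(dst_mac, src_mac, EXAMPLE_ETHERTYPE)
    qps = QP_HEADER.pack(dst_qp, src_qp)
    return eth + qps + payload


def unpack_frame(frame):
    """Split a frame back into its header fields and payload."""
    dst_mac, src_mac, ethertype = ETH_HEADER.unpack_from(frame, 0)
    dst_qp, src_qp = QP_HEADER.unpack_from(frame, ETH_HEADER.size)
    payload = frame[ETH_HEADER.size + QP_HEADER.size:]
    return dst_mac, src_mac, ethertype, dst_qp, src_qp, payload
```

The point of the sketch is only that the per-message overhead is small: a receiver can find the destination QP at a fixed offset right after the Ethernet header, with no IP or transport headers in between.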

Q: What software API is used to access this low latency capability?

A: Our usNIC solution plugs into the Linux “verbs” API stack. “Verbs” is the upstream Linux kernel API for OS-bypass communications. Once you install the usNIC software, you can run the normal verbs support commands (e.g., ibv_devinfo(1)) to see the Linux “usnic_X” devices.

Q: What verbs features do you support?

A: In this release, usNIC supports Unreliable Datagram (UD) verbs queue pairs (QPs). This support is quite analogous to UDP: unreliable MTU-sized messages. UD QPs allow you to inject MTU-sized messages directly on the wire, and receive directed inbound MTU-sized messages from the wire, bypassing the OS in both directions.

Q: Do you support RC and/or RDMA?

A: Not in this release. This release is essentially about supporting ultra-low latency, OS-bypass UD support.

Q: What kind of latency will usNIC deliver?

A: See my prior blog post.

Q: What parts will be open source?

A: Everything above the firmware. There are a few pieces involved:

- Cisco VIC Ethernet kernel driver: we extended our existing upstream Ethernet (“enic”) driver to help support our verbs driver. This will go upstream shortly.

- Cisco VIC verbs kernel driver: this is a new kernel driver that is used for bootstrapping verbs communications. This is waiting for the enic improvements to go upstream.

- Cisco VIC verbs userspace plugin: this is a new plugin for libibverbs that supports the VIC usNIC devices. This will likely go upstream at the same time as the verbs kernel driver.

- Open MPI usNIC transport: this is a new Byte Transport Layer (BTL) plugin for Open MPI that uses the usNIC verbs support. It will go upstream within the next week or two.

Q: What version(s) of Open MPI do you support?

A: Support for Open MPI will be ongoing — this is not a “throw code over the wall and forget about it” situation. So it’s relevant to talk about three different versions of Open MPI:

- Open MPI 1.6.5: This is the current stable version of Open MPI. No new features are accepted upstream for the stable series, and usNIC support would be a new feature. So Cisco will be releasing “Open MPI 1.6.5 + usNIC” in binary and source code packages on cisco.com, which, as its name implies, will be Open MPI 1.6.5 plus the usNIC BTL plugin.

- Open MPI 1.7.3: The next release of Open MPI’s “feature” series is expected in August. We plan on having the usNIC transport included in that release.

- Open MPI development trunk: The head of Open MPI development will eventually become Open MPI 1.9. We anticipate upstreaming the usNIC transport to the Open MPI trunk within the next week or two.

Q: Is the new Open MPI plugin for generic UD verbs devices?

A: No, it’s specific to usNIC (in fact, the BTL plugin name is “usnic”). We chose this route for two reasons:

- We can add performance optimizations that are specific to Cisco platforms.

- As we extend VIC low-latency/OS-bypass functionality over time, we can add such capabilities without breaking support for other UD-capable hardware.

Q: How is large message fragmentation/reassembly handled?

A: This is handled at two levels:

- The core of Open MPI does some level of fragmentation and reassembly in order to support striping of large messages across multiple network interfaces.

- The usNIC BTL transport handles fragmenting into MTU-sized messages, transmission, re-transmission when necessary, ACKing, and some level of reassembly. A sliding window scheme is used for retransmission; many ideas and tricks were stolen from the corpus of knowledge surrounding efficient TCP.
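
The second level (fragment, send within a window, retransmit on loss, reassemble) can be sketched in a few lines of Python. This is a toy model under stated assumptions, not the usNIC BTL’s actual algorithm: the names `fragment`, `send_with_window`, `Reassembler`, and `make_lossy_link` are hypothetical, ACKs are modeled as a boolean return value, and a “lost” fragment is simply retried on the next pass over the window rather than on a timer.

```python
def fragment(message, mtu):
    """Split a message into (sequence number, chunk) pairs of at most mtu bytes."""
    return [(seq, message[off:off + mtu])
            for seq, off in enumerate(range(0, len(message), mtu))]


class Reassembler:
    """Receiver side: collects fragments by sequence number."""

    def __init__(self, total):
        self.total = total
        self.chunks = {}

    def receive(self, seq, chunk):
        self.chunks.setdefault(seq, chunk)  # duplicate fragments are ignored

    def complete(self):
        return len(self.chunks) == self.total

    def message(self):
        return b"".join(self.chunks[i] for i in range(self.total))


def send_with_window(fragments, window, deliver):
    """Send fragments with at most `window` outstanding past the oldest un-ACKed one.

    deliver(seq, chunk) returns True if the fragment was ACKed, False if lost.
    Returns the number of transmissions, including retransmissions.
    Note: this toy loops forever if a fragment is never ACKed.
    """
    acked = set()
    base = 0              # oldest fragment not yet ACKed
    transmissions = 0
    while base < len(fragments):
        for seq, chunk in fragments[base:base + window]:
            if seq in acked:
                continue  # already delivered; no need to resend
            transmissions += 1
            if deliver(seq, chunk):
                acked.add(seq)
        while base in acked:
            base += 1     # slide the window past ACKed fragments
    return transmissions


def make_lossy_link(receiver, drop_first_attempt):
    """Return a deliver() callback that drops the first attempt of the given seqs."""
    pending_drops = set(drop_first_attempt)

    def deliver(seq, chunk):
        if seq in pending_drops:
            pending_drops.discard(seq)
            return False  # lost on the wire: no ACK comes back
        receiver.receive(seq, chunk)
        return True       # ACK received
    return deliver


message = bytes(range(256)) * 10            # 2560 bytes
frags = fragment(message, mtu=1000)         # chunks of 1000, 1000, and 560 bytes
rx = Reassembler(len(frags))
sent = send_with_window(frags, window=2, deliver=make_lossy_link(rx, {1}))
```

In this run the first attempt at fragment 1 is dropped, so the sender makes four transmissions for three fragments and the receiver still ends up with the original message. A real implementation would also need timers, ACK messages on the wire, and bounded receive buffers, which is where the borrowed TCP tricks come in.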

Q: Is the usNIC BTL plugin NUMA-aware?

A: Absolutely; we live in a NUMA world, after all. I have an entire upcoming blog entry about one of the NUMA optimizations we use in the usNIC BTL. Stay tuned. 🙂

Q: Is there a convenient mechanism to get all of this software?

A: The plan is that all of the above-mentioned software, as well as new usNIC-enabled firmware for the VIC, will be available on cisco.com for Cisco rack servers in August. Support for VICs in blade servers will come a little later (I can’t quote the exact schedule, but suffice it to say that it’s “soon”).

> “Verbs” is the upstream Linux kernel API for OS-bypass communications.

Thanks Jeff, I just puked on my keyboard. Seriously, since when is Verbs _the_ Linux kernel API for OS-bypass communications?

LOL!

I think verbs went upstream on the order of 5-6 years ago…? It really is the only OS-bypass networking API that is upstream. KVM has some interesting possibilities for exposing hardware in an OS-bypass mechanism (even though it’s really intended for virtualization), but that doesn’t provide a common API for doing networky-kinds-of-things.