High-performance computing – or HPC – fuels groundbreaking research. This isn’t news to scientists, but most researchers studying fields other than quantum mechanics and molecular modeling probably don’t realize that they can use a supercomputer to ramp up their analysis.

This power, historically reserved for the realm of theoretical science, is now available to all – economists and sociologists, engineers and anthropologists, linguists and historians.

Just in case the prospect of using a supercomputer to solve our problems seems like a Cold War-era dream, we want to show you a few ways that high-performance computing is fueling research in a variety of disciplines today. Cue our high-performance computing blog series.

First up, natural sciences.

Unlike Matthew McConaughey (ahem, Interstellar), most of us have never been sucked through a black hole. With the closest one (that we know of) nearly 3,000 light years away, we fortunately won’t get close enough to check it out ourselves.

The good news is that we won’t have to. Using HPC, scientists can simulate what might happen in close proximity to a black hole. They can also simulate possible events that could lead to the creation of a black hole, such as the collapse of a star.

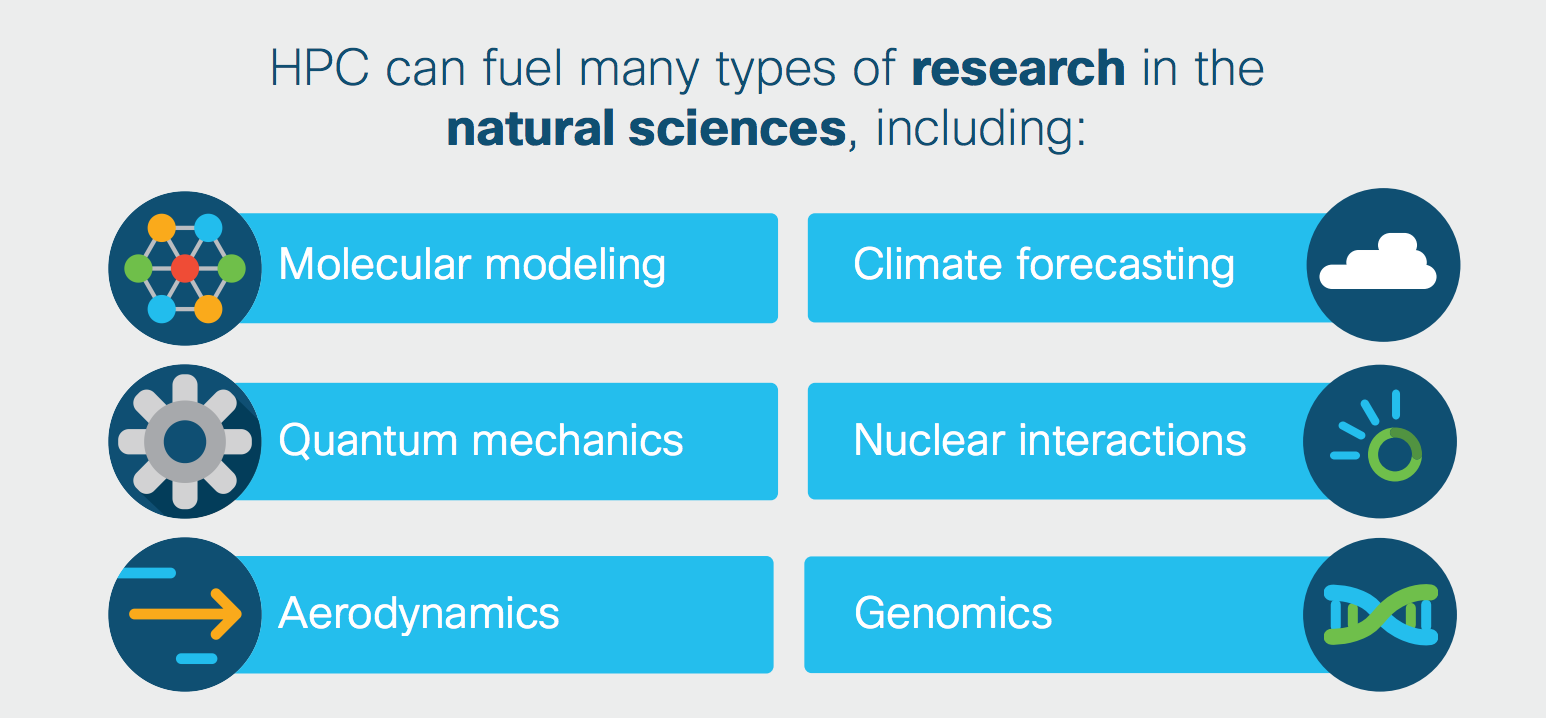

Discoveries about black holes aren’t the only scientific advances made possible by supercomputers. Molecular modeling, climate forecasting, and a variety of other types of research in the natural sciences rely on HPC to crunch the data and power their simulations.

Next up in our high-performance computing use case series? Big data.

Can’t wait for the next post? Learn more about HPC below.

Hi Rob, thanks for a fun but informative article. As you hint at, HPC has long been the domain of research universities, government labs, and only the very largest companies. These were the only entities that could afford HPC and that had, over the years, developed the necessary expertise to build and operate the hardware and software.

But the cloud is making HPC more attainable to everyone. With the capital costs and expertise being supplied by the cloud provider, the price point as well as the learning curve become much more palatable to the average engineer or scientist. We are still in the early days, but there are many companies working towards this.

This article published in Scientific Computing World explains some of the technology developed by my company UberCloud to enable this transformation.

https://www.theubercloud.com/passing-hpc-into-the-hands-of-every-engineer-and-scientist/

Cheers,

Thomas Francis

Head of Products

UberCloud

Looking forward to an HPC use case on getting my teenage daughters up and ready for school in the morning! Thanks, Rob!

CJ Levendoski – Managing Partner, ActionPoint Marketing