I’ll never forget the shiny gold star stickers I received for getting 100% scores on math tests in school. The stars were proudly displayed on our family’s refrigerator door alongside my siblings’ achievements. Something in me still wants to earn more of those stars.

But in our technology jobs today, striving for 100% service availability to earn that metaphorical gold star isn’t, as it turns out, as good a goal as it appears. These days, the gold star standard set by service uptime expectations is deliberately short of 100%. Meeting 99.99% or 99.999%, but nothing more, earns us the gold star at the end of the day.

Recent outages at Facebook and AWS remind us of the wide-ranging impact service disruptions have on end customers. One could be forgiven for thinking outages are inevitable: modern applications are built with modularity in mind, and as a result they increasingly rely on externally hosted services for tasks such as authentication, messaging, computing infrastructure, and more. It’s obviously hard to guarantee everything works perfectly, all the time. Yet end users may count on your service being available, and may be so unforgiving that they do not return if they feel the service is unreliable.

So why not strive for 100% reliability, if anything less can cost you business? It may seem like a worthy goal, but it has shortcomings.

Over-reliance on service uptime

One pitfall with services that deliberately set out to achieve 100% reliability, or even do achieve it, is that other applications become overly reliant on the service. Let’s be real: services eventually fail, and there is a cascading effect on applications that aren’t built with the logic to withstand failures of external services. For example, services built with a single AWS AZ (Availability Zone) in mind are implicitly counting on 100% availability of that AZ. As I am writing this blog post, I have in mind the power outage in northern Virginia that affected an AZ in the us-east-1 region and numerous global Web services. While AWS has proven to be extraordinarily reliable over time, assuming it would be up 100% of the time proved to be unreasonable.

Maybe some of the applications that failed were built to survive the failure of an AZ, but were deployed within a single region. In recent memory, AWS has also suffered regional service outages. This illustrates the need to design for region-level failures as well, through multi-region deployments or other contingency planning.

When it comes to building applications with uptime in mind, it’s responsible to assume your service uptimes will fall short of 100%. It is up to SREs and application developers to use server uptime monitoring tools and other products to automate infrastructure, and develop applications within the boundaries of realistic SLOs (Service Level Objectives).
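To see why a realistic SLO has to account for dependencies, here is a minimal sketch of the arithmetic. It assumes failures are independent, which real outages often are not, and the availability figures are made-up illustrations rather than any provider’s published numbers. The function names are hypothetical.

```python
from math import prod

def serial_availability(availabilities):
    """Availability of a request path that needs every dependency to be up."""
    return prod(availabilities)

def redundant_availability(availability, replicas):
    """Availability when any one of `replicas` independent copies is enough."""
    return 1 - (1 - availability) ** replicas

# Hypothetical dependency availabilities, for illustration only.
deps = {"auth": 0.9999, "messaging": 0.9995, "compute": 0.9999}

combined = serial_availability(deps.values())
print(f"all three dependencies in series: {combined:.4%}")
print(f"one zone at 99.9%:                {redundant_availability(0.999, 1):.4%}")
print(f"two zones at 99.9% each:          {redundant_availability(0.999, 2):.4%}")
```

Under these assumptions, a chain of dependencies is always less available than its weakest link, while spreading across independent zones pushes availability up without ever reaching 100%.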

System resiliency suffers

Services built on the assumption that the services they rely on will be up 100% of the time implicitly lack resiliency as a countermeasure to service interruptions. A service that expects compute infrastructure failures, by contrast, has the logic to fail over to other available resources while minimizing or eliminating customer-facing interruptions. That failure mitigation design could take the form of failing over to a different Availability Zone (for AWS developers) or distributing the application infrastructure across several Availability Zones. Regardless of the approach, the overall goal is to build resiliency into application services on the assumption that no service is 100% available.
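The failover idea can be sketched in a few lines. This is a simplified illustration, not a production pattern: the zone names, `call_with_failover`, and `fake_fetch` are all hypothetical, and the backend is simulated so the example runs without a network. A real client would also want timeouts, backoff, and health checks.

```python
class AllEndpointsFailed(Exception):
    """Raised when every configured endpoint has been tried without success."""

def call_with_failover(endpoints, fetch):
    """Try each endpoint in order; return (endpoint, result) from the first success."""
    errors = {}
    for endpoint in endpoints:
        try:
            return endpoint, fetch(endpoint)
        except ConnectionError as exc:
            errors[endpoint] = exc  # remember the failure and try the next one
    raise AllEndpointsFailed(errors)

# Simulated backend: the first zone is "down". Zone names are made up.
DOWN = {"zone-a"}

def fake_fetch(endpoint):
    if endpoint in DOWN:
        raise ConnectionError(f"{endpoint} unreachable")
    return f"response from {endpoint}"

served_by, body = call_with_failover(["zone-a", "zone-b", "zone-c"], fake_fetch)
print(served_by, body)
```

The caller never assumes the first zone is up; it only assumes that not every zone is down at once, and it raises a clear error when even that assumption fails.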

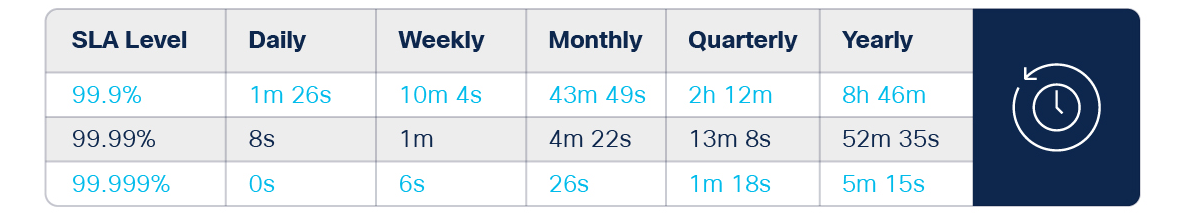

Downtime table for different Service Level Agreements
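The arithmetic behind a table like this is simple enough to reproduce. The sketch below assumes a 365-day year and an evenly divided month, so its rounding may differ slightly from other published tables; `allowed_downtime_minutes` is a name chosen for this example.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes; leap years ignored

def allowed_downtime_minutes(availability_pct, period_minutes=MINUTES_PER_YEAR):
    """Minutes of downtime permitted in a period at the given availability."""
    return period_minutes * (1 - availability_pct / 100)

for pct in (99.0, 99.9, 99.99, 99.999, 100.0):
    per_year = allowed_downtime_minutes(pct)
    per_month = per_year / 12
    print(f"{pct:>7}%  {per_year:10.2f} min/year  {per_month:8.2f} min/month")
```

Each additional nine cuts the allowance by a factor of ten, and at 100% the allowance is exactly zero.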

There are, of course, some systems that have achieved 100% uptime. But that’s not always good. A perfectly reliable system breeds complacency, especially among the users of the product. It’s best for SLOs to include maintenance windows, so that users of the service stay practiced at keeping their entire system running even when a normally reliable component suffers an outage.

Services are slow to evolve

Another shortcoming of striving for 100% uptime is that there is no opportunity for major application maintenance. An SLO with 100% uptime means there is 0% downtime. That means zero minutes per year to perform large-scale updates like migrating to a more performant database or modernizing a front end when a complete overhaul is called for. Thus, services are constrained from easily evolving to the next best version of themselves.

Consequently, robust SLOs with enough built-in downtime provide the breathing room services need to recover from unplanned downtime and to use planned downtime for maintenance and improvements.
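One way to make that breathing room concrete is to treat the allowed downtime as a budget and check planned work against what is left. The sketch below is an illustration under assumed numbers: the 30-day window, the 12-minute outage, and the `DowntimeBudget` class are all hypothetical.

```python
from dataclasses import dataclass

@dataclass
class DowntimeBudget:
    slo_pct: float               # target availability, e.g. 99.9
    window_minutes: float        # length of the measurement window
    spent_minutes: float = 0.0   # downtime already used in this window

    @property
    def total_minutes(self):
        return self.window_minutes * (1 - self.slo_pct / 100)

    @property
    def remaining_minutes(self):
        return self.total_minutes - self.spent_minutes

    def can_afford(self, planned_minutes):
        """True if planned maintenance fits in the remaining budget."""
        return planned_minutes <= self.remaining_minutes

THIRTY_DAYS = 30 * 24 * 60  # minutes

budget = DowntimeBudget(slo_pct=99.9, window_minutes=THIRTY_DAYS)
budget.spent_minutes += 12  # hypothetical unplanned outage
print(f"remaining: {budget.remaining_minutes:.1f} min")
print("20-minute migration fits:", budget.can_afford(20))

perfect = DowntimeBudget(slo_pct=100.0, window_minutes=THIRTY_DAYS)
print("1-minute change fits at 100%:", perfect.can_afford(1))
```

With a 100% target the budget is empty from the start, so no maintenance of any length ever fits.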

Developers building today’s applications can take advantage of many different services and bolt them together like building blocks – and they can achieve remarkable reliability. However, striving for 100% uptime is an unreasonable expectation for the application and other services the application counts on. It is far more responsible to develop applications with built-in resiliency.
