In the past, we have pointed out that configuring network services and security policies into an application network has traditionally been the most complex, tedious, and time-consuming aspect of deploying new applications. For a data center or cloud provider to stand up applications in minutes rather than days, it has to overcome a clear obstacle: easily configuring the right service nodes (e.g., a load balancer or firewall) with the right application and security policies to support specific workload requirements, independent of location in the network.

Let’s say, for example, you have a world-beating, best-in-class firewall positioned in some rack of your data center. You also have two workloads, running on other servers a few hops away, that need to be separated according to security policies implemented on this firewall. The network and security teams have traditionally had a few challenges to address:

- If traffic from workload1 to workload2 needs to go through a firewall, how do you route that traffic properly, considering the workloads themselves don’t have visibility into the specifics of the firewalls they need to work with? Traffic routing of this nature can be implemented in the network through VLANs and policy-based routing techniques, but this does not scale to hundreds or thousands of applications, is tedious to manage, limits workload mobility, and makes the whole infrastructure more error-prone and brittle.

- The physical location of the firewall or network service largely determines the topology of the network, and has historically restricted where workloads could be placed. But modern data center and cloud networks need to provide required services and policies independent of where the workloads are placed: on this rack or that, on-premises or in the cloud.

Whereas physical firewalls might have been incorporated into an application network through VLAN stitching, other network services generally require their own protocols and techniques to be included in an application deployment, such as Source NAT for application delivery controllers or WCCP for WAN optimization. The complexity of configuring services for a single application deployment thus increases measurably.

Cisco was first to address these problems when we started enabling virtual networks with our Nexus 1000V virtual switch. Nexus 1000V was the first to implement a technology called “service chaining,” in a feature we called vPath. vPath ran in the Nexus 1000V switch and hypervisor to intercept network traffic and redirect it to the required virtual service nodes. I described vPath in detail in an earlier post. Initially, it wasn’t really service chaining, because it only worked for one service at a time, specifically the Virtual Security Gateway (VSG) firewall, but eventually we allowed for multiple service node hops between workloads, such as going through VSG and then a NetScaler 1000V application delivery controller.

vPath worked very well in VXLAN virtual overlay networks and remains a key component of our Nexus 1000V virtual networking deployments. However, vPath is limited to virtual network services, and the ideal service chaining solution should support both physical and virtual services, as well as physical and virtual networks and workloads.

Service chaining has a lot of synergy with Software Defined Networking (SDN) technology because it is essentially a policy construct. For example, a service chain may implement a policy that all TCP port 80 traffic must first pass through a firewall, then an intrusion prevention system (IPS), and then a load balancer before reaching the destination address.
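The port 80 policy just described can be expressed as a classification rule that maps matching traffic to an ordered list of services. The Python sketch below is a minimal illustration under stated assumptions, not how ACI or any Cisco product represents policy: the `Packet` and `ChainPolicy` types, the `classify` function, and the service names are hypothetical, and matching is limited to protocol and destination port.

```python
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple


@dataclass(frozen=True)
class Packet:
    """Hypothetical packet summary; a real classifier would match on more fields."""
    protocol: str
    dst_ip: str
    dst_port: int


@dataclass(frozen=True)
class ChainPolicy:
    """Hypothetical policy: traffic matching (protocol, dst_port) traverses `chain` in order."""
    protocol: str
    dst_port: int
    chain: Tuple[str, ...]


# The example policy from the text: TCP port 80 -> firewall -> IPS -> load balancer.
POLICIES = (
    ChainPolicy("tcp", 80, ("firewall", "ips", "load-balancer")),
)


def classify(pkt: Packet, policies: Sequence[ChainPolicy] = POLICIES) -> Optional[Tuple[str, ...]]:
    """Return the ordered service chain for pkt, or None if no policy matches."""
    for policy in policies:
        if policy.protocol == pkt.protocol and policy.dst_port == pkt.dst_port:
            return policy.chain
    return None


if __name__ == "__main__":
    print(classify(Packet("tcp", "10.0.0.5", 80)))  # ('firewall', 'ips', 'load-balancer')
    print(classify(Packet("tcp", "10.0.0.5", 22)))  # None
```

A real classifier would match on many more fields (source, destination, application identity) and would hand its result to the data plane rather than return a tuple.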

This concept is particularly relevant in the Cisco Application Centric Infrastructure (ACI) policy model, where policies are applied to application network profiles (ANPs), which define how application workloads and services should work together and which policies are implemented throughout the application network. Understanding which policies are applied at which service nodes, and how each workload can reach those services, is a foundation of the ACI policy model.

Along comes Network Services Headers (NSH), a new Service Chaining protocol…

Building on the early success of vPath, but needing to extend the concept to physical devices and networks and to address more extensive “chaining” requirements for cloud use cases, Cisco has developed Network Services Headers (NSH), a new service chaining protocol that is rapidly gaining acceptance in the industry. Drawing on lessons learned from earlier versions of vPath, and recognizing that NSH would only succeed with broad acceptance from a wide variety of network services and security vendors, Cisco quickly moved to propose NSH as a standard and has brought a multi-vendor proposal to the IETF.

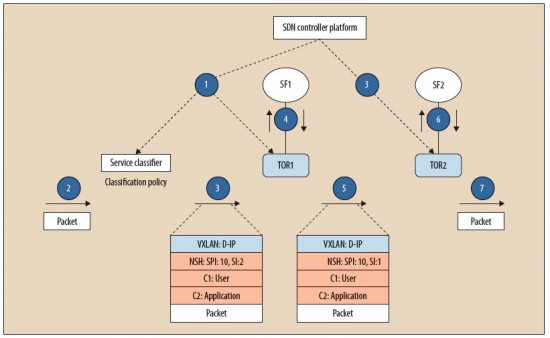

NSH is added to network traffic, in the packet header, to create a dedicated services plane that is independent of the underlying transport protocol. In general, NSH identifies the sequence of service nodes (the service path) that the packet must be routed through before reaching its destination address, and it can carry metadata about the packet and/or the service chain along with the packet.

NSH is typically inserted by a network node, often on ingress into a network. It sits between the original packet or frame and any outer network transport encapsulation, such as MPLS, VXLAN, or GRE. Because NSH is transport-agnostic, it can be carried by many widely deployed transport protocols.
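To illustrate where NSH sits on the wire, here is a minimal Python sketch that builds an NSH header and wraps it in an outer transport header. It assumes the header layout later standardized in RFC 8300 (a 4-byte base header followed by a 4-byte service path header carrying a 24-bit Service Path Identifier and an 8-bit Service Index), which may differ in detail from the draft discussed in this post. To keep it short, it uses MD Type 2 with no metadata, and a basic GRE header (RFC 2784, no optional fields) with the NSH Ethertype 0x894F as the outer encapsulation; the outer IP header that would carry GRE is omitted. The class and function names are hypothetical.

```python
import struct
from dataclasses import dataclass

# Field values assume the RFC 8300 layout, not necessarily the original draft.
NSH_VERSION = 0
MD_TYPE_2 = 0x2            # variable-length metadata; this sketch carries none
NEXT_PROTO_ETHERNET = 0x3  # the encapsulated original packet is an Ethernet frame
ETHERTYPE_NSH = 0x894F     # GRE protocol type indicating an NSH payload


@dataclass(frozen=True)
class NshHeader:
    spi: int  # 24-bit Service Path Identifier: which service path
    si: int   # 8-bit Service Index: position along that path
    ttl: int = 63
    next_protocol: int = NEXT_PROTO_ETHERNET

    def pack(self) -> bytes:
        """Serialize the base header and service path header (8 bytes, network order)."""
        if not (0 <= self.spi < 1 << 24 and 0 <= self.si < 1 << 8
                and 0 <= self.ttl < 1 << 6 and 0 <= self.next_protocol < 1 << 8):
            raise ValueError("SPI, SI, TTL or next protocol out of range")
        length_words = 2  # total NSH length in 4-byte words: base + service path header
        base = ((NSH_VERSION << 30) | (self.ttl << 22) | (length_words << 16)
                | (MD_TYPE_2 << 8) | self.next_protocol)
        service_path = (self.spi << 8) | self.si
        return struct.pack("!II", base, service_path)

    @classmethod
    def unpack(cls, data: bytes) -> "NshHeader":
        """Parse the first 8 bytes back into an NshHeader."""
        base, service_path = struct.unpack("!II", data[:8])
        return cls(spi=service_path >> 8, si=service_path & 0xFF,
                   ttl=(base >> 22) & 0x3F, next_protocol=base & 0xFF)


def encapsulate(inner_frame: bytes, nsh: NshHeader) -> bytes:
    """Place NSH between the original frame and a basic outer GRE header."""
    gre = struct.pack("!HH", 0x0000, ETHERTYPE_NSH)  # flags/version = 0, protocol type = NSH
    return gre + nsh.pack() + inner_frame


if __name__ == "__main__":
    frame = b"\x00" * 14  # placeholder Ethernet header, not a real frame
    wire = encapsulate(frame, NshHeader(spi=42, si=255))
    print(wire[:12].hex())  # 0000894f0fc2020300002aff
```

The order of the bytes is the point: outer transport first, then NSH, then the untouched original frame. Swapping GRE for VXLAN-GPE or MPLS would change only the outer header, which is what "transport-agnostic" means in practice.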

The need for a standardized approach to service chaining is broadly recognized throughout the industry, and Cisco is gaining a number of supporters for its proposed NSH-based standard at the IETF. For a complete description of the NSH header formats, see the IETF draft proposal. This draft specification has been proposed by a team from Cisco, Citrix, Microsoft, Rackspace, and Red Hat, including Cisco’s ACI business unit, Insieme. Intel and Broadcom are also interested in supporting NSH in their network interface cards (NICs) and switch ASICs, which will be crucial moving forward.

NSH requires a control plane to exchange values with participating service nodes and to provision those nodes with information such as the service paths (and, by association, the service chains) that need to be supported. Because NSH is applicable to many different environments, it does not mandate a single control protocol; rather, widely used control planes must add support for service chaining via NSH.
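As a rough illustration of what such provisioning might produce, the Python sketch below compiles controller-defined service paths into the (Service Path Identifier, Service Index) lookup table that a forwarding node could consult, then traces one packet along a path. It assumes the convention later formalized in RFC 8300, where the Service Index starts high (255 here) and each service function decrements it by one. The names `build_forwarding_table`, `trace`, and `END_OF_PATH` are hypothetical, and a real forwarder would map each entry to a network locator rather than a service name.

```python
from typing import Dict, List, Tuple

# Hypothetical control-plane output: SPI -> ordered list of service functions.
ServicePaths = Dict[int, List[str]]
# What a forwarder might hold: (SPI, SI) -> next hop.
ForwardingTable = Dict[Tuple[int, int], str]

END_OF_PATH = "remove-nsh-and-deliver"


def build_forwarding_table(paths: ServicePaths, initial_si: int = 255) -> ForwardingTable:
    """Compile provisioned service paths into (SPI, SI) -> next-hop entries."""
    table: ForwardingTable = {}
    for spi, functions in paths.items():
        # Keep the final SI above zero so the end-of-path entry stays valid.
        if len(functions) >= initial_si:
            raise ValueError(f"path {spi} is longer than the service index allows")
        for hop, sf in enumerate(functions):
            table[(spi, initial_si - hop)] = sf
        # Once every function has decremented the SI, the path is complete.
        table[(spi, initial_si - len(functions))] = END_OF_PATH
    return table


def trace(table: ForwardingTable, spi: int, initial_si: int = 255) -> List[str]:
    """Follow one packet along a path; each service function decrements the SI by one."""
    hops: List[str] = []
    si = initial_si
    while True:
        hop = table[(spi, si)]
        hops.append(hop)
        if hop == END_OF_PATH:
            return hops
        si -= 1


if __name__ == "__main__":
    table = build_forwarding_table({10: ["firewall", "ips", "load-balancer"]})
    print(trace(table, 10))  # ['firewall', 'ips', 'load-balancer', 'remove-nsh-and-deliver']
```

Because the packet carries only the SPI and SI, the forwarding nodes need nothing more than this table; changing a chain means the control plane pushes new entries, not that every device along the way is reconfigured by hand.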

Commonly used control protocols, both centralized and distributed, support (or will evolve to support) NSH-based service chaining, and the choice among them depends on deployment and operator requirements. The open source OpenDaylight (ODL) SFC project, for example, provides an SDN approach to service chaining via a controller. The controller platform is agnostic with respect to southbound provisioning protocols and uses the appropriate ODL protocols for a given device/service chain combination. These southbound protocols include OpenFlow with Open vSwitch and NETCONF/YANG.

NSH Benefits

As described earlier, network services are currently tightly linked to topology. The IETF SFC group’s “Problem Statement” draft describes additional limitations in existing deployments. To address these limitations, NSH offers a vastly simplified and unified data plane. Specifically, it decouples the service topology from the actual network topology. Rather than being viewed as a “bump in the wire,” a service function becomes an identifiable resource available for consumption from any location in the network.
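The decoupling can be sketched as a level of indirection: a chain names service functions, and a separate registry maps each name to wherever that function currently lives. The Python below is a hypothetical illustration of the idea, not part of NSH itself; `registry`, `resolve_path`, and the addresses (drawn from private and documentation ranges) are made up for the example.

```python
from typing import Dict, List

# Hypothetical registry: service function name -> current locator (an IP address here).
registry: Dict[str, str] = {
    "firewall": "10.1.1.10",
    "ips": "10.2.7.20",
    "load-balancer": "10.3.4.30",
}


def resolve_path(chain: List[str], locators: Dict[str, str]) -> List[str]:
    """Map a logical service chain onto wherever each service function currently lives."""
    missing = [sf for sf in chain if sf not in locators]
    if missing:
        raise KeyError(f"unregistered service functions: {missing}")
    return [locators[sf] for sf in chain]


chain = ["firewall", "ips", "load-balancer"]
before = resolve_path(chain, registry)

# Move the firewall to another rack or into the cloud: only one registry entry changes.
registry["firewall"] = "192.0.2.15"
after = resolve_path(chain, registry)

print(before)  # ['10.1.1.10', '10.2.7.20', '10.3.4.30']
print(after)   # ['192.0.2.15', '10.2.7.20', '10.3.4.30']
```

Relocating the firewall changes only its registry entry; the chain definition and the workloads that use it are untouched, which is the sense in which the service is no longer a "bump in the wire."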

Agile, elastic service insertion is a core component of any modern network, and as service chaining matures, interoperability is a must. The proposed NSH protocol standard provides the data-plane information needed to construct topologically independent service paths, greatly simplifying the provisioning of network services and the deployment of associated applications.