There has been a lot of recent online discussion about automation of the datacenter network, how we all may (or may not) need to learn programming, the value of a CCIE, and similar topics. This blog tries to look beyond all that. Assume network configuration has been automated. How does that affect network design?

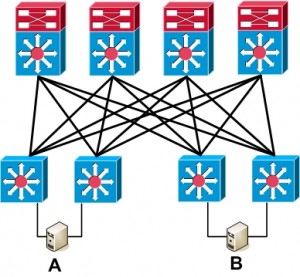

Automation can greatly change the network landscape, or it may change little. It depends on how you presently design your networks. Why? Because the programmers probably assumed you’ve built your network in a certain way. As an example, Cisco DFA (Dynamic Fabric Automation) and ACI (Application Centric Infrastructure) are based on a Spine-Leaf Clos tree topology.

Yes, some OpenFlow vendors have claimed to support arbitrary topologies. Arbitrary topologies are just not a great idea. Supporting them makes the programmers work harder to anticipate all the arbitrary things you might do. I want the programmers to focus on key functionality. Building the network in a well-defined way is a price I’m quite willing to pay. Yes, some backwards or migration compatibility is also desirable.

The programmers probably assumed you bought the right equipment and put it together in some rational way. The automated tool will have to tell you how to cable it up, or it might check your compliance with the recommended design. Plan on this when you look to automation for sites, a datacenter, or a WAN network.
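To make the compliance-check idea concrete, here is a minimal sketch in Python. It assumes the recommended design is a full Spine-Leaf mesh, where every leaf connects to every spine. The device names and the `check_cabling` helper are hypothetical, made up for illustration, and do not reflect how any Cisco tool works.

```python
from itertools import product

def expected_links(spines, leaves):
    """In a spine-leaf fabric, every leaf connects to every spine."""
    return {(leaf, spine) for leaf, spine in product(leaves, spines)}

def check_cabling(spines, leaves, actual_links):
    """Return (missing, unexpected) links relative to the full mesh."""
    expected = expected_links(spines, leaves)
    actual = {tuple(link) for link in actual_links}
    missing = sorted(expected - actual)
    unexpected = sorted(actual - expected)
    return missing, unexpected

# Hypothetical inventory and discovered cabling.
spines = ["spine1", "spine2"]
leaves = ["leaf1", "leaf2", "leaf3"]
actual = [
    ("leaf1", "spine1"), ("leaf1", "spine2"),
    ("leaf2", "spine1"), ("leaf2", "spine2"),
    ("leaf3", "spine1"),
    ("leaf3", "leaf2"),  # leaf-to-leaf daisy chain, not part of the design
]

missing, unexpected = check_cabling(spines, leaves, actual)
print("missing:", missing)
print("unexpected:", unexpected)
```

The point is less the code than the assumption baked into it: the check is only this simple because the topology is well-defined.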

The good news here is that the Cisco automated tools are likely to align with Cisco Validated Designs. The CVDs provide a great starting point for any network design, and they have recently been displaying some great graphics. They’re a useful resource if you don’t want to re-invent the wheel, especially a square wheel. While I disagree with a few aspects of some of them, over the years most of them have been great guidelines.

The more problematic part of this is that right now, many of us are (still!) operating in the era of hand-crafted networks. What does the machine era and the assembly line bring with it? We will have to give up one-off designs and some degree of customization. The focus will shift to repeated design elements and components, namely the type of design the automated tool can work with.

Some network designers are already operating in such a fashion. Their networks may not be automated, but they follow repeatable standards, like an early factory working with interchangeable parts. Such sites have likely created a small number of design templates and then used them repeatedly. Examples: “small remote office”, “medium remote office”, “MPLS-only office”, or “MPLS with DMVPN backup office”.

However you carve things up, there should only be a few standard models, including “datacenter” and perhaps “HQ” or “campus”. If you know the number of users (or size range) in each such site, you can then pre-size WAN links, approximate number of APs, licenses, whatever. You can also pre-plan your addressing, with, say, a large block of /25’s for very small offices, /23’s for medium, etc.
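As a minimal sketch of that kind of pre-planned addressing, Python's standard `ipaddress` module can carve site subnets out of a reserved block. The two parent blocks (`10.100.0.0/16` and `10.110.0.0/16`) and the `carve` helper are assumptions chosen for illustration. Only the /25 and /23 sizes come from the text above.

```python
import ipaddress
from itertools import islice

def carve(block, new_prefix, count):
    """Return the first `count` subnets of length `new_prefix` from `block`."""
    subnets = ipaddress.ip_network(block).subnets(new_prefix=new_prefix)
    return list(islice(subnets, count))

# Hypothetical parent blocks, one per site size.
small_sites = carve("10.100.0.0/16", 25, 3)   # very small offices
medium_sites = carve("10.110.0.0/16", 23, 2)  # medium offices

for net in small_sites + medium_sites:
    # Subtract the network and broadcast addresses.
    print(net, "usable hosts:", net.num_addresses - 2)
```

With the blocks reserved up front, addressing a new site amounts to taking the next unused subnet of the right size.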

On the equipment side, a small office might have one router with both MPLS and DMVPN links, one core switch, and some small number of access switches. A larger office might have one router each for MPLS and DMVPN, two core switches, and more access switches. Add APs, WAAS, and other finishing touches as appropriate. Degree of criticality is another dimension you can add to the mix: critical sites would have more redundancy, or be more self-contained. Whatever you do, standardize the equipment models as much as possible, updating every year or two (to keep the spares inventory simple).
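One way to capture such standards is as data rather than as tribal knowledge. The sketch below is illustrative only: the `SiteTemplate` fields, the access-switch limits (4 and 12), and the `pick_template` helper are assumptions, not figures from any published design.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class SiteTemplate:
    name: str
    routers: int
    core_switches: int
    max_access_switches: int  # assumed sizing limit, for illustration
    subnet_prefix: int

# Smallest template first, so the first fit is the cheapest fit.
TEMPLATES = [
    SiteTemplate("small remote office", routers=1, core_switches=1,
                 max_access_switches=4, subnet_prefix=25),
    SiteTemplate("medium remote office", routers=2, core_switches=2,
                 max_access_switches=12, subnet_prefix=23),
]

def pick_template(access_switches_needed):
    """Return the smallest standard template that fits the site."""
    for template in TEMPLATES:
        if access_switches_needed <= template.max_access_switches:
            return template
    raise ValueError("no standard template fits; extend the standards "
                     "rather than build a one-off")

print(pick_template(3))
print(pick_template(10))
```

Raising an error when nothing fits is a deliberate choice here: it forces a decision about the standards instead of quietly allowing an exception.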

It takes some time to think through and document such internal standards. But probably not as much as you think! And then you win when you go to deploy, because everything becomes repeatable.

This can have huge benefits, even pre-automation. When you have to deploy a new office, you already have a picture of the topology (more or less), you know which current device models are used for each role in the site, and no deep thought is required to complete the site addressing. You can also save on diagrams (if model X sites all look alike, one diagram covers all) and on the effort of wrapping your brain around how each site works.

There are some other things you need to do to make this work. Advance planning is one. Another is self-discipline, sticking with the design models, no exceptions.

Other rules you follow might be that access switches are always dual-homed to core or distribution switches, the access layer is Layer 3 except in small sites, etc. As soon as you deviate, e.g. daisy-chain switches because fiber wasn’t available back to the distribution layer, you create more work for whoever has to deploy or troubleshoot. This affects not just that site but every site: once exceptions exist, nobody can assume that any given site is cabled to the standard. And when troubleshooting, staff will have to hand-sketch the topology over and over again, because they will be too busy to maintain site-by-site topology diagrams.

I see Layer 3 as providing “snap-in blocks” in a modular design. You can draw a routed portion of the network and have a good idea how it interacts with the rest of the network. The interactions are predictable, and localized, at least as long as you don’t turn your network into a CCIE lab full of static routes, redistribution, route filters, and such.

With Layer 2, you need to know everything a VLAN touches. My personal rule: datacenter VLANs should live only in the datacenter, and must terminate at the core or core/distribution switches. Any user access VLANs either live solely on the access switch, or terminate at the building distribution switches. That keeps things more modular. If you don’t do that, then you can’t just diagram each module, you have to diagram the whole mess, since VLANs go anywhere or everywhere. A symptom of this is when you have a VLAN diagram which is a large wall chart resembling a printed circuit board.
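The VLAN rule above is easy to audit once you have a list of where each VLAN appears. Here is a minimal sketch, assuming you can export (VLAN, switch, module) tuples from your inventory. The switch names, module labels, and the `vlans_spanning_modules` helper are hypothetical.

```python
from collections import defaultdict

def vlans_spanning_modules(vlan_ports):
    """Map each VLAN seen in more than one module to the modules it touches.

    vlan_ports: iterable of (vlan_id, switch, module) tuples.
    """
    modules_by_vlan = defaultdict(set)
    for vlan_id, _switch, module in vlan_ports:
        modules_by_vlan[vlan_id].add(module)
    return {vlan: sorted(mods)
            for vlan, mods in modules_by_vlan.items() if len(mods) > 1}

# Hypothetical export of where each VLAN is configured.
vlan_ports = [
    (10, "dc-core1", "datacenter"),
    (10, "dc-leaf3", "datacenter"),
    (20, "bldgA-acc1", "building-a"),
    (20, "bldgA-dist1", "building-a"),
    (30, "dc-core1", "datacenter"),
    (30, "bldgB-acc2", "building-b"),  # datacenter VLAN leaking into a building
]

violations = vlans_spanning_modules(vlan_ports)
print(violations)
```

An empty result means each module can be diagrammed on its own. Anything else is a VLAN you have to trace across the whole network.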

You guessed it, I’m a fan of modular design. Like sub-components that feed into the assembly line as parts of a completed automobile, say.

Over the years I’ve followed Cisco closely concerning the topic of network design, and designed courses on that (and other topics). We already know a lot about what to do and not do concerning network design. See also the CCDA / CCDP courses, certifications, and the related book by John Tiso.

To sum up, what are the early candidates for modules for automation? Remote sites, a user building or campus, and the WAN come to mind as early contenders. I look forward to seeing the network design models implicit in automation tools such as APIC for the Enterprise. See also the Cisco partner site for Glue Networks.

The other clear contender for automation is the datacenter. Agility and vMotion have led us to every VLAN everywhere. Well, that’s simple to understand and diagram! But it brings with it the risk of Spanning Tree instability. Technologies such as vPC, FabricPath, and now DFA and ACI were created to resolve that problem.

If you take a sharp look at FabricPath, DFA, and ACI, as in some of my prior blogs, you see overlays being used in conjunction with edge or leaf switch forwarding tables. The common element is a routed overlay forwarding traffic between the edge switches. The focus has shifted from the FabricPath overlay, which maps a MAC address to a FabricPath edge device as the routed tunnel destination, to forwarding within a VRF, to policy-based forwarding. The overlay technology also changed somewhat in each iteration. The topology design is rather similar, with some subtle changes evolving L2 distribution / access to a Spine / Leaf Clos tree. (See also my Practical SDN blogs.)

Oh, and about that programming for SDN? It can’t hurt to know what is happening under the hood, especially in big networks. Someone will certainly have to be comfortable with how the tools work, integrating the tools, and troubleshooting problems. You’d still better understand how the forwarding technology works and how to troubleshoot it. That way you can understand the impact of any network design constraints the automation tools require.

I’m personally looking forward to CiscoLive in San Francisco, and hearing more / deeper details about DFA and ACI, as well as APIC for the Enterprise. I hope to see you there!

Hashtags:

#CiscoChampion #NetworkDesign #Automation #DFA #ACI #CCDP #netcraftsmen

Twitter: @pjwelcher