Since we started shipping the Nexus 3548 with AlgoBoost to our customers at the beginning of November, there has been growing interest in testing and verifying the switch’s latency in different traffic scenarios. What we have found so far is that while network engineers may be well experienced in testing the throughput capabilities of a switch, verifying latency can be challenging, especially when it is measured in the tens and low hundreds of nanoseconds!

I discussed this topic briefly when doing a hands-on demo for TechWise TV a short time ago.

https://www.youtube.com/watch?v=DN6cohlZqvE&feature=youtu.be

The goal of this post is to give an overview of the most common latency tests, show how the Nexus 3548 performs in those tests, and detail some subtleties of low latency testing for multicast traffic. This post will also address the confusion around two-source multicast tests, which we’ve heard some vendors try to emphasize.

Unicast Traffic

The most common test case is to verify throughput and latency when sending unicast traffic. RFC 2544 provides a standard for this test case. The most stressful version of the RFC 2544 test uses 64-byte packets in a full mesh at 100 percent line rate. Full mesh means that every port sends traffic at the configured rate to every other port.

Figure 1 – Full Mesh traffic pattern

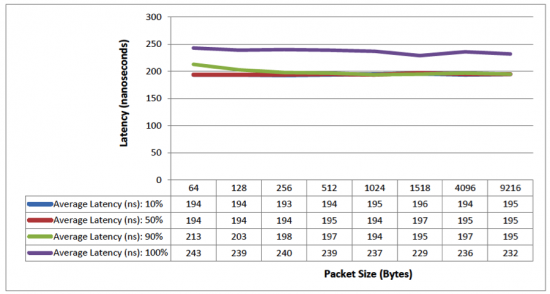
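To get a feel for the scale of the full mesh test, the short sketch below counts the port-to-port flows and the frame rate each port has to sustain. It assumes a 48-port switch with 10 Gigabit Ethernet ports and the usual 20 bytes of per-frame wire overhead (preamble, start-of-frame delimiter and inter-frame gap); the function names are made up for illustration and are not part of any test tool.

```python
from itertools import permutations

# Per-frame overhead on the wire: 7 bytes preamble + 1 byte SFD + 12 bytes inter-frame gap.
ETHERNET_OVERHEAD_BYTES = 20


def full_mesh_flows(num_ports):
    """Return every (source, destination) port pair in a full mesh."""
    return list(permutations(range(1, num_ports + 1), 2))


def line_rate_pps(frame_bytes, link_bps=10_000_000_000):
    """Frames per second that one port sends at 100% line rate."""
    bits_on_wire = (frame_bytes + ETHERNET_OVERHEAD_BYTES) * 8
    return link_bps / bits_on_wire


flows = full_mesh_flows(48)
print(len(flows))                # 2256 unidirectional flows
print(round(line_rate_pps(64)))  # 14880952 frames per second per port
```

With 64-byte frames every port is both sending and receiving close to 14.9 million frames per second, which is why this variant of the test is considered the most stressful.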

The following graph shows the Nexus 3548 latency results for the Layer 3 RFC 2544 full mesh unicast test, with the Nexus 3548 operating in warp mode.

Figure 2 – Layer 3 RFC 2544 full mesh unicast test

We can see that the Nexus 3548 consistently forwards packets of all sizes in under 200 nanoseconds at 50% load, and in less than 240 nanoseconds at 100% load.

Multicast Traffic

Another common test case is to send multicast traffic between ports and measure the latency. RFC 3918 provides a standard for this test. A typical configuration for a 48-port device is a 1-to-47 fan-out: one port is the multicast source and the other 47 ports are receivers that join the multicast groups. The following graph shows the Nexus 3548 latency results for the Layer 3 RFC 3918 1-to-47 multicast test, with the Nexus 3548 operating in warp mode.

Figure 3 – Layer 3 RFC 3918 1-to-47 multicast test

We can see that the Nexus 3548 consistently forwards packets of all sizes in under 200 nanoseconds at up to 90% load, and in less than 220 nanoseconds at 100% load.

Multicast Traffic – 2+ Sources

Let’s now talk about a multicast scenario in which two or more ports are multicast sources. For this example we are using 2 sources and 46 receivers on the Nexus 3548.

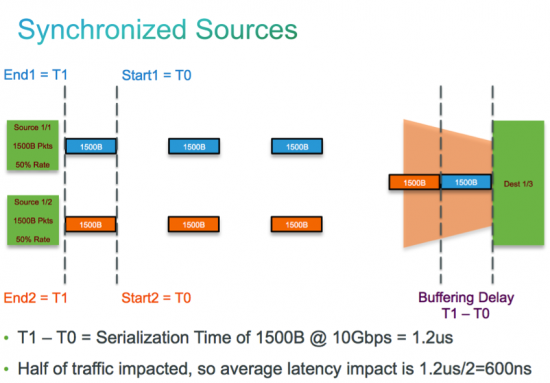

By default, the traffic generator will begin sending packets from the two sources at exactly the same time. This method of operation is called synchronous mode. As shown in Figure 4, since two packets at a time are destined for the same egress port, one of them has to wait in the egress port's buffer while the other is transmitted, which introduces latency. This type of non-RFC test will cause issues on any type of switch due to queuing delay. Figure 5 shows the Nexus 3548 latency results when the traffic generator has its two multicast source ports configured in synchronous mode.

Figure 4 – Synchronized sources packet flow

Figure 5 – Layer 3 RFC 3918 2-to-46 multicast 49.9% load test synchronous mode

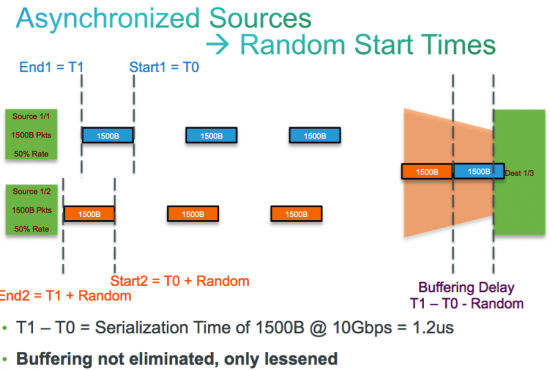

Traffic generators typically have an option for these types of test cases called asynchronous mode. This mode introduces a random delay between packets being sent from multiple sources. As shown in Figure 6, this random delay reduces the observed latency, since it gives the switch more time to process the first packet that arrives at the egress port. Figure 7 shows the Nexus 3548 latency results when the traffic generator has its two multicast source ports configured in asynchronous mode. We can see that the latency in asynchronous mode is improved compared to synchronous mode, but is still higher than what is shown in a standard RFC 3918 test due to buffering delay.

Figure 6 – Asynchronous sources packet flow

Figure 7 – Layer 3 RFC 3918 2-to-46 multicast 49.9% load test asynchronous mode
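The difference between the two modes can be illustrated with a very simplified queuing model. The sketch below is not a model of the Nexus 3548 or of any particular traffic generator: it assumes an ideal 10 Gigabit Ethernet egress port that transmits one frame at a time, two sources that each send at 49.9% load, and an asynchronous mode represented by a fixed phase offset between the sources chosen at random for each run. It only estimates the extra queuing wait, not the switch's forwarding latency, and all names are hypothetical.

```python
import random


def serialization_ns(frame_bytes, link_bps=10_000_000_000):
    """Time to put one frame (plus 20 bytes of wire overhead) on the link."""
    return (frame_bytes + 20) * 8 / link_bps * 1e9


def mean_queue_wait_ns(frame_bytes, load, offset_ns, frames=500):
    """Mean wait in an ideal egress FIFO fed by two equal-rate sources.

    offset_ns is how far source B's frames trail source A's frames.
    """
    t_ser = serialization_ns(frame_bytes)
    period = t_ser / load  # spacing between frames of a single source
    arrivals = []
    for i in range(frames):
        arrivals.append(i * period)              # source A
        arrivals.append(i * period + offset_ns)  # source B
    arrivals.sort()

    port_free_at = 0.0
    total_wait = 0.0
    for t in arrivals:
        start = max(t, port_free_at)  # wait if the port is still busy
        total_wait += start - t
        port_free_at = start + t_ser
    return total_wait / len(arrivals)


# Synchronous mode: both sources fire at the same instant (offset 0).
sync_mean = mean_queue_wait_ns(64, 0.499, 0.0)
print(f"{sync_mean:.1f}")  # 33.6 (every second frame waits one frame time)

# Asynchronous mode (assumed model): average over many random phase offsets.
random.seed(1)
period = serialization_ns(64) / 0.499
offsets = [random.uniform(0, period) for _ in range(200)]
async_mean = sum(mean_queue_wait_ns(64, 0.499, o) for o in offsets) / len(offsets)
print(async_mean < sync_mean)  # True
```

Even in this idealized model the asynchronous case still shows some queuing wait, because with a combined load of 99.8% on the egress port the two streams overlap for most offsets. That is consistent with the results above: better than synchronous mode, but not as low as a single-source test.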

We’ve heard some vendors try to emphasize the two-source multicast test to point out a supposed weakness of the Nexus 3548. It’s important to understand that any switch from any vendor would exhibit an increase in latency with this test, in both synchronous and asynchronous mode.

Lastly, we’ve seen customers encounter higher-than-expected latency when performing 100% line rate testing using a traffic generator. In these cases, we’ve found that clocking differences between the traffic generator and the switch cause the issue: the internal system clocks of the traffic generator and the switch are not always in sync. Each clock is allowed to deviate by ±100 PPM from nominal per the 10 Gigabit Ethernet standard, so the two can differ by up to 200 PPM. In cases where the traffic generator port is clocked slightly higher than the switch, traffic at 100% line rate arrives faster than the switch can transmit it, so it is buffered at the egress port, introducing latency. In the opposite case, when the switch is clocked slightly higher than the traffic generator, no traffic will be buffered and the switch’s advertised nominal latency will be seen. To verify this, you can adjust the PPM clock value, which most traffic generators expose in the traffic generator port properties.
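A rough back-of-the-envelope calculation shows why such a small clock offset matters. The sketch below assumes a 10 Gigabit Ethernet port running at exactly 100% line rate and an egress buffer that never fills; `ppm_offset` is a hypothetical parameter meaning "traffic generator clock minus switch clock", so a positive value means the generator is the faster of the two.

```python
def backlog_growth(ppm_offset, link_bps=10_000_000_000):
    """Estimate egress backlog growth at 100% line rate.

    ppm_offset: generator clock minus switch clock, in PPM
                (assumed convention; positive = generator is faster).
    Returns (excess bits per second, added latency in ns per second of test).
    """
    # Only a faster generator builds a backlog; a faster switch simply drains.
    excess_fraction = max(ppm_offset, 0.0) * 1e-6
    excess_bps = excess_fraction * link_bps
    # Each second, the queue grows by excess_bps bits, which take
    # excess_bps / link_bps seconds to drain at the egress rate.
    added_latency_ns_per_s = excess_bps / link_bps * 1e9
    return excess_bps, added_latency_ns_per_s


excess, latency = backlog_growth(100)
print(f"{excess:.0f} bit/s excess, {latency:.0f} ns added per second")
# 1000000 bit/s excess, 100000 ns added per second

print(backlog_growth(-100))  # (0.0, 0.0): switch is faster, nothing queues
```

Under these assumptions a 100 PPM offset adds on the order of 100 microseconds of queuing latency for every second the test runs, which dwarfs a nominal latency measured in hundreds of nanoseconds.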

When troubleshooting a potential clocking discrepancy between the Nexus 3548 switch and a traffic generator, the following NX-OS command can be used to check the clock offset, in PPM, that the port sees on the traffic it is receiving:

# sh ha in mtc-usd ni-clk [port#] 268435456

Where [port#] is the port that is receiving traffic from the traffic generator.

Using this output, you can determine the difference in PPM between the 3548 and the traffic generator. If the result is a positive number, the switch is clocked slightly lower than the traffic generator; if it’s negative, the switch is clocked slightly higher.

The sample output below shows a negative offset for PPM, indicating the switch is clocked slightly higher than the traffic generator connected to port 17, meaning we would not expect any buffering to occur during a 100% line rate test.

r4-3548# show hardware internal mtc-usd ni-clk 17 268435456

port PPM

====================================

17 10G:MTC: 0x0c4bf111 P 17 :0x00000000 -1000000.00000

References:

Cisco Nexus 3548 Switch Performance Validation (Spirent)

You may also want to check out the following blog posts:

Introducing Cisco Algo Boost and Nexus 3548 – Breaking 200 ns latency barrier ! by Berna Devrim (@bernadevrim)

and the blog interviews from Gabriel Dixon with Cisco Engineers on various aspects of Nexus 3548 and Cisco Algo Boost.