Recently, you may have noticed Cisco discussing Kubernetes quite a bit. Back in October we announced our partnership with Google to bring joint hybrid cloud solutions to market that include GKE, Google’s managed Kubernetes offering. In February, a number of articles here on the Cisco Cloud Blog covered the Cisco Container Platform, which has Kubernetes at its core. But before both of those announcements, there was Contiv.

At our core, even as we expand into other cloud markets, Cisco is fundamentally a networking company, and Contiv plays an important role in carrying that legacy into the microservices future that so many developers are gravitating towards. As more about our relationship with Google becomes public, it is important to revisit this key component, which solves a critical problem facing anybody who wants to run container clusters at scale and in a way that can interact with existing infrastructure.

In other words, let’s revisit why Contiv is so important.

Kubernetes Networking Is Hard

Consider the following, whose source comes from Cisco Principal Engineer Sanjeev Rampal:

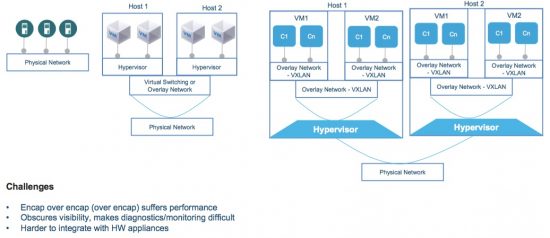

The right-hand side of this diagram depicts one way that a Kubernetes cluster can be deployed, namely on top of virtual machines, which have their own set of cascading overlay networks. Kubernetes can similarly be deployed on top of bare metal, which simplifies the diagram slightly, but the layering can still be problematic. The center and left side of the diagram show existing VMs and bare metal machines that might hold resources that containers on the right-hand side need to access, since the images within those containers form parts of a larger application.

Now suppose that the container C1 on VM1 needs to talk to another container Cn on the same VM. Would you want the network packets going all the way out to the physical network just to facilitate communication between containers running in the same VM? Of course not; that creates performance issues. Across this architecture, container-to-container connections have to be balanced against container-to-VM and container-to-bare-metal scenarios, and on top of those performance trade-offs, the whole thing is difficult to diagnose.

All of these issues illustrate why Kubernetes networking can be a difficult game of prioritizing different needs.

Contiv Gives You Options

Fortunately, Kubernetes was designed to have pluggable network strategies and Contiv gives operators a variety of options to best meet their specific needs. Here’s how it works:

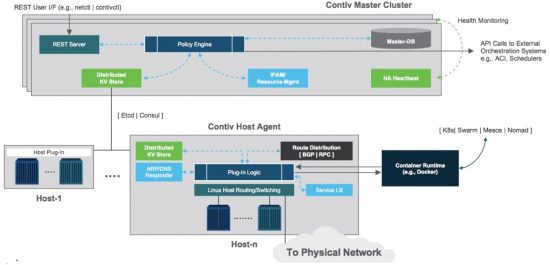

A policy engine plugs into the Kubernetes Master and Host Agents plug into the nodes. Both pieces use whatever key/value store is installed on the cluster to move policy information around, so that network paths can be maintained between containers in the cluster regardless of which node they are on, as well as between containers and physical hardware outside the cluster. The policies can also allow traffic from one source to one destination but deny all other sources, improving the security of the overall system.

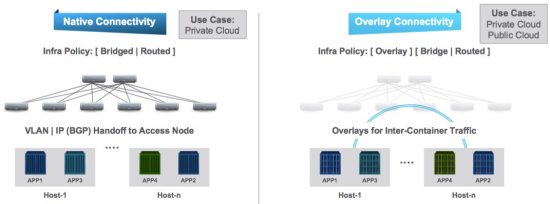
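To make the "allow one source, deny all others" idea concrete, here is a minimal sketch in Python. It is not Contiv's API or data model; the `Rule` and `Policy` names, the group labels, and the port number are all hypothetical and chosen only for illustration. It shows a default-deny check in which traffic passes only if an explicit rule matches.

```python
from dataclasses import dataclass


# Hypothetical rule shape for illustration only; not Contiv's data model.
@dataclass(frozen=True)
class Rule:
    source: str       # label of the group sending traffic
    destination: str  # label of the group receiving traffic
    port: int         # destination port the rule applies to


class Policy:
    """Default-deny policy: traffic passes only if an explicit rule allows it."""

    def __init__(self, rules):
        # Frozen dataclasses are hashable, so rules can live in a set.
        self._allowed = set(rules)

    def is_allowed(self, source, destination, port):
        return Rule(source, destination, port) in self._allowed


# Allow only the "web" group to reach the "db" group on port 5432.
policy = Policy([Rule("web", "db", 5432)])

print(policy.is_allowed("web", "db", 5432))    # True
print(policy.is_allowed("batch", "db", 5432))  # False
```

In a real cluster the rules would live in the shared key/value store and be enforced by the agents on each node; the sketch only captures the decision logic.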

With these pieces in place, the network administrator can then choose from a variety of configuration options including Native or Overlay connectivity:

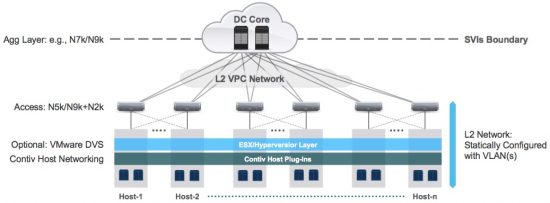

L2+, which provides the ease of L2 but, like L3, avoids flooding:

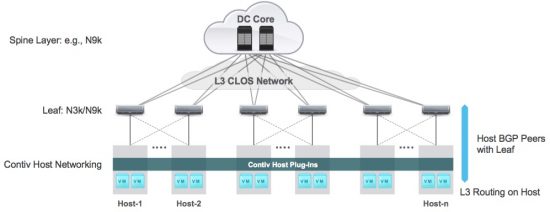

a scalable, distributed Layer 3 fabric:

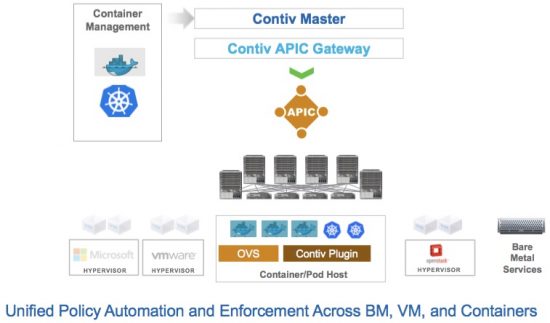

or a full ACI integration, so that policies can be configured from a single APIC and control containers, VMs, and bare metal all from one place.

Summary

Kubernetes networking can be hard, as administrators weigh the needs of different container connectivity scenarios. Fortunately, Kubernetes networking is also modular, and Contiv can plug in to bring a profile-based approach that not only simplifies a Kubernetes networking implementation but also provides options depending on what a particular network administrator is trying to prioritize. Together, the two become a big part of Cisco’s on-premises container strategy and offer compatibility with Cisco’s larger profile-based networking strategy.

Do you really care about Contiv for Kubernetes?

For example, Kubernetes is currently at 1.10.x.

The Contiv installer 1.2.0 only supports Kubernetes 1.8. (too bad)

Contiv doesn't support NodePort for Kubernetes 1.9.x or above. (very bad)

Hello there,

It sounds like you'd prefer some specific version support and a particular NodePort feature. That's certainly understandable. The great thing about Contiv being such a vibrant open source community is how easy it is to have voices heard on things like what you're raising. I'd encourage you to let your feelings be known to the community starting here:

https://github.com/contiv/netplugin/issues