The recently concluded OpenStack Austin Summit was a fantastic showcase for the growing maturity and adoption of OpenStack. The fundamental infrastructure services for compute, networking, storage, and identity, together with ancillary services for image management, security, and monitoring, have evolved to the point where these building blocks can be combined to satisfy interesting use cases.

One such use case is the deployment and management of L4-7 network services in an on-demand, elastic, scalable, and easy-to-consume composable fashion. This complex use case decomposes into several independent but complementary problems. Some of these are being individually tackled in different projects and sub-projects in the OpenStack ecosystem. For instance, the Neutron *aaS sub-projects define the resource model for network services; the Networking SFC sub-project defines traffic steering abstractions; the Astara project performs Neutron agent-level network orchestration for services; and the Tacker project aims to bridge with the ETSI MANO NFV architecture.

For the past couple of years I have been involved with the Group Based Policy (GBP) project, which uses policy as a unifying principle to leverage all this richness in the OpenStack ecosystem. GBP provides a framework for achieving a high level of automation by deriving network parameters from the definition of intent for the application, the network, and the network services. When a user requests a service chain, GBP understands and manages the resources for those network services based on prior policy definitions.

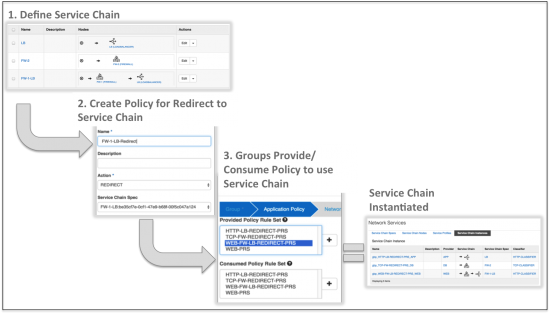

The GBP user workflow for service chain creation can be described in three steps – (1) define the Service Chain (composition of individual network services), (2) create a policy that redirects traffic matching a classifier to the Service Chain, (3) associate this policy with the Groups of endpoints that are communicating.
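To make the three steps concrete, here is a minimal Python sketch of the objects involved. It is only an illustrative model, not the GBP API: the class names, the `provided`/`consumed` attributes, and the `services_for` helper are all hypothetical, and the provider/consumer split is an assumption about how the policy is associated with the two communicating Groups.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass(frozen=True)
class ServiceChain:
    """Step 1: an ordered composition of network services (hypothetical model)."""
    name: str
    services: Tuple[str, ...]


@dataclass(frozen=True)
class Classifier:
    """Describes the traffic a policy applies to."""
    protocol: str
    port: int


@dataclass(frozen=True)
class RedirectPolicy:
    """Step 2: redirect traffic matching a classifier to a Service Chain."""
    name: str
    classifier: Classifier
    chain: ServiceChain


@dataclass
class Group:
    """A Group of endpoints; step 3 attaches policies to it."""
    name: str
    provided: List[RedirectPolicy] = field(default_factory=list)
    consumed: List[RedirectPolicy] = field(default_factory=list)


def services_for(protocol: str, port: int, consumer: Group, provider: Group) -> List[str]:
    """Return the services that matching consumer-to-provider traffic traverses."""
    wanted = Classifier(protocol, port)
    for policy in provider.provided:
        # The redirect applies only if both Groups share the policy
        # and the traffic matches its classifier.
        if policy in consumer.consumed and policy.classifier == wanted:
            return list(policy.chain.services)
    return []  # no redirect policy applies to this traffic


# Step 1: define the Service Chain.
chain = ServiceChain("web-chain", ("firewall", "loadbalancer"))

# Step 2: create a policy that redirects matching traffic to the chain.
policy = RedirectPolicy("redirect-http", Classifier("tcp", 80), chain)

# Step 3: associate the policy with the communicating Groups.
web = Group("web", provided=[policy])
clients = Group("clients", consumed=[policy])

http_path = services_for("tcp", 80, clients, web)
ssh_path = services_for("tcp", 22, clients, web)
```

In this model, only traffic that matches the classifier between the two associated Groups is steered through the chain; everything else is left alone, which mirrors the intent-driven nature of the workflow.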

That’s it! Those three easy steps capture the user’s intent to deploy a Service Chain. The predefined operator policies are able to match the user’s intent to the relevant and optimal rendering of Network Services in the appropriate tenant context. We demonstrated this solution as a part of our Hands-On Workshop at Austin. You will find more details about the workflow and policy configuration here. Even if you missed the workshop (or you attended and want to explore further), we have all the instructions for you to try this with a cloud-in-a-box devstack setup. Give it a spin and tell us what you think.

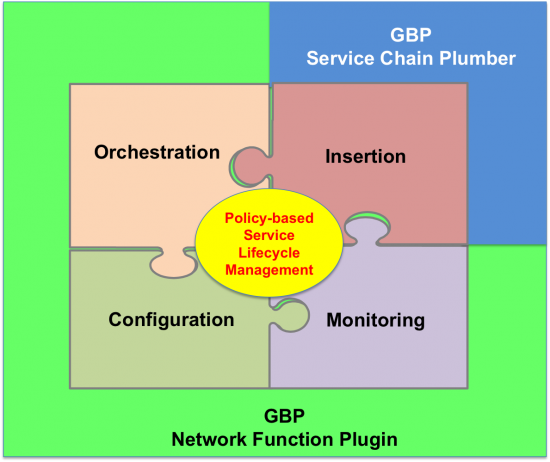

While the above is all good, and is validated in production, what I am really excited about is the next phase in this feature's evolution, called Network Function Plugin (NFP). NFP comprehensively addresses the policy-based lifecycle management of Network Services by consuming GBP’s policy and network context, and using it to orchestrate, configure, and monitor the lifecycle of Network Services. NFP seamlessly spans from under-the-cloud to managing the Network Service VMs in the over-the-cloud tenant context. No more burning floating IPs for every service VM, and no messy out-of-band external network connectivity before you can even get started. NFP defines southbound interfaces that allow any network service to be easily integrated and realized in a Service Chain. This solution truly democratizes the deployment of network services. We like to call it Bring Your Own Function (BYOF)!

We have been working on the design and implementation of this feature for a few months now, and it was also demonstrated in the Austin Hands-On Workshop. In an upcoming post I will outline how you can write a simple Network Service that can be deployed in a Service Chain in a few easy steps. Stay tuned! Meanwhile, the NFP framework is evolving fast, and we invite you to participate in its evolution.

And as a final wrap on Austin, I would like to thank my co-presenters Ivar (Cisco), Igor (Intel), and David (SunGard), who helped make the hands-on session exciting and successful, and also, in a big way, Hemanth Ravi and his team at One Convergence, who conceived the NFP feature and have been driving its development and evolution.

This is a transition from BYOD to BYOF. Perhaps, in the near future, BYOsF (sub Function). Thank you!