Another year, another CiscoLive. This was the last year at the London venue, and since it was our third time there, we had a chance to incorporate lessons learned from the previous two years. As a result, I would say the network was quite a success.

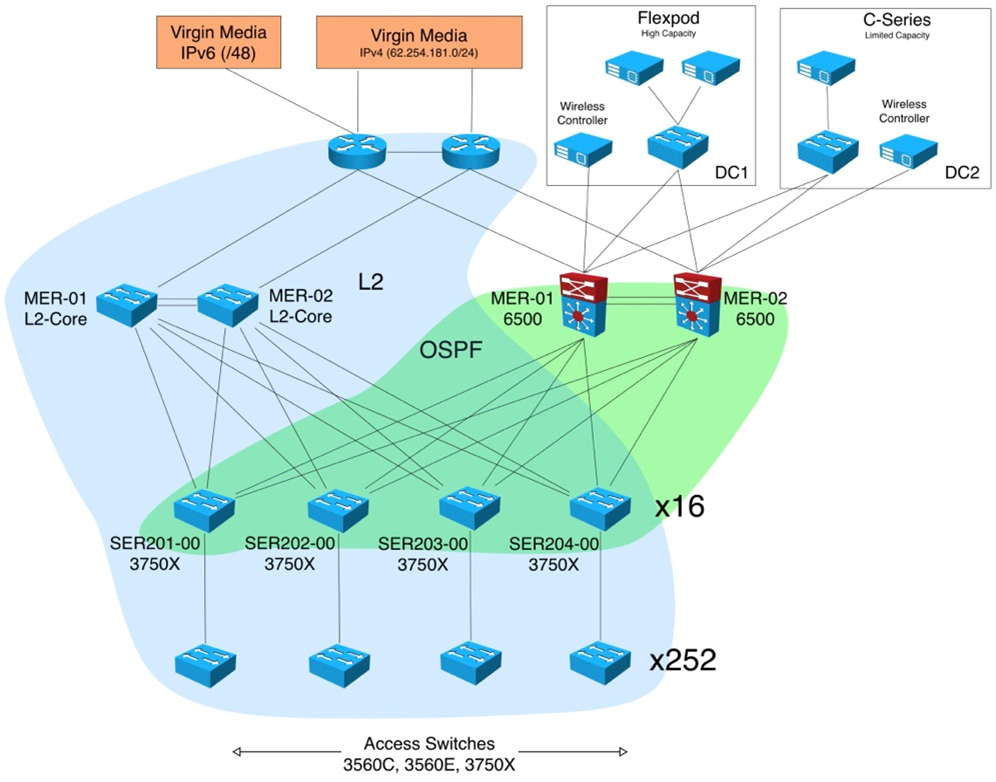

The key element of the design, led by Mark McKillop, was the balance between showcasing the latest technology and keeping the network simple. This year we used a mixed L2 + L3 core design, which reduced the extent to which problems in one part of the network could affect the others. The L2 core was there to handle the “special-case” requests that a routed infrastructure could not accommodate.

For configuration management, we extended last year’s homegrown scripting tool, and this year it grew into a fairly comprehensive database. It held all the information about port assignments, bandwidth guarantees, and public address assignments. What may look like a case of “Not Invented Here” (NIH) syndrome is explained by the fact that the needs of a show network are a very specific niche.
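The post does not describe the tool's schema, so the following is only a minimal sketch of the kind of record such a database might hold and one consistency check it makes easy: catching overlapping public address assignments before they are pushed. The `Assignment` fields and the booth/port names are hypothetical; the overlap test uses Python's standard `ipaddress` module.

```python
import ipaddress
from dataclasses import dataclass
from itertools import combinations
from typing import List, Tuple


@dataclass(frozen=True)
class Assignment:
    customer: str        # hypothetical: exhibitor or booth name
    switch_port: str     # hypothetical port identifier
    bandwidth_mbps: int  # guaranteed bandwidth for this port
    prefix: str          # public prefix, IPv4 or IPv6


def overlapping_prefixes(rows: List[Assignment]) -> List[Tuple[str, str]]:
    """Return pairs of customers whose public prefixes overlap."""
    nets = [(r.customer, ipaddress.ip_network(r.prefix)) for r in rows]
    clashes = []
    for (c1, n1), (c2, n2) in combinations(nets, 2):
        # Only compare prefixes of the same address family.
        if n1.version == n2.version and n1.overlaps(n2):
            clashes.append((c1, c2))
    return clashes
```

For example, assigning 198.51.100.0/28 to one booth and 198.51.100.8/29 to another would be reported as a clash, since the second prefix sits inside the first.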

To simplify management, ports were not shut down by default; instead, unassigned ports were placed into a quarantine VLAN. This let a device on such a port still obtain an IPv4 address and some limited connectivity, and we could still use the information from DHCP option 82 to move the port into the correct VLAN. There was no “captive portal”: since only NOC members had access to the tool, we relied on users reporting “it’s not working”, after which a NOC member fixed the port by hand.
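DHCP option 82 (Relay Agent Information, RFC 3046) carries sub-options inserted by the relay, most commonly sub-option 1 (Agent Circuit ID) and sub-option 2 (Agent Remote ID), which together identify the switch and port a client is attached to. Below is a minimal sketch, assuming the option payload has already been extracted from the DHCP packet, of parsing those sub-options and looking up the port's intended VLAN, falling back to quarantine. The `PORT_DB` contents and `QUARANTINE_VLAN` value are hypothetical, and real circuit/remote ID encodings are vendor-specific.

```python
from typing import Dict

CIRCUIT_ID = 1  # RFC 3046 sub-option 1: Agent Circuit ID
REMOTE_ID = 2   # RFC 3046 sub-option 2: Agent Remote ID

QUARANTINE_VLAN = 999  # hypothetical quarantine VLAN ID

# Hypothetical port database: (remote ID, circuit ID) -> assigned VLAN.
PORT_DB = {(b"switch-hall-a", b"Gi1/0/7"): 120}


def parse_option82(payload: bytes) -> Dict[int, bytes]:
    """Split an option 82 payload (code/length header already stripped) into sub-options."""
    subopts: Dict[int, bytes] = {}
    i = 0
    while i < len(payload):
        if i + 2 > len(payload):
            raise ValueError("truncated sub-option header")
        code, length = payload[i], payload[i + 1]
        end = i + 2 + length
        if end > len(payload):
            raise ValueError(f"sub-option {code} overruns the payload")
        subopts[code] = payload[i + 2:end]
        i = end
    return subopts


def vlan_for_request(payload: bytes) -> int:
    """Return the VLAN the client's port should be in, or the quarantine VLAN if unknown."""
    subopts = parse_option82(payload)
    key = (subopts.get(REMOTE_ID, b""), subopts.get(CIRCUIT_ID, b""))
    return PORT_DB.get(key, QUARANTINE_VLAN)
```

A lookup miss deliberately lands in quarantine rather than raising, mirroring the "never shut, just quarantine" approach described above.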

The tool’s default interface was a simple dropdown menu along with the message “You are currently connected to VLAN ….” We did not need to explain much about how it worked: anyone on the NOC team could log in to the tool from a problematic port and change its VLAN assignment. The new assignment triggered an automatic push of a VLAN-tailored configuration, which proved very handy because the template contained about 20 lines (due to extensive use of safety features such as ACLs, storm control, BPDU guard, etc.).
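The actual template is not published, so here is a minimal sketch, under stated assumptions, of rendering a per-port access configuration from a VLAN assignment with the kinds of safety features the post lists. The interface name, ACL names, and storm-control levels are hypothetical; the commands are common Cisco IOS access-port commands, but availability (notably `ipv6 traffic-filter` on a Layer 2 port) varies by platform and release.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PortAssignment:
    interface: str    # hypothetical, e.g. "GigabitEthernet1/0/7"
    vlan: int
    description: str
    acl_v4: str       # hypothetical per-VLAN IPv4 port ACL name
    acl_v6: str       # hypothetical per-VLAN IPv6 port ACL name


def render_port_config(p: PortAssignment) -> List[str]:
    """Render IOS-style interface configuration lines for one access port."""
    if not 1 <= p.vlan <= 4094:
        raise ValueError(f"invalid VLAN {p.vlan}")
    return [
        f"interface {p.interface}",
        f" description {p.description}",
        " switchport mode access",
        f" switchport access vlan {p.vlan}",
        " switchport nonegotiate",               # no DTP negotiation on user ports
        " spanning-tree portfast",
        " spanning-tree bpduguard enable",       # err-disable if a switch is plugged in
        " storm-control broadcast level 1.00",   # hypothetical thresholds (% of bandwidth)
        " storm-control multicast level 1.00",
        " storm-control action trap",
        f" ip access-group {p.acl_v4} in",
        f" ipv6 traffic-filter {p.acl_v6} in",
        " no shutdown",
    ]
```

Usage: `print("\n".join(render_port_config(PortAssignment("GigabitEthernet1/0/7", 120, "hall-a-booth-12", "V120-IN", "V120-IN-V6"))))` prints the block to push to the switch. Generating the lines from one function keeps every port's safety features identical, which is the main benefit of templating them.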

This proved a very pragmatic move because it allowed the NOC staff to diagnose problems and intervene early, before conference participants could accidentally impact other parts of the network.

This year the network, as usual, was fully dual-stacked. One additional dual-stacked element was the ciscolivelondon.com website, which was the central information point of the event.

Dual-stacking the website was surprisingly easy. I estimate we spent about an hour on the whole process. Half of that time went to a quick IPv6 refresher course with the site’s administrators, to make sure they could be as efficient with IPv6 as with IPv4. The other half went to testing the dual-stack-enabled site. Since the IPv6 fabric was already provisioned, adding the IPv6 address itself was trivial. If your web presence consists of a single site, you really should give dual stack a try now, while the number of IPv6 clients is still small compared to IPv4.

But hurry up: in our measurements, out of 22149 distinct IP addresses hitting the server, 9794 (about 44%) were IPv6. That is a pretty impressive number!
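The post does not say how the distinct addresses were counted; a minimal sketch, assuming you have the client address column from the web server's access log as strings, is shown below. One subtlety worth handling: a server listening on a dual-stack socket can log IPv4 clients as IPv4-mapped IPv6 addresses (`::ffff:a.b.c.d`), which would inflate the IPv6 count if not normalized.

```python
import ipaddress
from typing import Iterable, Tuple


def count_clients_by_family(addrs: Iterable[str]) -> Tuple[int, int]:
    """Return (distinct IPv4 clients, distinct IPv6 clients)."""
    v4, v6 = set(), set()
    for text in addrs:
        addr = ipaddress.ip_address(text.strip())
        # Fold IPv4-mapped IPv6 addresses back into IPv4.
        if addr.version == 6 and addr.ipv4_mapped is not None:
            addr = addr.ipv4_mapped
        (v4 if addr.version == 4 else v6).add(addr)
    return len(v4), len(v6)
```

With the numbers from the show, the IPv6 share is `9794 / 22149`, which is about 44.2%.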

As usual, the wireless component of the conference network was the most interesting part. However, this year it went much more smoothly! It turns out the deployment gotchas are becoming predictable, so I could “catch” the next hurdle in the process before it became a problem.

This year we were running version 7.3 of the code on the Wireless LAN Controller (WLC), which incorporates an enhancement to the Neighbor Binding Table aimed at the high churn of temporary addresses on client devices. It performed very well, so the tricky tradeoffs we had to make last year were no longer needed: everything worked fine with essentially default settings.

Last year, background multicast chatter took up a noticeable portion of the airtime, so this year we tuned a few knobs to minimize that noise.

First, we applied filters on the WLC to block mDNS (UDP port 5353 over both IPv4 and IPv6). This alone dramatically reduced the airtime spent on “housekeeping” traffic and freed it up for user traffic.
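This is not WLC configuration, just a small Python sketch of the match logic described above: drop UDP traffic on port 5353 regardless of address family. mDNS normally uses the groups 224.0.0.251 and ff02::fb, but the rule here keys on the port, as the post describes; matching either source or destination port is an assumption made so that both queries and responses are caught.

```python
from dataclasses import dataclass
from typing import List

MDNS_PORT = 5353  # mDNS, used over both IPv4 (224.0.0.251) and IPv6 (ff02::fb)


@dataclass(frozen=True)
class Packet:
    proto: str     # "udp", "tcp", ...
    dst: str       # destination address, IPv4 or IPv6
    src_port: int
    dst_port: int


def is_mdns(pkt: Packet) -> bool:
    """True for UDP traffic to or from port 5353, independent of address family."""
    return pkt.proto == "udp" and MDNS_PORT in (pkt.src_port, pkt.dst_port)


def apply_mdns_filter(pkts: List[Packet]) -> List[Packet]:
    """Keep everything except mDNS."""
    return [p for p in pkts if not is_mdns(p)]
```

Because the predicate never inspects the address, a single rule covers IPv4 and IPv6 alike, which is why one filter per family on the same port is enough.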

Second, we turned on the multicast RA throttling feature on the WLC to limit the multicast Router Advertisements (RAs) from the router.

Why would you need to do that? Because otherwise every client that joins the network triggers a solicited RA, which IOS, per the RFC, sends to the all-nodes multicast address, so the packet is delivered to every client. IOS itself throttles these packets to one every 3 seconds. However, on a large wireless network even that rate is a bit too much, because each RA has to be sent by all of the access points to all of the clients. Multicast RA throttling on the WLC makes this smarter: once the configured threshold is reached, the WLC stops sending solicited RAs to all clients and instead forwards each one only to the client that requested it.
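The WLC's internal algorithm and parameter names are not described in the post, so the following is only a minimal model of the behavior it describes: within a time window, the first few solicited RAs are flooded as multicast, and once the threshold is reached, further RAs go only to the client that asked. The `period` and `max_multicast` values are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Tuple


@dataclass
class RaThrottle:
    period: float = 600.0    # hypothetical throttle window, in seconds
    max_multicast: int = 10  # hypothetical number of RAs flooded per window
    _window_start: float = field(default=float("-inf"), init=False)
    _sent: int = field(default=0, init=False)

    def deliver(self, now: float, solicitor: str) -> Tuple[str, str]:
        """Decide how a solicited RA arriving at time `now` is delivered."""
        if now - self._window_start >= self.period:
            # Start a new window and reset the multicast budget.
            self._window_start = now
            self._sent = 0
        if self._sent < self.max_multicast:
            self._sent += 1
            return ("multicast", "all-clients")
        # Threshold reached: send the RA only to the soliciting client.
        return ("unicast", solicitor)
```

With `RaThrottle(period=60.0, max_multicast=2)`, the first two solicitations in a minute are flooded and the third goes only to its requester; the budget resets once the window expires. Unicasting past the threshold keeps joining clients fully served while capping the airtime cost of the flood.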

This further reduced the amount of multicast chatter, and the end result was a network that was comfortable to use.

This year, thanks to the code enhancements and the operational and process tweaks, the Cisco Live London network ran much more smoothly and quietly. As a result, I was also able to deliver a couple of sessions instead of tending to the network 24x7.

Shameless plug: one of these sessions was “Hitchhiker’s Guide to IPv6 Troubleshooting”. If you find this blog post interesting, you will likely want to attend it. It is based entirely on practical experience deploying and operating IPv6, and I am happy that it will also be featured at Cisco Live Orlando in June.

I think it is a very positive sign that I can now spend more time sharing experience than in previous years.

IPv6, meanwhile, just works.

See you in Orlando!

