Join Developer Days in New York to Learn How to Get to 9X

Two years ago we set out on a quest to tune Cisco Network Services Orchestrator (NSO) for massive deployments. The primary challenge was transaction throughput, since no one wants a network that is slow or unresponsive. Before you know it, customers will shout “Make your code run faster” or “My system is hanging.”

Today we are happy to announce that we have a significant performance boost for you. I almost dare to say that NSO 6.0 is “The Perfected Sword.” The magic lies in NSO Release 6.0 and its reimagined concurrency control for transaction management. When we started the project, we knew that safe concurrent transaction handling was our best attribute, our greatest enemy, and our biggest potential all at once. That was a challenge, because we had to perfect something that made us who we are. Now we are proud to claim that you will get three (3) times faster transaction throughput simply by upgrading the software, and up to nine (9) times faster if you also engage in optimization. If you are new to NSO and don’t care about the history, you can stop reading now and enjoy the new version!

For those of you who have been with us for a while, or maybe struggled to scale with NSO, I will add a few layers to the history. If you want to know even more and get hands-on, sign up for our next Automation Developer Days, Nov 29-30 in New York!

Shaping NSO for Increasing Demand

With ever-growing network demand, we knew we had to be radical. Future networks need to push through more transactions per second than ever before. Our attempts to help customers optimize their service code were not enough. We knew that we were overprotective: we did too much in the critical section and very little in parallel. So we put our inventor hats on and found a safe way to increase throughput while keeping the same transactional guarantees.

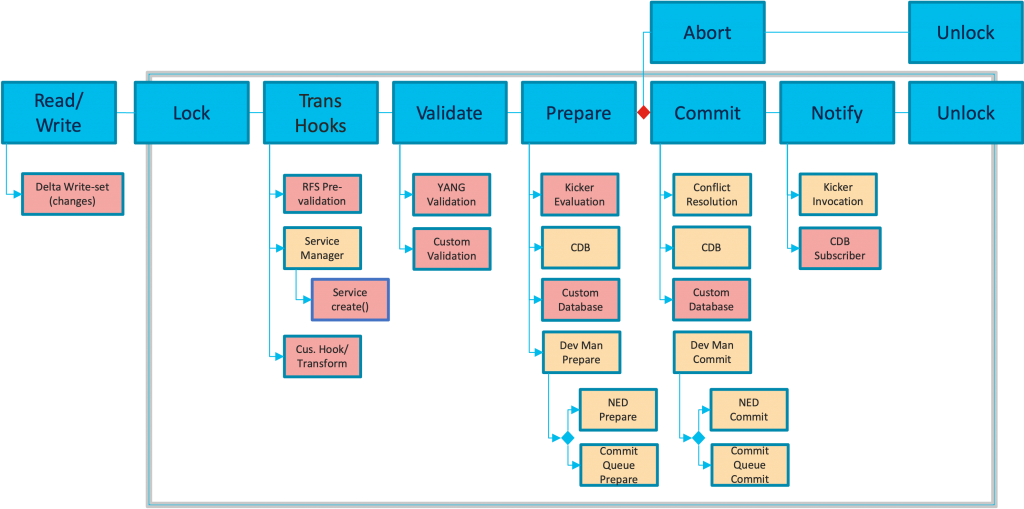

Time-consuming things we did in the critical section:

- Rollback file creation

- Service invocation

- YANG validations of model-driven constructs such as must, when, and leafref

- Kicker evaluations

- Device communication without commit queue

It was tough to realize, but the merits that make NSO so unique can also hurt performance at scale. We cannot expect users to write perfect validation expressions just because we know how. We also understood that we could not achieve sufficient gain unless we challenged the NSO heritage and moved things out of the protected phase, just enough to release the power. That protected phase is what made our transactions fail-safe, but it also prevented some level of parallelism.

Can we run without locks, or can we hold the lock for a shorter time? Either way, we need to manage any code that runs unprotected without adding so much complexity that it eats up the cycles we gain on the other side.

The New Concurrency Model

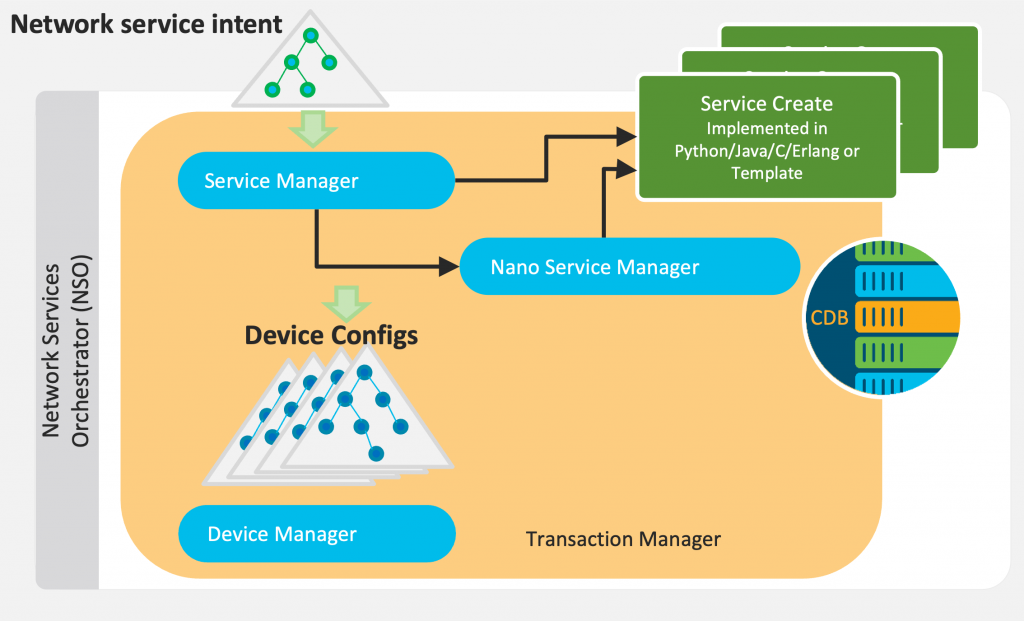

We put a lot of research behind the new design and the parts that control concurrency. The concurrency control of transaction management is the central piece of this project.

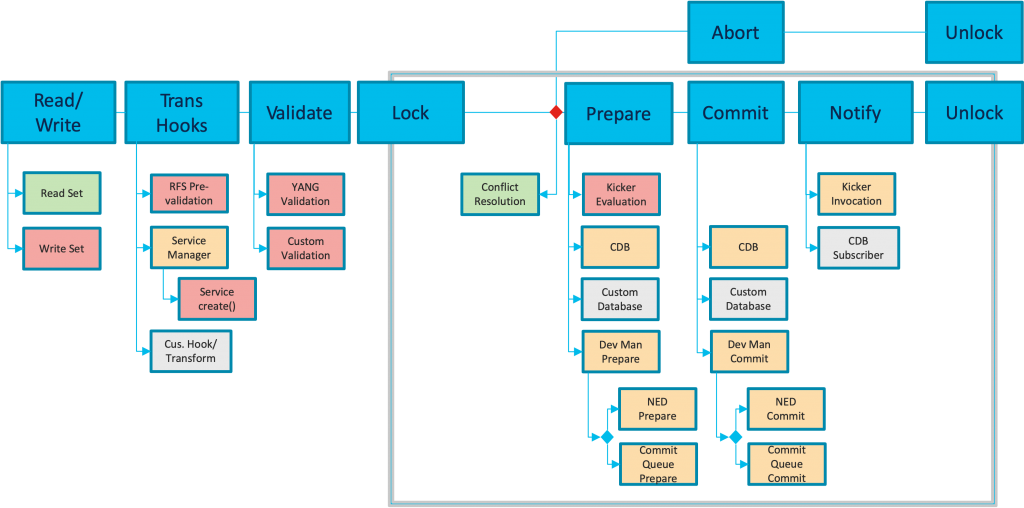

We knew that we could do much more in parallel if we did more “checking” instead of “locking.” We just need to verify that the decisions are still valid when applying the transaction. Service invocations, YANG validations, rollback file creation, and more could potentially run outside the lock if we found a way to detect interference. We went from a pessimistic view of the transaction to an optimistic view to optimize concurrency.

To ensure transaction guarantees, we perform conflict detection to verify the transaction. We basically compare the read-set of the transaction being applied with the write-sets of recently completed transactions. If some other transaction has changed what the current transaction read, then the current transaction must either abort or partly re-run. In this way, we only accept consistent changes. Pretty straightforward, right? Of course, if you do your part to ensure your code is conflict-free, you will avoid transaction restarts, and NSO can run at full speed.
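To make the read-set versus write-set comparison concrete, here is a minimal Python sketch of optimistic conflict detection. It is a toy model, not NSO code: the `Transaction` and `Store` classes, the version counter, and the example paths are all hypothetical names chosen for illustration, and the real algorithm in NSO is certainly more refined.

```python
from dataclasses import dataclass, field


@dataclass
class Transaction:
    """Hypothetical transaction record; not an NSO data structure."""
    name: str
    start_version: int  # commit counter observed when the transaction began
    read_set: set = field(default_factory=set)
    write_set: set = field(default_factory=set)


class Store:
    """Toy datastore that validates transactions optimistically at commit time."""

    def __init__(self):
        self.version = 0
        self.committed = []  # list of (commit version, write-set) pairs

    def begin(self, name):
        return Transaction(name, start_version=self.version)

    def conflicts(self, txn):
        """Paths txn read that were written by transactions committed after it began."""
        overlapping = set()
        for commit_version, write_set in self.committed:
            if commit_version > txn.start_version:
                overlapping |= txn.read_set & write_set
        return overlapping

    def commit(self, txn):
        """Short critical section: check for conflicts, then record the write-set."""
        if self.conflicts(txn):
            return False  # the caller must abort or re-run the transaction
        self.version += 1
        self.committed.append((self.version, set(txn.write_set)))
        return True


# Two transactions start concurrently; the paths are made up for the example.
store = Store()
t1 = store.begin("t1")
t2 = store.begin("t2")
t1.read_set.add("/devices/device[ce0]/config")
t1.write_set.add("/services/vpn[a]")
t2.read_set.add("/services/vpn[a]")  # t2 reads what t1 is about to write
t2.write_set.add("/services/vpn[b]")

t1_committed = store.commit(t1)  # nothing committed since t1 began
t2_committed = store.commit(t2)  # t1 changed a path that t2 read
```

In this sketch `t1` commits and `t2` is rejected, because `t1` wrote a path that `t2` had already read. All the expensive work (building the read-set and write-set) happens before `commit`, so only the comparison itself needs protection. If `t2` had read a different path, both would have committed without ever waiting on each other, which is the gain the optimistic view is after.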

Unexpected Outcomes

Transaction handling is probably one of the most thoroughly tested code sections in NSO, and for good reason. Changing the core architecture is of course challenging, but opportunities also arise along the way.

- Lockless dry-run is one of them. The dry-run transactions will never enter the critical section, not even in LSA. It affects most actions with the dry-run option as well as service check-sync, get-modification, and deep-check-sync.

- Improved device locking is another one, and it allows us to obsolete the wait-for-device commit parameter. The devices are locked automatically before entering the critical section, which simplifies both code and operations.

- Improved parallelism in LSA clusters.

- Improvements backported to the NSO 5.x branch

- Improved commit queue error recovery

- Internal performance improvements in CDB

- Performance improvements for kicker evaluation

Sometimes it Pays Off to Dare a Little More

Sometimes it is worth trying the more advanced path to reach a certain goal. Once you know it works, you can simplify and evaluate. Now we challenge you to upgrade to NSO 6.0 and optimize your software for faster transaction throughput. To learn more, I highly recommend the new Packet Pusher podcast that uncovers the new features in NSO 6.0. As the next step, come to Developer Days in New York in November if you want to know more about the details and how you can gain performance with NSO 6.0. You will dive deeper into this topic in hands-on coding sessions led by our experts. If you can’t come to New York, or want to come prepared, you can always check out the NSO YouTube Channel for the latest content. We have two sessions in particular on the new concurrency model from our previous event in Stockholm: an overview session that explains what we have done, and a deep dive that focuses on the conflict detection algorithm.

Related resources

- Find interactive docs and learning labs for Network Services Orchestrator (NSO)
