Following an excellent introduction to this blog series from Scott Ciccone on how Cisco’s solutions can help customers unlock the power of Big Data, this post focuses on the management innovation that Cisco UCS brings to the Big Data ecosystem.

Access to more and better data is creating new sources of competitive advantage, and it has increased relevance in the connected world of IoE. This is aptly reflected in a quote from Stanford’s Anand Rajaraman: “More data usually beats better algorithms”. Organizations are realizing the essence of this statement as they see increased value from adding more data sources to discover hidden insights, anticipate trends and make better decisions. New opportunities are constantly being identified and new datasets are added, requiring IT teams to have an agile, reliable and scalable infrastructure. Bottlenecks in infrastructure and complexity in management can have a profound impact on the ability of an organization to react to rapidly changing business needs. This calls for an infrastructure that is flexible, adaptable, scalable and easy to manage, to meet both the growing and the changing workloads in the Big Data ecosystem.

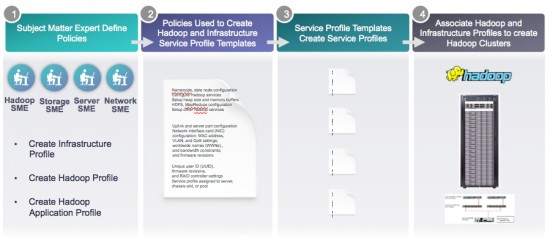

At the core of UCS’s ability to provide unified management across network, server and storage is UCS Manager. Every element of the physical infrastructure, including server identity (LAN addressing, I/O configurations, firmware versions, boot order, QoS policies), can be dynamically configured through software. This logical abstraction simplifies the configuration of Big Data Hadoop nodes and adapts it dynamically to changing workloads, leading to a dramatic reduction in OpEx. UCS Central brings these same capabilities across multiple domains and provides the ability to extend to large clusters of up to 10,000 nodes within the same management pane.
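To make the idea of software-defined server identity concrete, here is a minimal sketch in plain Python. It does not use any Cisco API: the `NodeProfile` class, the `stamp_profiles` helper, the field values and the MAC prefix are all hypothetical, chosen only to illustrate how one logical template could be stamped out across many Hadoop nodes.

```python
from dataclasses import dataclass, replace
from typing import List, Tuple


@dataclass(frozen=True)
class NodeProfile:
    """Hypothetical logical identity for one server (not a Cisco API type)."""
    name: str
    mac_address: str
    firmware_version: str
    boot_order: Tuple[str, ...]
    qos_policy: str


def stamp_profiles(template: NodeProfile, count: int,
                   mac_prefix: str = "02:00:00:00:00") -> List[NodeProfile]:
    """Derive `count` per-node profiles from one template.

    Only the name and MAC address differ per node; firmware, boot order
    and QoS policy are inherited from the template unchanged.
    """
    if not 1 <= count <= 255:
        raise ValueError("this sketch only fills the last MAC octet (1-255 nodes)")
    return [
        replace(
            template,
            name=f"{template.name}-{i:03d}",
            mac_address=f"{mac_prefix}:{i:02X}",
        )
        for i in range(1, count + 1)
    ]


# Illustrative values only; none of these come from a real deployment.
template = NodeProfile(
    name="hadoop-data-node",
    mac_address="",
    firmware_version="example-fw-1.0",
    boot_order=("pxe", "local-disk"),
    qos_policy="platinum",
)
profiles = stamp_profiles(template, 3)
for p in profiles:
    print(p.name, p.mac_address, p.firmware_version)
```

Because the identity lives in data rather than in the hardware, changing the template and re-stamping is all it takes to repurpose the same servers for a different workload, which is the kind of agility described above.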

With the new UCS Director Express for Big Data, these same advantages of flexibility and agility at the physical infrastructure level are now extended into the Hadoop application space. UCS Director Express for Big Data delivers an integrated, policy-based Hadoop infrastructure on the UCS Common Platform Architecture (CPA) for Big Data, an infrastructure architected to provide performance at scale. It is integrated with major Hadoop vendors to provide centralized visibility across the entire Hadoop infrastructure. Enterprises can now provision on-demand Hadoop clusters and manage both physical and software infrastructure from a single management pane.

The advent of Hadoop 2.0 has enabled newer workloads, including interactive and streaming workloads, to run on Hadoop. The industry is poised to witness unprecedented data growth with IoE and a new wave of applications. This calls for an architecture that is designed from the ground up to be extremely nimble in the face of changing workloads. UCS has been built on this very foundation and is constantly innovating to reduce the operational complexity of managing Big Data clusters. With an agile and automated Big Data infrastructure, enterprises can now shift their focus to realizing business value from Big Data analytics and achieving faster time to market.

In the next part of this blog series, we take a step further into a more application-centric approach. Sandeep Agarwal will share how Cisco ACI uses high-level policies to dramatically improve cluster performance, secure the end-to-end data path and reprovision automatically for other workloads. To learn more about Cisco’s vision for the pervasive use of Big Data within enterprises, register for the October 21st executive webcast ‘Unlock Your Competitive Edge with Cisco Big Data Solutions’.