Last week I had the rare pleasure of being able to attend a storage conference (rare in the sense that I am usually one of the speakers, rather than one of the attendees). It was SNIA’s Storage Developer’s Conference, and, like most events, it had both things that were interesting and worthwhile and things that left something to be desired.

The lasting impression that I walked away with, however, was something that went beyond any one particular conversation, presentation, or technology. Indeed, the thoughts that have been rattling around in my brain for the past week made me realize that, if we (those of us in the industry) aren’t careful, the future looks extremely convoluted and confusing. At worst, we may actually wind up mismatching solutions to problems and taking giant steps backwards, locking ourselves into a perpetual game of ‘catch-up’ as we struggle to accomplish what we can already do today using traditional storage methodology and equipment.

The Polysemic Nature of Storage

“Polysemic” means, essentially, “having multiple meanings,” and the storage industry is rife with polysemic terms.

As brilliant as some of the sessions were, or as disappointing as some of them turned out to be, there was one major perspective that permeated all of them: there was only one definition of storage – the speaker’s own. That is, if you happened to come from a different area of the storage industry, well, you didn’t just not count; you didn’t exist in their world.

Sure, we all have strong biases in whatever technology we choose to work within:

- Storage manufacturers, whether they make SSDs, Flash, or “Spinning Rust” (as traditional hard drives are now pejoratively called), all see themselves as working in “storage”

- Similarly, manufacturers of storage networks (including Cisco) tend to take a somewhat more holistic view, but treat the traffic that is passed from host to target as “storage,” which means the network is a critical part of the meaning

- Developers, like those at the conference, tend to work more on the calls to and from disks, and see the usage of that data as “storage,” because it’s where the data is often turned into useful information

- Creators of file systems and access protocols, like the presenters who focus on Hadoop, Ceph, SMB3 or NFS4 variants (yes, I’m deliberately lumping these together to prove a point) define storage as a means of access and mobility

If you are not in the storage industry, you may look at all of these perspectives (and these are just four; there are many more terms and definitions in use) and see that all of them can be considered part of “storage,” a term that necessarily becomes more nebulous and poorly constrained the more elements are added to the mix.

Inside the storage industry, however, it was amazing just how isolated each of these groups is from the others. What I mean is that many very intelligent people appear to be willfully ignorant of the nature of the entire storage ecosystem. Even in the SMB track, which I thoroughly enjoyed, there was a nonchalance about the effect of running increased SCSI traffic over the network to enable improved clustering capabilities in Hyper-V.

Okay, if that sounds like Greek to you, here’s the bottom line: the developers who are creating these technologies seemed to be unconcerned about the ripple-effect consequences of throwing more data onto a network. In other words, they always assumed that a reliable, stable network with enough capacity would just “be there.”

I think the thing that troubled me most was that, when asked directly about networking concerns, the developers responded that “it didn’t matter, we’re using RoCE (RDMA over Converged Ethernet).” In other words, “hey, once it leaves my server, it’s not my problem.”

I had a very hard time getting my head around this. It seems intuitive (to me, at least) that if you’re going to throw exponentially increasing amounts of data onto a network, there may be, uh, consequences. As I’ve noted before, whenever you use a protocol that depends on a lossless network, like RoCE, you’re going to have to architect for that type of solution.

To these developers, however, it seemed as if only what happened inside the server mattered; once a packet left the server it was no longer their problem, and it didn’t become their problem again until it hit the end storage target.

This is all well and good, until you start getting into the question of Software-Defined Storage.

Software-Defined, or Software-Implemented Storage?

Alan Yoder of Huawei made an excellent observation during a conversation with Lazarus Vekiarides. In a casual discussion about SDS, Alan pointed out that many of the characteristics on most SDS wishlists appear to describe an implementation of storage rather than a definition of storage. I have to confess, this distinction really threw me for a loop precisely because it underscored the very problem with semantics I was having.

[Update: September 28, 2013: Laz wrote a blog about his thoughts on this as well.]

Why? Because he’s absolutely right. There is a huge difference between creating a system which merely connects the dots and implements a storage system, and one that defines the storage paradigm.

Management programs that implement storage and ease the stress of provisioning already exist. UCS Director (née Cloupia), for instance, does this. Software like this lets you select a server and storage instance and specify how much storage you need, how many CPUs to use, and even how long you want the instance to exist. I think (this is outside my wheelhouse, so I admit I’m not as confident about this claim) that competitors have similar software solutions as well.

None of these solutions define the storage network, however. To my knowledge (and if I am wrong, I will happily correct my assumptions), none of them dynamically observes network bandwidth and reallocates resources based on usage, for instance by altering the minimum ETS (Enhanced Transmission Selection, which is used to guarantee minimum bandwidth to traffic classes in an Ethernet network) allocation.
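
To make the idea concrete, here is a minimal Python sketch of the kind of reallocation I mean. It assumes a controller that already knows each traffic class’s guaranteed floor and its recently observed demand; the function name `reallocate_ets`, the class names, and the numbers are all hypothetical, and nothing here talks to a real switch or DCBX implementation. It only shows the arithmetic: every class keeps its floor, the spare bandwidth follows demand, and the integer percentages still add up to 100, as ETS bandwidth percentages do.

```python
from typing import Dict


def reallocate_ets(floors: Dict[str, int], demand: Dict[str, float]) -> Dict[str, int]:
    """Return integer ETS bandwidth percentages that sum to exactly 100.

    floors: hypothetical minimum percentage guaranteed to each traffic class.
    demand: recently observed load per traffic class (any consistent unit).
    Each class keeps its floor; the spare share is split in proportion to
    demand, then largest-remainder rounding keeps the total at 100.
    """
    if not floors or set(floors) != set(demand):
        raise ValueError("floors and demand must name the same, non-empty set of classes")
    spare = 100 - sum(floors.values())
    if spare < 0:
        raise ValueError("floors add up to more than 100 percent")

    total_demand = sum(demand.values())
    if total_demand == 0:
        # No traffic observed: split the spare share evenly.
        raw = {tc: spare / len(floors) for tc in floors}
    else:
        raw = {tc: spare * demand[tc] / total_demand for tc in floors}

    # Floor plus the whole-number part of each class's proportional share.
    alloc = {tc: floors[tc] + int(raw[tc]) for tc in floors}

    # Give any leftover points to the classes with the largest fractional parts.
    leftover = 100 - sum(alloc.values())
    by_fraction = sorted(floors, key=lambda tc: raw[tc] - int(raw[tc]), reverse=True)
    for tc in by_fraction[:leftover]:
        alloc[tc] += 1
    return alloc


# Hypothetical example: storage traffic has dominated recent demand.
print(reallocate_ets({"storage": 40, "lan": 20, "mgmt": 10},
                     {"storage": 8.0, "lan": 2.0, "mgmt": 0.0}))
# {'storage': 64, 'lan': 26, 'mgmt': 10}
```

With floors of 40/20/10 percent and storage dominating recent demand, the storage class rises to 64 percent and the LAN class to 26, while management stays at its 10 percent floor.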

Why is this important? Because without these capabilities, you are confining your software to a very, very limited subset of compatible storage protocols and hardware. You are relegated to the types of storage solutions that rely on the lowest common denominator of compatibility. In fact, you can go too far: all the high performance of SSDs won’t save you from the layers upon layers of virtualization necessary to make it all work. For many storage applications, this means we’ve taken a huge step backwards.

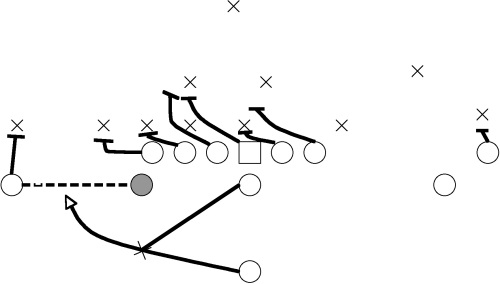

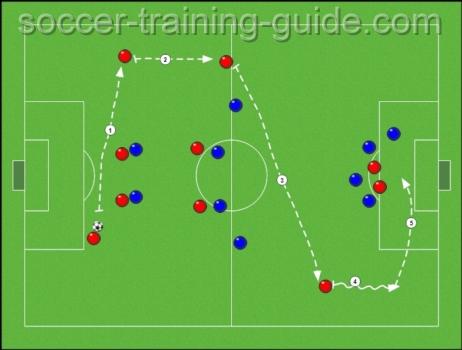

Think of it like this: the game of Football has many meanings, depending upon who you ask. An implementation of football can consist of choosing one of many plays.

However, if you ask someone else about what they consider to be the best plays for football, their idea may look something like this:

You can write software to design and implement plays all day long, but it does not define the game of football. To extend the (imperfect) analogy, if Football were Storage, we still have no means of defining which type of Storage we want to play, and therefore no clue as to the best way to implement it!

Defining Software-Defined

There are a lot of opinions and projects in the industry that have the name “Software-Defined Storage” attached to them. We know, for instance, that EMC has its ViPR program (which always makes me think of the TSA, sadly), and VMware has dipped its toe in with the misleadingly named VSAN (which is more of a virtual DAS system, but that’s a topic for another day). The OpenStack project is working on bringing Fibre Channel block storage into Cinder, its block storage service, and of course SNIA’s Storage Developer’s Conference had numerous vendors who claimed to have solutions that fit into this “software-defined storage” category as well (e.g., Cloudbyte, Cleversafe, Jeda Networks).

In fact, it seems like nearly everyone has a “software-defined storage” offering that “prevents vendor lock-in,” as DataCore puts it. [September 26, 2013 Update: In the four days since I first posted this blog, vendors EMC, Nexenta, Gridstore, Red Hat, HP, VMware, and Sanbolic, along with analysts such as Howard Marks and 451 Research, have all been running a full-court press on the subject. I wouldn’t hold my breath that they’re all referring to the same thing, though. Someone with more time than I have should probably create some sort of compatibility matrix to figure out just how much of the Venn diagram is covered.]

It seems to me, though, that each of these solutions fails to accomplish what it claims to do: define the storage solution holistically. In other words, each one either takes one of the small subsets of storage discussed above and embellishes it, or attempts to build a better mousetrap for storage access and mobility. Or, to continue the football analogy, many solutions choose a game and then try to figure out how to implement the plays.

None of this should be considered a bad thing, mind you! I’m a big proponent of trying to make storage solutions more coherent and approachable.

I suppose I get concerned, however, when I see solutions that appear to root us more firmly in any one particular paradigm and lock us out of the performance flexibility and breadth of storage options that exist today. Isn’t that, after all, what “software-defined” is supposed to liberate us from?

My Thoughts

This is the part where I make the standard disclaimer – these are my thoughts and mine alone, so you should judge them as my mere observations rather than any ‘official’ claim or direction from Cisco or my colleagues. Your mileage may vary, all rights reserved, no animals were harmed in the making of this blog. Don’t read anything strategic into this – these are just my own feverish musings.

In my warped little mind, I see a “Software-Defined Storage” environment as one that is longitudinal and holistic. That is to say, the software dynamically adapts to storage characteristics over time, from the server through the network to the storage targets. This would mean defining each OSI layer so that it can handle storage optimized for each application’s requirements.

Here’s a pie-in-the-sky example: data centers have multiple applications running on a variety of storage protocols. Some of these applications require server-to-server communication (such as the SCSI over SMB I described above), some require dedicated bandwidth for low-latency, low-oversubscription connectivity (such as database applications), and some require lower-priority, best-effort availability (such as NFS mounts).

From a systems and networking perspective, we’re talking about the entire OSI stack, from the physical layer all the way up to the application layer. Each of these layers has its own best practices and configuration requirements. A software-defined storage solution should be able to access each of these layers and modify the system as a whole to get the best possible performance, rather than shoe-horning all applications into, say, SCSI over SMB because it’s “easiest at layer 4” and most applicable for Hyper-V clustering. (That’s just an example.)

Software-Defined Storage implies a Data Center that can change the definition of storage relationships over time, not just tweak attributes

Imagine, though, if you could initiate an application in such a way that the software monitors the data over time and recognizes capacity and bandwidth hotspots, evaluating latency, oversubscription ratios, ETS QoS, and even protocol/layer inefficiencies (like running lossless iSCSI when TCP/IP over a lossy network would actually be more efficient). Take the advances in Flash, SSDs, and server-side caching, along with the well-proven virtualized storage solutions we already have, and add longitudinal metrics and continuous re-evaluation.
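
The monitoring half of that idea is the easiest part to sketch. Below is a minimal Python illustration, assuming per-link utilization samples (as a fraction of link capacity) arrive from somewhere; the `HotspotMonitor` class, the link names, the window size, and the 80 percent threshold are all hypothetical choices. Averaging over a window is just one simple way to flag sustained hotspots rather than reacting to every momentary burst.

```python
from collections import deque
from typing import Deque, Dict, List


class HotspotMonitor:
    """Flag links whose utilization stays high across a window of samples.

    Hypothetical helper: a link counts as a hotspot only when it has a full
    window of samples and their average exceeds the threshold, so one
    momentary burst does not trigger a reconfiguration.
    """

    def __init__(self, window: int = 5, threshold: float = 0.8) -> None:
        if window < 1:
            raise ValueError("window must be at least 1")
        self.window = window
        self.threshold = threshold
        self.samples: Dict[str, Deque[float]] = {}

    def record(self, link: str, utilization: float) -> None:
        """Store one sample; utilization is a fraction of link capacity (0.0-1.0)."""
        buf = self.samples.setdefault(link, deque(maxlen=self.window))
        buf.append(utilization)  # the deque drops the oldest sample automatically

    def hotspots(self) -> List[str]:
        """Return the links whose windowed average exceeds the threshold."""
        return sorted(
            link
            for link, buf in self.samples.items()
            if len(buf) == self.window and sum(buf) / len(buf) > self.threshold
        )


monitor = HotspotMonitor(window=3, threshold=0.8)
for u in (0.90, 0.85, 0.95):   # sustained load on a hypothetical spine uplink
    monitor.record("spine-1", u)
for u in (0.20, 0.99, 0.30):   # a single burst on a hypothetical leaf port
    monitor.record("leaf-2", u)
print(monitor.hotspots())  # ['spine-1']
```

Only the uplink with sustained load is flagged; the leaf port’s single burst averages out to roughly 0.5 of capacity.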

Need more capacity for your mission-critical applications? A finely tuned SDS would dynamically allocate additional guaranteed bandwidth at Layer 2. Network topology causing unacceptable iSCSI performance? Change from lossy to lossless, or vice versa. Have a choice between various types of storage protocols (e.g., iSCSI, FCoE, object)? Have the system do an impact analysis and choose the best definition of that storage solution.
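
The policy side can be sketched the same way. The Python below is a hypothetical rule set, assuming a controller that collects tail latency, frame drops in lossy classes, and PFC pause frames in lossless classes for each application. The `Observation` and `Objective` types, their field names, and the thresholds are illustrative assumptions, and the function only recommends actions; a real system would still need an impact analysis before changing anything.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Observation:
    """Hypothetical metrics an SDS controller might collect for one application."""
    app: str
    p99_latency_ms: float
    drop_rate: float            # fraction of frames dropped (lossy classes)
    pause_frames_per_s: float   # PFC pause frames received (lossless classes)
    lossless: bool              # is the application's traffic class lossless today?
    ets_min_pct: int            # current ETS minimum bandwidth, in percent


@dataclass
class Objective:
    """Hypothetical service-level targets for the same application."""
    max_p99_latency_ms: float
    max_drop_rate: float
    max_pause_frames_per_s: float


def recommend(obs: Observation, slo: Objective) -> List[str]:
    """Suggest reconfigurations; this sketch never applies them itself."""
    actions: List[str] = []
    if obs.p99_latency_ms > slo.max_p99_latency_ms:
        actions.append(f"raise ETS minimum for {obs.app} above {obs.ets_min_pct}%")
    if not obs.lossless and obs.drop_rate > slo.max_drop_rate:
        # Drops are hurting a lossy class: consider a lossless class instead.
        actions.append(f"evaluate moving {obs.app} to a lossless class")
    if obs.lossless and obs.pause_frames_per_s > slo.max_pause_frames_per_s:
        # Heavy pausing can back up other flows: lossy plus TCP recovery may do better.
        actions.append(f"evaluate moving {obs.app} to a lossy class")
    return actions


slo = Objective(max_p99_latency_ms=5.0, max_drop_rate=0.001, max_pause_frames_per_s=100.0)
db = Observation("db", p99_latency_ms=12.0, drop_rate=0.02, pause_frames_per_s=0.0,
                 lossless=False, ets_min_pct=30)
print(recommend(db, slo))
# ['raise ETS minimum for db above 30%', 'evaluate moving db to a lossless class']
```

Keeping recommendation separate from action is a deliberate choice here: it leaves room for the impact analysis to veto a change that would help one application at another’s expense.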

(Ethernet, of course, provides a great basis for this. It’s one of the few media that allow such diverse modification for so many applications; I can’t think of another medium that lets you move from lossy to lossless and back again. Just an observation, of course.)

Of course, if this scares you, perhaps “software-defined storage” isn’t the right way to go. 🙂 There is absolutely nothing wrong with sticking with the tried-and-true.

Next Thoughts

I admit that there is more “science fiction” than “data center fact” in what I’ve described. That would be a fair criticism, I believe. However, I think it’s equally fair to observe that any proponent of “software-defined storage” who glosses over the entire storage ecosystem, or who relies on the silo in which they work as the sole source of SDS breakthroughs, is limited in scope. Either that, or my imagination is far too unlimited. 🙂

As I said, I have no special knowledge of or grand design for Software-Defined anything, and I’m still in a “sponge-mode” learning zone, so it would be foolhardy to read too deeply into my musings. I do think, however, that this subject deserves open and clear discussion so as to avoid the accusation that “Emperor SDS has no clothes.” I also think it’s the only way to avoid the “buzzword bingo” problem when it comes to storage.

Personally, I think it would be a shame if it wound up that way. The storage industry as a whole – as an adaptive ecosystem – has a real opportunity to do some interesting things and create a true paradigm shift in the way it all works. Storage administrators and consumers are not the most adventurous bunch, of course, but that doesn’t mean we can afford to think of reliable access to data the same way over the next ten or twenty years.

Ultimately, I think the answer lies in thinking bigger and more expansively, with the true big picture in mind. We miss a unique and potentially revolutionary opportunity if we merely attempt to create a Franken-storage system out of patchwork software implementation silos that think the rest of the ecosystem is “all things created equal.”

In any case, I believe the topic deserves better consideration than it’s been given. I welcome and encourage all thoughts, ruminations, pontifications, elaborations, meditations, and reflections. In other words, tell me what you think. 🙂

Good article; you make some very good points that I’ve also noticed as a critical observer.

1) Among other things, I do Cisco SAN, as in network side of storage. I’m amazed that no EMC tech I’ve ever talked to at a site knows what a FLOGI is or why it might be relevant. “Nothing but the GUI.”

2) Microsoft was (and still is) an early denier of the network being less than perfect. File shares cause a BSOD when the network is down. Worse, dragging a file across Explorer for a file share (or the CD drive) causes a 10-20 second delay while the file system is accessed or something. That’s just lame programming, only tested on a fast LAN.

3) So to put one of your thoughts briefly, maybe SDS should allow us to specify ancillary configuration items, which depend on the specified characteristics (degree of reliability, redundancy, speed) we ask the SDS for?

Some really good points. I will say that I’ve known quite a few EMC people who understood what a FLOGI is, though, to be fair. 🙂

I really like your suggestion in 3). I think that anything “Software-Defined” worth its salt should allow for the kind of ‘nerd knobs’ used for performance tweaking, of course. But what you’re talking about seems to be a few steps beyond that. For example, “reliability” itself is a category that contains several criteria. Something defined by software should definitely be able to adhere to and monitor those types of criteria, without question.

Thanks for the reply!

Your vision of software-defined storage seems a bit network-centric to me. No surprise, I guess, given where we’re each coming from. 😉 Here’s the thing. Data has an inertia that neither computation nor networking has. It’s *really* expensive to rearrange where data is stored. We’re not talking seconds, which is still an eternity in many computing domains. We’re talking hours. Days. Even weeks. If you want to change replication levels, it’s going to take a while. If you want to enable or disable encryption, it’s going to take a while. If you want to add or remove storage and have the system migrate data so that your disks and servers are all equally utilized . . . you get the idea. Changing protocols or tweaking layer-two attributes is kind of missing all the problems that storage people actually need to worry about.
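
Jeff’s point about data inertia is easy to check with back-of-the-envelope arithmetic. The Python below is a sketch under stated assumptions: decimal units, a single sustained effective rate, and no allowance for metadata work, rebuilds, or rebalancing overhead. The function name and the example capacities and rates are hypothetical.

```python
def migration_hours(data_tb: float, effective_gb_per_s: float) -> float:
    """Hours needed to move data_tb terabytes at a sustained effective rate.

    Assumes decimal units (1 TB = 1000 GB) and a constant rate that already
    reflects any throttling needed to keep production I/O healthy. Metadata
    work, rebuilds, and rebalancing overheads are ignored.
    """
    if effective_gb_per_s <= 0:
        raise ValueError("effective rate must be positive")
    seconds = data_tb * 1000 / effective_gb_per_s
    return seconds / 3600


# Hypothetical scenarios: (terabytes, effective GB/s)
for tb, rate in [(100, 1.0), (1000, 1.0), (1000, 0.25)]:
    hours = migration_hours(tb, rate)
    print(f"{tb:>5} TB at {rate} GB/s: {hours:7.1f} hours ({hours / 24:.1f} days)")
```

Even at a steady 1 GB/s, a petabyte takes more than eleven days to move; throttled to a quarter of that, it takes over six weeks.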

Software-defined storage is about changing the “shape” of data as it sits at rest, more than it’s about changing the way that you access it. How much durability? How many aggregate IOPS (not MB/s)? What ratio of raw to usable space? Give me storage with these characteristics and *poof* it’s there, but now it needs to persist far longer than any network connection (and through more kinds of failures). It’s not “holistic” to ignore everything that needs to happen on the other side of the servers’ NICs or HCAs. If there needs to be more synergy between SDS reconfiguring the storage and SDN reconfiguring access to it, then great, but neither should be looking down their nose at the other for failing to do it all.
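
The “ratio of raw to usable space” question has a simple closed form for the two most common data layouts. The sketch below assumes full-copy replication and an MDS erasure code such as Reed-Solomon with k data chunks and m parity chunks, each chunk on a separate device; the `Protection` type and the function names are hypothetical, and real systems add their own overheads (metadata, spare capacity, placement rules) on top.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Protection:
    """Raw bytes stored per usable byte, and device losses the layout survives."""
    raw_to_usable: float
    tolerated_failures: int


def replication(copies: int) -> Protection:
    """n full copies: n raw bytes per usable byte; survives losing n - 1 copies."""
    if copies < 1:
        raise ValueError("need at least one copy")
    return Protection(float(copies), copies - 1)


def erasure_coding(k: int, m: int) -> Protection:
    """k data + m parity chunks with an MDS code (e.g., Reed-Solomon).

    Stores (k + m) / k raw bytes per usable byte and survives the loss of
    any m chunks, assuming each chunk sits on a separate device.
    """
    if k < 1 or m < 0:
        raise ValueError("k must be >= 1 and m must be >= 0")
    return Protection((k + m) / k, m)


print(replication(3))        # Protection(raw_to_usable=3.0, tolerated_failures=2)
print(erasure_coding(8, 3))  # Protection(raw_to_usable=1.375, tolerated_failures=3)
```

Under these assumptions, an 8+3 layout survives one more device loss than three-way replication while using less than half the raw capacity, and, as Jeff notes, changing that shape after the data is written takes a while.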

These are excellent comments, Jeff. I agree with everything you’ve said here. My ‘network-centric’ focus is there for two specific reasons (three, if you count that this is the area I’m most familiar with ;):

First, in the conversations about SDS, both the storage-side approaches and the application/compute-side approaches neglect the storage network. Either they omit it completely (as in the case of Microsoft) or they treat it like a hypothetical. Remember high school physics class, where you’re supposed to “assume a frictionless environment”? It’s a lot like that, where you “assume a reliable storage network.” I wanted to make sure people realize that the storage component is not just where the data is, but also how the data gets from one place to another.

Second, I really do think that there is a major difference between defining a storage system and merely implementing one. We have management systems in place for the re-arranging of bits on a wire, just like we have management systems for access to those bits. Those are all ‘software managed,’ which doesn’t even get us to the realm of ‘software-implemented,’ let alone ‘software-defined.’ I think that by ignoring any of the various links in the chain we risk doing ourselves a disservice.

For that reason I agree with you 100% that “changing protocols or tweaking layer-two attributes is missing all the problems that storage people need to worry about.” The network piece is just one of several moving parts, and without question cannot be seen as either the lynchpin or superfluous. Instead, it’s part of an ecosystem.

That being said, I think you are spot-on with your inclusion of the various elements of timing (microseconds vs. seconds vs. days vs. weeks, etc.). This is the longitudinal element that I was discussing as well (though I didn’t go into it very much; brevity was not on my side in this post as it was!).

I do believe there is potential for true change in the paradigm here. It’s for that reason that I’m glad we’re opening up the dialogue beyond just the marketing terminology to ask whether we are really accomplishing anything or just adding another block to the Rube Goldberg design of ‘software-defined-X’.

Thanks for writing!