Over the last couple of years, Data Centers have become a key focus of networking innovation, particularly in the broad area of Software-Defined Networking (SDN). At Cisco, we have been shipping Application Centric Infrastructure (ACI), our new way to build a Data Center, for nearly a year. ACI relies on a policy-based methodology that lets network switches, services, and hypervisors establish network connectivity with one another.

At the Open Networking User Group (ONUG), a survey reported that 3% of the networks run by ONUG members are built on open networking, whereas 71% are not open at all. Whether a system can be declared “open” is a subjective assessment. At Cisco, we have built ACI as a foundational infrastructure that relies on open protocols and programming constructs such as APIs. The goal is a solution in which the network becomes ‘invisible’ to the end user, and network devices and service modules, such as firewalls, load balancers, and physical and virtual switches, are configured automatically based on the end user’s intent.
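To make the idea of configuration driven by intent a little more concrete, here is a minimal Python sketch. It is not ACI's object model or API: the `Contract` class, the `render_rules` function, the group names, and the addresses are all hypothetical, chosen only to show how a statement about groups ("web may talk to app on port 8080") could be expanded into per-endpoint rules without the user writing them by hand.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Contract:
    """Hypothetical intent: the consumer group may reach the provider group on one TCP port."""
    consumer: str
    provider: str
    tcp_port: int


def render_rules(contracts, membership):
    """Expand group-level intent into per-endpoint permit rules.

    `membership` maps a group name to the endpoint addresses currently in it.
    Groups with no known members simply produce no rules.
    """
    rules = []
    for c in contracts:
        for src in membership.get(c.consumer, []):
            for dst in membership.get(c.provider, []):
                rules.append(f"permit tcp {src} -> {dst}:{c.tcp_port}")
    return sorted(rules)


# Illustrative intent and group membership (made-up names and addresses).
intent = [Contract("web", "app", 8080), Contract("app", "db", 5432)]
membership = {
    "web": ["10.0.1.10", "10.0.1.11"],
    "app": ["10.0.2.20"],
    "db": ["10.0.3.30"],
}

rules = render_rules(intent, membership)
for rule in rules:
    print(rule)
```

In this sketch, adding a new web server only changes `membership`. The intent stays the same and the rules are regenerated, which is the sense in which the network configuration follows the policy rather than the other way around.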

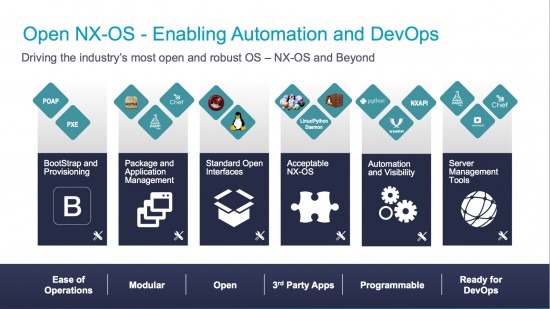

ACI is one element of a broad portfolio of open networking solutions for the Data Center at Cisco. The same Nexus devices that support ACI can alternatively run the standard operating system (NX-OS), which today provides a platform for open protocols, APIs, and the ability for users to run their own applications if they wish.

Cisco offers many options for open networking, such as a VXLAN and EVPN fabric that can also run on an SDN-type infrastructure. This is not to say we are changing direction; rather, we want to give users flexibility. All of these protocols and ways of building a fabric exist today, and the range of technical choices Cisco offers for building a Data Center fabric supports many different use cases and integration options in existing network infrastructures.

I am excited about our network innovation efforts alongside ACI, which provide open programmability with what we now call “open NX-OS”. We have added innovations including an object model on NX-OS platforms, so that APIs can be used with a programming methodology similar to ACI's. We expose access to the Linux kernel and to the bash shell, allowing users to instrument the control plane. Using YUM, we support the integration of third-party, open source, and user-built custom applications on the switch itself, in the common Linux RPM packaging format. One example is installing an LLDP package from an RPM so that this variant of LLDP runs as the LLDP feature on the switch. Upgrades are also possible via RPM, as is software patching, which we have been shipping for quite some time.

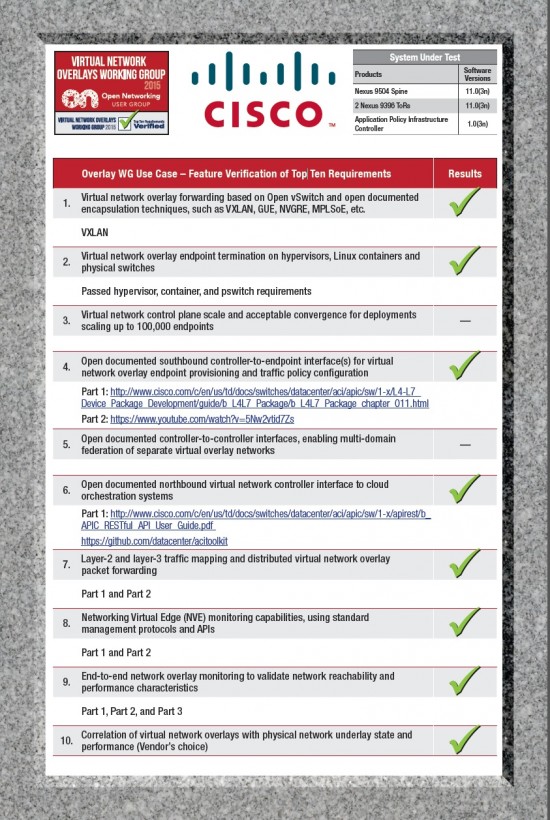

Cisco ACI was a highlight this week at ONUG. ONUG published the first set of results from its Vendor Feature Verification Testing, in which Cisco Application Centric Infrastructure participated. We passed all of the applicable top ten use cases, which makes us a verified vendor of the ONUG Virtual Network Overlays Working Group. If you look across the ten criteria identified by the working group, you will see a number of places where ACI stands out from the alternatives on the market.

Cisco Virtual Network Overlays WG Poster

- By bringing together the physical and virtual environments, Cisco ACI was the only solution that allowed users to run whichever hypervisors they choose and to integrate physical servers as well as Docker-based containers, all simultaneously.

- ACI users can also integrate, seamlessly configure, and operate service chaining for L4-L7 devices (load balancers, firewalls, and so on).

- ACI users get rich overlay network monitoring with atomic counters, health scores, statistics, and alerts. By mapping physical health scores and fault information to virtual networks or application groups, Cisco ACI allows users to troubleshoot immediately across the physical underlay and the overlay environment.

More information about ONUG:

http://blogs.cisco.com/datacenter/it-business-leaders-open-up-onug

More information about EVPN and VXLAN:

VXLAN Comes of Age with BGP-EVPN

Nice article. Is there any more information on which platforms can take advantage of open NX-OS, and where can the object models be found?

The Nexus 9000 platforms are enabled for open NX-OS. We are working on publishing the object model, and it will be posted on our website. In the meantime, you can email me at lucien@cisco.com for more information.