The Cisco HyperFlex Data Platform (HXDP) is a distributed hyperconverged infrastructure system that has been built from inception to handle individual component failures across the spectrum of hardware elements without interruption in services. As a result, the system is highly available and capable of extensive failure handling. In this short discussion, we’ll define the types of failures, briefly explain why distributed systems are the preferred system model to handle these, how data redundancy affects availability, and what is involved in an online data rebuild in the event of the loss of data components.

It is important to note that HX comes in 4 distinct varieties. They are Standard Data Center, Data Center@ No-Fabric Interconnect (DC No-FI), Stretched Cluster, and Edge clusters. Here are the key differences:

Standard DC

- Has Fabric Interconnects (FI)

- Can be scaled to very large systems

- Designed for infrastructure and VDI in enterprise environments and data centers

DC No-FI

- Similar to standard DC HX but without FIs

- Has scale limits

- Reduced configuration demands

- Designed for infrastructure and VDI in enterprise environments and data centers

Edge Cluster

- Used in ROBO deployments

- Comes in various node counts from 2 nodes to 8 nodes

- Designed for smaller environments where keeping the applications or infrastructure close to the users is needed

- No Fabric Interconnects – redundant switches instead

Stretched Cluster

- Has 2 sets of FIs

- Used for highly available DR/BC deployments with geographically synchronous redundancy

- Deployed for both infrastructure and application VMs with extremely low outage tolerance

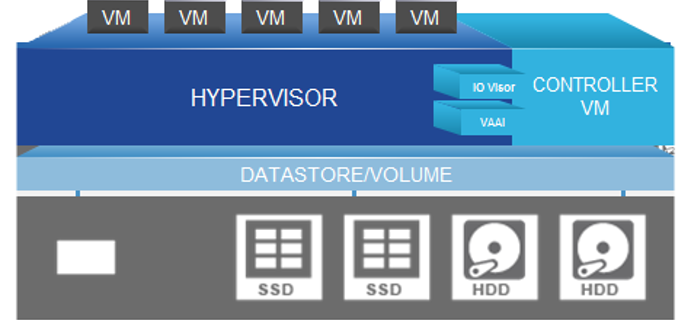

The HX node itself is composed of the software components required to create the storage infrastructure for the system’s hypervisor. This is done via the HX Data Platform (HXDP) that is deployed at installation on the node. The HX Data Platform utilizes PCI pass-through which removes storage (hardware) operations from the hypervisor making the system highly performant. The HX nodes use special plug-ins for VMware called VIBs that are used for redirection of NFS datastore traffic to the correct distributed resource, and for hardware offload of complex operations like snapshots and cloning.

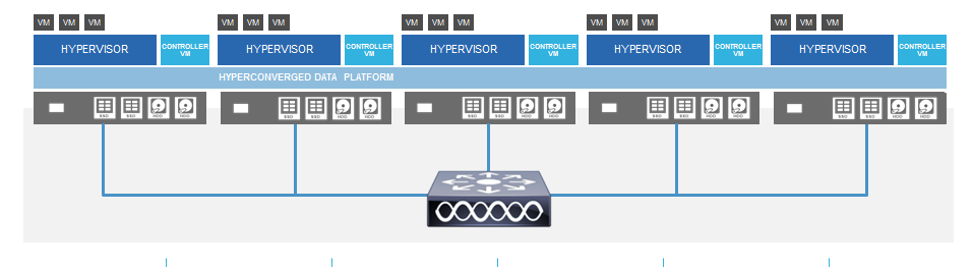

These nodes are incorporated into a distributed Zookeeper based cluster as shown below. ZooKeeper is essentially a centralized service for distributed systems to a hierarchical key-value store. It is used to provide a distributed configuration service, synchronization service, and naming registry for large distributed systems.

To being, let’s look at all the possible the types of failures that can happen and what they mean to availability. Then we can discuss how HX handles these failures.

- Node loss. There are various reasons why a node may go down. Motherboard, rack power failure,

- Disk loss. Data drives and cache drives.

- Loss of network interface (NIC) cards or ports. Multi-port VIC and support for add on NICs.

- Fabric Interconnect (FI) No all HX systems have FIs.

- Power supply

- Upstream connectivity interruption

Node Network Connectivity (NIC) Failure

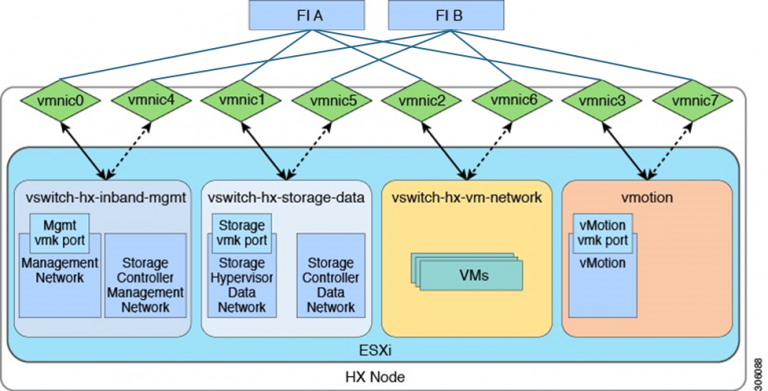

Each node is redundantly connected to either the FI pair or the switch, depending on which deployment architecture you have chosen. The virtual NICs (vNICs) on the VIC in each node are in an active standby mode and split between the two FIs or upstream switches. The physical ports on the VIC are spread between each upstream device as well and you may have additional VICs for extra redundancy if needed.

Let’s follow up with a simple resiliency solution before examining need and disk failures. A traditional Cisco HyperFlex single-cluster deployment consists of HX-Series nodes in Cisco UCS connected to each other and the upstream switch through a pair of fabric interconnects. A fabric interconnect pair may include one or more clusters.

In this scenario, the fabric interconnects are in a redundant active-passive primary pair. In the event of an FI failure, the partner will take over. This is the same for upstream switch pairs whether they are directly connected to the VICs or through the FIs as shown above. Power supplies, of course, are in redundant pairs in the system chassis.

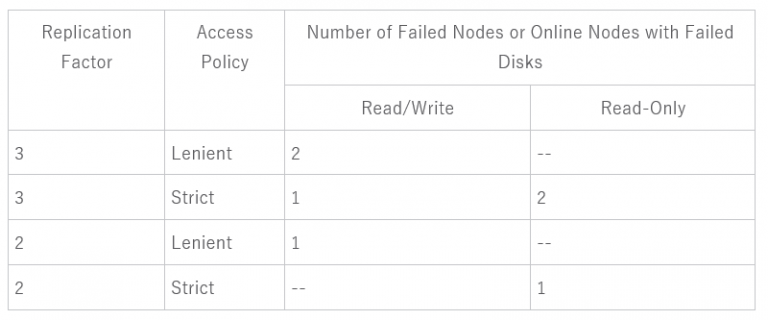

Cluster State with Number of Failed Nodes and Disks

How the number of node failures affects the storage cluster is dependent upon:

- Number of nodes in the cluster—Due to the nature of Zookeeper, the response by the storage cluster is different for clusters with 3 to 4 nodes and 5 or greater nodes.

- Data Replication Factor—Set during HX Data Platform installation and cannot be changed. The options are 2 or 3 redundant replicas of your data across the storage cluster.

- Access Policy—Can be changed from the default setting after the storage cluster is created. The options are strict for protecting against data loss, or lenient, to support longer storage cluster availability.

- The type

The table below shows how the storage cluster functionality changes with the listed number of simultaneous node failures in a cluster with 5 or more nodes running HX 4.5(x) or greater. The case with 3 or 4 nodes has special considerations and you can check the admin guide for this information or talk to your Cisco representative.

The same table can be used with the number of nodes that have one or more failed disks. Using the table for disks, note that the node itself has not failed but disk(s) within the node have failed. For example: 2 indicates that there are 2 nodes that each have at least one failed disk.

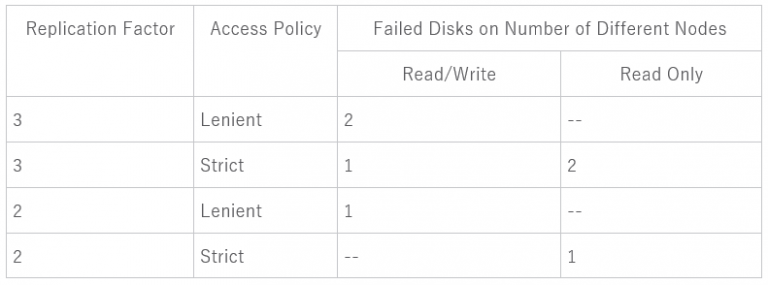

There are two possible types of disks on the servers: SSDs and HDDs. When we talk about multiple disk failures in the table below, it’s referring to the disks used for storage capacity. For example: If a cache SSD fails on one node and a capacity SSD or HDD fails on another node the storage cluster remains highly available, even with an Access Policy strict setting.

The table below lists the worst-case scenario with the listed number of failed disks. This applies to any storage cluster 3 or more nodes. For example: A 3 node cluster with Replication Factor 3, while self-healing is in progress, only shuts down if there is a total of 3 simultaneous disk failures on 3 separate nodes.

3+ Node Cluster with Number of Nodes with Failed Disks

A storage cluster healing timeout is the length of time the cluster waits before automatically healing. If a disk fails, the healing timeout is 1 minute. If a node fails, the healing timeout is 2 hours. A node failure timeout takes priority if a disk and a node fail at same time or if a disk fails after node failure, but before the healing is finished.

If you have deployed an HX Stretched Cluster, the effective replication factor is 4 since each geographically separated location has a local RF 2 for site resilience. The tolerated failure scenarios for a Stretched Cluster are out of scope for this blog, but all the details are covered in my white paper here.

In Conclusion

Cisco HyperFlex systems contain all the redundant features one might expect, like failover components. However, they also contain replication factors for the data as explained above that offer redundancy and resilience for multiple node and disk failure. These are requirements for properly designed enterprise deployments, and all factors are addressed by HX.